DeepSeek vs Qwen in 2026: An Engineer’s Side-by-Side

If you have a budget spreadsheet open and you’re trying to choose between two open-weight Chinese model families, the DeepSeek vs Qwen decision usually comes down to four factors: input price, output price, context length, and how the model behaves on your actual workload. I’ve shipped production traffic on DeepSeek V4-Pro and V4-Flash since the V4 Preview release, and on Qwen3 and Qwen3.5 before that. Both families publish open weights, both expose OpenAI-compatible APIs, and both undercut Western frontier pricing by a wide margin. They are not, however, interchangeable. This article walks through pricing, benchmarks, context windows, licensing, and a worked cost example so you can pick correctly the first time.

Verdict: which one should you pick?

For most developers building chat, RAG, or agent workloads, DeepSeek V4-Flash wins on raw price-per-token and on the simplicity of its API surface. It lists at $0.14 per million uncached input tokens and $0.28 per million output tokens, with a 1M-token context window by default. Qwen’s open-weight tier is competitive, and its top hosted model, Qwen3.6-Max-Preview, is a credible frontier competitor. That model is proprietary (no open weights) and is available through Qwen Studio and the Alibaba Cloud Model Studio API, which is compatible with both the OpenAI and Anthropic specifications. But Qwen’s pricing is fragmented across providers and tiers, and Alibaba has begun closing the open-weight door at the top of the lineup.

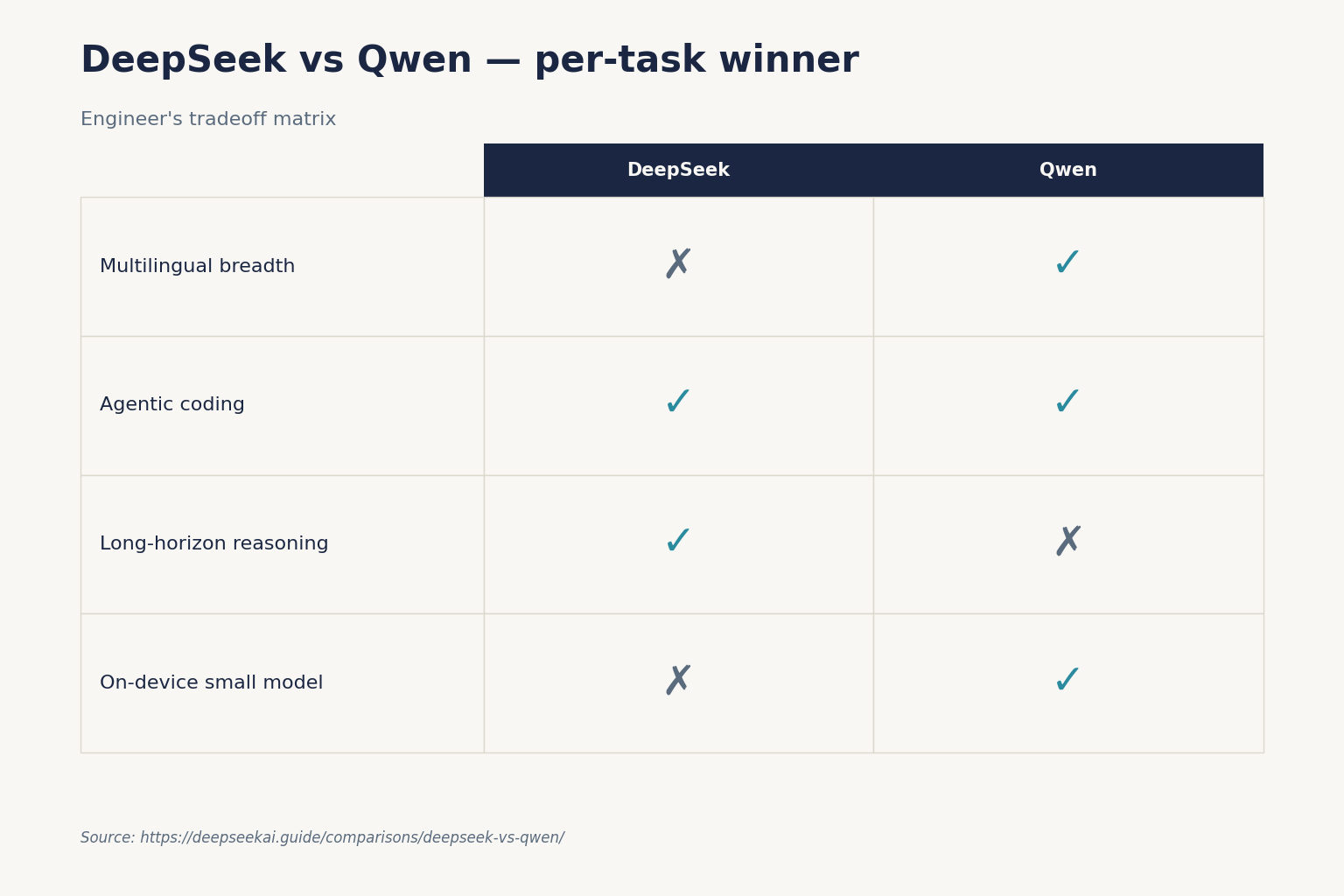

Pick DeepSeek V4 if you want one open-weight model file, one pricing page, one API surface, and the cheapest tokens at frontier-adjacent quality. Pick Qwen if you need first-class multimodal (image, video, audio) input, native Model Context Protocol agent plumbing, or a smaller dense model (4B–32B) you can run on a single GPU.

At a glance: DeepSeek vs Qwen in one table

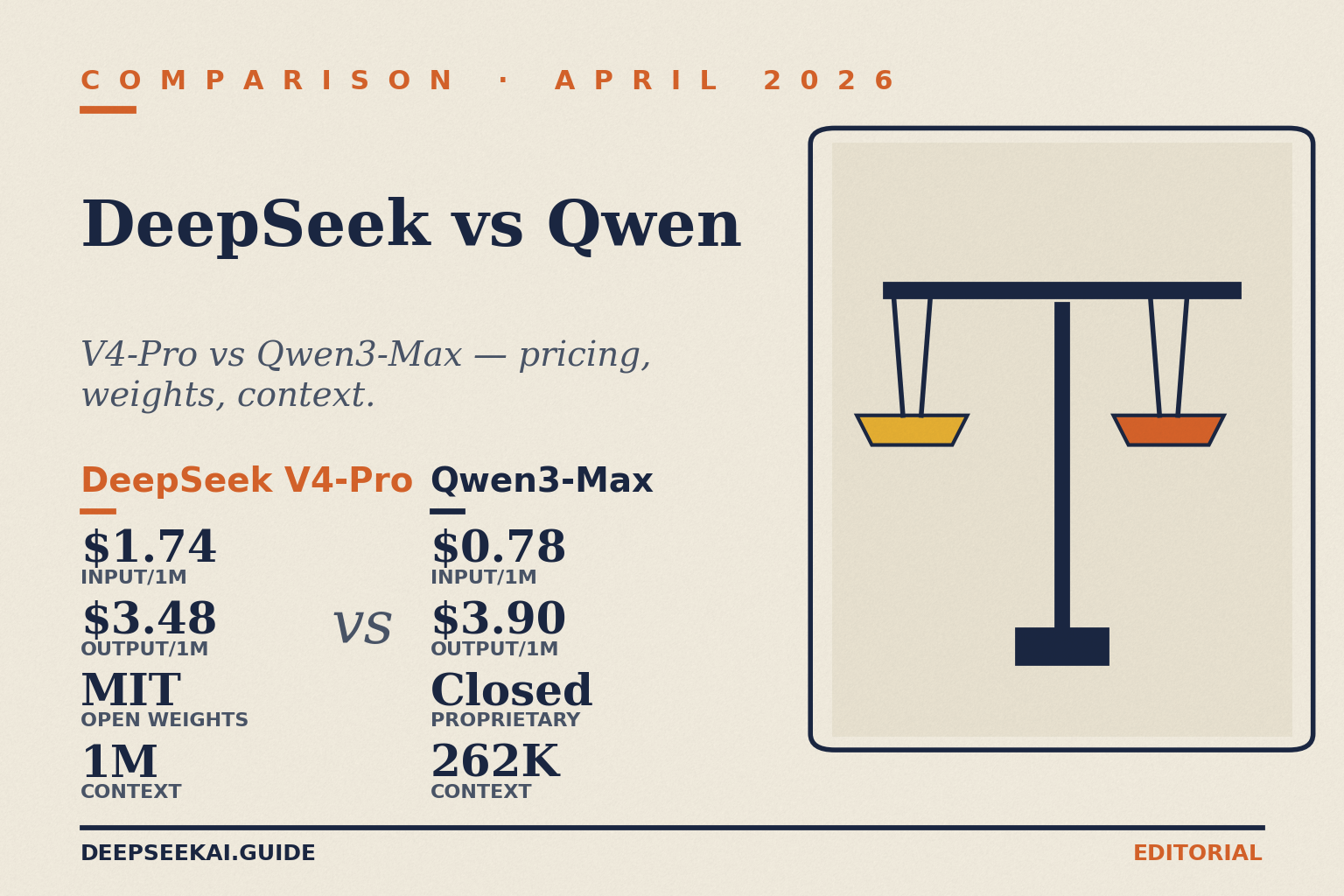

The headline tiers each family ships in April 2026, with verified numbers from each provider’s official sources:

| Model | Total / Active params | Context (tokens) | Input (cache miss) $/M | Output $/M | Open weights? |
|---|---|---|---|---|---|
| DeepSeek V4-Pro | 1.6T / 49B (MoE) | 1,000,000 | $0.435 promo / $1.74 list | $0.87 promo / $3.48 list | Yes (MIT) |
| DeepSeek V4-Flash | 284B / 13B (MoE) | 1,000,000 | $0.14 | $0.28 | Yes (MIT) |
| Qwen3.6-Max-Preview | Not disclosed | See provider | See provider | See provider | No (proprietary) |
| Qwen3.6-Plus | Hybrid linear attention + MoE | 1,000,000 | ~$0.325 (OpenRouter) | ~$1.95 (OpenRouter) | Partial |
| Qwen3.6-35B-A3B | 35B / 3B (MoE) | 262K (extendable) | Self-host or provider | Self-host or provider | Yes (Apache 2.0) |
| Qwen3-Max | Not disclosed | 262K | $0.78 | $3.90 | No (proprietary) |

DeepSeek pricing comes from DeepSeek’s own pricing page (verified April 2026; cache-hit rates are now $0.0028/M on Flash and $0.0145/M list on Pro after the 2026-04-26 drop). Qwen3.6-Plus is listed at $0.325 per million input tokens and $1.95 per million output tokens on OpenRouter, and Qwen3-Max pricing starts at $0.78 per million input tokens and $3.90 per million output tokens. Always check the provider’s live pricing page before committing, because Qwen rates in particular vary by deployment region and provider.

Architecture and lineage

Both families use Mixture-of-Experts (MoE) at the top end and dense models at the bottom, but they get there differently.

DeepSeek V4: two tiers, one feature set

DeepSeek V4 was released on April 24, 2026 as a Preview. It ships as exactly two model IDs — deepseek-v4-pro (1.6T total / 49B active) and deepseek-v4-flash (284B / 13B active) — both open-weight under MIT. Thinking mode is a request parameter, not a separate model: you set reasoning_effort="high" and pass extra_body={"thinking": {"type": "enabled"}} on either tier. The legacy deepseek-chat and deepseek-reasoner IDs still resolve, mapping to deepseek-v4-flash, but they retire at 2026-07-24 15:59 UTC. If you’re maintaining an older integration, the migration is a one-line model= swap; base_url stays the same. For more depth on the family see DeepSeek V4.
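As a minimal sketch of the request shape described above, the helper below builds the keyword arguments you would pass to client.chat.completions.create. The names build_v4_request and LEGACY_IDS are illustrative and not part of any SDK; the parameter values are the ones quoted in this section, so treat them as assumptions to check against the live API reference.

```python
# Legacy IDs and the V4 ID this article says they currently resolve to.
LEGACY_IDS = {
    "deepseek-chat": "deepseek-v4-flash",
    "deepseek-reasoner": "deepseek-v4-flash",
}


def build_v4_request(model, messages, thinking=False):
    """Return kwargs for client.chat.completions.create (hypothetical helper)."""
    kwargs = {
        "model": LEGACY_IDS.get(model, model),  # the one-line model= swap
        "messages": messages,
    }
    if thinking:
        # Thinking mode is a request parameter, not a separate model ID.
        kwargs["reasoning_effort"] = "high"
        kwargs["extra_body"] = {"thinking": {"type": "enabled"}}
    return kwargs


request = build_v4_request(
    "deepseek-chat",
    [{"role": "user", "content": "Plan the refactor."}],
    thinking=True,
)
```

With a real client this would be sent as client.chat.completions.create(**request); nothing in the sketch touches the network.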

Qwen3.6: a wider lineup, more fragmentation

Alibaba’s lineup is broader. The Qwen 3.6 lineup spans Max-Preview at the top of the family, the Qwen Plus variant for balanced workloads, Flash for speed-first tasks, and 35B-A3B for those running locally. Qwen3.5-Omni and Qwen3.6-Plus were released in April 2026 as proprietary models; access is limited to the chatbot websites and the Alibaba Cloud platform, while the Qwen3.6-35B-A3B model was released under the Apache 2.0 license in the same month. The previous-generation Qwen3 family is fully open-source and remains widely deployed; Qwen3-VL-2B-Instruct alone has surpassed 18 million downloads globally.

The pattern matters: DeepSeek publishes a single pair of open-weight tiers and prices them on one page. Qwen ships a much wider catalogue with proprietary tiers at the top and open weights only at the lower and middle sizes. If you want a clean mental model and predictable pricing, DeepSeek is simpler.

Coding

This is where both families have invested heavily. DeepSeek’s V4 announcement reports deepseek-v4-pro at 80.6% on SWE-Bench Verified — refer to the V4 technical report for the exact split before you quote it in a customer-facing deck. Qwen’s frontier model is in the same neighbourhood: Qwen3.6-Plus achieves a 78.8 score on SWE-bench Verified, and Alibaba claims that Qwen 3.6-Max-Preview ranked first across several major benchmarks that test coding and agent capabilities, including SWE-bench Pro, Terminal-Bench 2.0, SkillsBench, QwenClawBench, QwenWebBench, and SciCode (those are self-reported, but the gap to other frontier labs is genuinely small).

In practice I’ve found V4-Pro stronger on long-horizon refactors that span dozens of files, and Qwen3.6-Plus stronger on idiomatic front-end work. Neither will save you from a bad spec. For a deeper coding-only comparison, see DeepSeek Coder vs Copilot and our DeepSeek for coding walkthrough.

Reasoning

Both families now expose reasoning as a parameter rather than a separate SKU. On DeepSeek V4 you flip reasoning_effort to "high" or "max"; the API returns reasoning_content alongside the final content. On Qwen, the equivalent feature is the enable_thinking parameter on Plus/Max-tier models. Alibaba’s docs note one quirk: if you enable thinking mode but the model does not output a thought process, you are billed at the non-thinking mode rate. That’s friendlier billing behaviour than I expected.
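To make the reasoning_content behaviour concrete, here is a small sketch of separating the reasoning trace from the final answer. The helper name split_reasoning is hypothetical, and the fake message object stands in for resp.choices[0].message so the example runs without an API key; the field name is the one quoted above.

```python
from types import SimpleNamespace


def split_reasoning(message):
    """Return (reasoning, answer) from a chat-completion message object."""
    # reasoning_content may be missing when thinking is off, so default to "".
    reasoning = getattr(message, "reasoning_content", None) or ""
    answer = getattr(message, "content", None) or ""
    return reasoning, answer


# Stand-in for resp.choices[0].message; no network call is made here.
fake_message = SimpleNamespace(reasoning_content="2 + 2 is 4.", content="4")
reasoning, answer = split_reasoning(fake_message)
```

Using getattr with a default keeps the same code path working for responses that carry no reasoning trace.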

The Qwen3.6 lineup also adds a small but useful feature for agent workloads: Max-Preview ships with preserve_thinking, which carries reasoning traces across multi-turn conversations, and Alibaba specifically recommends it for agentic tasks where context continuity matters. DeepSeek’s V4 surface doesn’t have a direct equivalent today; you reconstruct continuity by replaying messages, since the API is stateless — the client must resend conversation history with every request. The web chat and mobile app maintain session state for users; the API does not.
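Because the API is stateless, the client owns the conversation history. The sketch below shows one way to replay it on every turn; the Conversation class and the fake_send stub are illustrative names, and in real use send would wrap a chat-completions call and return the reply text.

```python
class Conversation:
    """Client-side history for a stateless chat API (illustrative helper)."""

    def __init__(self, system_prompt):
        self.messages = [{"role": "system", "content": system_prompt}]

    def ask(self, send, user_text):
        # Append the new user turn, then send the entire history.
        self.messages.append({"role": "user", "content": user_text})
        reply = send(list(self.messages))
        # Store the assistant turn so the next request includes it.
        self.messages.append({"role": "assistant", "content": reply})
        return reply


def fake_send(messages):
    """Stand-in for a real API call; reports how many messages it received."""
    return f"saw {len(messages)} messages"


chat = Conversation("You are a support bot.")
first = chat.ask(fake_send, "Hello")
second = chat.ask(fake_send, "And again")
```

The second call receives four messages (system, user, assistant, user), which is why long conversations grow the input-token bill on every turn.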

Writing and multilingual

Qwen has invested noticeably more in multilingual breadth. The Qwen3.5 models support 201 languages and dialects, up from the previous generation’s 82 — Counterpoint’s Einstein said this feature reflects Alibaba’s global ambitions. DeepSeek V4 is strong on English and Chinese and competent across the major European languages, but for Tagalog, Swahili, or Bengali content I’d still pick Qwen.

For long-form English and creative writing, DeepSeek V4 with temperature=1.5 produces more idiomatic prose in my testing. Qwen tends to over-explain. Try both on a sample of your own copy; the difference is stylistic and worth checking. See DeepSeek for writing for prompt patterns.

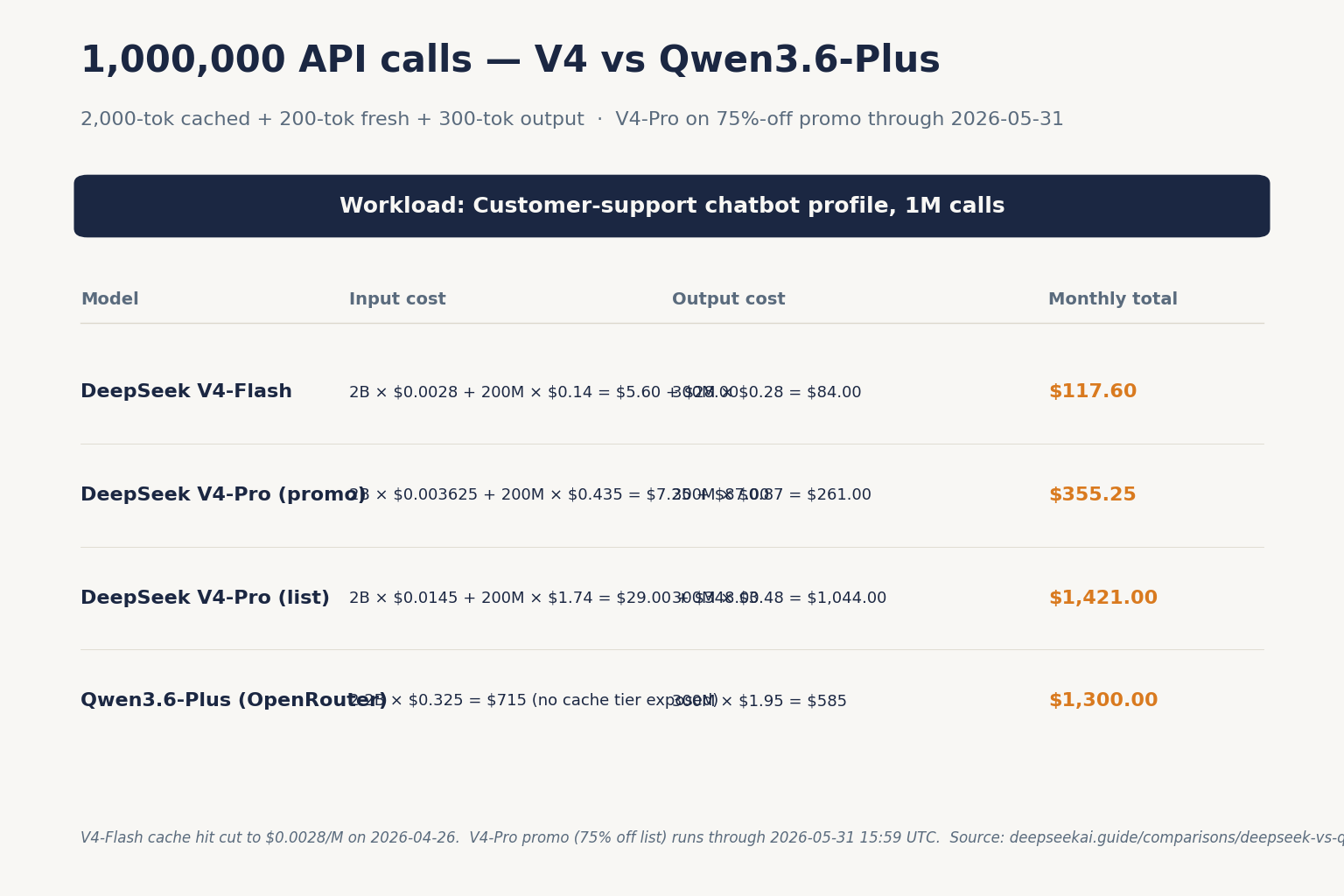

Pricing: a worked example

Comparing token rates across providers is messy. Let’s cost out one realistic workload — a customer-support chatbot doing 1,000,000 calls with a 2,000-token system prompt (cached), a 200-token user message, and a 300-token reply — on each side.

DeepSeek V4-Flash

    Cached input  : 2,000 × 1,000,000 = 2,000,000,000 × $0.0028/M = $  5.60
    Uncached input:   200 × 1,000,000 =   200,000,000 × $0.14/M   = $ 28.00
    Output        :   300 × 1,000,000 =   300,000,000 × $0.28/M   = $ 84.00
                                                                    -------
    Total                                                           $117.60

DeepSeek V4-Pro

    Cached input  : 2,000,000,000 × $0.0145/M      = $   29.00
    Uncached input:   200,000,000 × $1.74/M (list) = $  348.00
    Output        :   300,000,000 × $3.48/M (list) = $1,044.00
                                                     ---------
    Total                                            $1,421.00 list (or $355.25 during the 75% V4-Pro promo through 2026-05-31)

Qwen3.6-Plus (via OpenRouter, illustrative)

OpenRouter does not currently expose a separate cache-hit rate for Qwen3.6-Plus, so we cost the full input as standard:

    Input : 2,200,000,000 × $0.325/M = $  715.00
    Output:   300,000,000 × $1.95/M  = $  585.00
                                       ---------
    Total                              $1,300.00

For this workload V4-Flash beats Qwen3.6-Plus by roughly 11×. V4-Pro lands cheaper than Qwen3.6-Plus only at the promo rate ($355.25); at list price ($1,421.00) it costs about 9% more, though it sits in the frontier tier. Caveat: if your provider exposes a cached-input rate for Qwen, the gap narrows. On some Qwen providers standard input is around $0.23 per 1M tokens and cached input is roughly $0.02 to $0.05 per 1M tokens. Verify before you build. Use the DeepSeek pricing calculator to plug in your own token volumes.
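The arithmetic above can be reproduced with a short script, which makes it easier to swap in your own token counts. This is a sketch only: the rates are copied from the figures quoted in this article, the dictionary keys are labels rather than API model IDs, and Decimal is used to avoid floating-point rounding in the totals.

```python
from decimal import Decimal

CALLS = 1_000_000
CACHED_IN, FRESH_IN, OUT = 2_000, 200, 300  # tokens per call

# USD per million tokens, copied from the figures quoted in this article.
RATES = {
    "deepseek-v4-flash": {"hit": "0.0028", "miss": "0.14", "out": "0.28"},
    "deepseek-v4-pro (list)": {"hit": "0.0145", "miss": "1.74", "out": "3.48"},
    # No separate cache-hit rate quoted, so cached tokens bill at the miss rate.
    "qwen3.6-plus (OpenRouter)": {"hit": "0.325", "miss": "0.325", "out": "1.95"},
}


def workload_cost(rate):
    """Total USD for the workload, given per-million-token rates as strings."""
    per_call = (
        CACHED_IN * Decimal(rate["hit"])
        + FRESH_IN * Decimal(rate["miss"])
        + OUT * Decimal(rate["out"])
    )
    # per_call is "dollars per million calls' worth of tokens", so rescale.
    return per_call * CALLS / Decimal(1_000_000)


totals = {name: workload_cost(rate) for name, rate in RATES.items()}
for name, total in totals.items():
    print(f"{name:28s} ${total:,.2f}")
```

Multiplying the V4-Pro list total by 0.25 reproduces the promo figure, since the promo is described as a flat 75% discount.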

Privacy and licensing

Both APIs send data to servers in China by default unless you choose a non-Mainland deployment region. Qwen offers more deployment options on Alibaba Cloud Model Studio. In international deployment mode, endpoints and data storage are located in the Singapore region, while inference compute is scheduled dynamically across regions outside Mainland China; US (Virginia) and EU (Frankfurt) regions are also available. DeepSeek’s hosted API does not offer regional deployments today; if data residency matters, that’s a Qwen win, or a reason to self-host DeepSeek’s open weights.

Licensing: every recent DeepSeek release (V4-Pro, V4-Flash, V3.2, V3.1, R1) publishes both code and weights under MIT. Qwen is more mixed. Many Qwen models are distributed under the free and open-source Apache 2.0 license, the source-available Qwen License, or the non-commercial Qwen Research License; other proprietary Qwen models are served through Alibaba Cloud. Read the licence on the specific Hugging Face repo before deploying — the answer is not always “Apache 2.0”. For more on this see is DeepSeek open source.

Ecosystem and tooling

Both APIs are OpenAI-compatible, so you can switch by changing base_url and api_key. Qwen also offers Anthropic-compatible endpoints; DeepSeek added Anthropic compatibility alongside V4. The minimal Python skeleton is identical for both — chat requests hit POST /chat/completions, the OpenAI-compatible endpoint on either provider:

    from openai import OpenAI

    # Only base_url and api_key change when you switch providers.
    client = OpenAI(base_url="https://api.deepseek.com", api_key="...")

    # Standard chat-completions request against the V4-Flash tier.
    resp = client.chat.completions.create(
        model="deepseek-v4-flash",
        messages=[{"role": "user", "content": "Summarise this PR."}],
    )

Qwen leans further into agent tooling. Qwen3 natively supports the Model Context Protocol (MCP) and robust function-calling, leading open-source models in complex agent-based tasks. Alibaba also ships Qwen Code, an open-source terminal agent optimized for Qwen models that helps you understand large codebases and automate tedious work. DeepSeek’s tool calling is solid and OpenAI-compatible, but the surrounding agent SDK story is thinner. If you’re building a CLI agent on top of an open-weight model, Qwen has a head start. If you’re building a chat product, retrieval pipeline, or fine-tuned domain model, DeepSeek’s smaller footprint is an advantage.
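The base_url and api_key swap mentioned earlier in this section can be sketched as a small provider table. Everything on the Qwen side is an assumption to confirm in Alibaba’s documentation: the endpoint URL, the model ID qwen3.6-plus, and the environment variable name. The Provider dataclass and client_kwargs helper are illustrative names, not part of any SDK.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Provider:
    base_url: str
    model: str
    key_env: str  # name of the environment variable holding the API key


PROVIDERS = {
    "deepseek": Provider(
        "https://api.deepseek.com", "deepseek-v4-flash", "DEEPSEEK_API_KEY"
    ),
    # Assumed values: confirm the endpoint and model ID in Alibaba's docs.
    "qwen": Provider(
        "https://dashscope-intl.aliyuncs.com/compatible-mode/v1",
        "qwen3.6-plus",
        "DASHSCOPE_API_KEY",
    ),
}


def client_kwargs(name, env):
    """Build OpenAI(...) constructor kwargs for the named provider."""
    provider = PROVIDERS[name]
    return {"base_url": provider.base_url, "api_key": env[provider.key_env]}


# Example with a fake environment mapping; pass os.environ in real use.
kwargs = client_kwargs("deepseek", {"DEEPSEEK_API_KEY": "sk-test"})
```

In practice you would call OpenAI(**client_kwargs("qwen", os.environ)) and pass PROVIDERS["qwen"].model as model=, leaving the rest of the request code unchanged.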

When to pick DeepSeek vs Qwen

Use this as a decision filter:

- Pick DeepSeek V4-Flash when you want the lowest cost-per-token at frontier-adjacent quality, a single 1M-token context tier, and MIT-licensed weights you can self-host.

- Pick DeepSeek V4-Pro when SWE-Bench-class agentic coding or long-horizon reasoning justifies the output-price step up from Flash (about 12× at list price, about 3× during the promo).

- Pick Qwen3.6-Plus or Max-Preview when you need native multimodal (image, video, audio) input or first-class agent tooling like MCP and Qwen Code.

- Pick Qwen3.6-35B-A3B when you want an Apache-2.0 model small enough to fit on one or two GPUs, with current-generation quality.

- Pick Qwen for multilingual coverage beyond English/Chinese/major European, especially Southeast Asian languages.
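If it helps to see the filter as code, the checklist can be sketched as a single function. The flag names and the order in which the conditions are checked are assumptions made for illustration; the list above does not rank the criteria against each other.

```python
def pick_model(
    multimodal=False,
    agent_tooling=False,
    small_gpu=False,
    broad_multilingual=False,
    heavy_agentic_coding=False,
):
    """Map the checklist to a suggestion (priority order is an assumption)."""
    if small_gpu:
        return "Qwen3.6-35B-A3B"
    if multimodal or agent_tooling:
        return "Qwen3.6-Plus or Max-Preview"
    if broad_multilingual:
        return "Qwen"
    if heavy_agentic_coding:
        return "DeepSeek V4-Pro"
    # Default: lowest cost per token with MIT-licensed weights.
    return "DeepSeek V4-Flash"


suggestion = pick_model(heavy_agentic_coding=True)
```

Treat the output as a starting point for your own evaluation, not a verdict.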

Alternatives worth comparing

If you’re shortlisting open and semi-open Chinese frontier models, also look at DeepSeek vs Kimi and DeepSeek vs GLM. For a head-to-head against Western frontier offerings, see DeepSeek vs Claude and the broader AI comparison hub. The best Chinese AI models roundup ranks the full field by use case.

DeepSeek AI Guide is an independent resource and is not affiliated with DeepSeek or its parent company. Model IDs, pricing and API behaviour change; check the official DeepSeek documentation and pricing page before committing to a production decision.

Frequently asked questions

Is DeepSeek better than Qwen?

It depends on the workload. DeepSeek V4-Flash is cheaper per token and simpler to integrate, with MIT-licensed open weights. Qwen3.6 has stronger native multimodal support (image, video, audio), broader multilingual coverage across 201 languages and dialects, and tighter agent tooling via MCP and Qwen Code. For a fuller breakdown of trade-offs, see our DeepSeek vs competitors review.

How much cheaper is DeepSeek than Qwen?

For the chatbot workload costed above, DeepSeek V4-Flash ($0.14 input miss, $0.28 output per million tokens) comes out roughly 11× cheaper than Qwen3.6-Plus on OpenRouter (about $0.325 input, $1.95 output per million tokens). Most of that gap comes from the cached system prompt; on list rates alone the difference is about 2.3× on input and 7× on output. Caching narrows the gap on Qwen, but DeepSeek’s cache-hit rate of $0.0028/M is still lower. Use the DeepSeek cost estimator to model your own volumes.

Can I run Qwen and DeepSeek locally on the same hardware?

Yes for the smaller and middle-sized variants. DeepSeek V3.2 and the R1-Distill family run via Ollama and llama.cpp; Qwen3 dense models from 0.6B to 32B and the Qwen3.6-35B-A3B MoE all run on the same toolchains. The 1.6T-parameter DeepSeek V4-Pro requires multi-GPU inference. See our running DeepSeek on Ollama guide for setup steps.

Does Qwen support thinking mode like DeepSeek?

Yes. Qwen3.5/3.6 Plus and Max models accept an enable_thinking parameter that toggles a reasoning trace, similar to DeepSeek V4’s reasoning_effort with the thinking flag enabled. Alibaba notes that if you enable thinking mode but the model does not output a thought process, you are billed at the non-thinking rate. DeepSeek returns reasoning_content alongside the final content; details in our DeepSeek API documentation.

Are DeepSeek and Qwen weights both open source?

Partially. Every recent DeepSeek release (V4-Pro, V4-Flash, V3.2, R1) publishes weights under MIT. Qwen is mixed: many models ship under Apache 2.0, but Qwen3.5-Omni and Qwen3.6-Plus were released in April 2026 as proprietary, accessible only through Alibaba’s chat and cloud platform. Qwen3.6-35B-A3B is open under Apache 2.0. For the broader picture see open-source AI like DeepSeek.

Sources & Methodology

Primary sources

- Official: DeepSeek API documentation. Endpoints, request/response shape, model IDs. Last checked: April 30, 2026

- Pricing: DeepSeek API pricing page. Per-1M-token rates, billing units, cache-hit pricing. Last checked: April 30, 2026

- Model card: DeepSeek on Hugging Face. Weights, license, usage notes per model. Last checked: April 30, 2026

- Pricing: Anthropic Claude pricing. Claude API rates for cross-vendor comparisons. Last checked: April 30, 2026

- Pricing: OpenAI API pricing. OpenAI API rates for cross-vendor comparisons. Last checked: April 30, 2026

Technical references

- Technical report: DeepSeek-V3 technical report (arXiv:2412.19437). V3 architecture and training methodology. Last checked: April 30, 2026

- Technical report: DeepSeek-R1 paper (arXiv:2501.12948). R1 reasoning approach, distillation results. Last checked: April 30, 2026

- Benchmark: SWE-Bench Verified leaderboard. Software-engineering benchmark scores. Last checked: April 30, 2026

Methodology

Pricing was normalised per 1 million input and output tokens against each vendor's official pricing page on the review date. Benchmark scores were treated as directional indicators, not guarantees of real-world performance. Practical comparisons also weighed coding, reasoning, summarisation, and developer-workflow scenarios.

Data confidence

High for official pricing and feature presence; medium for cross-vendor benchmark comparisons; low for subjective workflow verdicts.

Editorial note

Pricing and model line-ups change frequently in this market. The verdicts here are calibrated for the date shown above and should be re-checked before final purchasing decisions.