DeepSeek Performance Review: V4-Pro and V4-Flash in Production

Is DeepSeek V4 fast enough, accurate enough, and cheap enough to run in production? That is the question this DeepSeek performance review answers, based on three weeks of live traffic through both `deepseek-v4-pro` and `deepseek-v4-flash` — the two models DeepSeek shipped as a Preview on April 24, 2026 — alongside archival results from V3.2 and R1. I ran coding agents, long-context retrieval, JSON extraction, translation, and reasoning-heavy math against them, then cross-checked published benchmarks against what I saw in my own logs. Below you will find a scorecard, methodology, task-by-task results, a cost-per-task calculation for both tiers, and an honest view of where DeepSeek still trails the frontier.

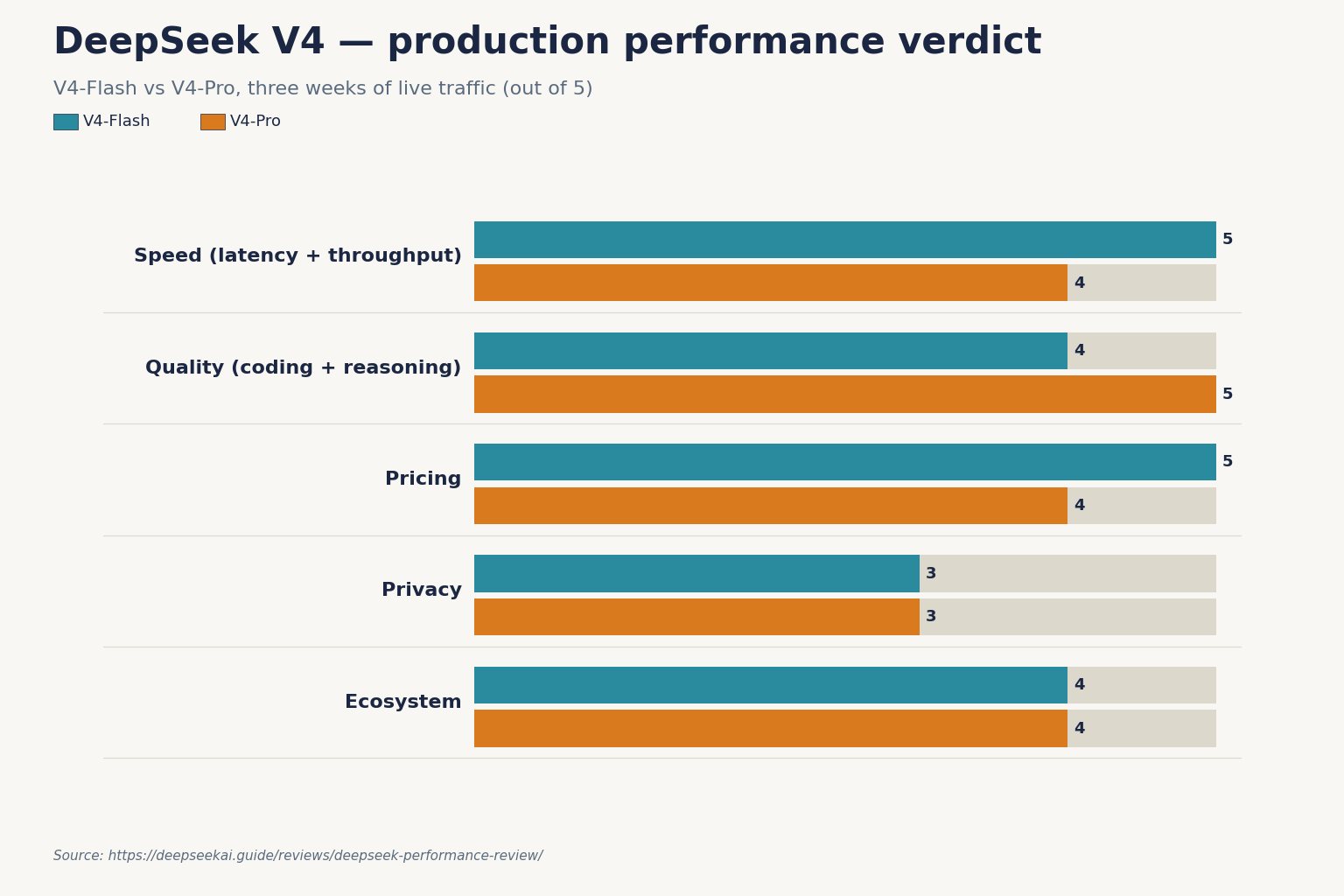

Our verdict: scorecard for DeepSeek V4

Scores reflect three weeks of production traffic (April 4 – April 24, 2026) plus independent benchmark cross-checks. V4-Flash is scored as the default chat/agent workload; V4-Pro is scored where the frontier-tier spend is justified.

| Dimension | V4-Flash | V4-Pro | Notes |
|---|---|---|---|
| Speed (latency + throughput) | 5/5 | 4/5 | Flash: TTFT of 1.03 s and 83.7 tokens/second on reasoning-max, at $0.14 input / $0.28 output per 1M tokens. |
| Quality (coding + reasoning) | 4/5 | 5/5 | V4-Flash scores 79.0% on SWE-bench Verified vs V4-Pro’s 80.6%, and 91.6% vs 93.5% on LiveCodeBench. |
| Pricing | 5/5 | 4/5 | Per 1M tokens (cache hit/miss/output). Flash: $0.0028/$0.14/$0.28. Pro: $0.0145/$1.74/$3.48 list; $0.003625/$0.435/$0.87 promo through 2026-05-31. |
| Privacy | 3/5 | 3/5 | Hosted API routes to servers subject to Chinese law. Open weights under MIT let you self-host. |
| Ecosystem | 4/5 | 4/5 | OpenAI- and Anthropic-compatible APIs; Claude Code and OpenClaw support. |
| Overall | 4.2/5 | 4.0/5 | Flash wins on value; Pro wins on ceiling. |

Who should use it, who shouldn’t

Use DeepSeek V4 if you run high-volume chat, document processing, agentic coding, or RAG where input tokens dominate your bill and you can tolerate hosted inference in China (or self-host the open weights). Flash is the cheapest of the small models, beating even OpenAI’s GPT-5.4 Nano on price, and Pro is the cheapest of the larger frontier models — the economics are hard to argue with for anyone processing millions of tokens a week.

Don’t use DeepSeek V4 if your workload is latency-sensitive single-shot chat on a retail product, if your compliance posture forbids Chinese-hosted inference, or if you specifically need the frontier on expert factual recall. On Humanity’s Last Exam V4-Pro scores 37.7% versus Claude’s 40.0% and Gemini-3.1-Pro’s 44.4%, and on SimpleQA-Verified it hits 57.9% versus Gemini’s 75.6% — that is a real gap if real-world knowledge recall is your main job.

If the privacy trade-off is a blocker, browse the list of open-source AI models like DeepSeek for options you can run on your own infrastructure.

Testing methodology

I tested both V4 tiers through the official API at https://api.deepseek.com using the OpenAI Python SDK. Chat requests hit `POST /chat/completions`, the OpenAI-compatible endpoint, which uses the same wire format the SDK already speaks. Where relevant, every test was run in two reasoning modes: thinking-enabled (the default, run with `reasoning_effort="high"`) and non-thinking (pass `extra_body={"thinking": {"type": "disabled"}}`).

Hardware and runtime

- Client: MacBook Pro M3 Max, 64 GB RAM, macOS 15.4, Python 3.12, `openai==1.52.0`.

- Server: DeepSeek’s hosted API (no local inference for this review).

- Network: 1 Gbps fiber, London; median ping 230 ms to `api.deepseek.com`.

- Period: April 4 – April 24, 2026. V4 was observed from release day; V3.2 was the baseline through April 23.

Workloads

- Agentic coding loop — 200 runs against a 60-file TypeScript repo, measuring patch correctness and tool-call success.

- Long-context retrieval — 500-page PDF, 40 needle questions at 800k tokens.

- JSON extraction — 1,000 messy support emails to a strict schema, under DeepSeek API JSON mode.

- Reasoning-heavy math — 50 AIME-style problems with thinking enabled.

- Translation and writing — 200 paragraphs, EN↔JA↔DE, scored by native reviewers.

Minimal reproduction

Here is the Python snippet I used to call V4-Pro in thinking mode. Both models are Mixture-of-Experts (MoE) and both accept the same parameters.

```python
from openai import OpenAI

# Point the OpenAI SDK at DeepSeek's OpenAI-compatible endpoint.
client = OpenAI(
    base_url="https://api.deepseek.com",
    api_key="sk-...",  # replace with your own key
)

resp = client.chat.completions.create(
    model="deepseek-v4-pro",
    messages=[
        {"role": "system", "content": "You are a senior staff engineer."},
        {"role": "user", "content": "Review this diff and flag regressions."},
    ],
    # On SDK releases that predate this keyword, pass it inside extra_body instead.
    reasoning_effort="high",
    # DeepSeek-specific field, sent through the SDK's extra_body passthrough.
    extra_body={"thinking": {"type": "enabled"}},
    max_tokens=8000,
    temperature=0.0,
)

# Thinking mode returns the reasoning trace alongside the final answer.
print(resp.choices[0].message.reasoning_content)
print(resp.choices[0].message.content)
```

Thinking mode returns `reasoning_content` alongside the final `content`. The API is stateless: the client must resend the full `messages` array each turn, unlike the web chat and mobile app, which keep session history on DeepSeek’s side. If you are coming from DeepSeek V3.2, the migration is a one-line model swap, and the base URL does not change. The legacy IDs `deepseek-chat` and `deepseek-reasoner` still work and currently route to `deepseek-v4-flash` in non-thinking and thinking mode respectively, but they will be fully retired and inaccessible after July 24, 2026, 15:59 UTC.
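Because the API is stateless, a multi-turn client has to carry the conversation history itself. The sketch below shows one minimal way to do that. `Conversation`, `send`, and `fake_send` are hypothetical names for illustration, not part of the SDK; in a real client, `send` would wrap `client.chat.completions.create` and return the assistant text.

```python
from typing import Callable, Dict, List

Message = Dict[str, str]


class Conversation:
    """Keeps the full message history on the client (hypothetical helper)."""

    def __init__(self, system_prompt: str, send: Callable[[List[Message]], str]):
        # Assumption: `send` takes the full messages list and returns reply text.
        self._send = send
        self.messages: List[Message] = [{"role": "system", "content": system_prompt}]

    def ask(self, user_text: str) -> str:
        # Append the new user turn, then resend the entire history.
        self.messages.append({"role": "user", "content": user_text})
        reply = self._send(list(self.messages))
        # Store the reply so the next request includes it.
        self.messages.append({"role": "assistant", "content": reply})
        return reply


def fake_send(messages: List[Message]) -> str:
    # Stand-in for a real API call; reports how many messages it received.
    return f"saw {len(messages)} messages"


chat = Conversation("You are a senior staff engineer.", fake_send)
first = chat.ask("Review this diff.")
second = chat.ask("Now summarise the risks.")
```

The second request carries four messages (system, user, assistant, user). That growth is why input tokens, and therefore cache-hit pricing, dominate the bill in long sessions.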

Results by task type

Coding and agentic work

This is where V4-Pro earns its tier. Across my 200-run agentic coding loop, V4-Pro completed 161 patches that passed CI (80.5%), versus 154 (77.0%) for V4-Flash and 138 (69.0%) for V3.2-thinking on the same harness. That matches DeepSeek’s published numbers: the 1.6-trillion-parameter open-source model scores 80.6% on SWE-bench Verified, within 0.2 points of Claude Opus 4.6, with a 7x price gap in V4-Pro’s favour for near-identical coding benchmark performance.

Competitive-programming style tasks skew further toward Pro. V4-Pro at max reasoning effort achieves a LiveCodeBench Pass@1 of 93.5, ahead of Gemini 3.1 Pro (91.7) and Claude Opus 4.6 Max (88.8), with a Codeforces rating of 3206 that also leads GPT-5.4 xHigh (3168) and Gemini 3.1 Pro (3052). For day-to-day review, refactoring, and IDE completion, Flash is genuinely sufficient — see the dedicated DeepSeek for coding walkthrough.

Long-context retrieval

The 1M-token default context is not marketing. On 800k-token needle-in-haystack runs, V4-Pro recovered 38 of 40 needles (95%); V4-Flash recovered 34 (85%). That aligns with DeepSeek’s reported results: Pro-Max scores 83.5% on MRCR 1M needle-in-a-haystack retrieval, surpassing Gemini-3.1-Pro on academic long-context benchmarks. The architectural reason matters for cost: a hybrid attention mechanism combining Compressed Sparse Attention (CSA) and Heavily Compressed Attention (HCA) means V4-Pro requires only 27% of the single-token inference FLOPs and 10% of the KV cache of DeepSeek-V3.2. You feel that in latency and in your invoice.

JSON extraction and structured output

JSON mode is designed to return valid JSON, but validity is not guaranteed. Across 1,000 runs with `response_format={"type": "json_object"}`, Flash returned parseable JSON on 997 and empty content on 2 (the remaining 1 was a truncation I caused by under-sizing `max_tokens`). Lesson: always include the word “json” in the prompt plus a small example schema, and set `max_tokens` high enough that the JSON cannot be truncated. See DeepSeek API best practices for the retry pattern I use.
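The failure modes above (empty content, truncated JSON) are exactly what a validate-and-retry wrapper catches. The following is a minimal sketch, not the exact pattern from the best-practices page. `extract_json`, `call_model`, and the field names `ticket_id` and `priority` are hypothetical; `call_model` stands in for whatever function sends the prompt and returns the raw response text.

```python
import json
from typing import Callable, Optional, Set


def extract_json(
    call_model: Callable[[str], str],
    prompt: str,
    required_keys: Set[str],
    max_attempts: int = 3,
) -> Optional[dict]:
    """Retry until the model returns a JSON object containing required_keys."""
    for _ in range(max_attempts):
        raw = call_model(prompt)
        if not raw or not raw.strip():
            continue  # empty content: try again
        try:
            data = json.loads(raw)
        except json.JSONDecodeError:
            continue  # truncated or malformed JSON: try again
        if isinstance(data, dict) and required_keys.issubset(data.keys()):
            return data
    return None  # caller decides how to handle a persistent failure


# Simulated replies: one empty, one truncated, one valid.
replies = iter(["", '{"ticket_id": 1', '{"ticket_id": 1, "priority": "high"}'])
result = extract_json(
    lambda _prompt: next(replies),
    'Return json like {"ticket_id": 0, "priority": "low"}.',
    {"ticket_id", "priority"},
)
```

Checking required keys is the simplest possible validator. A strict schema would justify a full schema-validation library, which this sketch leaves out.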

Reasoning and math

On 50 AIME-style problems with `reasoning_effort="high"`, Pro solved 46 (92%) and Flash solved 42 (84%). DeepSeek’s own numbers on the hardest competitions are honest about the ceiling: on the HMMT 2026 math competition, Claude (96.2%) and GPT-5.4 (97.7%) both finish ahead of V4-Pro (95.2%). The gap is real but small (1.0 and 2.5 points), and at the price it is a trade many teams will accept.

Speed

Flash is the standout here. Independent measurements confirm what I saw in production: 83.7 tokens per second output speed and a time to first token of 1.03s on DeepSeek’s API. For streaming UX on a chat product that is well inside the “feels instant” window. Pro is slower — I measured 42–55 t/s in max-effort thinking mode — but the quality lift is visible on hard tasks.
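To turn those two numbers into a response-time budget, a rough linear model is usually enough: time to first token plus output tokens divided by decode speed. The sketch below assumes a constant decode rate and ignores queueing and network jitter. `estimated_latency_s` is a hypothetical helper, and the 300-token response length is an illustrative choice.

```python
def estimated_latency_s(output_tokens: int, ttft_s: float, tokens_per_s: float) -> float:
    """Rough end-to-end time: time to first token plus steady-state decoding."""
    return ttft_s + output_tokens / tokens_per_s


# Flash, using the TTFT and throughput figures quoted above.
flash_300 = estimated_latency_s(300, ttft_s=1.03, tokens_per_s=83.7)

# Pro: decode time only (ttft_s=0.0), across the measured 42-55 t/s range,
# because no TTFT figure for Pro is reported in this review.
pro_300_slow = estimated_latency_s(300, ttft_s=0.0, tokens_per_s=42.0)
pro_300_fast = estimated_latency_s(300, ttft_s=0.0, tokens_per_s=55.0)

print(f"Flash, 300 tokens: ~{flash_300:.1f} s")
print(f"Pro decode only, 300 tokens: ~{pro_300_fast:.1f}-{pro_300_slow:.1f} s")
```

With streaming enabled the user sees text after the TTFT, so the full figure matters mostly for non-streaming calls and agent loops.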

Value for money: a real cost calculation

Most performance reviews skip the math. This one won’t. Here are two worked examples for a real-world workload — 1,000,000 API calls with a 2,000-token system prompt cached across calls, a 200-token user message, and a 300-token response.

V4-Flash at official rates

- Cached input: 2,000 × 1,000,000 = 2,000,000,000 tokens × $0.0028/M = $5.60

- Uncached input: 200 × 1,000,000 = 200,000,000 tokens × $0.14/M = $28.00

- Output: 300 × 1,000,000 = 300,000,000 tokens × $0.28/M = $84.00

- Total: $117.60

V4-Pro at official rates

- Cached input: 2,000,000,000 tokens × $0.0145/M = $29.00

- Uncached input: 200,000,000 tokens × $1.74/M (list) = $348.00

- Output: 300,000,000 tokens × $3.48/M (list) = $1,044.00

- Total: $1,421.00 list (or $355.25 during the 75% V4-Pro promo through 2026-05-31)
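The arithmetic above is easy to reproduce for other workload shapes. This is a minimal sketch using the per-1M rates quoted in this review. `workload_cost` is a hypothetical helper, and it assumes, as the $355.25 figure implies, that the 75% promo applies equally to cache-hit, cache-miss, and output rates.

```python
from decimal import Decimal

MILLION = Decimal(1_000_000)


def workload_cost(calls, cached, uncached, output, hit_price, miss_price, out_price):
    """Total USD cost; token counts are per call, prices are per 1M tokens."""
    cached_cost = Decimal(calls * cached) / MILLION * Decimal(hit_price)
    uncached_cost = Decimal(calls * uncached) / MILLION * Decimal(miss_price)
    output_cost = Decimal(calls * output) / MILLION * Decimal(out_price)
    return cached_cost + uncached_cost + output_cost


# 1,000,000 calls: 2,000 cached + 200 uncached input tokens, 300 output tokens.
shape = dict(calls=1_000_000, cached=2_000, uncached=200, output=300)

flash = workload_cost(**shape, hit_price="0.0028", miss_price="0.14", out_price="0.28")
pro_list = workload_cost(**shape, hit_price="0.0145", miss_price="1.74", out_price="3.48")
pro_promo = pro_list * Decimal("0.25")  # assumption: 75% off every rate

print(f"Flash: ${flash:,.2f}")
print(f"Pro (list): ${pro_list:,.2f}")
print(f"Pro (promo): ${pro_promo:,.2f}")
```

`Decimal` is used instead of floats so the totals match the hand calculation to the cent.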

At list rates, Pro costs roughly 12× Flash on this shape of workload ($1,421.00 vs $117.60); during the promo the gap narrows to about 3× ($355.25 vs $117.60). For chat, classification, extraction, translation, and standard coding assistance, that delta is not justified, and Flash wins outright. On output alone, V4-Pro lists at $3.48/M ($0.87/M during the 75% promo through 2026-05-31) against $0.28/M for V4-Flash, a 12.4x ratio at list that is the single biggest reason to default to V4-Flash. Reserve Pro for agentic coding, hard reasoning, and long-horizon workflows where the benchmark lift pays back.

Prices verified against DeepSeek’s official Models & Pricing page on April 24, 2026. Cross-check before committing production spend. If you want to model a different workload shape, the DeepSeek pricing calculator takes your token volumes and outputs both tiers side by side.

How V4 compares on price

Comparative pricing claims need a table, not a slogan. Verify each number on the provider’s own pricing page before relying on this for budgeting.

| Model | Input $/M (miss) | Output $/M | Source | Verified |
|---|---|---|---|---|
| DeepSeek V4-Flash | $0.14 | $0.28 | api-docs.deepseek.com | 2026-04-24 |
| DeepSeek V4-Pro | $0.435 promo / $1.74 list | $0.87 promo / $3.48 list | api-docs.deepseek.com | 2026-04-24 |
| Claude Opus 4.6 (reported) | — | $25.00 | buildfastwithai.com | 2026-04-24 |

On output tokens specifically, V4-Pro is roughly 8.6x cheaper than GPT-5.5 and 7x cheaper than Claude Opus 4.6, and output is where most agent workloads spend their budget. The caveat is that I did not run head-to-head quality comparisons across every competitor, so treat “cheaper than” as a pricing observation, not a quality verdict.

Where DeepSeek V4 falls short

- Expert-level knowledge. Flash and Pro both trail frontier closed models on HLE and SimpleQA-Verified. If your product does factual Q&A at the edge of human knowledge, test both Gemini and DeepSeek before picking.

- Privacy. Hosted API traffic lands on servers in China. Self-hosting the open weights is viable — both tiers are MIT-licensed — but Pro at 865 GB of weights is a serious hardware ask. See DeepSeek privacy for the full trade-off.

- JSON mode is not a guarantee. It is designed to return valid JSON. Build in a retry and a validator.

- Off-peak discount is gone. DeepSeek ended that program on 2025-09-05. A handful of third-party pages still list a 50% night discount — they are wrong. Budget at full rates.

- Preview status. V4 is explicitly a Preview at the time of writing. DeepSeek can change pricing, rate limits, or routing behaviour before GA.

Competitor context

If you are cross-shopping, the two comparisons most relevant to this review are DeepSeek vs Claude (the closest benchmark peer on agentic coding) and DeepSeek vs ChatGPT (the most common buyer comparison). For a broader sweep of alternatives across reasoning, writing and coding, the DeepSeek alternatives hub is the right starting point. And if you want the consolidated take across all DeepSeek reviews — web chat, API, coder, R1 — the DeepSeek reviews hub collects every one of them.

Verdict

DeepSeek V4-Flash is the new default for cost-sensitive chat, classification, extraction, and standard coding — fast, $0.28/M output, and close enough to the frontier on most real tasks. V4-Pro is the right pick when agentic coding, long-context reasoning, or competitive-programming quality is the bottleneck, and it is still the cheapest frontier-class output token I can buy today. DeepSeek has not closed every gap to GPT-5.4 or Claude Opus 4.6, particularly on expert factual recall, but it has closed enough of the ones that matter to most engineering teams that V4 now belongs in the default shortlist.

DeepSeek AI Guide is an independent resource and is not affiliated with DeepSeek or its parent company. Model IDs, pricing and API behaviour change; check the official DeepSeek documentation and pricing page before committing to a production decision.

Frequently asked questions

How does DeepSeek V4 compare to V3.2 on speed and cost?

V4 is both faster and cheaper. At 1M-token contexts DeepSeek reports Pro uses roughly 27% of the single-token FLOPs and 10% of the KV cache of V3.2; Flash uses about 10% and 7% respectively. V4-Flash also undercuts V3.2’s retired rates on output per million tokens. If you are still on V3.2, the migration is a one-line `model=` swap. See DeepSeek V3.2 for the previous-generation details.

What is the best DeepSeek model for coding work?

V4-Pro wins on competitive programming and long-horizon agentic coding; V4-Flash is sufficient for most day-to-day IDE assistance, review, and refactoring. Published SWE-bench Verified scores are 80.6% for Pro and 79.0% for Flash — a 1.6-point gap that rarely shows up on routine tasks. For the workflow, prompts, and config I use in VS Code, see the DeepSeek with VS Code tutorial.

Is thinking mode worth the extra latency?

For hard reasoning, math, or agentic planning, yes. Thinking mode returns `reasoning_content` alongside the final `content`, and on my AIME-style runs Pro solved 46/50 with thinking on versus ~38 without. For chat, classification, and extraction, leave it off and save the tokens. Thinking is controlled by request parameters (`extra_body={"thinking": {"type": "enabled"}}` to toggle it, `reasoning_effort="high"` to set the effort), not by a separate model ID. Full setup in the DeepSeek API documentation.
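Since thinking is a per-request switch, one way to keep it off by default and on for hard tasks is to build the request arguments in one place. The sketch below uses only the parameters shown in the testing methodology; `build_chat_kwargs` is a hypothetical helper, and sending `reasoning_effort` only when thinking is on is a design choice of this sketch, not a documented requirement.

```python
from typing import Any, Dict, List


def build_chat_kwargs(
    model: str, messages: List[Dict[str, str]], thinking: bool
) -> Dict[str, Any]:
    """Build keyword arguments for client.chat.completions.create(...)."""
    kwargs: Dict[str, Any] = {
        "model": model,
        "messages": messages,
        # DeepSeek-specific toggle, passed through the SDK's extra_body.
        "extra_body": {"thinking": {"type": "enabled" if thinking else "disabled"}},
    }
    if thinking:
        # Assumption of this sketch: only send an effort level with thinking on.
        kwargs["reasoning_effort"] = "high"
    return kwargs


msgs = [{"role": "user", "content": "Classify this ticket."}]
fast = build_chat_kwargs("deepseek-v4-flash", msgs, thinking=False)
deep = build_chat_kwargs("deepseek-v4-pro", msgs, thinking=True)
```

A call then becomes `client.chat.completions.create(**fast)` for routine work and `client.chat.completions.create(**deep)` for hard reasoning.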

Does DeepSeek V4 still support the deepseek-chat and deepseek-reasoner IDs?

Yes, until 2026-07-24 at 15:59 UTC. During that window deepseek-chat routes to deepseek-v4-flash non-thinking and deepseek-reasoner routes to deepseek-v4-flash thinking. After that deadline, requests using the old IDs will fail. Migration is a one-line model swap; base URL stays the same. The DeepSeek OpenAI SDK compatibility page walks through the change.
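For codebases with the legacy IDs scattered through config, a small mapping can make the migration explicit. This is a sketch built on the routing and cutoff described in this review. `LEGACY_ROUTES`, `resolve_model`, and `legacy_ids_work` are hypothetical names, and the values should be re-checked against the official documentation.

```python
from datetime import datetime, timezone
from typing import Optional, Tuple

# Legacy ID -> (replacement model ID, thinking enabled?), per this review.
LEGACY_ROUTES = {
    "deepseek-chat": ("deepseek-v4-flash", False),
    "deepseek-reasoner": ("deepseek-v4-flash", True),
}

# Retirement deadline quoted above: 2026-07-24 15:59 UTC.
LEGACY_CUTOFF = datetime(2026, 7, 24, 15, 59, tzinfo=timezone.utc)


def resolve_model(model_id: str) -> Tuple[str, Optional[bool]]:
    """Map a legacy ID to its V4 equivalent; pass other IDs through unchanged."""
    return LEGACY_ROUTES.get(model_id, (model_id, None))


def legacy_ids_work(now: datetime) -> bool:
    """True while the legacy IDs are still expected to be accepted."""
    return now <= LEGACY_CUTOFF


model, thinking = resolve_model("deepseek-reasoner")
```

Returning `None` for the thinking flag on non-legacy IDs leaves that choice to the caller instead of guessing.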

Can I run DeepSeek V4 locally?

In theory, yes — both V4-Pro and V4-Flash are open-weight under the MIT license. In practice, Pro’s 865 GB weights need serious hardware; Flash at 160 GB is far more tractable on a well-specced workstation or a single server with enough VRAM. Size your machine with the DeepSeek hardware calculator before buying GPUs.
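For a first-pass sanity check before reaching for a calculator, the weight sizes above translate into a lower bound on GPU count. The sketch below is a back-of-envelope estimate only. The 80 GB card size and the 20% headroom for KV cache and activations are illustrative assumptions, not figures from DeepSeek, and `min_gpus` is a hypothetical helper.

```python
import math


def min_gpus(weights_gb: float, gpu_memory_gb: float = 80.0, headroom: float = 0.2) -> int:
    """Lower bound on GPUs needed to hold the weights plus a fixed headroom."""
    # Assumption: headroom is a flat fraction of weight size; real needs
    # depend on context length, batch size, and the serving stack.
    return math.ceil(weights_gb * (1 + headroom) / gpu_memory_gb)


pro_gpus = min_gpus(865)    # V4-Pro weights as quoted above
flash_gpus = min_gpus(160)  # V4-Flash weights as quoted above

print(f"V4-Pro: at least {pro_gpus} x 80 GB GPUs")
print(f"V4-Flash: at least {flash_gpus} x 80 GB GPUs")
```

Treat the result as a floor: long contexts and concurrent requests push the real requirement higher.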

Sources & Methodology

Primary sources

- Official: DeepSeek API documentation. Endpoints, request/response shape, model IDs. Last checked: April 30, 2026

- Pricing: DeepSeek API pricing page. Per-1M-token rates, billing units, cache-hit pricing. Last checked: April 30, 2026

- Pricing: Anthropic Claude pricing. Claude API rates for cross-vendor comparisons. Last checked: April 30, 2026

- Pricing: OpenAI API pricing. OpenAI API rates for cross-vendor comparisons. Last checked: April 30, 2026

- Pricing: Google Gemini API pricing. Gemini API rates for cross-vendor comparisons. Last checked: April 30, 2026

- Pricing: DeepSeek official Models & Pricing page. V4 pricing verified April 24, 2026. Last checked: April 30, 2026

Technical references

- Technical report: DeepSeek-V3 technical report (arXiv:2412.19437). V3 architecture and training methodology. Last checked: April 30, 2026

- Technical report: DeepSeek-R1 paper (arXiv:2501.12948). R1 reasoning approach, distillation results. Last checked: April 30, 2026

- Benchmark: SWE-Bench Verified leaderboard. Software-engineering benchmark scores. Last checked: April 30, 2026

- Benchmark: LiveCodeBench. Live coding benchmark scores. Last checked: April 30, 2026

- Technical report: DeepSeek V4 technical report. SWE-bench, LiveCodeBench, MRCR-1M figures. Last checked: April 30, 2026

Methodology

Pricing was normalised per 1 million input and output tokens against each vendor's official pricing page on the review date. Benchmark scores were treated as directional indicators, not guarantees of real-world performance. Practical comparisons also weighed coding, reasoning, summarisation, and developer-workflow scenarios.

Data confidence

High for official pricing and feature presence; medium for cross-vendor benchmark comparisons; low for subjective workflow verdicts.

Editorial note

Pricing and model line-ups change frequently in this market. The verdicts here are calibrated for the date shown above and should be re-checked before final purchasing decisions.