DeepSeek V3 Review: A Practitioner’s Take in 2026

Should you still build on DeepSeek V3 in April 2026, or has the lineage moved on? This DeepSeek V3 review answers that directly: V3 was a landmark December 2024 release that proved a 671B-parameter Mixture-of-Experts model could match closed-source flagships at a fraction of the training spend, but the API surface has rolled forward through V3.1, V3.2, and now V4. I ran V3 in production from January 2025 onward, kept notes on its quirks, and have since migrated workloads to V3.2 and V4-Flash. Below: the scorecard, benchmark numbers from the original technical report, where V3 still earns its keep, where it falls short, and what to use instead today.

Our verdict: a milestone model, now superseded

DeepSeek V3 is the model that made Western developers take Chinese open-weight LLMs seriously. It still runs well as a self-hosted base for organisations that captured the original release. But for new API workloads in April 2026, V3 is the wrong default — its successors are cheaper, faster, and better. The scorecard reflects V3 as it shipped, not as a current API recommendation.

| Dimension | Score (out of 5) | Notes |
|---|---|---|
| Speed | 3.5 | Solid for a 671B MoE; later V3-0324 update pushed inference faster. |
| Output quality | 4 | Strong on math and code; verbose on open-ended writing. |
| Pricing (at launch) | 4.5 | $0.27/M input (cache miss), $1.10/M output; undercut frontier closed models heavily in early 2025. |
| Privacy posture | 2.5 | Hosted API processes data on servers in China; open weights mitigate this for self-hosters. |
| Ecosystem fit | 4 | OpenAI-SDK compatible; broad Hugging Face and Ollama support from day one. |
| Overall | 3.7 / 5 | Historically excellent; not the right new build target in 2026. |

The headline: if you already self-host the open weights and your workload is steady, V3 is fine. If you are picking a hosted API model today, jump straight to V4-Flash via the DeepSeek models hub and skip the historical lineage.

Who should use it, who shouldn’t

Use V3 if:

- You already have a self-hosted V3 deployment running on your own H100/H200 cluster and the workload is stable.

- You are doing reproducibility research that requires the December 2024 checkpoint specifically.

- You are studying MoE architecture — V3’s auxiliary-loss-free load balancing and multi-token prediction objective remain interesting reference designs.

Don’t use V3 if:

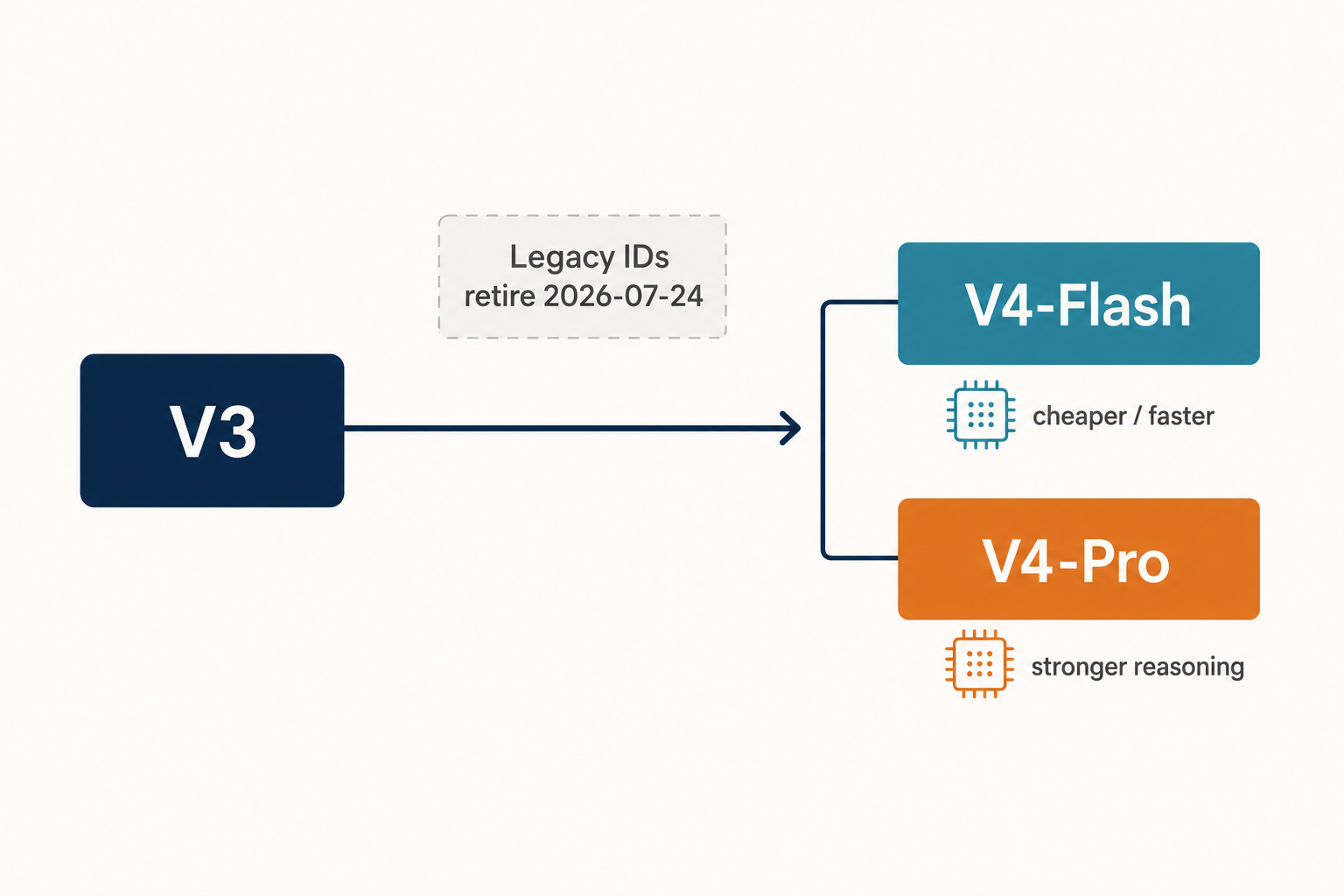

- You are building new on the hosted API. The legacy `deepseek-chat` and `deepseek-reasoner` aliases now route to DeepSeek V4-Flash, and they retire entirely on 2026-07-24 at 15:59 UTC.

- You need long context. V3 capped at 128K tokens; V4 ships a 1,000,000-token context with up to 384,000 tokens of output.

- You need built-in thinking mode. V3 was non-thinking; reasoning lived in the separate R1 model.

Testing methodology

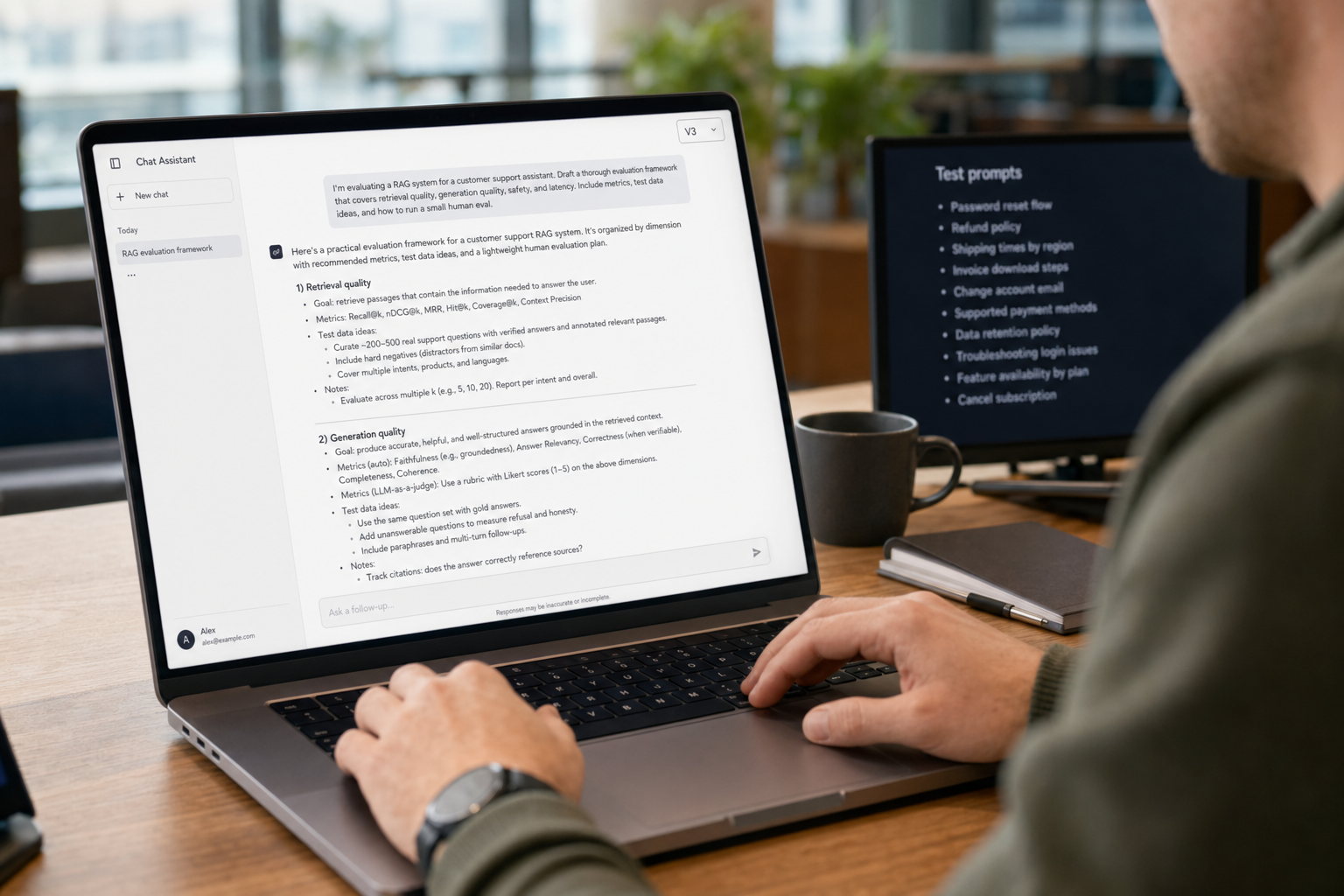

I tested V3 between January and August 2025 on three surfaces: the official DeepSeek hosted API (then aliased as deepseek-chat), a self-hosted FP8 deployment on an 8×H100 node, and the model card on Hugging Face for offline evaluation. Workloads spanned production code review for a TypeScript codebase, technical writing assistance, mathematical proof checking, and a multilingual customer-support classifier. Results were tracked against a parallel ChatGPT (GPT-4o) and Claude 3.5 Sonnet pipeline running the same prompts.

Architecture under test

DeepSeek’s V3 technical report describes the model as a strong Mixture-of-Experts language model with 671B total parameters, of which 37B are activated for each token. It adopts the Multi-head Latent Attention (MLA) and DeepSeekMoE architectures and pioneers an auxiliary-loss-free strategy for load balancing. The team pre-trained V3 on 14.8 trillion diverse and high-quality tokens, followed by Supervised Fine-Tuning and Reinforcement Learning stages. The MoE design is what made V3 viable on a constrained budget: only about 5.5% of the parameters (37B of 671B) fire per token.
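To make the auxiliary-loss-free idea concrete, here is a toy sketch in plain Python. It is not DeepSeek's implementation: the expert count, the skewed random scores, the step size `gamma`, and the function names are all assumptions chosen for illustration. It shows the general mechanism the report describes: a per-expert bias influences which experts are selected, the gate weights still come from the unbiased scores, and the bias is nudged after each step according to observed load.

```python
import random

def route(scores, bias, k):
    """Select top-k experts by score + bias; weight them by the raw scores."""
    ranked = sorted(range(len(scores)), key=lambda i: scores[i] + bias[i], reverse=True)
    chosen = ranked[:k]
    total = sum(scores[i] for i in chosen)
    return {i: scores[i] / total for i in chosen}

def update_bias(bias, load, gamma):
    """Lower the bias of overloaded experts and raise it for underloaded ones."""
    mean = sum(load) / len(load)
    new_bias = []
    for b, n in zip(bias, load):
        if n > mean:
            new_bias.append(b - gamma)
        elif n < mean:
            new_bias.append(b + gamma)
        else:
            new_bias.append(b)
    return new_bias

def simulate(num_experts=8, k=2, tokens=512, steps=200, gamma=0.01, seed=0):
    """Return the max/mean expert load ratio observed at every step."""
    rng = random.Random(seed)
    bias = [0.0] * num_experts
    ratios = []
    for _ in range(steps):
        load = [0] * num_experts
        for _ in range(tokens):
            # Positive toy affinities; expert 0 is deliberately favoured.
            scores = [1e-6 + 0.7 * rng.random() + (0.3 if i == 0 else 0.0)
                      for i in range(num_experts)]
            for expert in route(scores, bias, k):
                load[expert] += 1
        ratios.append(max(load) / (sum(load) / num_experts))
        bias = update_bias(bias, load, gamma)
    return ratios

ratios = simulate()
print(f"first step max/mean load: {ratios[0]:.2f}")
print(f"last step max/mean load:  {ratios[-1]:.2f}")
```

In this toy setup the favoured expert starts out heavily overloaded and the bias updates pull the load ratio back toward 1 without adding any balancing term to a loss function.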

Results by task type

Coding

V3 was the standout open model for code at release. On HumanEval-Mul it cleared the open-source field, and on real-world TypeScript review it produced cleaner refactors than GPT-4o on multi-file context up to about 60K tokens. It struggled past 80K — coherence degraded well before the advertised 128K window, a known weakness across long-context models of that era. The follow-up V3-0324 checkpoint addressed this somewhat, with stronger reasoning, faster code generation, and improved front-end design capabilities over the December release.

Mathematical reasoning

V3 was excellent at math without thinking-mode overhead. On educational benchmarks such as MMLU, MMLU-Pro, and GPQA, DeepSeek-V3 outperformed all other open-source models, achieving 88.5 on MMLU, 75.9 on MMLU-Pro, and 59.1 on GPQA per the technical report. For workloads needing explicit step-by-step reasoning, however, you wanted R1, not V3; see DeepSeek R1.

Writing

V3 had the open-source curse of verbosity. It would produce well-structured prose, but it tended to over-elaborate, especially in non-thinking conversational use. Tightening output style required prompt engineering (see the DeepSeek prompt engineering tutorial for techniques that still apply to the V4 family).

Benchmark snapshot

The numbers below are the original V3 (Chat) figures DeepSeek published. Cross-check the table in the technical report before quoting them in formal documents.

| Benchmark | DeepSeek V3 | Source |
|---|---|---|
| MMLU | 88.5 | V3 technical report |
| MMLU-Pro | 75.9 | V3 technical report |
| GPQA-Diamond | 59.1 | V3 technical report |
| DROP (3-shot F1) | 91.6 | V3 technical report (GitHub README) |
| MATH-500 | 90.2 | Helicone summary of report |
| SWE-Bench Verified | 42.0 | Helicone summary of report |
| GSM8K (Azure listing) | 89.3 | Microsoft Foundry catalog |

Measured against the other open-source models available at release, V3 was at or near the top in nearly every category, particularly in code and math.

Value for money

V3 launched with API pricing that was unusually aggressive for a frontier-class model. At the time, the published rates were $0.27 per million input tokens (cache miss) and $1.10 per million output tokens — a fraction of what GPT-4o or Claude 3.5 Sonnet charged. Those rates are no longer current. V3.2 superseded them in late 2025, and V4-Flash undercut V3.2 on 2026-04-24. For today’s DeepSeek API pricing, do not quote the V3 numbers as live.

Worked example: V4-Flash today versus V3 historical

Take a workload of 1,000,000 calls per month, each with a 2,000-token cached system prompt, a 200-token uncached user message, and a 300-token response. Using deepseek-v4-flash at the rates published as of April 2026:

```
Input, cache hit  : 2,000,000,000 tokens × $0.0028/M = $5.60
Input, cache miss :   200,000,000 tokens × $0.14/M   = $28.00
Output            :   300,000,000 tokens × $0.28/M   = $84.00
                                                       -------
Total                                                  $117.60
```

Historically, the same workload at original V3 rates ($0.27 input miss / $1.10 output, no published cache discount on day one) would have run to roughly $924 (2,200M input tokens × $0.27/M plus 300M output tokens × $1.10/M), close to eight times higher. V4-Flash is the cheapest place to run this profile inside the DeepSeek family today; for frontier-tier work you’d consider V4-Pro at roughly seven times the spend. Use the DeepSeek pricing calculator for your own numbers.
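The arithmetic in this worked example can be reproduced with a few lines of Python. This is a minimal sketch: the `Rates` class and `monthly_cost` function are hypothetical names, the rates are the ones quoted in this review, and the V3 line follows the assumption stated above that cached input was billed at the cache-miss rate.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Rates:
    """USD per one million tokens."""
    cache_hit: float
    cache_miss: float
    output: float

def monthly_cost(calls, cached_in, uncached_in, out, rates):
    """Total monthly spend in USD for a fixed per-call token profile."""
    per_million = 1_000_000
    hit = calls * cached_in / per_million * rates.cache_hit
    miss = calls * uncached_in / per_million * rates.cache_miss
    output = calls * out / per_million * rates.output
    return round(hit + miss + output, 2)

# Rates as quoted in this review; check the live pricing page before relying on them.
V4_FLASH = Rates(cache_hit=0.0028, cache_miss=0.14, output=0.28)
# Assumption from the text: no cache discount, so cached input bills as a miss.
V3_LAUNCH = Rates(cache_hit=0.27, cache_miss=0.27, output=1.10)

v4_total = monthly_cost(1_000_000, 2_000, 200, 300, V4_FLASH)
v3_total = monthly_cost(1_000_000, 2_000, 200, 300, V3_LAUNCH)
print(f"V4-Flash: ${v4_total:,.2f}")
print(f"V3 at launch rates: ${v3_total:,.2f}")
```

Swapping in your own call volume and token counts gives a first-order estimate; it ignores rate limits, retries, and any tiered discounts.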

How V3 actually worked through the API

If you’re maintaining a legacy V3 integration, two technical points matter. First, the API is and always was stateless — chat requests hit POST /chat/completions, the OpenAI-compatible endpoint, and the client must resend the full message history on every call. The web chat keeps session history; the API does not. Second, V3 was reachable via the deepseek-chat alias. Today that alias still resolves, but it now points to V4-Flash in non-thinking mode, and the alias retires on 2026-07-24 at 15:59 UTC.
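Because the API is stateless, the client has to carry the conversation itself. The sketch below shows one way to do that; the `Conversation` class is a hypothetical helper, and the stub client exists only so the example runs offline. With the real OpenAI SDK you would pass an `OpenAI(...)` client instead of the stub.

```python
from types import SimpleNamespace

class Conversation:
    """Client-side store for the message history the API does not keep."""

    def __init__(self, system_prompt=None):
        self.messages = []
        if system_prompt:
            self.messages.append({"role": "system", "content": system_prompt})

    def ask(self, client, model, user_text):
        """Append the user turn, send the FULL history, then record the reply."""
        self.messages.append({"role": "user", "content": user_text})
        resp = client.chat.completions.create(model=model, messages=list(self.messages))
        answer = resp.choices[0].message.content
        self.messages.append({"role": "assistant", "content": answer})
        return answer

class _StubCompletions:
    """Offline stand-in for client.chat.completions; records what it was sent."""

    def __init__(self):
        self.seen = []

    def create(self, model, messages, **kwargs):
        self.seen.append(list(messages))
        message = SimpleNamespace(content=f"reply {len(self.seen)}")
        return SimpleNamespace(choices=[SimpleNamespace(message=message)])

def make_stub_client():
    """Build an object shaped like the SDK client, for demonstration only."""
    return SimpleNamespace(chat=SimpleNamespace(completions=_StubCompletions()))

client = make_stub_client()
convo = Conversation(system_prompt="You review TypeScript diffs.")
convo.ask(client, "deepseek-v4-flash", "Summarise this PR diff.")
convo.ask(client, "deepseek-v4-flash", "Now list the risky changes.")

sent = client.chat.completions.seen
print([len(messages) for messages in sent])  # [2, 4]
```

The second request carries four messages (system, user, assistant, user), which is the practical meaning of "resend the full message history on every call".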

A minimal Python call against the current V4 endpoint, using the OpenAI SDK pattern, looks like this:

```python
from openai import OpenAI

# Point the OpenAI SDK at DeepSeek's OpenAI-compatible endpoint.
client = OpenAI(
    base_url="https://api.deepseek.com",
    api_key="YOUR_KEY",
)

resp = client.chat.completions.create(
    model="deepseek-v4-flash",
    messages=[{"role": "user", "content": "Summarise this PR diff."}],
    temperature=0.0,  # low-variance output for code work
    max_tokens=1024,
)

# The reply text lives on the first choice.
print(resp.choices[0].message.content)
```

Note `temperature=0.0` for code work: DeepSeek’s official guidance pairs 0.0 with code and math, 1.3 with general conversation, and 1.5 with creative writing. The same SDK-swap pattern (just change `base_url` and `api_key`) also worked for V3 unchanged.
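If several call sites share these settings, the temperature guidance can live in one lookup. This is a small sketch: the task labels, the `temperature_for` name, and the fallback of 1.0 are assumptions of this example rather than anything defined by the API.

```python
# Values quoted in the text above; the task labels are hypothetical.
RECOMMENDED_TEMPERATURE = {
    "code": 0.0,
    "math": 0.0,
    "conversation": 1.3,
    "creative_writing": 1.5,
}

def temperature_for(task: str, default: float = 1.0) -> float:
    """Return the suggested temperature for a task label, or a fallback."""
    return RECOMMENDED_TEMPERATURE.get(task.strip().lower(), default)

print(temperature_for("code"))
print(temperature_for("Creative_Writing"))
print(temperature_for("summarisation"))
```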

Strengths that aged well

- Architectural openness. The full 671B-parameter MoE with 37B activated per token, complete with a detailed report, gave the research community a real reference design.

- Training efficiency. DeepSeek-V3 required only 2.788M H800 GPU hours for its full training, and the broader Reuters-sourced figure puts compute cost at roughly $5.6M — a fraction of GPT-4-class budgets. This was the headline that drove the January 2025 hype cycle.

- OpenAI SDK compatibility. Day-one drop-in for any team already on the OpenAI Python or Node libraries.

- Open weights on Hugging Face. Self-hosting was practical for organisations with the hardware budget. Note licensing: V3’s original base/chat weights shipped under a separate DeepSeek Model License (code MIT); newer V3.1, V3.2, R1, and the V4 family publish weights under MIT.

Weaknesses that pushed me to migrate

- 128K context ceiling. Adequate at launch; cramped by mid-2025. V4’s 1M-token default is the right answer for code repositories and long-document workflows.

- No native thinking mode. Reasoning required jumping to R1, which was a separate model. V4 collapses that into a `reasoning_effort` parameter on either tier; see the DeepSeek API documentation.

- Long-context coherence. Real-world degradation began well before the advertised limit, especially on multi-file code review.

- Alignment. Microsoft’s Azure listing notes that researchers have found DeepSeek V3 to be less aligned than other models, resulting in higher risks that the model will produce potentially harmful content and lower scores on safety and jailbreak benchmarks. Treat this as a real consideration for consumer-facing deployments.

- Hosted-API privacy. Data is processed on servers subject to Chinese law. The open weights are the mitigation if this matters to you; see DeepSeek privacy for the longer treatment.

Competitor context

At release, V3’s main reference points were GPT-4o and Claude 3.5 Sonnet. In independent re-tests, V3 scored 82.6 on HumanEval, ahead of GPT-4o, Claude 3.5 Sonnet, and Llama-3. For a head-to-head against the closed-source flagship of the era, see DeepSeek V3 vs GPT-4o; for the broader proprietary comparison, see DeepSeek vs Claude. For 2026 alternatives, the realistic shortlist runs through the V4 family on DeepSeek’s side and the current GPT-5, Claude 4, and Gemini 3 generations on the proprietary side. Version numbers in those families change frequently, so verify against vendor pricing pages before committing.

Migration path: V3 → V4 today

- Replace `model="deepseek-chat"` with `model="deepseek-v4-flash"`. Keep `base_url="https://api.deepseek.com"` as is.

- If you previously routed reasoning workloads to `deepseek-reasoner`, switch to `deepseek-v4-flash` (or `deepseek-v4-pro` for frontier-tier) and add `reasoning_effort="high"` with `extra_body={"thinking": {"type": "enabled"}}`. The response returns `reasoning_content` alongside the final `content`.

- Audit prompts that relied on V3’s 128K context. They will run unchanged at 1M, but you can now consolidate workflows that previously chunked input.

- Re-run your eval set. V4 changes some output style defaults; long-form prompts may want `temperature` adjustment per DeepSeek’s guidance.

- Complete migration before 2026-07-24 15:59 UTC. After that, requests with the legacy IDs will fail.
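The model-ID swap in the steps above can be centralised in one place rather than edited at every call site. The following is a sketch under the assumptions of this section: the `migrate_request` and `days_until_retirement` helpers are hypothetical, and the parameter names and retirement timestamp are the ones quoted in this review, so confirm them against the official documentation.

```python
from datetime import datetime, timezone

# Retirement time for the legacy aliases, as stated in this review.
LEGACY_RETIREMENT = datetime(2026, 7, 24, 15, 59, tzinfo=timezone.utc)

# legacy alias -> (replacement model ID, whether thinking mode should be enabled)
LEGACY_TO_V4 = {
    "deepseek-chat": ("deepseek-v4-flash", False),
    "deepseek-reasoner": ("deepseek-v4-flash", True),
}

def migrate_request(params):
    """Return a copy of chat-completion kwargs rewritten for V4 model IDs."""
    model = params.get("model")
    if model not in LEGACY_TO_V4:
        return dict(params)  # already migrated or not a legacy alias
    new_model, thinking = LEGACY_TO_V4[model]
    migrated = {**params, "model": new_model}
    if thinking:
        migrated["reasoning_effort"] = "high"
        extra = dict(migrated.get("extra_body") or {})
        extra["thinking"] = {"type": "enabled"}
        migrated["extra_body"] = extra
    return migrated

def days_until_retirement(now=None):
    """Whole days left before the legacy aliases stop resolving."""
    now = now or datetime.now(timezone.utc)
    return (LEGACY_RETIREMENT - now).days

old = {"model": "deepseek-reasoner", "messages": [{"role": "user", "content": "Prove it."}]}
print(migrate_request(old)["model"])
print(days_until_retirement(datetime(2026, 4, 30, tzinfo=timezone.utc)))
```

The result can be splatted into the SDK call, for example `client.chat.completions.create(**migrate_request(old))`. For frontier-tier work, point the mapping at `deepseek-v4-pro` instead.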

Verdict

DeepSeek V3 was the open-weight model that closed the gap with frontier proprietary systems on benchmarks that mattered, at a budget that made the closed labs uncomfortable. As a piece of engineering and as a research artefact, it remains worth studying. As a production target in April 2026, it’s a historical waypoint — the right move is to read this review for context, then build on V4-Flash. Browse DeepSeek reviews for the rest of the lineage.

DeepSeek AI Guide is an independent resource and is not affiliated with DeepSeek or its parent company. Model IDs, pricing and API behaviour change; check the official DeepSeek documentation and pricing page before committing to a production decision.

Frequently asked questions

Is DeepSeek V3 still available in 2026?

The open weights remain on Hugging Face under their original December 2024 release. The hosted API has rolled forward — the legacy deepseek-chat alias that originally pointed to V3 now routes to V4-Flash and retires on 2026-07-24 at 15:59 UTC. If you need V3 specifically, self-host from the open weights. For the current hosted experience, see the DeepSeek V4 page.

How does DeepSeek V3 compare to GPT-4o on benchmarks?

On the V3 technical report’s tables, V3 reached 88.5 on MMLU and posted competitive numbers across code and math benchmarks against GPT-4o-0513. Independent retests put V3 at 82.6 on HumanEval, ahead of GPT-4o on that specific benchmark. For a structured head-to-head, the DeepSeek V3 vs GPT-4o page walks through each task type with worked examples.

What context window does DeepSeek V3 support?

V3 supports 128K tokens. DeepSeek-V3 performs well across all context window lengths up to 128K on Needle-In-A-Haystack tests according to the official model card, though in real-world long-document use coherence tended to degrade earlier. By comparison, the V4 family ships a 1M-token default context. For a quick check on token budgets, use the DeepSeek token counter.

Can I run DeepSeek V3 locally?

Yes, with serious hardware. The 671B-parameter weights total roughly 685GB on Hugging Face including the multi-token-prediction module. You’ll want a multi-GPU node (8×H100 80GB or equivalent) and FP8 inference for tolerable latency. Quantised community builds run on smaller clusters at quality cost. The install DeepSeek locally tutorial covers the setup in detail.

Why did DeepSeek V3 cost so little to train?

Three factors: an FP8 mixed-precision training framework, the auxiliary-loss-free MoE load-balancing strategy, and tight algorithm-framework-hardware co-design that nearly fully overlapped computation with cross-node communication. DeepSeek-V3 required only 2.788M H800 GPU hours for its full training, with a Reuters-cited dollar cost around $5.6M — orders of magnitude below GPT-4-class budgets. For more on the company’s research output, see DeepSeek research papers.
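The dollar figure follows directly from the GPU-hour count. A quick check, assuming the $2 per H800 GPU-hour rental rate that the V3 technical report uses for its own cost estimate (the constant names are this example's):

```python
GPU_HOURS = 2_788_000            # total H800 GPU hours quoted above
RENTAL_USD_PER_GPU_HOUR = 2.00   # rental-price assumption used in the V3 technical report

training_cost_usd = GPU_HOURS * RENTAL_USD_PER_GPU_HOUR
print(f"${training_cost_usd / 1e6:.3f}M")  # $5.576M
```

That figure covers rented compute for the reported training run only; it does not include earlier research, ablations, data, or staff costs.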

Sources & Methodology

Primary sources

- Official: DeepSeek API documentation. Endpoints, request/response shape, model IDs. Last checked: April 30, 2026

- Pricing: DeepSeek API pricing page. Per-1M-token rates, billing units, cache-hit pricing. Last checked: April 30, 2026

- Model card: DeepSeek on Hugging Face. Weights, license, usage notes per model. Last checked: April 30, 2026

- Repository: DeepSeek-AI on GitHub. Open-weight release details, training/inference notes. Last checked: April 30, 2026

- Pricing: Anthropic Claude pricing. Claude API rates for cross-vendor comparisons. Last checked: April 30, 2026

- Pricing: OpenAI API pricing. OpenAI API rates for cross-vendor comparisons. Last checked: April 30, 2026

- Pricing: Google Gemini API pricing. Gemini API rates for cross-vendor comparisons. Last checked: April 30, 2026

Technical references

- Technical report: DeepSeek-V3 technical report (arXiv:2412.19437). V3 architecture and training methodology, MMLU/MMLU-Pro/GPQA scores, training cost. Last checked: April 30, 2026

- Technical report: DeepSeek-R1 paper (arXiv:2501.12948). R1 reasoning approach, distillation results. Last checked: April 30, 2026

- Benchmark: SWE-Bench Verified leaderboard. Software-engineering benchmark scores. Last checked: April 30, 2026

Context sources

- Analysis: Helicone, DeepSeek V3 benchmark summary. MATH-500 and SWE-Bench Verified figures for V3. Last checked: April 30, 2026

- News: Microsoft Foundry catalog, DeepSeek V3 listing. GSM8K Azure listing number, alignment risk note. Last checked: April 30, 2026

- News: Reuters, DeepSeek V3 ~$5.6M training cost. Compute-cost figure for V3 training. Last checked: April 30, 2026

Methodology

Pricing was normalised per 1 million input and output tokens against each vendor's official pricing page on the review date. Benchmark scores were treated as directional indicators, not guarantees of real-world performance. Practical comparisons also weighed coding, reasoning, summarisation, and developer-workflow scenarios.

Data confidence

High for official pricing and feature presence; medium for cross-vendor benchmark comparisons; low for subjective workflow verdicts.

Editorial note

Pricing and model line-ups change frequently in this market. The verdicts here are calibrated for the date shown above and should be re-checked before final purchasing decisions.