The Real DeepSeek Limitations You Should Know in 2026

If you are weighing DeepSeek V4 against GPT-5, Claude or Gemini for a production workload, the marketing pages will not tell you where it falls short. This guide catalogues the real DeepSeek limitations as of April 25, 2026 — the technical caveats, the regulatory friction, the benchmark gaps DeepSeek itself acknowledges, and the workflow gotchas that only show up after a week of real use. I run V4-Pro and V4-Flash in production today, and ran V3, V3.2 and R1 before that, so every point below traces to either hands-on testing or a primary source you can click through to. By the end you will know exactly where DeepSeek is the right choice and where it is not.

The short answer: where DeepSeek V4 still falls short

DeepSeek V4 is impressive on price and on agentic coding, but it is not a clean win against the closed-source frontier. DeepSeek itself describes V4 as following “a developmental trajectory that trails current-generation frontier models by approximately 3 to 6 months.” That candour is unusual, and it matches what I see in day-to-day testing. Below is the honest scorecard.

| Limitation | Severity | Affected surface |
|---|---|---|
| Text-only: no native image, audio or video input | High | API + chat |
| Trails GPT-5.4 and Gemini 3.1 Pro on world-knowledge benchmarks | Medium | API + chat |
| API is stateless: no server-side memory | Medium | API only |
| Country and government-device restrictions | Medium–High | App + API |
| Privacy: data processed under Chinese jurisdiction | High | App + API |
| No off-peak discount since 2025-09-05 | Low | API |
| Legacy deepseek-chat / deepseek-reasoner retire 2026-07-24 15:59 UTC | Medium | API |
| JSON mode designed-not-guaranteed; can return empty content | Medium | API |
| Think Max needs ≥384K context window allocated | Low | API |
| Local inference needs heavy GPU infrastructure | High | Self-host |

1. V4 is text-only — no image, audio or video input

This is the biggest functional gap for many readers. V4-Flash and V4-Pro both accept text only, unlike many of their closed-source peers, which can understand and generate audio, video, and images. If your pipeline needs vision (chart reading, screenshot analysis, document OCR with diagrams), V4 cannot do it natively as of April 2026. DeepSeek states that it is working on multimodal capabilities, so keep a fallback in place until those ship.

For image-aware DeepSeek work, you currently fall back to the older DeepSeek VL2 family, which is a separate model line with its own licensing and quality trade-offs.

2. Knowledge benchmarks still trail GPT-5.4 and Gemini 3.1 Pro

V4 closes most of the reasoning and coding gap, but factual recall is still where closed-source models lead. On knowledge tests the V4 models fall slightly behind the current frontier, specifically OpenAI’s GPT-5.4 and Google’s Gemini 3.1 Pro, which is consistent with DeepSeek’s own estimate of a 3 to 6 month lag.

Concretely: on Humanity’s Last Exam, V4-Pro scores 37.7, behind GPT-5.4 (39.8), Claude (40.0), and Gemini (44.4). If your workload is heavy on obscure factual lookups (legal precedent, scientific minutiae, enterprise wiki Q&A), you will feel this. For comparative depth see DeepSeek vs ChatGPT and DeepSeek vs Gemini.

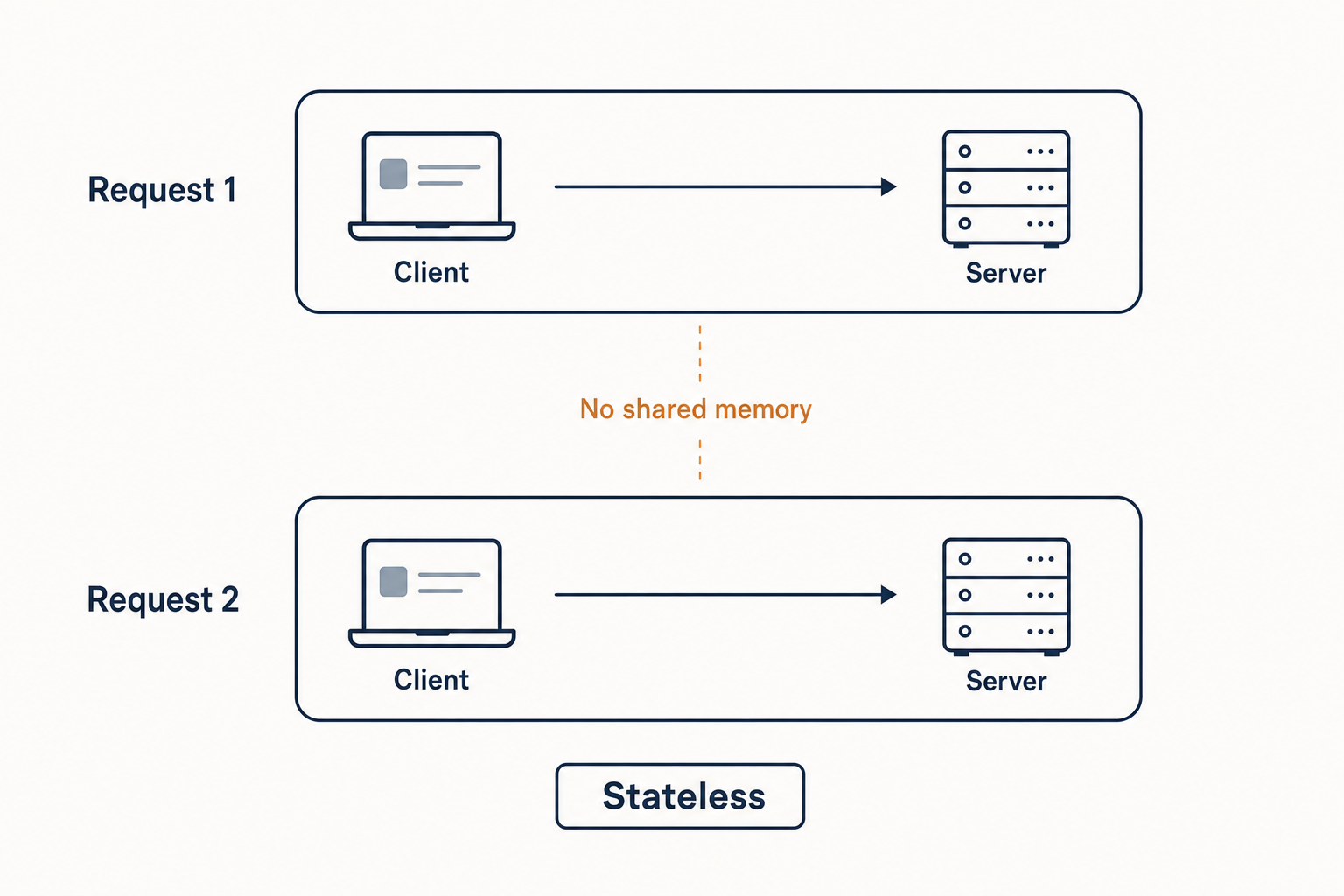

3. The API is stateless — and that surprises people

The web chat at chat.deepseek.com keeps your conversation history; the API does not. Chat requests hit POST /chat/completions, the OpenAI-compatible endpoint, and DeepSeek does not store prior turns server-side. Your client must resend the entire messages array on every call.

Minimal Python using the OpenAI SDK against DeepSeek V4-Flash:

```python
from openai import OpenAI

# DeepSeek exposes an OpenAI-compatible endpoint, so the OpenAI SDK works as-is.
client = OpenAI(
    base_url="https://api.deepseek.com",
    api_key="sk-...",
)

resp = client.chat.completions.create(
    model="deepseek-v4-flash",
    # The API is stateless: every call must carry the full conversation so far.
    messages=[
        {"role": "system", "content": "You are concise."},
        {"role": "user", "content": "Summarise the migration plan."},
    ],
    temperature=1.3,
    max_tokens=512,
)

print(resp.choices[0].message.content)
```

That stateless design is fine (every major provider does it the same way), but engineers coming from the chat UI sometimes assume “memory” carries over. It does not. See DeepSeek API best practices and DeepSeek context caching for ways to reduce the cost of resending history.
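One way to live with the stateless endpoint is to keep the history on the client and resend it on every turn. The sketch below is an illustration, not an official DeepSeek client: `Conversation` and the injected `send` callable are hypothetical names, and in real use `send` would be a thin wrapper around `client.chat.completions.create(...)` that returns the reply text. A fake backend stands in here so the example runs offline.

```python
from typing import Callable, Dict, List

Message = Dict[str, str]


class Conversation:
    """Client-side chat history for a stateless chat-completions API.

    `send` is a hypothetical callable: it receives the full message list
    and returns the assistant's reply text.
    """

    def __init__(self, send: Callable[[List[Message]], str], system: str = "") -> None:
        self._send = send
        self.messages: List[Message] = []
        if system:
            self.messages.append({"role": "system", "content": system})

    def ask(self, user_text: str) -> str:
        # Append the new user turn, then send the ENTIRE history.
        self.messages.append({"role": "user", "content": user_text})
        reply = self._send(list(self.messages))
        # Store the assistant turn so the next call includes it too.
        self.messages.append({"role": "assistant", "content": reply})
        return reply


# Offline stand-in for the API: reports how many messages it was sent.
def fake_send(messages: List[Message]) -> str:
    return f"received {len(messages)} messages"


chat = Conversation(fake_send, system="You are concise.")
first = chat.ask("Summarise the migration plan.")
second = chat.ask("Now shorten it.")
print(first)   # received 2 messages
print(second)  # received 4 messages
```

The second call carries four messages rather than two, which is exactly why input-token cost grows with conversation length.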

4. Country restrictions and government-device bans

DeepSeek’s regulatory footprint is uneven. Multiple US states, Australia, Taiwan, South Korea, Denmark and Italy introduced bans or other restrictions on DeepSeek-R1 shortly after its release, citing privacy and national security concerns. In 2025, several countries, including Italy, the United States and South Korea, barred government agencies from using DeepSeek on national security grounds. Germany also banned DeepSeek in Apple and Google app stores in 2025, citing illegal transfer of user data to China.

If you work in US public-sector procurement, UK central government, or any defence-adjacent contractor, treat DeepSeek as restricted by default and check your specific jurisdiction. The availability by country breakdown and latest US restrictions tracker are kept current.

5. Privacy: data processed under Chinese jurisdiction

Conversations sent to chat.deepseek.com or the official API traverse infrastructure subject to Chinese law. That is a structural fact, not a marketing point — and it is the single biggest reason enterprise procurement teams reject DeepSeek for sensitive workloads. The DeepSeek privacy explainer and the is DeepSeek safe guide go into the threat model.

The mitigation, if your security model demands it, is to run the open weights yourself. V4 is released under the standard MIT license, so self-hosting is permitted — see how to install DeepSeek locally.

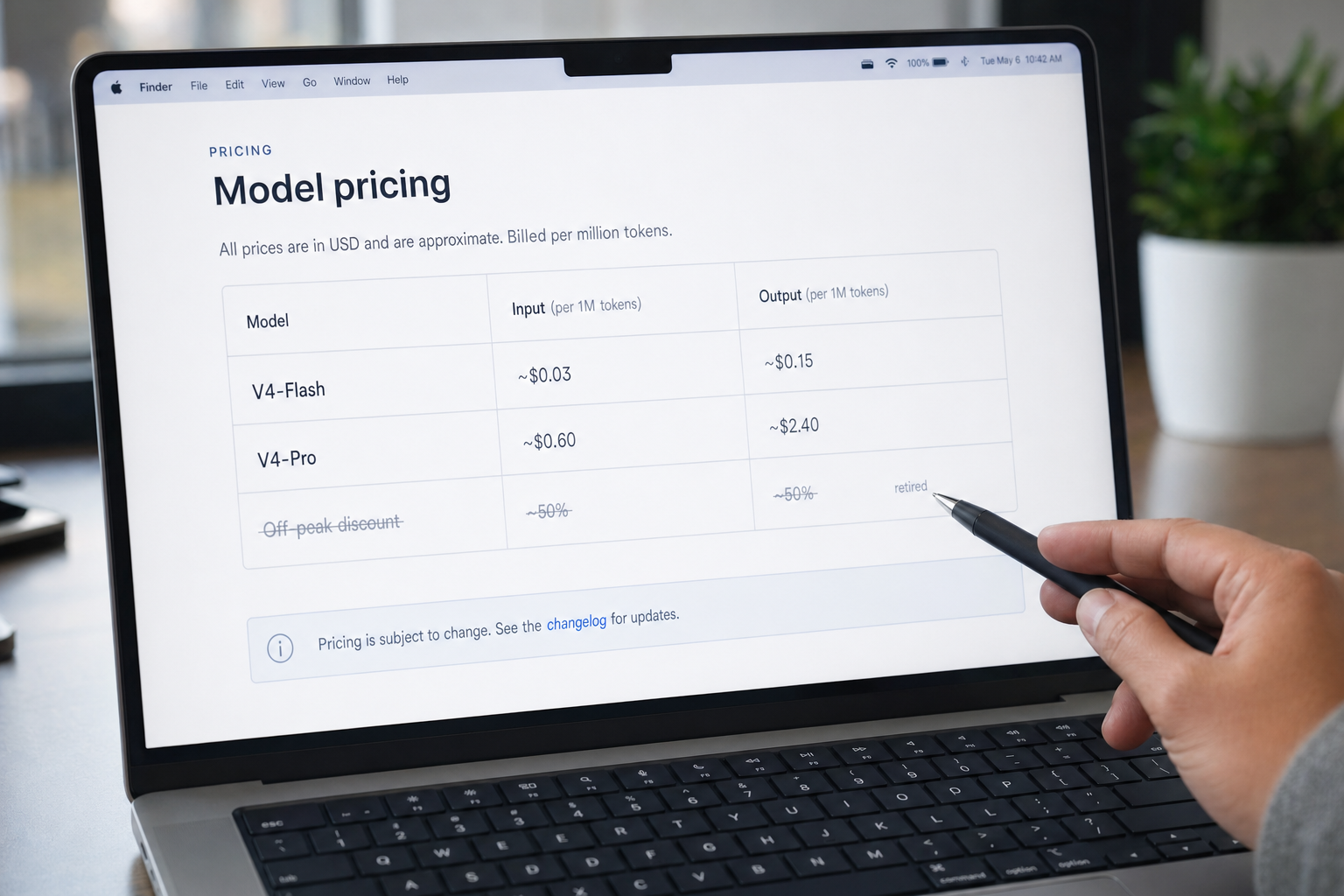

6. Pricing nuance: the off-peak discount is gone

Older DeepSeek tutorials still describe a 50%/75% night-time API discount. That promotion ended on 2025-09-05 and was not reintroduced when V4 shipped on April 24, 2026. If you costed a project against the old off-peak rates, redo the math at standard rates.

Current per-million-token pricing, sourced from DeepSeek’s official pricing page as of April 2026:

| Tier | Cache hit input | Cache miss input | Output |
|---|---|---|---|
| deepseek-v4-flash | $0.0028 | $0.140 | $0.280 |
| deepseek-v4-pro | $0.003625 promo / $0.0145 list | $0.435 promo / $1.740 list | $0.87 promo / $3.480 list |

Worked cost example — V4-Flash

One million calls per day with a 2,000-token cached system prompt, a 200-token user message, and a 300-token reply, on deepseek-v4-flash:

```text
Cached input  : 2,000,000,000 tokens × $0.0028/M = $  5.60
Uncached input:   200,000,000 tokens × $0.140/M  = $ 28.00
Output        :   300,000,000 tokens × $0.280/M  = $ 84.00
                                                   ---------
Total                                              $117.60
```

Notice you cannot skip the uncached-input line: each new user message is a miss against the cached prefix. Run your own numbers in the DeepSeek pricing calculator. At the same workload, V4-Pro comes to $1,421 at list prices, roughly a 12× spread, so model selection matters.
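The same arithmetic can be scripted so that it is easy to rerun when prices or traffic change. This is a minimal sketch: the rates are copied from the pricing table above, while `Rates` and `daily_cost` are names made up for this example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rates:
    """USD per one million tokens."""
    cache_hit: float
    cache_miss: float
    output: float


# Values copied from the pricing table in this article (April 2026).
FLASH = Rates(cache_hit=0.0028, cache_miss=0.140, output=0.280)
PRO_PROMO = Rates(cache_hit=0.003625, cache_miss=0.435, output=0.87)
PRO_LIST = Rates(cache_hit=0.0145, cache_miss=1.740, output=3.480)


def daily_cost(rates: Rates, calls: int, cached_in: int, uncached_in: int, out: int) -> float:
    """Daily cost in USD for `calls` requests with the given per-call token counts."""
    per_call = (
        cached_in * rates.cache_hit
        + uncached_in * rates.cache_miss
        + out * rates.output
    ) / 1_000_000
    return round(per_call * calls, 2)


# The workload from the worked example: 1M calls/day, 2,000 cached input
# tokens, 200 uncached input tokens and 300 output tokens per call.
workload = dict(calls=1_000_000, cached_in=2_000, uncached_in=200, out=300)
flash = daily_cost(FLASH, **workload)
pro_list = daily_cost(PRO_LIST, **workload)
pro_promo = daily_cost(PRO_PROMO, **workload)
print(flash, pro_promo, pro_list)
```

For this workload the script gives $117.60 for V4-Flash, $355.25 for V4-Pro at the promo rate and $1,421.00 at list, so the list-price ratio works out to about 12.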

7. Legacy model IDs retire on July 24, 2026

If your codebase calls model="deepseek-chat" or model="deepseek-reasoner", you have a hard deadline. Both IDs will be fully retired and inaccessible after July 24, 2026, 15:59 UTC. Until then they route to deepseek-v4-flash, in non-thinking and thinking mode respectively. Migration is a one-line swap to deepseek-v4-flash or deepseek-v4-pro; the base_url does not change. The API documentation covers the full migration path.
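If model IDs are scattered across a codebase, a small lookup can centralise the swap. The sketch below only encodes the routing and deadline described above. `migrate_model_id` and `is_retired` are hypothetical helper names, and how thinking mode is actually switched on per request is deliberately left to the official API documentation.

```python
from datetime import datetime, timezone
from typing import Optional, Tuple

# Retirement time quoted in this article.
LEGACY_RETIREMENT = datetime(2026, 7, 24, 15, 59, tzinfo=timezone.utc)

# Legacy ID -> (replacement ID, whether the old ID implied thinking mode).
LEGACY_MODELS = {
    "deepseek-chat": ("deepseek-v4-flash", False),
    "deepseek-reasoner": ("deepseek-v4-flash", True),
}


def migrate_model_id(model: str) -> Tuple[str, Optional[bool]]:
    """Map a legacy ID to its V4 equivalent.

    Returns (model_id, wants_thinking). Non-legacy IDs pass through
    unchanged with wants_thinking=None, meaning "no opinion".
    """
    return LEGACY_MODELS.get(model, (model, None))


def is_retired(now: datetime) -> bool:
    """True once the legacy IDs are past their retirement time."""
    return now > LEGACY_RETIREMENT


print(migrate_model_id("deepseek-reasoner"))
print(migrate_model_id("deepseek-v4-pro"))
```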

8. JSON mode is designed, not guaranteed

DeepSeek’s structured-output mode is not bulletproof. The official guidance: include the literal word “json” plus a small example schema in your prompt, set max_tokens high enough to avoid truncation, and handle the case where the API returns empty content. Treat it as best-effort and validate every response with a parser. The JSON mode reference shows the exact prompt pattern.
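A defensive wrapper makes the "validate every response" advice concrete. The sketch below assumes you expect a JSON object back; `parse_json_reply`, `request_json` and the injected `call_model` callable are hypothetical names, and `call_model` stands in for whatever function performs the API call and returns `message.content`.

```python
import json
from typing import Any, Callable, Dict, Optional


def parse_json_reply(content: Optional[str]) -> Optional[Dict[str, Any]]:
    """Return the parsed JSON object, or None if the reply is unusable."""
    if content is None or not content.strip():
        return None  # JSON mode can return empty content
    try:
        parsed = json.loads(content)
    except json.JSONDecodeError:
        return None  # truncated or malformed output
    # This sketch only accepts objects; arrays and scalars count as failures.
    return parsed if isinstance(parsed, dict) else None


def request_json(call_model: Callable[[], Optional[str]], max_attempts: int = 3) -> Dict[str, Any]:
    """Call the model until it yields a valid JSON object or attempts run out."""
    for _ in range(max_attempts):
        parsed = parse_json_reply(call_model())
        if parsed is not None:
            return parsed
    raise ValueError(f"no valid JSON object after {max_attempts} attempts")


# Offline demo: an empty reply, then a truncated one, then a valid one.
_replies = iter(["", '{"status": "ok"', '{"status": "ok"}'])
result = request_json(lambda: next(_replies))
print(result)
```

Retrying blindly costs tokens, so in production you would likely cap attempts low and log the raw content of each failure.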

9. Thinking-mode constraints

V4 offers three reasoning effort modes on either model ID. Thinking is enabled per request — there is no separate “reasoner” model in V4. The response returns reasoning_content alongside the final content. Two practical limits:

- Thinking mode does not support the temperature, top_p, presence_penalty, or frequency_penalty parameters; setting these will not trigger an error but will also have no effect. If you tuned a system around temperature=0 determinism in non-thinking mode, that knob disappears in thinking mode.

- Think Max requires a context window of at least 384K tokens; the recommended sampling parameters across all modes are temperature=1.0, top_p=1.0. Allocating only 128K (the V3 default) will silently truncate.
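Because both limits fail silently, a pre-flight check in your own client code can surface them. The following is a sketch under stated assumptions: `check_thinking_request` and its `thinking`, `think_max` and `context_window` arguments are hypothetical and do not mirror real API parameters, and "384K" is read here as 384,000 tokens.

```python
from typing import Any, Dict, List

# Parameters that are accepted but have no effect in thinking mode.
IGNORED_IN_THINKING = ("temperature", "top_p", "presence_penalty", "frequency_penalty")

# Assumption: "384K" means 384,000 tokens; adjust if the docs mean 384 * 1024.
THINK_MAX_MIN_CONTEXT = 384_000


def check_thinking_request(
    params: Dict[str, Any],
    thinking: bool,
    think_max: bool = False,
    context_window: int = 0,
) -> List[str]:
    """Return human-readable warnings for settings that would silently misbehave."""
    warnings: List[str] = []
    if not thinking:
        return warnings
    ignored = [name for name in IGNORED_IN_THINKING if name in params]
    if ignored:
        warnings.append("ignored in thinking mode: " + ", ".join(ignored))
    if think_max and context_window < THINK_MAX_MIN_CONTEXT:
        warnings.append(
            f"Think Max needs >= {THINK_MAX_MIN_CONTEXT} tokens of context, "
            f"got {context_window}"
        )
    return warnings


warnings = check_thinking_request(
    {"temperature": 0, "max_tokens": 512},
    thinking=True,
    think_max=True,
    context_window=128_000,
)
for line in warnings:
    print(line)
```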

10. Self-hosting needs serious GPU infrastructure

The MIT license on V4 weights is a real win for sovereignty, but the hardware bill is sobering. Pro is 865GB on Hugging Face and Flash is 160GB, so even in FP4+FP8 mixed precision the memory requirements are substantial.

Flash is borderline runnable on a high-end workstation; Pro effectively requires a multi-GPU server. Use the hardware calculator before ordering kit, and read the system requirements guide. For most teams, the cost-effective answer is the hosted API plus the open weights kept as a contingency.
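For a first sanity check before opening the hardware calculator, you can divide the checkpoint size by per-GPU memory. This is a deliberately crude lower bound: it counts weights only and ignores KV cache, activations and framework overhead, and the 80 GB per-GPU figure is an assumption chosen for illustration, not a recommendation.

```python
import math

# Checkpoint sizes quoted in this article (GB on Hugging Face).
CHECKPOINT_GB = {"V4-Pro": 865, "V4-Flash": 160}

# Assumption: accelerators with 80 GB of memory each.
GPU_MEMORY_GB = 80


def min_gpus_for_weights(checkpoint_gb: float, gpu_memory_gb: float) -> int:
    """Smallest GPU count whose combined memory holds the weights alone."""
    return math.ceil(checkpoint_gb / gpu_memory_gb)


estimate = {
    name: min_gpus_for_weights(size, GPU_MEMORY_GB)
    for name, size in CHECKPOINT_GB.items()
}
print(estimate)
```

Under these assumptions the floor is 11 GPUs for Pro and 2 for Flash, and real deployments need headroom on top of that.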

How limitations differ between the chat, the app and the API

| Surface | Conversation memory | Multimodal | Privacy posture |
|---|---|---|---|
| Web chat (chat.deepseek.com) | Server-side per session | Text only in V4 | Data processed under Chinese law |
| Mobile apps (iOS, Android) | Server-side per session | Text only in V4 | Data processed under Chinese law; some app-store bans |
| Hosted API | Stateless: client resends history | Text only in V4 | Same jurisdiction as chat |
| Self-hosted weights | You build it | Text only in V4 | Whatever you make it |

If you have not picked a surface yet, the browser vs app comparison walks through the trade-offs.

Where these limitations are not a problem

Despite the gaps, V4 is a strong default for several profiles:

- Cost-sensitive coding agents. V4-Pro scores 80.6% on SWE-bench Verified, within 0.2 points of Claude Opus 4.6, and costs $0.87 promo / $3.48 list per million output tokens versus Claude Opus 4.6’s $25 per million output (verify on Anthropic’s pricing page as of April 2026). That is roughly a 7× price gap at list price, and wider at the promo rate, at near-identical coding benchmark performance.

- Long-document workloads. The 1M-token default context with output up to 384K tokens handles entire codebases or long PDFs in a single call.

- Math and formal reasoning. On Putnam-200 Pass@8 with minimal tools, V4-Flash-Max scores 81.0, compared to 35.5 for Seed-2.0-Pro and 26.5 for Gemini-3-Pro; on Putnam-2025 V4 reaches a proof-perfect 120/120.

- Sovereignty-conscious deployments willing to self-host the MIT-licensed weights.

Verdict

DeepSeek V4’s limitations are real but bounded. Text-only input and Chinese-jurisdiction privacy posture are the two that will rule it out for some teams immediately. The benchmark gap on world knowledge is narrowing each generation but still favours GPT-5 and Gemini for factual recall. Pricing remains a structural advantage, and the open weights protect you from vendor lock-in. For a fuller scorecard see the DeepSeek review or browse the DeepSeek beginner guides hub.

DeepSeek AI Guide is an independent resource and is not affiliated with DeepSeek or its parent company. Model IDs, pricing and API behaviour change; check the official DeepSeek documentation and pricing page before committing to a production decision.

Frequently asked questions

What are the main DeepSeek limitations in 2026?

The headline DeepSeek limitations are: V4 is text-only with no native image, audio or video input; world-knowledge benchmarks trail GPT-5.4 and Gemini 3.1 Pro by 3 to 6 months on DeepSeek’s own assessment; the API is stateless; and conversations are processed under Chinese jurisdiction. Pricing remains a structural advantage. See the full breakdown in our DeepSeek review.

Is DeepSeek safe for confidential business data?

For genuinely confidential data, the hosted DeepSeek API and chat are not appropriate, because traffic is processed in jurisdictions subject to Chinese law and law-enforcement access is possible under legal process. The mitigation is to self-host the MIT-licensed V4 weights inside your own perimeter. Read the privacy explainer for the full threat model.

Does DeepSeek V4 support images or voice?

No. V4-Pro and V4-Flash are both text-only as of April 2026; DeepSeek has stated multimodal capabilities are in development. For vision tasks today, the older DeepSeek VL2 family is the in-house option, but it is a separate model with its own quality and licensing trade-offs. If multimodality is critical, keep a GPT-5 or Gemini fallback.

Why does the DeepSeek API “forget” my conversation?

Because POST /chat/completions is stateless by design — DeepSeek does not store prior turns on the server. The web chat keeps a session for you; the API does not. To sustain multi-turn conversations, your client must resend the full messages array each call. The context caching guide shows how to reduce the cost of resending long history.

How does DeepSeek V4 compare to GPT-5 and Claude on coding?

V4-Pro is competitive on agentic coding benchmarks: 80.6% on SWE-bench Verified, within 0.2 points of Claude Opus 4.6, at roughly one-seventh the per-token output price. GPT-5.5 has since reopened the gap on Terminal-Bench 2.0. For a side-by-side, see DeepSeek vs Claude or the broader AI comparison hub.

Sources & Methodology

Primary sources

- Official: DeepSeek API documentation. Endpoints, request/response shape, model IDs. Last checked: April 30, 2026

- Pricing: DeepSeek API pricing page. Per-1M-token rates, billing units, cache-hit pricing; the official rates underpinning the cost-comparison guidance. Last checked: April 30, 2026

- Model card: DeepSeek on Hugging Face. Weights, license, usage notes per model. Last checked: April 30, 2026

Technical references

- Technical report: DeepSeek-V3 technical report (arXiv:2412.19437). V3 architecture and training methodology. Last checked: April 30, 2026

- Technical report: DeepSeek-R1 paper (arXiv:2501.12948). R1 reasoning approach, distillation results. Last checked: April 30, 2026

Methodology

Information in this article was checked against the official DeepSeek documentation, the article's cited primary sources, and the deepseekai.guide pricing dataset on the date shown above. Where third-party numbers are quoted, the originating source is linked inline so the reader can verify and re-check.

Data confidence

High for facts cited inline with primary-source links; medium for context narrative.

Editorial note

AI tooling moves quickly. If you spot an outdated number or a broken link, the contact link in the footer is the fastest way to flag it for the editorial team.