A Practitioner’s Guide to DeepSeek for Finance Workflows

If you cover earnings, build DCF models, or sit on a risk desk, the question is no longer whether large language models help — it is which one to put in front of regulated data. This guide walks through DeepSeek for finance the way I actually use it: a 1.6-trillion-parameter open-weight model behind an OpenAI-compatible API, priced low enough that a junior analyst can throw an entire 10-K at it without flinching. I’ll show ten concrete workflows with prompts I run in production, the limitations every CFO should know about, and a costed example so you can budget before procurement asks. By the end you will know exactly where DeepSeek V4 earns its place in a finance stack and where it does not.

The problem finance teams are actually trying to solve

Most finance work is not “ask the AI a clever question.” It is reading the same kinds of documents — 10-Ks, 10-Qs, credit memos, ISDA schedules, board packs — and producing the same kinds of artefacts: variance commentary, a model tab, a risk note, a one-pager. The bottleneck is not creativity; it is the hours spent extracting structured numbers and language from unstructured PDFs, then re-formatting them for someone else’s template.

Three properties matter when picking a model for that work:

- Long context, because filings and credit files are long.

- Cheap tokens, because volume is high and budgets are scrutinised line by line.

- Predictable structured output (JSON, tables) so downstream code can consume it without a regex carnival.

DeepSeek V4 hits all three. DeepSeek-V4 ships as two Mixture-of-Experts models — DeepSeek-V4-Pro with 1.6T parameters (49B activated) and DeepSeek-V4-Flash with 284B parameters (13B activated) — both supporting a context length of one million tokens. Output can run up to 384,000 tokens, which matters when you ask for a full earnings deck-in-prose. For background on the family, see DeepSeek V4.

Which DeepSeek model for which finance task

V4 ships as two tiers. Both are open-weight under the MIT licence, both support the same thinking modes, but they sit at very different price points.

| Model | Best for | Input (cache miss) / 1M | Output / 1M | Context |
|---|---|---|---|---|
| deepseek-v4-flash | Filings extraction, variance commentary, KYC summaries, batch reconciliation | $0.14 | $0.28 | 1M tokens |
| deepseek-v4-pro | Multi-step modelling, complex credit analysis, agentic workflows over a deal room | $0.435 promo / $1.74 list | $0.87 promo / $3.48 list | 1M tokens |
| Legacy deepseek-chat / deepseek-reasoner | Existing integrations until migration | routes to V4-Flash | routes to V4-Flash | 1M tokens |

Rates per DeepSeek’s official pricing page as of April 24, 2026: V4-Flash at $0.0028 cache hit / $0.14 cache miss / $0.28 output per 1M tokens, and V4-Pro at a promotional $0.435 cache miss / $0.87 output (75% off through 2026-05-31; list $0.0145 cache hit / $1.74 cache miss / $3.48 output) per 1M tokens. Confirm against the DeepSeek API pricing page before committing budget. DeepSeek states that deepseek-chat and deepseek-reasoner will be retired after July 24, 2026, 15:59 UTC. If you maintain old integrations, plan a one-line model= swap.

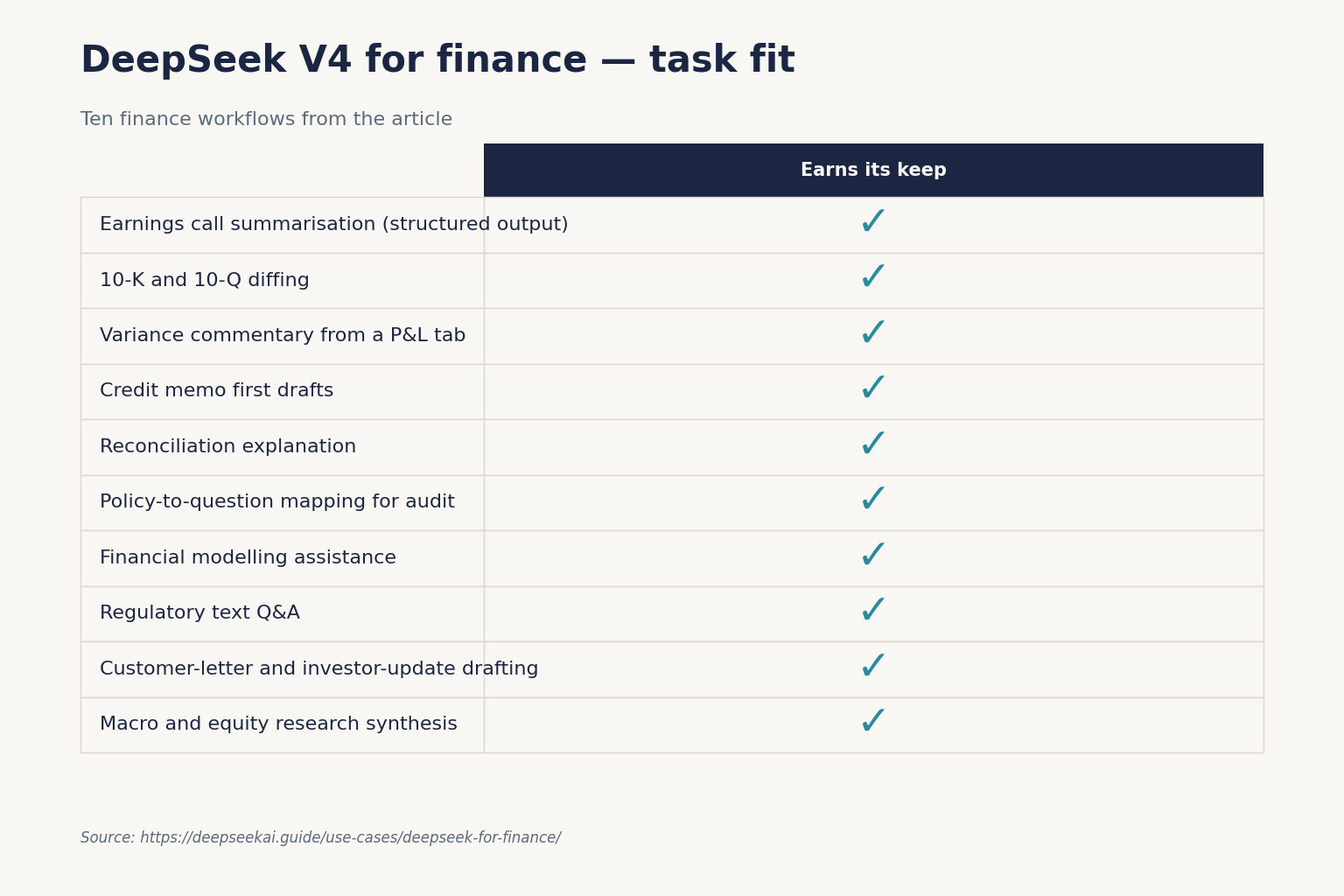

Ten workflows that actually save hours

1. Earnings call summarisation with structured output

Paste the transcript, ask for a JSON object with revenue commentary, guidance changes, and analyst-question themes. Set response_format={"type": "json_object"} and include the word “json” plus a small example schema in the prompt. JSON mode is designed to return valid JSON — handle the occasional empty content and set max_tokens high enough to avoid truncation. See DeepSeek API JSON mode for the full pattern.
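The two failure modes mentioned above (empty content and truncation) are easy to guard against before anything downstream touches the reply. What follows is a minimal sketch, assuming the response follows the OpenAI Chat Completions shape, where a finish_reason of "length" signals that max_tokens was hit. The helper name parse_json_reply is hypothetical, not part of any SDK.

```python
import json


def parse_json_reply(content, finish_reason):
    """Return the parsed JSON object, or raise ValueError with a reason to retry."""
    if finish_reason == "length":
        # The reply hit max_tokens, so the JSON is probably cut off mid-object.
        raise ValueError("truncated: raise max_tokens and retry")
    if not content or not content.strip():
        # JSON mode can occasionally return empty content.
        raise ValueError("empty: retry the request")
    try:
        parsed = json.loads(content)
    except json.JSONDecodeError as exc:
        raise ValueError(f"invalid JSON: {exc}") from exc
    if not isinstance(parsed, dict):
        raise ValueError("expected a JSON object at the top level")
    return parsed
```

In practice I would call it as parse_json_reply(resp.choices[0].message.content, resp.choices[0].finish_reason) and retry once on ValueError before escalating to a human.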

2. 10-K and 10-Q diffing

Drop both filings into a single prompt. With a 1,000,000-token context you can usually fit two consecutive 10-Qs and a 10-K together. Ask for changed risk-factor language, segment-level revenue movements, and any new accounting policies. Use deepseek-v4-flash in non-thinking mode for the first pass; escalate to deepseek-v4-pro only when language is genuinely ambiguous.
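Before sending several filings in one prompt, it helps to sanity-check that they plausibly fit. The sketch below uses a rough four-characters-per-token rule of thumb, which is an assumption and not DeepSeek's tokenizer, so treat the result as a pre-flight estimate only. The function names and the output_reserve default are hypothetical.

```python
def estimate_tokens(text, chars_per_token=4.0):
    """Very rough token estimate; this is NOT DeepSeek's tokenizer."""
    return int(len(text) / chars_per_token) + 1


def fits_in_context(documents, context_limit=1_000_000, output_reserve=16_000):
    """Return (estimated input tokens, whether input plus reserved output fits)."""
    total = sum(estimate_tokens(doc) for doc in documents)
    return total, total + output_reserve <= context_limit
```

If the estimate lands close to the limit, I would drop exhibits or boilerplate sections rather than trust the heuristic.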

3. Variance commentary from a P&L tab

Export the actual-vs-budget tab as CSV, paste it inline, and ask the model to write the standard “favourable / unfavourable / driver” commentary that finance teams produce monthly. Set temperature=1.0, the official guidance for data-analysis work.
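One way to keep the arithmetic out of the model's hands is to compute the variances in code and hand over only labelled figures. The sketch below assumes a CSV with columns named line_item, kind (revenue or cost), actual, and budget. Those column names and both function names are hypothetical, so adapt them to your own export.

```python
import csv
import io


def variance_rows(csv_text):
    """Compute variance and a favourable/unfavourable flag for each P&L line."""
    rows = []
    for rec in csv.DictReader(io.StringIO(csv_text)):
        variance = float(rec["actual"]) - float(rec["budget"])
        # Revenue above budget is favourable; cost below budget is favourable.
        is_revenue = rec["kind"].strip().lower() == "revenue"
        favourable = variance >= 0 if is_revenue else variance <= 0
        rows.append({
            "line_item": rec["line_item"],
            "variance": round(variance, 2),
            "flag": "favourable" if favourable else "unfavourable",
        })
    return rows


def build_variance_prompt(csv_text):
    """Turn the computed rows into a user prompt that forbids new figures."""
    lines = [
        f'{r["line_item"]}: variance {r["variance"]:+,.2f} ({r["flag"]})'
        for r in variance_rows(csv_text)
    ]
    header = (
        "Write variance commentary naming the likely driver for each line. "
        "Use only these figures:\n"
    )
    return header + "\n".join(lines)
```

The model then writes the narrative around numbers it did not have to calculate.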

4. Credit memo first drafts

Feed the model the borrower’s last three years of financials, recent news, and the term sheet. Ask for a draft credit memo following your template (paste the template in the system prompt — it caches well across borrowers). The cached system prompt is what makes the volume affordable.
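To give the template the best chance of being served from cache, keep it byte-identical at the front of every request and push everything borrower-specific into the user message. The sketch below shows that ordering, assuming caching keys on a shared leading prefix. The function name and its parameters are hypothetical.

```python
def build_memo_messages(template, borrower, financials, news, term_sheet):
    """Put the shared template first so every borrower's request starts identically."""
    system = "You draft credit memos. Follow this template exactly:\n\n" + template
    user = (
        f"Borrower: {borrower}\n\n"
        f"Financials (last three years):\n{financials}\n\n"
        f"Recent news:\n{news}\n\n"
        f"Term sheet:\n{term_sheet}"
    )
    return [
        {"role": "system", "content": system},
        {"role": "user", "content": user},
    ]
```

Avoid interpolating dates, borrower names, or request IDs into the system message, since any change there alters the prefix.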

5. Reconciliation explanation

When two ledgers disagree, paste both extracts and ask the model to identify likely categories of difference (timing, FX, reclass). It will not solve the reconciliation, but it shrinks the haystack.
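It usually pays to strip out exact matches in code first, so the model only sees the breaks. Here is a minimal sketch, assuming each ledger extract is a list of dicts with ref and amount keys (hypothetical field names) and that matching on the pair is good enough for a first pass.

```python
from collections import Counter
from decimal import Decimal


def unmatched_entries(ledger_a, ledger_b):
    """Return (only_in_a, only_in_b) as lists of (ref, amount) pairs."""
    def key(entry):
        # Decimal avoids float rounding noise when comparing amounts.
        return (entry["ref"], Decimal(str(entry["amount"])))

    count_a = Counter(key(e) for e in ledger_a)
    count_b = Counter(key(e) for e in ledger_b)
    # Counter subtraction keeps only the surplus on each side, so duplicates
    # are matched one-for-one rather than collapsed.
    only_a = list((count_a - count_b).elements())
    only_b = list((count_b - count_a).elements())
    return only_a, only_b
```

Paste only the two unmatched lists into the prompt and ask for likely categories; the token bill and the noise both drop.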

6. Policy-to-question mapping for audit

Paste an internal control policy and a list of audit questions. Ask for a mapping table showing which paragraph of the policy answers which question, and where gaps exist. This is mechanical work that auditors hate and the model handles in seconds.

7. Financial modelling assistance

For complex multi-step tasks, such as building a three-statement model from a prospectus or sanity-checking covenant headroom under stress scenarios, switch to deepseek-v4-pro with thinking enabled. On coding benchmarks, DeepSeek V4-Pro leads Claude on Terminal-Bench 2.0 (67.9% vs 65.4%) and LiveCodeBench (93.5% vs 88.8%), and posts a Codeforces rating of 3206 where Claude has no reported score. That coding strength translates directly to writing solid Excel formulas, Python pandas snippets, and SQL.

8. Regulatory text Q&A

Paste the relevant portions of Basel III, IFRS 9, or your local equivalent and ask precise questions. The 1M-token context means you can include enough surrounding text that the model’s answer is grounded in what you gave it rather than its training data — important for anything regulator-facing.

9. Customer-letter and investor-update drafting

Paste the underlying numbers and bullet points. Ask for the letter in your firm’s voice (paste two prior letters as style examples). Use temperature=1.3 for general-conversation tone or 1.5 for more creative copy.

10. Macro and equity research synthesis

Drop in five sell-side notes plus a transcript and ask for the consensus view, the dissenting view, and what would have to be true for each to be right. For more on long-form synthesis, see DeepSeek for research.

A minimal Python integration

DeepSeek’s API is OpenAI-compatible. Chat requests hit POST /chat/completions, the OpenAI-compatible endpoint, and the only changes from a vanilla OpenAI client are base_url and api_key. The API is also Anthropic-compatible at the same base URL, so existing Anthropic SDK code works with a key swap.

Here is a minimal Python example for an earnings-summary call:

    from openai import OpenAI

    # Placeholder: load the earnings-call transcript as a string before calling the API.
    transcript_text = "..."

    client = OpenAI(
        base_url="https://api.deepseek.com",
        api_key="sk-...",
    )

    resp = client.chat.completions.create(
        model="deepseek-v4-flash",
        messages=[
            {"role": "system", "content": "You extract structured data from earnings calls. Respond in json."},
            {"role": "user", "content": transcript_text + "\n\nReturn json: {revenue_commentary, guidance, themes}"},
        ],
        response_format={"type": "json_object"},  # JSON mode
        temperature=1.0,
        max_tokens=4000,  # keep high enough that the JSON is not truncated
    )

    print(resp.choices[0].message.content)

The API is stateless — you must resend the conversation history with each request. The web chat at chat.deepseek.com keeps session history for you, but anything you build through the API stores state on your side. Walk-through: DeepSeek API getting started.
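Because each request is independent, a multi-turn session needs a small client-side history holder. The sketch below assumes an OpenAI-style client object passed in by the caller; the Conversation class is a hypothetical helper, not something the SDK provides.

```python
class Conversation:
    """Keeps chat history client-side, since each API request is independent."""

    def __init__(self, system_prompt):
        self.messages = [{"role": "system", "content": system_prompt}]

    def ask(self, client, question, model="deepseek-v4-flash"):
        """Send the full history plus the new question, then record the answer."""
        self.messages.append({"role": "user", "content": question})
        resp = client.chat.completions.create(
            model=model,
            messages=list(self.messages),  # copy so later appends do not alter the request
        )
        answer = resp.choices[0].message.content
        self.messages.append({"role": "assistant", "content": answer})
        return answer
```

Every turn resends the whole history, so long sessions cost more per call; trim or summarise old turns if that starts to show up in the bill.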

Thinking mode for harder questions

Thinking mode is a request parameter, not a separate model. Add reasoning_effort="high" with extra_body={"thinking": {"type": "enabled"}}, or reasoning_effort="max" for maximum effort. The response returns reasoning_content alongside the final content, so you can log or hide the trace as your compliance posture demands.
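Those parameters can be wrapped in a small helper. The sketch below assumes the thinking switch travels in extra_body for both effort levels (the text above only states this explicitly for "high") and that the trace comes back as a reasoning_content attribute on the message. Both function names are hypothetical.

```python
def thinking_kwargs(effort="high"):
    """Extra keyword arguments that switch a chat request into thinking mode."""
    if effort not in ("high", "max"):
        raise ValueError("effort must be 'high' or 'max'")
    return {
        "reasoning_effort": effort,
        "extra_body": {"thinking": {"type": "enabled"}},
    }


def split_reply(message):
    """Return (reasoning trace, final answer); the trace is None if absent."""
    return getattr(message, "reasoning_content", None), message.content
```

Usage would look like client.chat.completions.create(model="deepseek-v4-pro", messages=msgs, **thinking_kwargs("max")), followed by split_reply(resp.choices[0].message) so the trace can be logged separately from the answer.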

What a real workload costs

Suppose you summarise 1,000,000 earnings-related documents in a year on deepseek-v4-flash: a 2,000-token cached system prompt with templates, a 200-token user message per call, and a 300-token JSON response.

| Bucket | Tokens | Rate / 1M | Cost |
|---|---|---|---|
| Cached input | 2,000,000,000 | $0.0028 | $5.60 |
| Uncached input | 200,000,000 | $0.14 | $28.00 |
| Output | 300,000,000 | $0.28 | $84.00 |
| Total | | | $117.60 |

The same workload on deepseek-v4-pro at list price would total $1,421.00, roughly 12× more. For most filings work, V4-Flash is the right default. Reserve V4-Pro for the small fraction of tasks where the benchmark lift earns its keep. For more on prefix caching, see DeepSeek context caching.
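The totals above are simple enough to reproduce in a few lines, which is handy when procurement asks for a different volume. This sketch hard-codes the rates quoted in this article, using the V4-Pro list price rather than the promotional rate. The names RATES_PER_MILLION and workload_cost are hypothetical.

```python
# (cache hit, cache miss, output) in USD per 1M tokens, as quoted in this article.
RATES_PER_MILLION = {
    "deepseek-v4-flash": (0.0028, 0.14, 0.28),
    "deepseek-v4-pro": (0.0145, 1.74, 3.48),  # list price, not the promotional rate
}


def workload_cost(model, calls, cached_in, uncached_in, out):
    """Annual cost in USD for `calls` requests with the given per-call token counts."""
    hit, miss, output = RATES_PER_MILLION[model]
    per_call = cached_in * hit + uncached_in * miss + out * output
    return round(calls * per_call / 1_000_000, 2)


flash = workload_cost("deepseek-v4-flash", 1_000_000, 2_000, 200, 300)
pro = workload_cost("deepseek-v4-pro", 1_000_000, 2_000, 200, 300)
print(flash, pro)  # 117.6 1421.0
```

Re-check the rate table against the official pricing page before reusing it, since the promotional window changes the V4-Pro figures.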

Limitations every finance team should plan around

- Hallucinated numbers. Never let the model invent a figure. Paste the source data and ask it to extract or transform — not recall. If a number is not in the prompt, the answer is “unknown.”

- Real-time data. DeepSeek does not have live market access via the API. Pipe quotes and rates in yourself.

- Factual recall gaps. A SimpleQA-Verified score of 57.9% versus Gemini’s 75.6% points to a meaningful gap in factual recall. If your use case requires accurate real-world knowledge recall, Gemini holds a clear edge. Treat DeepSeek as a transformation engine, not an encyclopaedia.

- Math at the extremes. On HMMT 2026, Claude (96.2%) and GPT-5.4 (97.7%) pull ahead of V4-Pro (95.2%), so V4-Pro trails slightly on the hardest mathematical reasoning tasks. For institutional-grade quant work, validate critical computations in code rather than asking the model to do arithmetic in prose.

- Data residency. The DeepSeek API processes requests on infrastructure subject to Chinese law. Many regulated finance shops will not accept that for client data; check with your CISO before piping in anything PII or MNPI. See DeepSeek privacy.

- Audit trail. Stateless API calls mean you must log prompts and responses yourself for SOX or MiFID II purposes. Build that into the wrapper from day one.
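The hallucinated-numbers rule above can be partly enforced mechanically: flag any figure in the model's output that does not appear in the source you pasted. The sketch below is a coarse screen under stated assumptions. It compares plain decimal numbers only, ignores units and signs, and will also flag legitimately derived figures such as percentages the model computed. The function names are hypothetical.

```python
import re
from decimal import Decimal

# Matches integers and decimals with optional thousands separators, e.g. 1,200.5
_NUMBER = re.compile(r"\d[\d,]*(?:\.\d+)?")


def _numbers(text):
    """Set of numeric values found in text, with commas stripped."""
    return {Decimal(m.group().replace(",", "")) for m in _NUMBER.finditer(text)}


def ungrounded_numbers(source_text, model_output):
    """Numbers that appear in the model output but nowhere in the source."""
    return sorted(_numbers(model_output) - _numbers(source_text))
```

Anything this returns goes to a human for review rather than straight into a deck.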

Where other tools beat DeepSeek for finance sub-tasks

Honest engineering says: do not run everything through one model.

- Heavy multi-step coding agents in a regulated environment. Claude’s app surface and tool-use ergonomics still win for some teams. See DeepSeek vs Claude.

- Deep factual lookup over the open web. Gemini’s grounding to Google search is hard to match.

- Self-hosted on-prem deployment for the most sensitive desks. Both V4 tiers are MIT-licensed open weights, but inference at 1.6T parameters is non-trivial; smaller alternatives may fit better. The DeepSeek alternatives hub catalogues options.

Getting started for a finance team

- Spin up an API key in a sandbox account and verify the OpenAI SDK pattern works against https://api.deepseek.com.

- Build one wrapper that logs every prompt and response to your own datastore. Compliance will thank you.

- Pick a single workflow — variance commentary is a good starter — and put it behind that wrapper.

- Measure cost with the DeepSeek pricing calculator; revisit the V4-Flash vs V4-Pro split monthly as volumes grow.

- Only then expand into adjacent workflows. Resist the urge to build “an AI platform” before you have one workflow paying for itself.
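The logging wrapper from the list above can start very small. Here is a minimal sketch, assuming an OpenAI-style client and any writable text file object as the log sink; in production you would swap the file for your own datastore. The function name logged_completion and the record layout are hypothetical.

```python
import json
import time


def logged_completion(client, log_file, **request):
    """Call the chat endpoint and append the prompt and response as one JSON line."""
    resp = client.chat.completions.create(**request)
    record = {
        "ts": time.time(),  # Unix timestamp of when the response was received
        "request": request,  # model, messages, and any other parameters sent
        "response": resp.choices[0].message.content,
    }
    # default=str keeps the log write from failing on non-JSON-serialisable values.
    log_file.write(json.dumps(record, sort_keys=True, default=str) + "\n")
    return resp
```

As written it logs only successful calls; a fuller version would also record exceptions and request IDs, which is worth agreeing with compliance before the first workflow goes live.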

For broader context on the use-case landscape, browse the DeepSeek use cases hub.

DeepSeek AI Guide is an independent resource and is not affiliated with DeepSeek or its parent company. Model IDs, pricing and API behaviour change; check the official DeepSeek documentation and pricing page before committing to a production decision.

Is DeepSeek safe to use for confidential financial data?

Treat it the way you would any cloud API based outside your jurisdiction. DeepSeek processes requests on infrastructure in China, so most regulated finance shops will not send client PII or MNPI through the public API. For internal documents and public filings the calculation is different but still requires sign-off from your CISO and DPO. Read the DeepSeek privacy overview before scoping a pilot.

How much does DeepSeek cost for a typical finance team?

For document-heavy workflows like filings extraction and variance commentary, deepseek-v4-flash at $0.14 per million input (cache miss) and $0.28 per million output tokens keeps a small team’s monthly bill in the low hundreds of dollars. Heavy agentic workflows on V4-Pro run roughly twelve times higher at list price. Estimate before you build with the DeepSeek cost estimator.

Can DeepSeek replace a financial analyst?

No. It accelerates the mechanical work (extraction, drafting, reconciliation explanation) but does not replace judgement on numbers, context on relationships, or accountability for a recommendation. The right framing is augmentation, not replacement. The DeepSeek for business page covers similar ground for adjacent functions.

What context window does DeepSeek V4 offer for long filings?

Both V4 tiers default to a 1,000,000-token context with output up to 384,000 tokens. That is enough to fit a full 10-K, two 10-Qs, and a research note in a single request. Older models had much smaller windows; if you are still on a legacy ID, plan migration before the July 24, 2026 cutoff. See DeepSeek V4-Pro for the full spec.

Does DeepSeek work with the OpenAI Python SDK?

Yes. The API matches OpenAI’s Chat Completions wire format, so you change only base_url to https://api.deepseek.com and swap in your DeepSeek key. The Anthropic SDK also works against the same base URL. No call-site rewriting is required — see DeepSeek OpenAI SDK compatibility.

Sources & Methodology

Primary sources

- Official: DeepSeek API documentation. Endpoints, request/response shape, model IDs. Last checked: April 30, 2026

- Pricing: DeepSeek API pricing page. Per-1M-token rates, billing units, cache-hit pricing. Last checked: April 30, 2026

Methodology

Information in this article was checked against the official DeepSeek documentation, the article's cited primary sources, and the deepseekai.guide pricing dataset on the date shown above. Where third-party numbers are quoted, the originating source is linked inline so the reader can verify and re-check.

Data confidence

High for facts cited inline with primary-source links; medium for context narrative.

Editorial note

AI tooling moves quickly. If you spot an outdated number or a broken link, the contact link in the footer is the fastest way to flag it for the editorial team.