DeepSeek vs Kimi (2026): Which Open-Weight Model Wins?

You are choosing between two open-weight Chinese frontier models for a real workload (maybe an agent, maybe a coding pipeline, maybe a long-document RAG system), and the marketing pages are not helping. The DeepSeek vs Kimi question matters because both labs have shipped major releases inside a six-month window, both publish weights under permissive licences, and both undercut Western APIs by a wide margin. They are not interchangeable. DeepSeek V4, released April 24, 2026, splits into two tiers built around a million-token context. Kimi K2.6, released a few days earlier, focuses on swarm-based agents and long-horizon coding inside a 256K window. This article gives you the verdict, the numbers, a worked cost example for a typical workload, and a clear decision rule.

Verdict: which one to pick

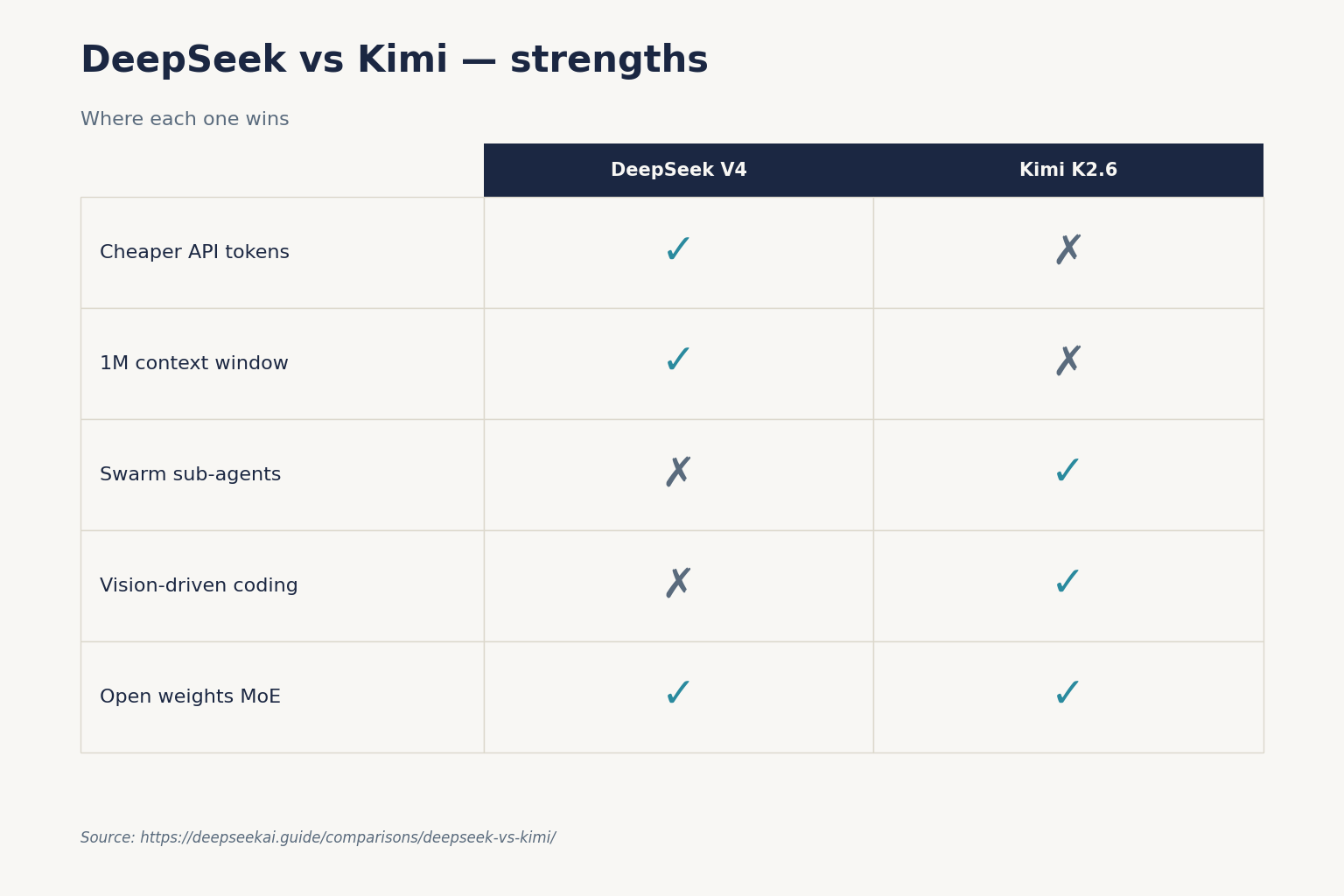

For most workloads, DeepSeek V4-Flash is the cheaper and broader default. Its output tokens cost roughly one-seventeenth of Kimi K2.6’s OpenRouter rate ($0.28 vs $4.655 per 1M), it ships with a 1M-token context out of the box, and it can switch reasoning effort with a single parameter. Pick Kimi K2.6 when your workload is agentic coding with many parallel sub-agents, long-running autonomous sessions, or vision-grounded UI generation. Those are the areas Moonshot has explicitly optimised, and where DeepSeek V4 trades blows rather than dominating.

Both are open-weight Chinese MoE models. Both expose OpenAI- and Anthropic-compatible APIs. The split between them is real but narrow: DeepSeek wins on price, raw context length, and breadth of tier choice; Kimi wins on agent orchestration, multimodal coding, and one-shot long-horizon execution.

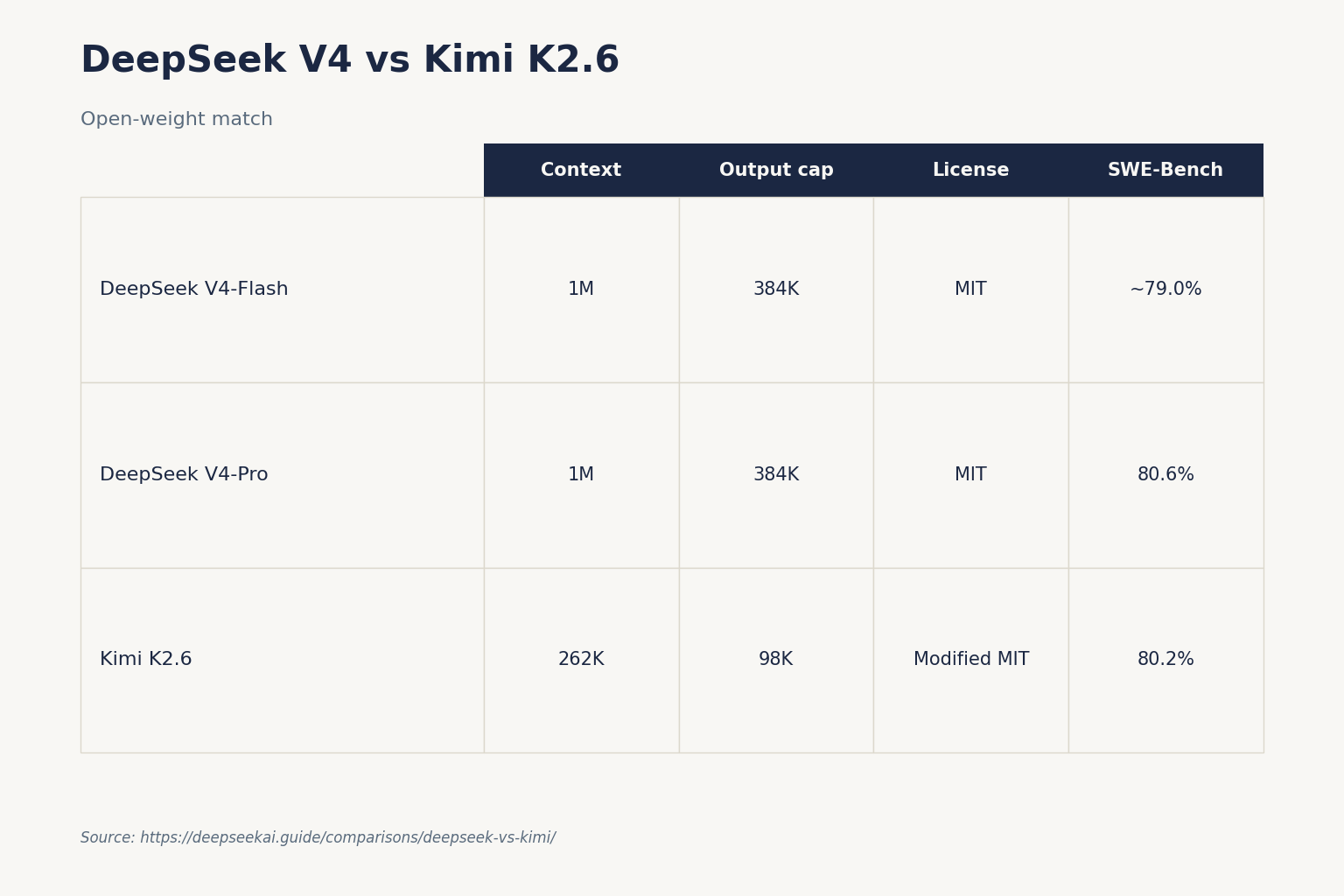

At a glance: DeepSeek vs Kimi specs

The headline numbers, sourced from the labs’ own release materials and pricing pages as of April 25, 2026:

| Feature | DeepSeek V4-Flash | DeepSeek V4-Pro | Kimi K2.6 |
|---|---|---|---|
| Total parameters | 284B (MoE) | 1.6T (MoE) | 1T (MoE) |
| Active per token | 13B | 49B | 32B |
| Context window | 1,000,000 tokens | 1,000,000 tokens | 262,144 tokens |
| Max output | 384,000 tokens | 384,000 tokens | 98,304 tokens (reasoning) |
| Input (cache miss) $/M | $0.14 | $0.435 promo / $1.74 list (75% off through 2026-05-31) | see Pricing section |
| Output $/M | $0.28 | $0.87 promo / $3.48 list (75% off through 2026-05-31) | see Pricing section |
| Weights licence | MIT | MIT | Modified MIT |
| Released | 2026-04-24 | 2026-04-24 | April 2026 |
| SWE-Bench Verified | ~79.0% | 80.6% | 80.2% |
| Terminal-Bench 2.0 | 56.9% | 67.9% | 66.7% |

On SWE-Bench Verified, V4-Pro (80.6%), Kimi K2.6 (80.2%) and Claude (80.8%, per the same DeepSeek report) sit within a percentage point of one another. Across most other benchmarks the gap between the frontier tiers is consistent but not dramatic, roughly 1 to 3 percentage points. Pricing, not benchmarks, is the deciding factor for most teams.

Coding: where each model earns its keep

Both labs have leaned hard into agentic coding for this generation. The differences are in shape, not raw score.

DeepSeek V4-Pro hits 80.6 on SWE-Bench Verified, within a fraction of Claude (80.8) and matching Gemini (80.6), and beats Claude on Terminal-Bench 2.0 in DeepSeek’s own table. Kimi K2.6 reports SWE-Bench Verified (80.2) and Terminal-Bench 2.0 (66.7) on its release post — close enough to V4-Pro that you can treat them as peers on standard benchmarks.

Kimi’s distinct angle is the swarm. Scaling horizontally to 300 sub-agents executing 4,000 coordinated steps, K2.6 can dynamically decompose tasks into parallel, domain-specialized subtasks, delivering end-to-end outputs from documents to websites to spreadsheets in a single autonomous run. That is a massive leap from K2.5’s limit of 100 sub-agents and 1,500 steps. If your application is a long-running coding agent (think: open a ticket, find the bug, write the fix, write the tests, run them), Kimi has a runtime story DeepSeek does not yet match.

DeepSeek’s counter-argument is the million-token context. If your code task involves a large monorepo dumped into the prompt, V4-Flash and V4-Pro hold roughly 4× the context Kimi does, and the FIM (Fill-In-the-Middle) completion endpoint is available in non-thinking mode. For a deeper look at coding-specific trade-offs, see DeepSeek for coding.

Vision and multimodal coding

Kimi K2.6 ships as a native multimodal agentic model that advances practical capabilities in long-horizon coding, coding-driven design, proactive autonomous execution, and swarm-based task orchestration. Moonshot trained vision and text together rather than bolting vision on later, and it shows on UI-from-screenshot tasks. DeepSeek V4 is text-only at preview, with multimodal on the roadmap.

Reasoning: thinking modes compared

Both families let you switch reasoning effort per request. The mechanics differ.

DeepSeek V4. Thinking mode is a request parameter, not a separate model ID. On either deepseek-v4-pro or deepseek-v4-flash, set reasoning_effort="high" with extra_body={"thinking": {"type": "enabled"}} for thinking, or reasoning_effort="max" for the maximum-effort variant. The response then returns reasoning_content alongside the final content. Default behaviour is thinking-enabled; pass extra_body={"thinking": {"type": "disabled"}} to opt out.
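As a rough sketch of the switch described above, the helper below builds the extra keyword arguments for each setting. The function name and the "off" / "high" / "max" labels are hypothetical; the parameter names and values come from the paragraph above. Whether the "max" variant also needs the explicit thinking flag is an assumption here, so check the DeepSeek API documentation before relying on it.

```python
from typing import Any


def deepseek_thinking_kwargs(mode: str) -> dict[str, Any]:
    """Build extra kwargs for client.chat.completions.create().

    `mode` is a label used only in this sketch: "off", "high" or "max".
    """
    if mode == "off":
        # Thinking is on by default, so opting out needs an explicit flag.
        return {"extra_body": {"thinking": {"type": "disabled"}}}
    if mode in ("high", "max"):
        # Assumption: the thinking flag is sent for both effort levels.
        return {
            "reasoning_effort": mode,
            "extra_body": {"thinking": {"type": "enabled"}},
        }
    raise ValueError(f"unknown mode: {mode!r}")


# Usage (not executed here):
# client.chat.completions.create(
#     model="deepseek-v4-pro",
#     messages=[{"role": "user", "content": "Prove the lemma."}],
#     **deepseek_thinking_kwargs("max"),
# )
```

Keeping the switch in one helper means the same call site can serve both cheap non-thinking requests and maximum-effort ones.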

Kimi K2.6. Kimi exposes instant and thinking modes plus a separate preserve_thinking mode, which retains full reasoning content across multi-turn interactions and improves performance in coding-agent scenarios. preserve_thinking is disabled by default. Kimi K2 Thinking, the dedicated reasoning variant, is a native INT4-quantized model with a 256K context window; Moonshot describes the quantization as delivering lossless reductions in inference latency and GPU memory usage. It is what most agent stacks call when they want long deliberation.

For sustained reasoning depth, Kimi has the longer track record: starting with Kimi K2, Moonshot built the model as a thinking agent that reasons step by step while dynamically invoking tools. According to Moonshot, it posted new high marks on Humanity’s Last Exam (HLE), BrowseComp, and other benchmarks by scaling multi-step reasoning depth and maintaining stable tool use across 200–300 sequential calls. DeepSeek V4-Pro with reasoning_effort="max" closes the gap on academic-reasoning benchmarks but trails on raw HLE.

Writing

Both models handle bilingual English-Chinese workloads better than most Western frontier models. In our testing on long-form English drafts, V4-Flash and Kimi K2.6 produce similar prose quality at default settings; Kimi tends to over-structure with bullets and headings, while DeepSeek V4-Flash leans toward continuous paragraphs. For a task like “rewrite this 8K-word document in a different register”, DeepSeek’s longer context window means you can include more reference material in the prompt without chunking. See DeepSeek for writing for prompt patterns we use day to day.

Pricing: the headline reason most teams pick one

API pricing is where the gap opens. All figures are USD per 1M tokens, drawn from the labs’ own published rate cards as of April 25, 2026.

| Model | Input (cache miss) | Input (cache hit) | Output | Source |
|---|---|---|---|---|
| DeepSeek V4-Flash | $0.14 | $0.0028 | $0.28 | DeepSeek pricing page, 2026-04-25 |
| DeepSeek V4-Pro | $0.435 promo / $1.74 list | $0.003625 promo / $0.0145 list | $0.87 promo / $3.48 list | DeepSeek pricing page (75% promo through 2026-05-31) |
| Kimi K2.6 (OpenRouter) | $0.7448 | n/a published | $4.655 | OpenRouter listing, 2026-04-25 |
| Kimi K2.5 (Moonshot) | $0.60 | auto cache discount | $2.50 | Moonshot platform, March 2026 |

Two things to note. First, Moonshot’s own platform pricing for K2.5 still applies in many regions during the K2.6 rollout; the K2.6 figures above are the OpenRouter resale rate. Moonshot AI adjusts rates periodically, so always verify on platform.moonshot.ai before committing. Second, DeepSeek’s V4-Flash output rate ($0.28) is more than an order of magnitude below Kimi K2.6’s listed output rate ($4.655), a ratio of roughly 17×.

For the wider picture, see DeepSeek API pricing.

Worked example: 1M API calls per month

Take a real workload: a SaaS app with a 2,000-token cached system prompt, a 200-token user message and a 300-token response, run a million times in a month. On deepseek-v4-flash the costs work out as follows:

- Cached input: 2,000 × 1,000,000 = 2,000,000,000 tokens × $0.0028/M = $5.60

- Uncached input: 200 × 1,000,000 = 200,000,000 tokens × $0.14/M = $28.00

- Output: 300 × 1,000,000 = 300,000,000 tokens × $0.28/M = $84.00

- Total: $117.60

The same workload on Kimi K2.6 at OpenRouter rates, ignoring cache discounts, comes to roughly:

- Input: 2,200,000,000 tokens × $0.7448/M ≈ $1,638.56

- Output: 300,000,000 tokens × $4.655/M ≈ $1,396.50

- Total: ~$3,035

That is roughly 26× more expensive than V4-Flash for an identical token shape. Kimi’s automatic prefix caching narrows the gap on highly repetitive prompts, but the ratio remains in DeepSeek’s favour for cost-sensitive workloads. If you need the frontier tier, the V4-Pro list version of the same calculation totals $1,421 (or $355.25 during the 75% promo through 2026-05-31) — still well below Kimi’s published K2.6 rate. Build your own scenarios with the DeepSeek pricing calculator.
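The arithmetic above is easy to rerun for your own token shape. Here is a minimal sketch, with the rates hard-coded from the pricing table in this article; the `Rates` class and `monthly_cost` function are illustrative names, and the Kimi entry assumes no cache discount, as in the worked example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rates:
    """USD per 1M tokens."""
    input_miss: float
    input_hit: float
    output: float


# Rates copied from the pricing table above (as of 2026-04-25).
V4_FLASH = Rates(input_miss=0.14, input_hit=0.0028, output=0.28)
V4_PRO_LIST = Rates(input_miss=1.74, input_hit=0.0145, output=3.48)
# No cache-hit rate is published, so cached input is billed at the miss rate.
KIMI_K26_OPENROUTER = Rates(input_miss=0.7448, input_hit=0.7448, output=4.655)


def monthly_cost(rates: Rates, cached_in: int, uncached_in: int,
                 out: int, calls: int) -> float:
    """Cost in USD for `calls` requests with the given per-call token counts."""
    per_call = (cached_in * rates.input_hit
                + uncached_in * rates.input_miss
                + out * rates.output)
    # Rates are per million tokens, so scale the call count accordingly.
    return per_call * calls / 1_000_000


if __name__ == "__main__":
    for name, rates in [("V4-Flash", V4_FLASH),
                        ("V4-Pro (list)", V4_PRO_LIST),
                        ("Kimi K2.6 (OpenRouter)", KIMI_K26_OPENROUTER)]:
        cost = monthly_cost(rates, cached_in=2_000, uncached_in=200,
                            out=300, calls=1_000_000)
        print(f"{name}: ${cost:,.2f}")
```

With the workload above (2,000 cached, 200 uncached, 300 output tokens, one million calls), this should reproduce the totals in the worked example. Swap in current rates before using it for budgeting.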

Privacy and data residency

Both companies are based in China, both process API traffic on Chinese-jurisdiction infrastructure, and both are subject to Chinese law on data access. There is no meaningful privacy advantage one way or the other if your concern is jurisdiction. The mitigations are identical: run the open weights yourself, route through a third-party host (OpenRouter, DeepInfra, Cloudflare Workers AI for Kimi, Fireworks for DeepSeek), or limit what you send.

Kimi K2.6 is live on Workers AI, bringing a 1T-parameter MoE model with 32B active parameters, a 262.1K context window, vision, and agentic capabilities to the Cloudflare Developer Platform. That is a clean way for non-Chinese teams to use Kimi without registering on Moonshot’s platform, where the main friction for international developers is that registration typically requires a Chinese mobile number. DeepSeek’s developer registration is more permissive in our experience, accepting international email and card payments directly.

Ecosystem and developer experience

DeepSeek’s API is OpenAI-compatible at https://api.deepseek.com; chat requests hit POST /chat/completions, the OpenAI-compatible endpoint. The Anthropic SDK works against the same base URL. The API is stateless — every request must resend the full conversation history. The web chat and mobile app keep state for you; the API does not.
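Because the API is stateless, the caller owns the conversation history. One way to handle that, sketched below, is a small helper that appends each turn to a list and resends the whole list every time. `send_turn` is a hypothetical name, and `client` is assumed to be an OpenAI SDK client pointed at the DeepSeek base URL.

```python
from typing import Any

Message = dict[str, str]


def send_turn(client: Any, history: list[Message], user_text: str,
              model: str = "deepseek-v4-flash") -> str:
    """Append the user turn, send the FULL history, and record the reply."""
    history.append({"role": "user", "content": user_text})
    # The server keeps no state: every request carries all prior turns.
    resp = client.chat.completions.create(model=model, messages=history)
    reply = resp.choices[0].message.content
    history.append({"role": "assistant", "content": reply})
    return reply


# Usage (not executed here):
# history = [{"role": "system", "content": "You are a terse assistant."}]
# send_turn(client, history, "What is FIM completion?")
# send_turn(client, history, "Give me an example.")  # resends both turns
```

Since the full history is billed as input on every call, long conversations are where the cache-hit rate starts to matter.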

Minimal Python:

    from openai import OpenAI

    # Point the OpenAI SDK at DeepSeek's OpenAI-compatible endpoint.
    client = OpenAI(base_url="https://api.deepseek.com", api_key="...")

    resp = client.chat.completions.create(
        model="deepseek-v4-flash",
        messages=[{"role": "user", "content": "Summarise this doc."}],
        temperature=1.3,  # DeepSeek's recommended value for general chat
        max_tokens=4096,
    )

    print(resp.choices[0].message.content)

Legacy IDs deepseek-chat and deepseek-reasoner are still accepted and currently route to deepseek-v4-flash, but they retire on 2026-07-24 at 15:59 UTC. Migrating is a one-line model= swap; the base_url does not change. New code should use V4 IDs directly. Walk through it in the DeepSeek API getting started guide.
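If model IDs are scattered through a codebase, a small shim can make the migration explicit. The sketch below maps the legacy IDs to their current routing target and flags use after the retirement time stated above; the function names are hypothetical, and you may prefer to map deepseek-reasoner to a different V4 tier for your own workloads.

```python
from datetime import datetime, timezone
from typing import Optional

# Both legacy IDs currently route to V4-Flash, per the paragraph above.
LEGACY_TO_V4 = {
    "deepseek-chat": "deepseek-v4-flash",
    "deepseek-reasoner": "deepseek-v4-flash",
}
# Retirement time stated above: 2026-07-24 at 15:59 UTC.
LEGACY_RETIREMENT = datetime(2026, 7, 24, 15, 59, tzinfo=timezone.utc)


def resolve_model_id(model_id: str) -> str:
    """Return the V4 ID for a legacy ID; pass other IDs through unchanged."""
    return LEGACY_TO_V4.get(model_id, model_id)


def legacy_id_expired(model_id: str, now: Optional[datetime] = None) -> bool:
    """True if `model_id` is a legacy ID and the retirement time has passed."""
    now = now or datetime.now(timezone.utc)
    return model_id in LEGACY_TO_V4 and now >= LEGACY_RETIREMENT
```

Calling `resolve_model_id` at the single place where requests are built keeps the swap to one line, as described above.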

Kimi’s API mirrors the same OpenAI conventions. Kimi K2.6 is available at https://platform.moonshot.ai through an OpenAI- and Anthropic-compatible API. Kimi additionally ships a CLI for terminal coding workflows and integrations into VS Code, Cursor and JetBrains via partner gateways; that toolchain is more developed today than DeepSeek’s first-party tooling.

Core parameters worth setting on either: temperature (DeepSeek recommends 0.0 for code, 1.3 for general chat, 1.5 for creative writing), top_p, max_tokens, plus tool calling, JSON mode and streaming. JSON mode is designed to return valid JSON, but validity is not guaranteed: include the word “json” plus an example schema in the prompt, and set max_tokens high enough to avoid truncation. See DeepSeek API JSON mode for the failure patterns.
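Since JSON mode is best-effort, it helps to validate the reply before trusting it. A minimal defensive parser might look like the sketch below. `parse_json_reply` is a hypothetical helper; it assumes the OpenAI-style `finish_reason` field, where "length" signals that the reply hit max_tokens.

```python
import json
from typing import Any, Optional


def parse_json_reply(content: Optional[str], finish_reason: str) -> dict[str, Any]:
    """Parse a JSON-mode reply, raising ValueError on the common failures."""
    if finish_reason == "length":
        # Truncated output is almost never valid JSON; retry with more room.
        raise ValueError("reply was truncated; raise max_tokens and retry")
    if not content or not content.strip():
        raise ValueError("empty reply")
    try:
        parsed = json.loads(content)
    except json.JSONDecodeError as exc:
        raise ValueError(f"reply is not valid JSON: {exc}") from exc
    if not isinstance(parsed, dict):
        raise ValueError("expected a JSON object at the top level")
    return parsed


# Usage (not executed here):
# choice = resp.choices[0]
# data = parse_json_reply(choice.message.content, choice.finish_reason)
```

Raising one exception type for every failure keeps the retry logic at the call site simple.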

When to pick which

Pick DeepSeek V4 when

- Cost per token dominates your decision: V4-Flash output ($0.28/M) is roughly 1/17th of Kimi K2.6’s OpenRouter output rate ($4.655/M).

- You need more than 256K of context in a single prompt.

- You want one provider that scales from cheap chat (V4-Flash) to frontier reasoning (V4-Pro with reasoning_effort="max") under one model family.

- Your workload is text-only and benefits from DeepSeek context caching on repeated prefixes.

Pick Kimi K2.6 when

- You are running multi-agent or swarm-style workloads and want native orchestration of hundreds of sub-agents.

- The work is vision-driven coding (UI from screenshot, diagram-to-code).

- You need a model that has stable tool-use across 200–300 sequential calls as a primary design goal.

- Your stack already runs on Cloudflare Workers AI, where K2.6 is a first-class option.
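The two lists above can be condensed into a coarse rule of thumb. The sketch below is one possible encoding, not an official selector: the function name, its inputs and the order of the checks are all assumptions, and it ignores factors such as existing infrastructure.

```python
KIMI_CONTEXT_TOKENS = 262_144  # Kimi K2.6 context window from the specs table


def pick_model(prompt_tokens: int, needs_vision: bool, needs_swarm: bool) -> str:
    """Return a coarse recommendation; a starting point, not a verdict."""
    if prompt_tokens > KIMI_CONTEXT_TOKENS:
        # Only DeepSeek V4 takes the prompt in one piece, but it is text-only.
        if needs_vision:
            return "neither as-is: chunk the prompt for Kimi K2.6"
        return "DeepSeek V4"
    if needs_vision or needs_swarm:
        # Capability requirements outrank price in this sketch.
        return "Kimi K2.6"
    # Text-only work that fits both windows lands on the cheaper default.
    return "DeepSeek V4"
```

For example, `pick_model(500_000, needs_vision=False, needs_swarm=False)` falls through to DeepSeek V4 on context length alone.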

Alternatives worth considering

If neither fits cleanly, the practical comparators are DeepSeek vs Qwen (Qwen has stronger small-model variants and broader multilingual coverage), DeepSeek vs GLM (Z.ai’s GLM family competes on reasoning at similar prices), and DeepSeek vs MiniMax if you want the V4-vs-M2.5 read on agents and long-context coding. For a wider field, see the DeepSeek comparisons hub.

DeepSeek AI Guide is an independent resource and is not affiliated with DeepSeek or its parent company. Model IDs, pricing and API behaviour change; check the official DeepSeek documentation and pricing page before committing to a production decision.

Is DeepSeek better than Kimi for coding?

On SWE-Bench Verified the two are within a percentage point — V4-Pro at 80.6%, Kimi K2.6 at 80.2%. DeepSeek V4-Pro slightly leads Kimi K2.6 on Terminal-Bench 2.0 (67.9% vs 66.7%) per each lab’s release tables. Kimi has the edge on multi-agent swarm orchestration and vision-to-code; DeepSeek has the edge on context length and price. For deeper coding-specific guidance see our DeepSeek for coding guide.

How much cheaper is DeepSeek than Kimi?

For an identical 2,500-token-per-call workload run a million times, DeepSeek V4-Flash totals about $117.60 versus roughly $3,035 on Kimi K2.6 at OpenRouter rates — about 26× cheaper. The gap narrows on V4-Pro and on cache-heavy Kimi workloads, but DeepSeek wins on raw cost in nearly every realistic scenario. Model the exact numbers for your prompt with the DeepSeek cost estimator.

Can I run Kimi and DeepSeek locally?

Yes for both, with serious hardware. DeepSeek V4-Pro (1.6T total parameters) and Kimi K2.6 (1T) are both trillion-parameter-class MoE models that need multi-GPU H100-class nodes at full precision. Quantised builds (4-bit, INT4) fit on smaller setups. DeepSeek V4-Flash, at 284B total and 13B active, is the most practical local-deploy option of the three. See install DeepSeek locally for the full process.
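For a first sanity check on hardware, a back-of-envelope estimate of weight memory is parameters times bits per parameter. The sketch below applies that to the total parameter counts from the specs table. It covers weights only and ignores KV cache, activations and quantisation overhead, so treat the results as lower bounds; the function name is illustrative. Total parameters are used rather than active ones because an MoE model normally keeps every expert in memory.

```python
def weight_memory_gb(total_params: float, bits_per_param: int) -> float:
    """Approximate memory for the weights alone, in GB (10^9 bytes)."""
    return total_params * bits_per_param / 8 / 1e9


if __name__ == "__main__":
    # Total parameter counts from the specs table above.
    models = {
        "DeepSeek V4-Flash": 284e9,
        "DeepSeek V4-Pro": 1.6e12,
        "Kimi K2.6": 1e12,
    }
    for name, params in models.items():
        for bits in (16, 4):
            gb = weight_memory_gb(params, bits)
            print(f"{name} @ {bits}-bit: ~{gb:,.0f} GB")
```

At 4 bits, V4-Flash comes to roughly 142 GB of weights against roughly 800 GB for V4-Pro, which is why it is the practical candidate for a single multi-GPU node.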

What is the context window difference?

DeepSeek V4-Flash and V4-Pro both default to a 1,000,000-token context window with up to 384,000 tokens of output. Kimi K2.6 supports a context length of 262,144 tokens, with up to 98,304 tokens of reasoning output. If your workload reliably needs more than ~256K of input, DeepSeek is the only practical choice. Check token counts beforehand with the DeepSeek context length checker.
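A pre-flight check along these lines can catch oversized requests before they are sent. This is a sketch under two assumptions: the dictionary keys are labels for this example rather than guaranteed API model IDs, and requested output is assumed to count against the context window alongside the prompt.

```python
# (context window, max output) in tokens, from the figures above.
LIMITS = {
    "deepseek-v4-flash": (1_000_000, 384_000),
    "deepseek-v4-pro": (1_000_000, 384_000),
    "kimi-k2.6": (262_144, 98_304),  # label for this sketch only
}


def fits(model: str, prompt_tokens: int, max_output_tokens: int) -> bool:
    """True if the prompt plus the requested output fits the model's limits."""
    context, max_out = LIMITS[model]
    return (max_output_tokens <= max_out
            and prompt_tokens + max_output_tokens <= context)
```

For instance, a 300,000-token prompt with an 8,000-token reply fits both DeepSeek tiers but not Kimi K2.6.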

Are DeepSeek and Kimi both open source?

Both publish weights publicly. DeepSeek V4-Pro and V4-Flash are released under MIT for both code and weights. Kimi K2 Thinking and K2.6 release both the code repository and the model weights under a Modified MIT License, which is slightly more restrictive than pure MIT but commercially friendly. Training data and training code are not public for either lab. For the licensing nuances see is DeepSeek open source.

Sources & Methodology

Primary sources

- Official: DeepSeek API documentation. Endpoints, request/response shape, model IDs. Last checked: April 30, 2026

- Pricing: DeepSeek API pricing page. Per-1M-token rates, billing units, cache-hit pricing; V4-Flash / V4-Pro rates as of 2026-04-25. Last checked: April 30, 2026

- Pricing: Anthropic Claude pricing. Claude API rates for cross-vendor comparisons. Last checked: April 30, 2026

- Pricing: OpenAI API pricing. OpenAI API rates for cross-vendor comparisons. Last checked: April 30, 2026

- Pricing: Google Gemini API pricing. Gemini API rates for cross-vendor comparisons. Last checked: April 30, 2026

- Pricing: OpenRouter, Kimi K2.6 listing. Kimi K2.6 input/output rates via OpenRouter. Last checked: April 30, 2026

Technical references

- Benchmark: SWE-Bench Verified leaderboard. Software-engineering benchmark scores. Last checked: April 30, 2026

Methodology

Pricing was normalised per 1 million input and output tokens against each vendor's official pricing page on the review date. Benchmark scores were treated as directional indicators, not guarantees of real-world performance. Practical comparisons also weighed coding, reasoning, summarisation, and developer-workflow scenarios.

Data confidence

High for official pricing and feature presence; medium for cross-vendor benchmark comparisons; low for subjective workflow verdicts.

Editorial note

Pricing and model line-ups change frequently in this market. The verdicts here are calibrated for the date shown above and should be re-checked before final purchasing decisions.