Using DeepSeek for Customer Support: A Practitioner’s Guide

A support manager I spoke with last month had a familiar problem: 14,000 tickets a week, three time zones, four languages, and an SLA she could no longer hit without burning out the queue. She had tried two AI vendors. Both quoted enterprise prices that ate her margin. That is the gap DeepSeek for customer support is starting to fill. With the V4 Preview release on April 24, 2026, the math has shifted again — `deepseek-v4-flash` lists at $0.14 per million input tokens (cache miss) and $0.28 per million output, which makes deflection bots, draft-reply assistants, and macro-generation cheap enough to deploy across a whole queue rather than a pilot. This guide walks through the workflows that actually pay back, the prompts that work, and where you should not let the model anywhere near your customers.

The concrete problem support teams face

Support leaders are squeezed from three sides at once: ticket volume rises with the product roadmap, agent salaries rise with the labour market, and customers expect answers in minutes regardless of channel. The blunt response — hire more agents — stops working past a certain scale. The subtle response — automate the easy 40%, accelerate the next 40%, and route the hard 20% to humans with full context — is what a usable LLM finally enables.

The reason this is now feasible at small-team budgets is pricing. DeepSeek-V4 Preview is officially live and open-sourced, with DeepSeek-V4-Pro at 1.6T total / 49B active parameters and DeepSeek-V4-Flash at 284B total / 13B active parameters as the fast, efficient, economical choice. Both ship as open-weight Mixture-of-Experts models under MIT, and both default to a 1,000,000-token context window with output up to 384,000 tokens — enough to load an entire knowledge base, ticket history, and product changelog into a single prompt without chunking.

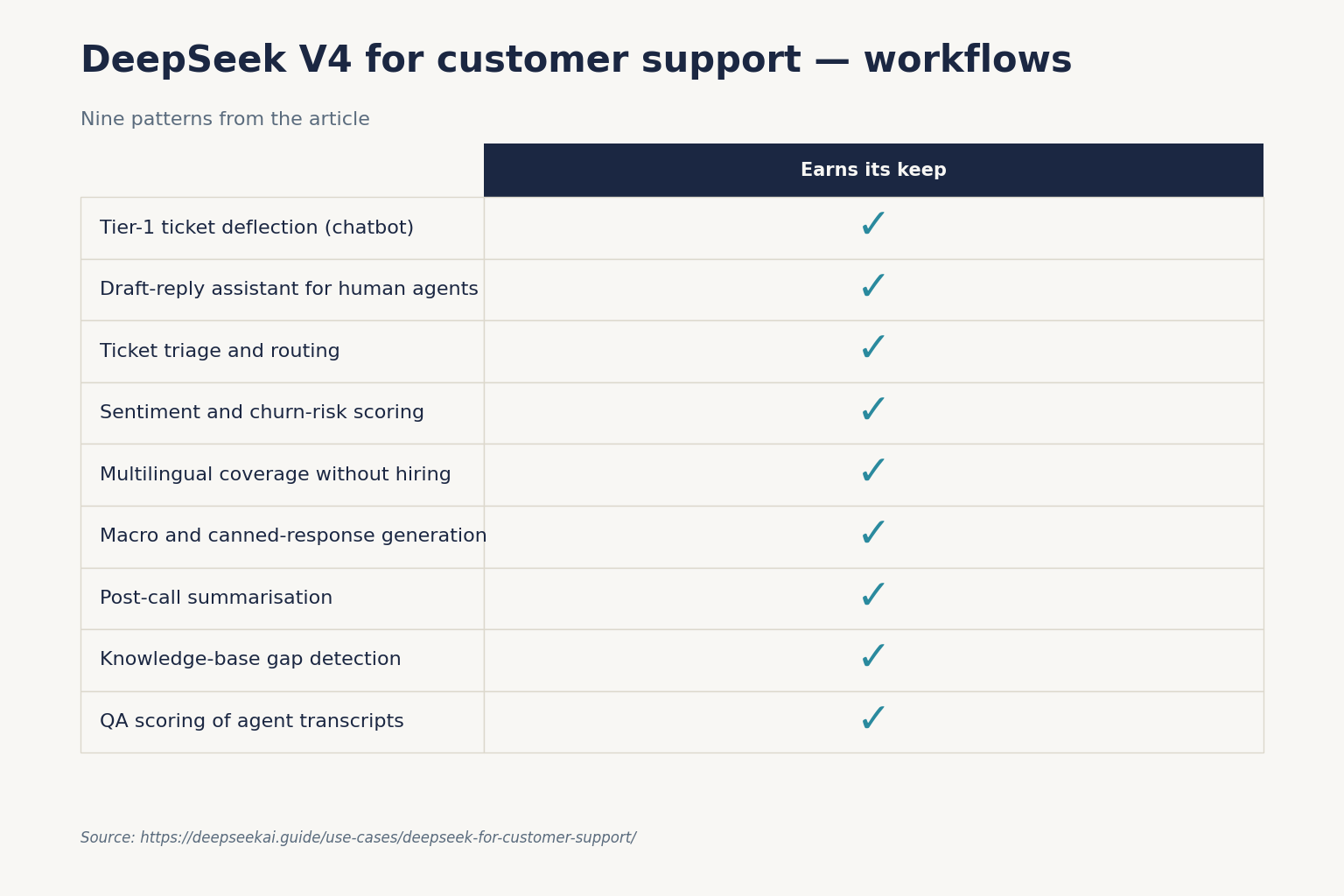

How DeepSeek helps: nine workflows that actually pay back

Below are the workflows I have either deployed in production or evaluated on real ticket data over the past six months. Where it matters, I note a recommended model tier and a prompt pattern.

1. Tier-1 ticket deflection (chatbot)

A retrieval-augmented chatbot on your help centre handles password resets, order status, refund eligibility, and shipping ETAs. Use deepseek-v4-flash in non-thinking mode for latency, paired with a vector store of your knowledge base. The prompt enforces “answer only from these documents; if missing, escalate.”

For the retrieval layer, see our DeepSeek RAG tutorial. For the chatbot wrapper itself, the DeepSeek Streamlit app walkthrough is the fastest way to a working prototype. If your tier-1 queue is order status, refunds and shipping rather than generic SaaS questions, the ecommerce workflow playbook covers the catalogue and review-classification patterns specifically.
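A minimal sketch of the "answer only from these documents; if missing, escalate" rule, assuming your retrieval layer has already returned a list of help-centre passages. The names `build_grounded_messages`, `needs_escalation`, and the `ESCALATE` sentinel are illustrative assumptions, not part of any DeepSeek API.

```python
ESCALATE = "ESCALATE"  # hypothetical sentinel the bot watches for


def build_grounded_messages(question: str, passages: list[str]) -> list[dict]:
    """Build a chat payload that restricts the model to the retrieved passages."""
    # Number the passages so a reviewer can see which one an answer came from.
    docs = "\n\n".join(f"[{i}] {p}" for i, p in enumerate(passages, start=1))
    system = (
        "You are a help-centre assistant. Answer only from the documents below. "
        f"If the answer is not in them, reply with exactly {ESCALATE}.\n\n"
        f"DOCUMENTS:\n{docs}"
    )
    return [
        {"role": "system", "content": system},
        {"role": "user", "content": question},
    ]


def needs_escalation(reply: str) -> bool:
    """True when the reply is empty or the model signalled a knowledge gap."""
    text = reply.strip()
    return not text or text == ESCALATE
```

The returned list goes straight into the `messages` argument of the chat call, and an escalation signal should hand the conversation to a human rather than retry.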

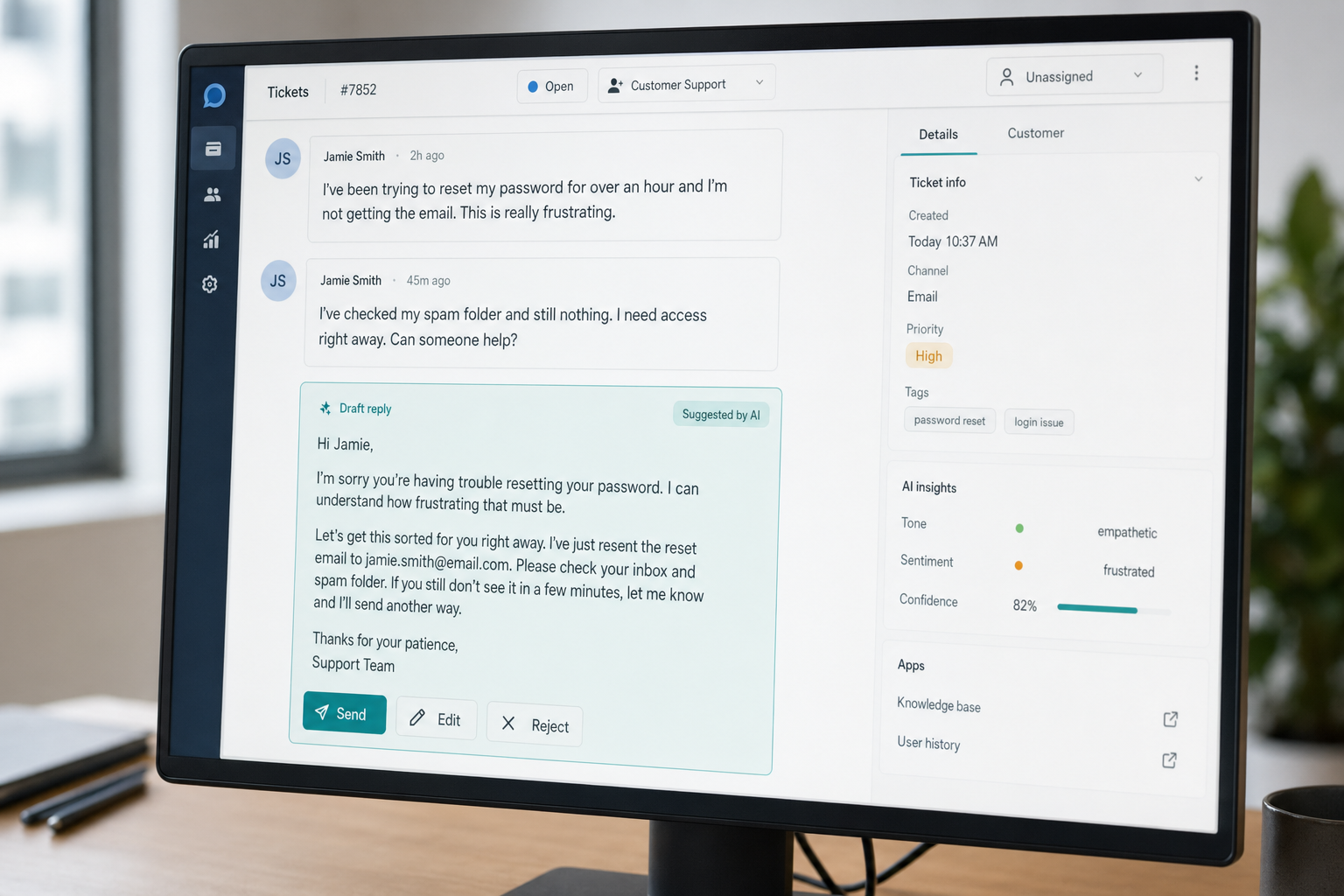

2. Draft-reply assistant for human agents

Rather than auto-sending, the model drafts a reply that the agent reviews, edits, and sends. This is the highest-ROI pattern I have seen — agents handle 2–3× more tickets per hour and quality goes up because the draft enforces tone and includes the right policy links.

3. Ticket triage and routing

Classify each inbound ticket by category, urgency, and required skill. Use JSON mode and a strict schema. Route to the correct queue automatically.
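As a rough sketch of the routing step, the function below validates the classifier's JSON and maps it to a queue, using the category and urgency values from the triage prompt in this guide. The queue names and the rule that critical tickets go to a human are my assumptions; substitute your own.

```python
import json

CATEGORIES = {"billing", "technical", "account", "shipping", "other"}
URGENCIES = {"low", "medium", "high", "critical"}

# Hypothetical queue names; replace with the ones in your helpdesk.
QUEUES = {
    "billing": "billing-queue",
    "technical": "tech-queue",
    "account": "account-queue",
    "shipping": "logistics-queue",
    "other": "general-queue",
}
FALLBACK_QUEUE = "human-triage"


def route_ticket(raw_json: str) -> str:
    """Return the queue for a classifier reply, falling back to humans on any doubt."""
    try:
        data = json.loads(raw_json)
    except (json.JSONDecodeError, TypeError):
        return FALLBACK_QUEUE
    if not isinstance(data, dict):
        return FALLBACK_QUEUE
    category, urgency = data.get("category"), data.get("urgency")
    if not isinstance(category, str) or category not in CATEGORIES:
        return FALLBACK_QUEUE
    if not isinstance(urgency, str) or urgency not in URGENCIES:
        return FALLBACK_QUEUE
    # Critical tickets and anything flagged for a human skip automation.
    if urgency == "critical" or data.get("requires_human") is True:
        return FALLBACK_QUEUE
    return QUEUES[category]
```

Failing closed to a human queue costs a little agent time but keeps malformed model output from silently misrouting a ticket.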

4. Sentiment and churn-risk scoring

Score every conversation for frustration, churn risk, and CSAT prediction. Surface at-risk accounts to customer success before the cancellation email arrives.

5. Multilingual coverage without hiring

The model translates inbound tickets to your agents’ working language and outbound replies back to the customer’s language. Useful for queues with long-tail languages where hiring native speakers is uneconomic.

6. Macro and canned-response generation

Mine your last six months of resolved tickets, cluster them, and have the model draft 50 macros that cover 80% of the volume. Cuts the manual work of building a macro library from weeks to an afternoon.

7. Post-call summarisation

For voice or video support, transcribe the call and have the model produce a structured CRM note: issue, root cause, resolution, follow-up. Stops agents losing 5–8 minutes per call to admin.

8. Knowledge-base gap detection

Run the model over the last 1,000 unresolved tickets and ask it to identify topics where your help centre has no article. Gives the docs team a prioritised backlog.

9. QA scoring of agent transcripts

Score 100% of conversations (rather than the 2–3% a human QA team can review) against your rubric: empathy, accuracy, policy compliance, resolution.
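One way to turn per-criterion scores into a single number is a weighted average. The sketch below assumes the model returns a 1 to 5 score for each of the four rubric criteria; the weights and the review threshold are placeholders I chose for illustration, not recommended values.

```python
# Hypothetical weights for the four rubric criteria; they sum to 1.0.
RUBRIC_WEIGHTS = {
    "empathy": 0.2,
    "accuracy": 0.3,
    "policy_compliance": 0.3,
    "resolution": 0.2,
}


def qa_score(scores: dict[str, int]) -> float:
    """Combine per-criterion scores (1 to 5) into one weighted score."""
    missing = RUBRIC_WEIGHTS.keys() - scores.keys()
    if missing:
        raise ValueError(f"missing rubric criteria: {sorted(missing)}")
    for name in RUBRIC_WEIGHTS:
        if not 1 <= scores[name] <= 5:
            raise ValueError(f"{name} must be between 1 and 5")
    return round(sum(RUBRIC_WEIGHTS[k] * scores[k] for k in RUBRIC_WEIGHTS), 2)


def flag_for_review(scores: dict[str, int], threshold: float = 3.0) -> bool:
    """True when a conversation scores below the (assumed) review threshold."""
    return qa_score(scores) < threshold
```

Flagged conversations go to the human QA team, so their limited review time lands on the transcripts most likely to need it.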

The prompt patterns that work

Triage prompt (JSON mode, non-thinking)

The triage call is the highest-frequency request in a support stack, so use deepseek-v4-flash. Enforce JSON mode and pass the request to POST /chat/completions, the OpenAI-compatible endpoint:

```
You are a triage classifier for ACME Support.

Classify the ticket into a JSON object with this schema:

{
  "category": "billing|technical|account|shipping|other",
  "urgency": "low|medium|high|critical",
  "language": "ISO-639-1 code",
  "requires_human": true|false,
  "summary": "one sentence under 25 words"
}

Reply with json only. Example:

{"category":"billing","urgency":"medium","language":"en","requires_human":false,"summary":"Customer wants a refund for duplicate charge on order 12345."}

TICKET:
<<customer message>>
```

JSON mode is designed to return valid JSON, but validity is not guaranteed. The model can occasionally return empty content, so always handle that case, include the word “json” plus a small example schema in the prompt, and set max_tokens high enough that the response cannot truncate. Our DeepSeek API JSON mode reference covers the failure modes in detail.
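A sketch of how the empty-content and truncation cases might be handled in practice. It assumes you wrap the API call in a zero-argument function (`call_model` here is a hypothetical stand-in) and that a ticket which fails every attempt goes to a human.

```python
import json
from typing import Callable, Optional

REQUIRED_KEYS = {"category", "urgency", "language", "requires_human", "summary"}


def parse_triage(content: Optional[str]) -> Optional[dict]:
    """Return the parsed triage object, or None if the reply is empty or malformed."""
    if not content or not content.strip():
        return None  # JSON mode can occasionally return empty content
    try:
        data = json.loads(content)
    except json.JSONDecodeError:
        return None  # for example, output cut off by a low max_tokens
    if not isinstance(data, dict) or not REQUIRED_KEYS.issubset(data.keys()):
        return None
    return data


def classify_with_retry(
    call_model: Callable[[], Optional[str]], attempts: int = 3
) -> Optional[dict]:
    """Call the model up to `attempts` times; None means hand the ticket to a human."""
    for _ in range(attempts):
        parsed = parse_triage(call_model())
        if parsed is not None:
            return parsed
    return None
```

Keeping the parser separate from the retry loop makes it easy to unit-test against saved bad responses without touching the network.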

Minimal Python call (OpenAI SDK)

Chat requests hit POST /chat/completions, the OpenAI-compatible endpoint. The official OpenAI SDK works against DeepSeek by changing only base_url and api_key:

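```python
from openai import OpenAI

# Placeholders: use the triage prompt above and the text of the inbound ticket.
SYSTEM_PROMPT = "<triage prompt from the previous section>"
ticket_body = "<customer message>"

# Same SDK as OpenAI; only base_url and api_key change.
client = OpenAI(
    base_url="https://api.deepseek.com",
    api_key="YOUR_KEY",
)

resp = client.chat.completions.create(
    model="deepseek-v4-flash",
    messages=[
        {"role": "system", "content": SYSTEM_PROMPT},
        {"role": "user", "content": ticket_body},
    ],
    response_format={"type": "json_object"},  # JSON mode
    temperature=1.0,
    max_tokens=512,  # leave headroom so the JSON cannot truncate
)

# The content may be empty; check for that before parsing.
print(resp.choices[0].message.content)
```

Note the temperature=1.0: DeepSeek’s own guidance is 0.0 for code, 1.0 for data analysis, 1.3 for general conversation, and 1.5 for creative writing. Triage is a data-analysis task. The API also supports streaming, tool calling, and context caching; see DeepSeek API best practices.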

Draft-reply prompt (thinking mode for hard cases)

For complex tickets — refunds with policy edge cases, escalations, multi-step technical issues — switch to thinking mode on deepseek-v4-pro. Set reasoning_effort="high" with extra_body={"thinking": {"type": "enabled"}}. The response then returns reasoning_content alongside the final content, which is useful for QA review of why the model recommended a given resolution.
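A sketch of how the model switch might look in code, assuming you decide per ticket whether it is a hard case. `build_draft_request` is a hypothetical helper; the parameter values are the ones described above, and the commented usage assumes an OpenAI SDK client already pointed at the DeepSeek base URL.

```python
def build_draft_request(system_prompt: str, ticket_body: str, hard_case: bool) -> dict:
    """Return keyword arguments for client.chat.completions.create()."""
    request = {
        "model": "deepseek-v4-pro" if hard_case else "deepseek-v4-flash",
        "messages": [
            {"role": "system", "content": system_prompt},
            {"role": "user", "content": ticket_body},
        ],
    }
    if hard_case:
        # Thinking-mode settings as described in the text above.
        request["reasoning_effort"] = "high"
        request["extra_body"] = {"thinking": {"type": "enabled"}}
    return request


# Usage (needs the openai package, an API key, and network access):
#   resp = client.chat.completions.create(**build_draft_request(prompt, ticket, True))
#   message = resp.choices[0].message
#   reasoning = getattr(message, "reasoning_content", None)  # keep for QA review
#   draft = message.content
```

Building the arguments in one place keeps the Flash and Pro paths identical apart from the thinking settings, which makes cost and quality comparisons between the two easier to trust.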

The API contract you need to know

Three points trip up teams moving from a chat UI to an API integration.

- The API is stateless. Unlike the web chat, which maintains session history, the API does not remember prior turns. Your client must resend the full `messages` array on every request: system prompt, prior turns, latest user message.

- One endpoint, two compatible surfaces. The base URL is `https://api.deepseek.com` and the chat endpoint is `POST /chat/completions`. DeepSeek’s guidance is to keep base_url and just update model to deepseek-v4-pro or deepseek-v4-flash, and the API supports both OpenAI ChatCompletions and Anthropic APIs.

- Legacy IDs are on a clock. deepseek-chat and deepseek-reasoner currently route to deepseek-v4-flash in non-thinking and thinking mode respectively, and will be fully retired and inaccessible after Jul 24th, 2026, 15:59 UTC. Migration is a one-line `model=` swap.
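Because the API is stateless, the client owns the conversation history. The class below is a minimal sketch of that bookkeeping; `Conversation` and its method names are my own, and a production version would also trim or summarise old turns.

```python
class Conversation:
    """Keeps the full message history so every API call can resend it."""

    def __init__(self, system_prompt: str) -> None:
        self.messages: list[dict] = [{"role": "system", "content": system_prompt}]

    def add_user(self, text: str) -> list[dict]:
        """Append a customer turn and return the full payload to send."""
        self.messages.append({"role": "user", "content": text})
        return list(self.messages)  # copy, so callers cannot mutate the history

    def add_assistant(self, text: str) -> None:
        """Record the model's reply so the next request includes it."""
        self.messages.append({"role": "assistant", "content": text})
```

Each call passes the list returned by `add_user` as `messages`, then stores the reply with `add_assistant` before the next customer turn arrives.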

What it actually costs: a worked example

Imagine a mid-market SaaS with 1,000,000 support interactions per month routed through a draft-reply assistant. Each call has a 2,000-token system prompt (cached across calls — stable policy and product context), a 200-token user message (uncached on first read), and produces a 300-token draft.

On deepseek-v4-flash (the default recommendation)

| Bucket | Tokens | Rate per 1M | Cost |
|---|---|---|---|
| Input, cache hit | 2,000,000,000 | $0.0028 | $5.60 |
| Input, cache miss | 200,000,000 | $0.14 | $28.00 |
| Output | 300,000,000 | $0.28 | $84.00 |
| Total | | | $117.60 |

That is $117.60 per month for a million draft replies. Now suppose you escalate 10% of those tickets to deepseek-v4-pro. At the list rates of $0.0145 / $1.74 / $3.48 per million tokens for cache hit / cache miss / output, that 100,000-call slice costs about $142.10 ($2.90 + $34.80 + $104.40), and the remaining 900,000 calls on Flash cost $105.84, for a monthly bill of roughly $248 for the whole queue. At the promotional miss and output rates of $0.435 / $0.87 (75% off through 2026-05-31), the Pro slice drops to $34.80 plus at most $2.90 of cached input, and the whole queue runs to roughly $141 to $144. Reality-check the math against the DeepSeek cost estimator and DeepSeek API pricing page before locking a budget, because Preview pricing can change.

The uncached-input line matters. Each new user message is a cache miss against your stable system prefix, so do not pretend the cache covers everything. Off-peak discounts are not active — DeepSeek discontinued them in September 2025 and did not reintroduce them with V4.
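The arithmetic in the worked example can be reproduced in a few lines of Python. This is a sketch using the Flash rates quoted in this article; the function name `monthly_cost` and its parameters are my own, and the rates should be re-checked against the pricing page before use.

```python
# USD per 1M tokens, as quoted in this article for deepseek-v4-flash.
FLASH_RATES = {"cache_hit": 0.0028, "cache_miss": 0.14, "output": 0.28}


def monthly_cost(
    calls: int,
    cached_in: int,
    uncached_in: int,
    out: int,
    rates: dict[str, float],
) -> float:
    """Monthly USD cost from per-call token counts and per-1M-token rates."""
    per_call = (
        cached_in * rates["cache_hit"]
        + uncached_in * rates["cache_miss"]
        + out * rates["output"]
    ) / 1_000_000
    return round(calls * per_call, 2)


# Example sizing: 1M calls, 2,000 cached + 200 uncached input tokens, 300 output.
print(f"${monthly_cost(1_000_000, 2_000, 200, 300, FLASH_RATES):.2f}")  # $117.60
```

Swapping in a different rates dictionary, or a smaller call count for an escalated slice, gives the per-tier figures for a blended queue.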

Limitations you have to design around

Honest list, from production experience.

- Hallucination on policy edge cases. Without retrieval, the model invents return windows, warranty terms, and SLA language. Every customer-facing reply must ground in your KB through RAG, or be human-reviewed.

- No live state. The API does not know whether your warehouse is on fire today. Pull order, account, and incident state from your systems and inject it into the prompt — do not ask the model to know.

- Privacy and data residency. Conversations sent to the DeepSeek API are processed on servers subject to Chinese law. For regulated workloads — healthcare, financial services, EU government — run open weights on your own infrastructure or pick a different provider. See our DeepSeek privacy writeup for what to disclose to customers.

- Tone drift in long sessions. The stateless API means tone is set fresh by your system prompt each call. If agents customise per ticket, capture those customisations in metadata and re-inject them.

- Preview status. V4 is a Preview release. Do not migrate every workflow on day one; pilot on the lower-risk queues first.
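The "no live state" and "tone drift" points above both come down to re-injecting context on every call. A minimal sketch, assuming you can fetch order and incident state as a dictionary and store agent preferences as short strings; all field names here are hypothetical.

```python
import json


def build_system_prompt(
    base_prompt: str, live_state: dict, agent_notes: list[str]
) -> str:
    """Append current system state and per-ticket agent preferences to the base prompt."""
    # The base prompt goes first so the stable part of the prefix is unchanged between calls.
    sections = [base_prompt.strip()]
    sections.append(
        "CURRENT STATE (from internal systems):\n"
        + json.dumps(live_state, indent=2, sort_keys=True)
    )
    if agent_notes:
        sections.append(
            "AGENT PREFERENCES FOR THIS TICKET:\n"
            + "\n".join(f"- {note}" for note in agent_notes)
        )
    return "\n\n".join(sections)
```

The model then reads the warehouse incident or the delayed shipment from the prompt instead of guessing, and the agent's tone choices survive from one call to the next.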

Where DeepSeek is not the best tool

I run V4 in production and still send some tasks elsewhere. Specifically:

- Voice support with sub-300ms latency targets. Lower-latency providers and dedicated speech models still beat any general LLM on the round trip. Use a hosted speech-to-text plus a small fast model.

- Regulated EU/UK financial advice. A vendor with a documented EU data-processing agreement is the right call regardless of model quality.

- Image-heavy support (returns photos, damage assessment). Use a vision-capable model with explicit image grounding. See DeepSeek vs Claude for a fair side-by-side, and DeepSeek vs ChatGPT for the consumer-app comparison.

Getting started: a two-week pilot plan

- Days 1–2: Get an API key (see how to get a DeepSeek API key) and run the triage prompt above against 1,000 historical tickets. Measure category accuracy against agent labels.

- Days 3–5: Stand up a RAG layer over your help centre. Use the DeepSeek RAG tutorial as the scaffold.

- Days 6–8: Build the draft-reply assistant as an agent-facing tool, not a customer-facing bot. Wire it into your helpdesk via the helpdesk’s macro or app framework.

- Days 9–11: Shadow-mode it. Generate drafts for every live ticket but do not send. Have agents rate the draft quality on a 5-point scale.

- Days 12–14: Cut over the highest-rated category to assisted send. Keep humans in the loop. Measure handle time, CSAT, and reopen rate.
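For the Days 1–2 measurement, a sketch of scoring the model's categories against agent labels. It assumes two equal-length lists, one of predicted categories and one of the labels your agents assigned; the function name is my own.

```python
from collections import Counter


def category_accuracy(
    predicted: list[str], labelled: list[str]
) -> tuple[float, dict[str, float]]:
    """Return overall accuracy and accuracy per agent-assigned category."""
    if not labelled or len(predicted) != len(labelled):
        raise ValueError("need two non-empty lists of equal length")
    totals = Counter(labelled)
    correct: Counter = Counter()
    for guess, truth in zip(predicted, labelled):
        if guess == truth:
            correct[truth] += 1
    overall = sum(correct.values()) / len(labelled)
    per_category = {cat: correct[cat] / count for cat, count in totals.items()}
    return overall, per_category
```

The per-category breakdown is what tells you which queue to cut over first in Days 12–14; a strong overall number can hide one category the model gets badly wrong.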

You can browse adjacent playbooks in our DeepSeek use cases hub — the DeepSeek for business piece pairs well with this one.

DeepSeek AI Guide is an independent resource and is not affiliated with DeepSeek or its parent company. Model IDs, pricing and API behaviour change; check the official DeepSeek documentation and pricing page before committing to a production decision.

How does DeepSeek handle multilingual customer support?

Both V4 models translate and respond fluently across the major European and Asian languages without a separate translation step. In practice, you pass the ticket in its source language and instruct the model to detect language, respond in the same language, and tag the language code in the JSON metadata. For low-resource languages, sample-test before deploying. Our DeepSeek for translation guide covers tested language pairs and quality notes.

What is the difference between V4-Flash and V4-Pro for support workloads?

V4-Flash is the cost-efficient tier (284B total / 13B active parameters) at $0.14 input miss / $0.28 output per million tokens — right for triage, classification, and standard draft replies. V4-Pro is the frontier tier (1.6T / 49B active) at $0.435 / $0.87 during the 75% promo through 2026-05-31 (list $1.74 / $3.48) — reserve it for complex escalations, policy reasoning, or agentic workflows that pay back the roughly 12× output-cost premium. Compare specs in detail at DeepSeek V4-Flash and DeepSeek V4-Pro.

Can DeepSeek connect to Zendesk, Intercom, or Freshdesk?

Not natively — there is no first-party integration. Most teams wrap the API in a small service that listens for new-ticket webhooks from the helpdesk, calls POST /chat/completions, and writes the result back as an internal note or draft. The OpenAI SDK compatibility means most existing helpdesk-AI middleware ports across by changing the base URL. See DeepSeek OpenAI SDK compatibility for the contract.
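A sketch of the core of such a wrapper service, with the helpdesk and model calls passed in as functions so the logic can be tested offline. The payload fields `ticket_id` and `body` are assumptions; each helpdesk has its own webhook shape, so check its documentation.

```python
from typing import Callable


def handle_new_ticket(
    payload: dict,
    draft_reply: Callable[[str], str],
    write_internal_note: Callable[[str, str], None],
) -> bool:
    """Process one new-ticket webhook; True means a draft note was written."""
    ticket_id = payload.get("ticket_id")  # hypothetical field name
    body = (payload.get("body") or "").strip()  # hypothetical field name
    if not ticket_id or not body:
        return False  # nothing to draft from
    draft = draft_reply(body)  # wraps POST /chat/completions
    if not draft or not draft.strip():
        return False  # empty model output: leave the ticket for a human
    write_internal_note(str(ticket_id), draft)
    return True
```

Writing the draft as an internal note rather than a public reply keeps the agent in the loop, which matches the assisted-send approach in the pilot plan.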

Is it safe to send customer PII to the DeepSeek API?

For consumer support in non-regulated sectors, with appropriate disclosure to customers, many teams do. For healthcare, financial services, EU public-sector, or anywhere with strict residency requirements, run open weights on your own infrastructure rather than the hosted API — DeepSeek publishes V4 weights under MIT for exactly this reason. Read our DeepSeek privacy writeup before committing.

How do I estimate monthly cost before I commit?

Take your monthly ticket count, multiply by average tokens per call (system prompt cached + user message uncached + draft output), and apply the V4-Flash rates: $0.0028 cache hit, $0.14 cache miss, $0.28 output per million. A million tickets at the example sizing in this article costs about $117.60 per month on Flash. Use the DeepSeek pricing calculator to plug in your own token volumes.

Sources & Methodology

Primary sources

- Official: DeepSeek API documentation. Endpoints, request/response shape, model IDs. Last checked: April 30, 2026

- Pricing: DeepSeek API pricing page. Per-1M-token rates, billing units, cache-hit pricing. Last checked: April 30, 2026

Methodology

Information in this article was checked against the official DeepSeek documentation, the article's cited primary sources, and the deepseekai.guide pricing dataset on the date shown above. Where third-party numbers are quoted, the originating source is linked inline so the reader can verify and re-check.

Data confidence

High for facts cited inline with primary-source links; medium for context narrative.

Editorial note

AI tooling moves quickly. If you spot an outdated number or a broken link, the contact link in the footer is the fastest way to flag it for the editorial team.