DeepSeek Troubleshooting: Fix the Most Common Chat and API Problems

Your DeepSeek chat hangs on “Server is busy.” Your API call returns a 401 you swear worked yesterday. Your thinking-mode response never arrives. This DeepSeek troubleshooting guide walks through the failures I actually see in production, in the order you should test for them, with exact fixes rather than vague “check your settings” advice.

I run DeepSeek V4-Pro and V4-Flash in live systems today and ran V3, V3.2 and R1 before that. Every step below comes from a real incident or a reproducible error, cross-checked against DeepSeek’s current documentation as of April 24, 2026 — the day the V4 Preview shipped. You will finish this guide knowing how to diagnose web-chat, app, and API problems methodically, and which fixes to try first.

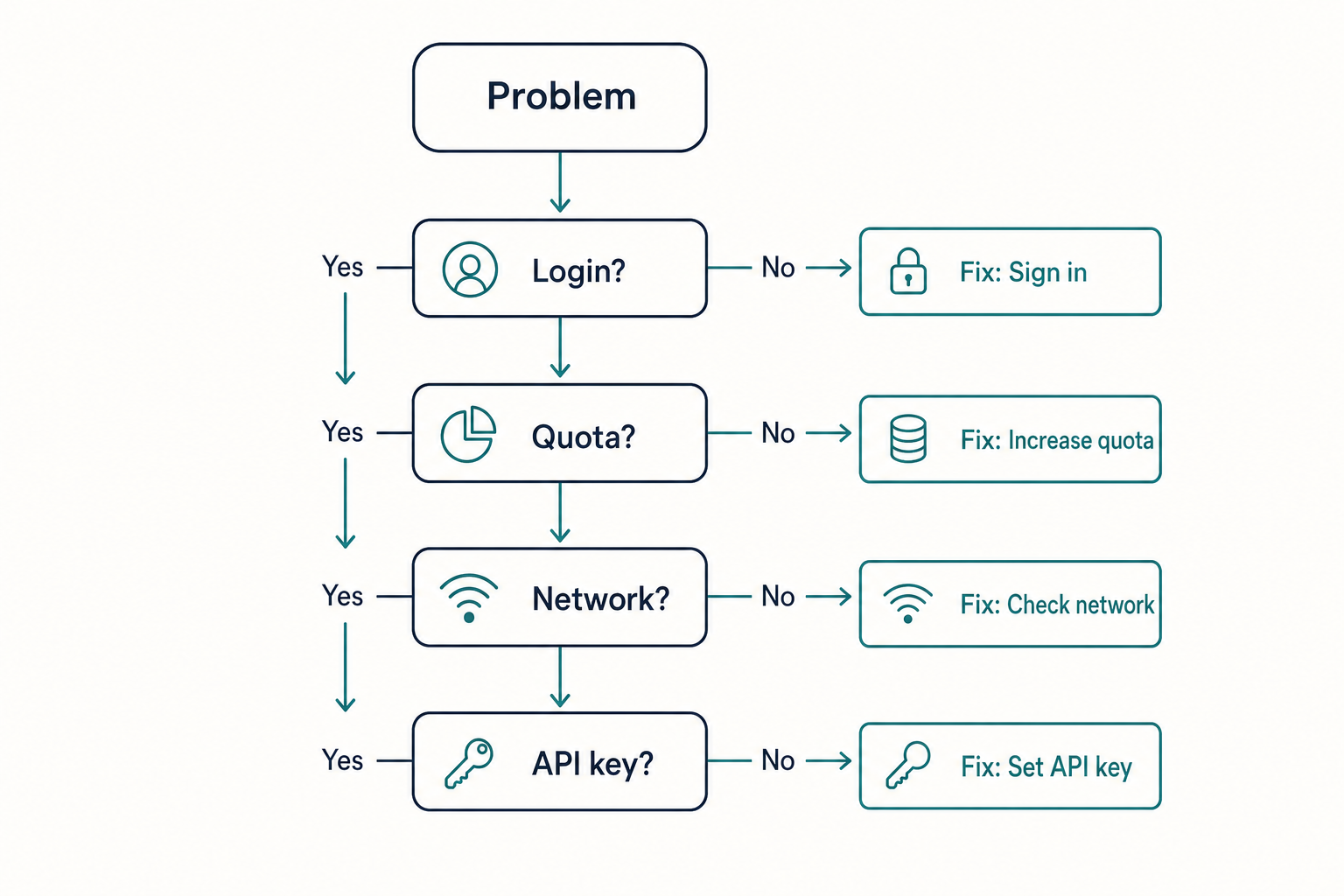

Start here: is it you, the network, or DeepSeek?

Before you change any code or reinstall anything, answer one question: is this a local problem, a network problem, or a service problem? Nearly every “DeepSeek is broken” report falls into one of three buckets, and the fix is different for each.

- Local — browser cache, stale app, bad API key, malformed request.

- Network — ISP routing, VPN, corporate firewall, country-level block.

- Service — DeepSeek outage, regional throttle, model retirement.

A 30-second triage: open chat.deepseek.com in a private/incognito window on mobile data (not Wi-Fi). If it loads, your original browser, cache, or network is the problem. If it still fails, check DeepSeek’s status page and an independent DeepSeek status checker before you touch anything else.

Web chat and mobile app problems

“Server is busy, please try again later”

This is the most reported DeepSeek error, and it almost always means DeepSeek’s inference capacity is temporarily saturated — especially around a big release like the V4 Preview on April 24, 2026. It is not your account. Three practical responses:

- Wait 30–120 seconds and resend. Do not spam retry; that often extends the throttle.

- Switch between Expert Mode and Instant Mode. These map to V4-Pro and V4-Flash respectively; Flash usually clears the queue faster when Pro is hot.

- Shorten your prompt. A 40k-token paste at peak load is much more likely to fail than a 2k-token one.

Login loops, blank chat window, or missing history

If the login screen accepts your credentials but bounces you back, the culprit is usually a stale session cookie or a blocked third-party script. Clear cookies for deepseek.com, disable ad-blockers for that origin, and try again. If you are stuck on 2FA, see the reset DeepSeek password flow.

A registration failure is a different problem from a login loop. If you see “Login failed. Your email domain is currently not supported for registration” while signing up, DeepSeek does not accept your email provider. Sign up with a major international provider such as Gmail, Outlook, Hotmail, or Yahoo; custom domains and some privacy-forwarding services are rejected.

Country or region blocks

DeepSeek is unavailable or restricted in several jurisdictions. Multiple US states, Australia, Taiwan, South Korea, Denmark and Italy introduced bans or other restrictions on DeepSeek-R1 shortly after its release, citing privacy and national security concerns. If the app works on mobile data but not on your corporate Wi-Fi, your employer or country may be filtering the domain. See the detailed DeepSeek availability by country breakdown before assuming a technical fault.

Mobile app crashes or “verify official app” uncertainty

Counterfeit DeepSeek apps are still common on third-party Android stores. If the app crashes on launch, asks for suspicious permissions, or shows an interface that does not match chat.deepseek.com, uninstall and reinstall from the official store listing — the verify official DeepSeek app guide lists the exact publisher names to check for.

API errors: the diagnostic order that saves time

Chat requests hit POST /chat/completions, the OpenAI-compatible endpoint at https://api.deepseek.com. A fast diagnostic rule: 4xx errors usually mean you need to fix something in the request, authentication, or account; 5xx errors usually mean retrying and checking service health matters more than editing your JSON. 429 sits in the middle — it often needs pacing and retry logic, not a full rewrite of your request.
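The rule of thumb above can be written down as a tiny lookup, which is handy in logging or alerting code. This is a minimal sketch: the function name `triage` and the action labels are made up for illustration and are not part of any DeepSeek SDK.

```python
def triage(status: int) -> str:
    """Map an HTTP status code to the first action suggested by the rule above."""
    if status == 429:
        return "pace-and-retry"          # rate limit: back off, lower concurrency
    if 400 <= status < 500:
        return "fix-request"             # request, authentication, or account issue
    if 500 <= status < 600:
        return "retry-and-check-status"  # service side: retry, check health
    return "no-action"                   # 2xx/3xx: nothing to diagnose


for code in (401, 429, 503):
    print(code, triage(code))
```

Checking 429 before the general 4xx branch matters, because 429 is itself a 4xx code and would otherwise be filed under "fix the request".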

Here is the minimal Python smoke test I run whenever anything looks broken. If this passes, the bug is in your application code, not the API:

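```python
from openai import OpenAI

# Point the OpenAI SDK at DeepSeek's OpenAI-compatible endpoint.
client = OpenAI(
    base_url="https://api.deepseek.com",
    api_key="sk-...",  # placeholder: substitute your own key
)

# Smallest useful request: one user message and a tiny output cap.
resp = client.chat.completions.create(
    model="deepseek-v4-flash",
    messages=[{"role": "user", "content": "ping"}],
    max_tokens=16,
)
print(resp.choices[0].message.content)
```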

Error code reference

| Code | Meaning | First thing to check |
|---|---|---|
| 400 | Malformed request | JSON shape, model name, message roles |
| 401 | Authentication failed | API key value, Authorization: Bearer header, environment variable loaded |
| 402 | Insufficient balance | Billing console: top up or check granted balance expiry |
| 422 | Invalid parameter | Parameter type (string vs number), unsupported combo (e.g. logprobs with thinking mode) |
| 429 | Rate limit / TPM exceeded | Back off with exponential retry; lower concurrency |
| 500 | Internal server error | Retry; if persistent, log request ID and contact support |
| 503 | Server overloaded | Wait and retry with jitter; check status page |

For the complete, always-current reference see the DeepSeek API error codes page.

401 Unauthorized — by far the most common

The API could not authenticate your request. Most common causes: a wrong API key, a missing Authorization: Bearer header, an environment variable that never loaded, or a secret that was copied with an extra character or whitespace. Fastest fix: verify that your application is actually sending the right key, in the right header, to the right endpoint. Print the first eight characters of the key your code is using and compare against the key shown in your dashboard — do not print the whole thing to a shared log.
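One way to do that comparison safely is sketched below. The helper names `key_fingerprint` and `key_problems` are illustrative, and the sample key is a made-up string, not a real credential format.

```python
def key_fingerprint(key: str, visible: int = 8) -> str:
    """Return a log-safe preview: the first `visible` characters plus the length."""
    return f"{key[:visible]}... ({len(key)} chars)"


def key_problems(key: str) -> list[str]:
    """List common copy-paste defects found in the key, if any."""
    problems = []
    if not key:
        problems.append("key is empty (environment variable not loaded?)")
    if key != key.strip():
        problems.append("leading or trailing whitespace")
    if any(ch in key for ch in ('"', "'")):
        problems.append("stray quote character")
    return problems


sample = "sk-test1234567890\n"  # made-up key with a trailing newline
print(key_fingerprint(sample.strip()))
print(key_problems(sample))
```

In a real application you would pass in whatever value your code actually sends, for example the string read from your environment variable, rather than a literal.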

429 Rate Limit and 503 Server Overloaded

These are transient. Retry with exponential backoff, jitter, and a cap. Wrap calls in a helper that handles 429 and 5xx only — never auto-retry 400, 401, or 422, because those are logic bugs that will keep failing until you change the request. If you consistently hit 429s under load, see the current rate-limit guidance and batch or stream where possible.
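A retry helper along these lines might look like the sketch below. `ApiError` is a hypothetical exception that carries the HTTP status; with a real SDK you would catch that library's own error types instead. The defaults (five attempts, 1-second base, 30-second cap) are illustrative, not DeepSeek recommendations.

```python
import random
import time


class ApiError(Exception):
    """Hypothetical error type carrying the HTTP status of a failed call."""

    def __init__(self, status: int):
        super().__init__(f"HTTP {status}")
        self.status = status


def is_transient(status: int) -> bool:
    """Only 429 and 5xx are worth retrying; other 4xx codes are logic bugs."""
    return status == 429 or 500 <= status < 600


def with_backoff(call, max_attempts=5, base=1.0, cap=30.0, sleep=time.sleep):
    """Run `call()`, retrying transient failures with capped, jittered backoff."""
    for attempt in range(max_attempts):
        try:
            return call()
        except ApiError as err:
            last_try = attempt == max_attempts - 1
            if not is_transient(err.status) or last_try:
                raise  # fail fast on 400/401/422, or when attempts run out
            # Full jitter: a random wait up to the capped exponential delay.
            sleep(random.uniform(0, min(cap, base * 2 ** attempt)))
```

Passing `sleep` as a parameter keeps the helper easy to test: a test can hand in a stub that records the delays instead of waiting.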

Requests hang forever with no response

Two usual suspects. First, you enabled JSON mode without telling the model what schema to return. Without a clear JSON instruction in the prompt, the API can appear stuck because the model may continue emitting whitespace until it reaches the token limit. Fix: include the literal word “json” and a small example schema in the prompt, and set max_tokens high enough to accommodate the full object. The JSON mode reference spells out the exact pattern.
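As a rough illustration of that fix, the sketch below builds request arguments that name the format and show a small schema. It assumes JSON mode is switched on with the OpenAI-style `response_format` field; the helper name and the example schema are invented for this example.

```python
import json


def json_mode_request(question: str, max_tokens: int = 1024) -> dict:
    """Build chat-completion arguments that mention json and show a schema."""
    example = {"answer": "string", "confidence": 0.0}  # illustrative schema
    system = "Reply in json only, matching this example shape: " + json.dumps(example)
    return {
        "model": "deepseek-v4-flash",
        "messages": [
            {"role": "system", "content": system},
            {"role": "user", "content": question},
        ],
        "response_format": {"type": "json_object"},
        "max_tokens": max_tokens,  # leave room for the whole object
    }


print(json.dumps(json_mode_request("Is the API reachable?"), indent=2))
```

The returned dictionary can be unpacked into an OpenAI-compatible client call, for example `client.chat.completions.create(**json_mode_request("..."))`.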

Second, you set no client-side timeout. DeepSeek can hold a connection open while waiting for inference scheduling, especially in reasoning_effort="max" mode. Always set an explicit timeout (60–300 seconds depending on the request) and retry on timeout rather than letting the call hang.
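The timeout-then-retry pattern can be sketched independently of any SDK. The helper below assumes the wrapped callable accepts a `timeout` keyword and raises the built-in `TimeoutError`; a real client will likely raise its own exception (the OpenAI Python SDK, for instance, uses `openai.APITimeoutError`), so treat the exception type here as a placeholder.

```python
def call_with_timeout(call, timeout_s: float = 120.0, attempts: int = 2):
    """Invoke `call(timeout=...)`, retrying only when the call times out."""
    last_error = None
    for _ in range(attempts):
        try:
            return call(timeout=timeout_s)
        except TimeoutError as err:  # assumption: swap in your client's timeout error
            last_error = err
    raise TimeoutError(f"gave up after {attempts} timed-out attempts") from last_error
```

With an OpenAI-compatible client, `call` could be a small lambda that forwards `timeout` to `client.chat.completions.create`, which accepts a per-request `timeout` argument.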

V4 migration and model-ID problems

The V4 Preview changed how model selection works. There are now two model IDs: deepseek-v4-pro (1.6T total / 49B active parameters) and deepseek-v4-flash (284B / 13B). Keep base_url as it is and change only model to deepseek-v4-pro or deepseek-v4-flash. The API supports both the OpenAI ChatCompletions and Anthropic formats, and both models support a 1M-token context and dual modes (Thinking / Non-Thinking). The legacy IDs deepseek-chat and deepseek-reasoner currently route to deepseek-v4-flash in non-thinking and thinking mode respectively; they will be fully retired and inaccessible after July 24, 2026, 15:59 UTC.

If your old integration suddenly starts failing after July 24, 2026, check whether you are still sending model="deepseek-chat" or model="deepseek-reasoner". After the cutoff, those IDs will return errors instead of routing to V4-Flash.
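A small guard in your own code can catch legacy IDs before the cutoff does. The sketch below encodes the routing described above; the function name and the return shape are illustrative choices, not part of any SDK.

```python
from typing import Optional, Tuple

# legacy ID -> (replacement model, whether thinking mode should be enabled)
LEGACY_MODELS = {
    "deepseek-chat": ("deepseek-v4-flash", False),
    "deepseek-reasoner": ("deepseek-v4-flash", True),
}


def migrate_model(model: str) -> Tuple[str, Optional[bool]]:
    """Return (model to send, thinking flag); the flag is None for non-legacy IDs."""
    if model in LEGACY_MODELS:
        return LEGACY_MODELS[model]
    return model, None


for name in ("deepseek-chat", "deepseek-reasoner", "deepseek-v4-pro"):
    print(name, "->", migrate_model(name))
```

Running this over the model names in your configuration is a quick way to find every call site that still depends on the retiring IDs.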

Thinking mode returns empty content or truncates

In V4, thinking mode is a request parameter, not a separate model. Enable it with reasoning_effort="high" plus extra_body={"thinking": {"type": "enabled"}}, or reasoning_effort="max" for the heaviest setting. The response returns reasoning_content alongside the final content. If your application reads only content and prints nothing, you are discarding the trace, not seeing a bug.
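To make the two fields concrete, here is a minimal sketch that builds a thinking-mode request with the parameters named above and reads both parts of the reply. The helper names are invented, and the `SimpleNamespace` object only stands in for a real response message so the example runs offline.

```python
from types import SimpleNamespace


def thinking_request(prompt: str, effort: str = "high") -> dict:
    """Build arguments for a thinking-mode call, using the parameters named above."""
    return {
        "model": "deepseek-v4-pro",
        "messages": [{"role": "user", "content": prompt}],
        "reasoning_effort": effort,  # "high" or "max"
        "extra_body": {"thinking": {"type": "enabled"}},
    }


def split_reply(message) -> tuple[str, str]:
    """Return (reasoning trace, final answer), tolerating missing fields."""
    reasoning = getattr(message, "reasoning_content", None) or ""
    answer = getattr(message, "content", None) or ""
    return reasoning, answer


# Stand-in for resp.choices[0].message from a real call.
fake = SimpleNamespace(reasoning_content="compare both options", content="Option B")
trace, answer = split_reply(fake)
print("trace:", trace)
print("answer:", answer)
```

Using `getattr` with a default means the same reader works for non-thinking responses, where `reasoning_content` may be absent.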

If a thinking-max response truncates, you likely have max_tokens set too low. Thinking-max needs a working window of at least 384,000 tokens; a hard cap of 4,000 will cut off the answer mid-trace. Also note: in current official docs, temperature, top_p, presence_penalty, and frequency_penalty are accepted for compatibility in thinking mode but have no effect. logprobs and top_logprobs are not supported in thinking mode and can trigger an error.
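A pre-flight check can strip the unsupported parameters and flag the ignored ones before a thinking-mode request goes out. This is a sketch of one possible approach; the function name and warning wording are illustrative, and the two parameter sets simply restate the lists above.

```python
NO_EFFECT = {"temperature", "top_p", "presence_penalty", "frequency_penalty"}
UNSUPPORTED = {"logprobs", "top_logprobs"}


def clean_thinking_params(params: dict) -> tuple[dict, list[str]]:
    """Drop unsupported keys and describe anything that is ignored or removed."""
    cleaned = {k: v for k, v in params.items() if k not in UNSUPPORTED}
    warnings = [
        f"{k} has no effect in thinking mode"
        for k in sorted(NO_EFFECT.intersection(cleaned))
    ]
    warnings += [
        f"{k} removed: not supported in thinking mode"
        for k in sorted(UNSUPPORTED.intersection(params))
    ]
    return cleaned, warnings


cleaned, notes = clean_thinking_params(
    {"max_tokens": 8000, "temperature": 0.2, "logprobs": True}
)
print(cleaned)
for note in notes:
    print("warning:", note)
```

The ignored parameters are left in place because the source describes them as accepted for compatibility; only the ones that can trigger an error are removed.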

Cost surprises and billing errors

If your bill is higher than expected, the usual cause is mixing model tiers or forgetting that each user message is billed at the uncached input rate even when the system prompt is served from cache. Worked example on deepseek-v4-flash at current rates (as of April 2026; verify on the official DeepSeek API pricing page):

```
1,000,000 calls, 2,000-token cached system prompt,
200-token user message, 300-token reply.

Cached input:   2,000,000,000 × $0.0028/M = $  5.60
Uncached input:   200,000,000 × $0.14/M   = $ 28.00
Output:           300,000,000 × $0.28/M   = $ 84.00
                                            -------
Total                                       $117.60
```

On deepseek-v4-pro the same workload costs $1,421.00 — roughly 12× more. If you routed everything to Pro by accident, that is where your bill went. Pin the cheaper tier as your default and promote to Pro only for prompts where a benchmark or eval shows a real lift.
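The Flash arithmetic is easy to reproduce, which helps when you want to plug in your own traffic numbers. The sketch below uses only the token counts and per-million rates quoted in the worked example; the function and variable names are illustrative, and Pro rates are left out because they are not listed here.

```python
from decimal import Decimal

CALLS = 1_000_000
# name -> (tokens per call, USD per million tokens), as quoted in the example
FLASH_LINES = {
    "cached input": (2_000, Decimal("0.0028")),
    "uncached input": (200, Decimal("0.14")),
    "output": (300, Decimal("0.28")),
}


def workload_cost(calls: int, lines: dict):
    """Return (cost per line item, total) in USD for a uniform workload."""
    costs = {
        name: Decimal(tokens * calls) / Decimal(1_000_000) * rate
        for name, (tokens, rate) in lines.items()
    }
    return costs, sum(costs.values())


costs, total = workload_cost(CALLS, FLASH_LINES)
for name, cost in costs.items():
    print(f"{name:<15} ${cost:.2f}")
print(f"{'total':<15} ${total:.2f}")
```

`Decimal` is used instead of floats so the line items add up exactly to the total rather than drifting by a fraction of a cent.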

The 402 Insufficient Balance error is clear: your account balance is zero or your granted balance has expired. DeepSeek may offer a granted balance — a small promotional credit that can expire; check the billing console for current offers. Top up before any production deployment.

Platform-specific gotchas

VS Code and IDE extensions

If an extension that calls DeepSeek returns 401 while your terminal works, the extension is probably reading from a different environment. Most IDEs do not inherit shell environment variables on GUI launch. Put the key in the extension’s own settings or in a .env file the extension explicitly reads. The VS Code integration guide covers the exact setting paths.

Local Ollama or llama.cpp runs

“DeepSeek is slow on my M1” is almost always a quantisation or memory issue, not a bug. Check free RAM against the DeepSeek system requirements; a 70B parameter model will swap to disk and crawl on a 16 GB machine. Drop to a smaller distill or raise hardware before concluding the model is broken.

LangChain, LlamaIndex and wrappers

Wrapper libraries occasionally lag behind API changes. If you get a 422 on a request that worked last month, pin the wrapper version and test the raw POST /chat/completions call directly. If the raw call works, the wrapper is the bug — check its changelog for V4 parameter support.
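A raw call that bypasses every wrapper can be built with the standard library alone. The sketch below only constructs the request so it can be inspected offline; the helper name is made up, and the URL is the base address plus the `/chat/completions` path mentioned earlier in this guide.

```python
import json
import urllib.request


def raw_chat_request(api_key: str, model: str, prompt: str) -> urllib.request.Request:
    """Build a bare POST /chat/completions request with no wrapper library."""
    body = json.dumps({
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "max_tokens": 16,
    }).encode("utf-8")
    return urllib.request.Request(
        "https://api.deepseek.com/chat/completions",
        data=body,
        method="POST",
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
    )


req = raw_chat_request("sk-...", "deepseek-v4-flash", "ping")
print(req.get_method(), req.full_url)
```

To actually send it, pass the request to `urllib.request.urlopen(req, timeout=60)` and read the JSON body; if that succeeds while the wrapper fails with the same payload, the wrapper is the likely culprit.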

When to give up and ask for help

After the checks above, if the same minimal smoke test fails from a clean environment on mobile data with a freshly regenerated API key, you are looking at a service-side problem. Capture the request ID from the response headers, the UTC timestamp, and the exact payload (redact the key), and open a support ticket through the official DeepSeek platform. Independent help lives at the DeepSeek beginner guides hub and the API documentation index.

DeepSeek AI Guide is an independent resource and is not affiliated with DeepSeek or its parent company. Model IDs, pricing and API behaviour change; check the official DeepSeek documentation and pricing page before committing to a production decision.

Why does DeepSeek keep saying “server is busy”?

That message means DeepSeek’s inference servers are temporarily saturated, not that your account is broken. It spikes around major releases like the V4 Preview and during peak hours in Asia-Pacific. Wait 30–120 seconds and retry, switch between Expert Mode (V4-Pro) and Instant Mode (V4-Flash), or shorten your prompt. If it persists for an hour, check an independent DeepSeek status checker before concluding your setup is at fault.

How do I fix a 401 error on the DeepSeek API?

A 401 means authentication failed. The fix is almost always on your side: a wrong key, missing Authorization: Bearer header, an environment variable that never loaded, or whitespace copied with the secret. Regenerate the key in the dashboard, paste it into a fresh environment variable, and test with a minimal curl call. The full DeepSeek API authentication reference covers every header and SDK variant.

What happens when deepseek-chat and deepseek-reasoner retire?

Both legacy IDs are accepted until July 24, 2026, 15:59 UTC, and currently route to deepseek-v4-flash in non-thinking and thinking modes respectively. After that cutoff, requests using them will fail. Migration is a one-line change — swap model= to deepseek-v4-flash or deepseek-v4-pro; base_url stays the same. See the DeepSeek V4 overview for the full parameter map.

Is DeepSeek down right now, or is it just me?

Open chat.deepseek.com in a private window on mobile data. If it works there but not on your normal connection, the problem is local (cache, VPN, firewall, ISP). If it fails everywhere, check DeepSeek’s official status page and our status monitor. Widespread outages are posted within minutes. Regional restrictions also matter — some countries block the app entirely.

Can I recover a deleted DeepSeek account or chat history?

Generally no. Account deletion is permanent: chat history disappears, and any remaining API balance is forfeited. If your account was suspended in error, the official path is the Account Suspension Appeal form, with most reviews completed within three business days. Before deleting anything, read the delete DeepSeek account guide so you know exactly what is lost versus archived.

Sources & Methodology

Primary sources

- Official: DeepSeek API documentation. Endpoints, request/response shape, model IDs. Last checked: April 30, 2026.

- Pricing: DeepSeek API pricing page. Per-1M-token rates, billing units, cache-hit pricing. Last checked: April 30, 2026.

Technical references

- Technical report: DeepSeek-V3 technical report (arXiv:2412.19437). V3 architecture and training methodology. Last checked: April 30, 2026.

- Technical report: DeepSeek-R1 paper (arXiv:2501.12948). R1 reasoning approach, distillation results. Last checked: April 30, 2026.

Methodology

Information in this article was checked against the official DeepSeek documentation, the article's cited primary sources, and the deepseekai.guide pricing dataset on the date shown above. Where third-party numbers are quoted, the originating source is linked inline so the reader can verify and re-check.

Data confidence

High for facts cited inline with primary-source links; medium for context narrative.

Editorial note

AI tooling moves quickly. If you spot an outdated number or a broken link, the contact link in the footer is the fastest way to flag it for the editorial team.