Using DeepSeek With the OpenAI SDK: A Practitioner’s Guide

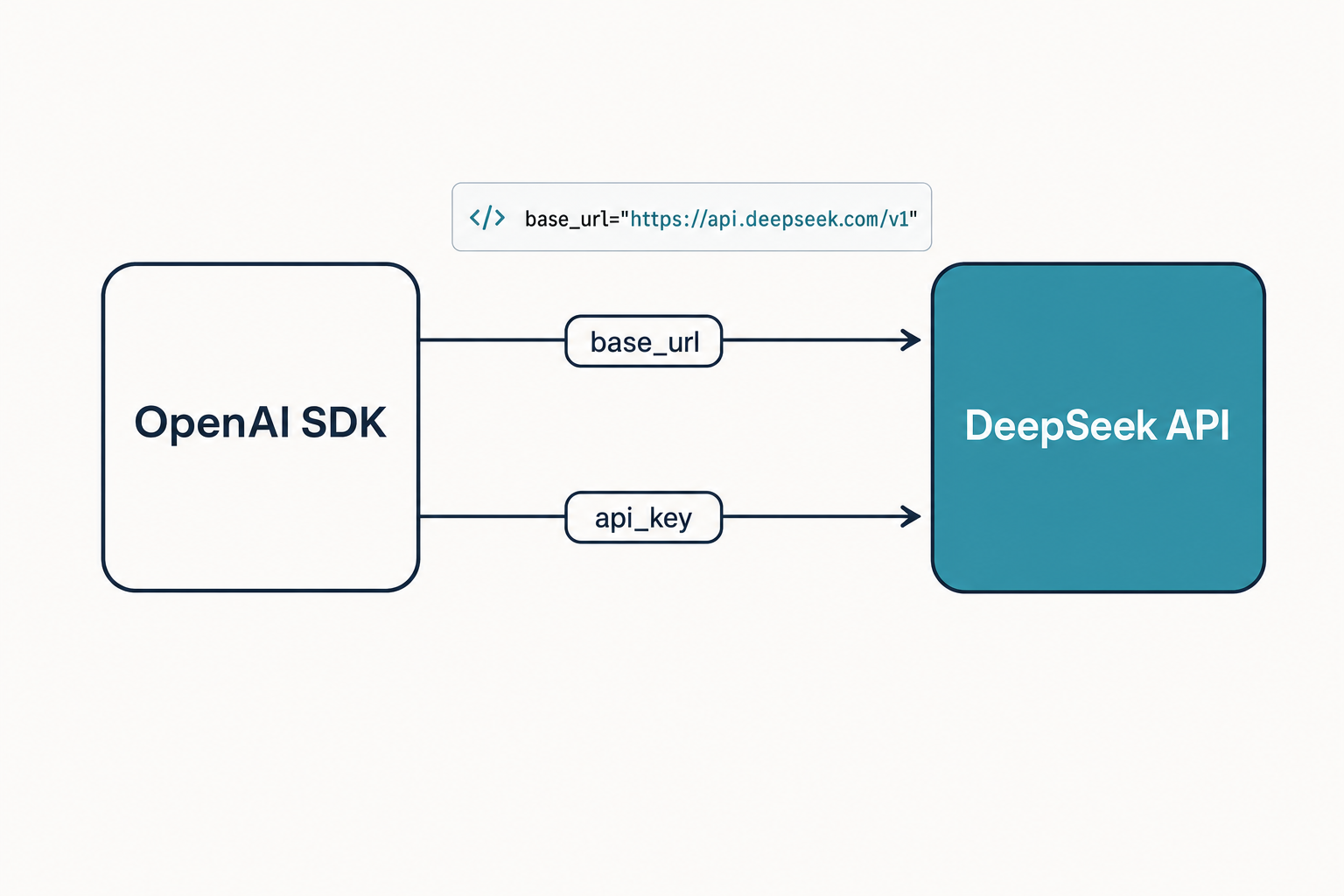

If you already have a working OpenAI integration and you want to point it at DeepSeek’s V4 models, the change is genuinely two lines of code. DeepSeek’s OpenAI compatibility means the wire format mirrors OpenAI’s Chat Completions API closely enough that the official `openai` Python and Node SDKs work against `https://api.deepseek.com` after you swap the `base_url` and `api_key`. No request rebuilding, no response parser changes, no new dependency.

The catch — and there always is one — is in the V4-specific parameters, the stateless conversation model, the JSON-mode caveats, and the legacy IDs scheduled to retire on July 24, 2026. This guide walks through what migrates cleanly, what needs adjusting, what an honest cost example looks like, and where the OpenAI-compatible surface stops behaving like OpenAI.

What “OpenAI-compatible” actually means here

DeepSeek’s official documentation states plainly that the DeepSeek API uses an API format compatible with OpenAI/Anthropic, and by modifying the configuration, you can use the OpenAI/Anthropic SDK or software compatible with the OpenAI/Anthropic API to access the DeepSeek API. In practice that means the Chat Completions request and response envelopes — the messages array, the role/content shape, the choices[0].message.content path, the usage block, the stream=true SSE chunks — all match.

You point the SDK at DeepSeek by changing two values:

- `base_url` → `https://api.deepseek.com` (or `https://api.deepseek.com/v1`)

- `api_key` → your DeepSeek key from the platform console

The chat endpoint is `POST /chat/completions`. For OpenAI-style compatibility, you can also use `https://api.deepseek.com/v1` as the `base_url`, but the `v1` here has no relationship with the model’s version; it is a path alias that mirrors the `/v1` segment in OpenAI’s own default base URL. Beta features such as Chat Prefix Completion and FIM (fill-in-the-middle) live under `https://api.deepseek.com/beta`.

One distinction worth getting right up front: DeepSeek also exposes an Anthropic-compatible surface against the same hostname. DeepSeek V4 ships on the OpenAI-compatible endpoint at https://api.deepseek.com/v1/chat/completions and the Anthropic-compatible endpoint at https://api.deepseek.com/anthropic. Pick whichever SDK matches your existing code; both hit the same underlying V4 models.
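The base URLs quoted above can be kept in one place so call sites do not hard-code them. This is a minimal sketch: `BASE_URLS` and `chat_completions_url` are hypothetical names, and restricting the helper to the two OpenAI-style entries is a conservative assumption rather than a statement about what the other surfaces accept.

```python
# Base URLs quoted in this guide; the dictionary keys are illustrative labels.
BASE_URLS = {
    "openai": "https://api.deepseek.com",
    "openai_v1": "https://api.deepseek.com/v1",
    "beta": "https://api.deepseek.com/beta",
    "anthropic": "https://api.deepseek.com/anthropic",
}


def chat_completions_url(surface: str = "openai") -> str:
    """Return the full Chat Completions URL for an OpenAI-style base URL."""
    if surface not in ("openai", "openai_v1"):
        raise ValueError(f"{surface!r} is not handled by this sketch")
    return BASE_URLS[surface].rstrip("/") + "/chat/completions"


print(chat_completions_url())
print(chat_completions_url("openai_v1"))
```

Both calls resolve to a `/chat/completions` path on the same hostname, which is why either value works as `base_url`.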

Quickstart: curl and Python

Here is the smallest possible call against deepseek-v4-flash, the cost-efficient tier, using DeepSeek’s default thinking mode. (For non-thinking mode, explicitly pass thinking: {"type": "disabled"} in the JSON body, or extra_body={"thinking": {"type": "disabled"}} in Python.) First, the curl version of POST /chat/completions:

```shell
curl https://api.deepseek.com/chat/completions \
  -H "Content-Type: application/json" \
  -H "Authorization: Bearer $DEEPSEEK_API_KEY" \
  -d '{
    "model": "deepseek-v4-flash",
    "messages": [
      {"role": "system", "content": "You are a concise assistant."},
      {"role": "user", "content": "Summarise the OpenAI Chat Completions schema in one sentence."}
    ],
    "stream": false
  }'
```

The same call with the official OpenAI Python SDK follows. Note that the only changes from a real OpenAI integration are `base_url`, the API key, and the model string:

```python
import os

from openai import OpenAI

# Point the stock OpenAI client at DeepSeek by swapping the key and base URL.
client = OpenAI(
    api_key=os.environ["DEEPSEEK_API_KEY"],
    base_url="https://api.deepseek.com",
)

resp = client.chat.completions.create(
    model="deepseek-v4-flash",
    messages=[
        {"role": "system", "content": "You are a concise assistant."},
        {"role": "user", "content": "Hello"},
    ],
)

print(resp.choices[0].message.content)
```

If you want the frontier-tier model with thinking enabled, switch the model ID and pass DeepSeek-specific fields through `extra_body`, which is how the OpenAI SDK forwards non-OpenAI parameters to the server:

```python
resp = client.chat.completions.create(
    model="deepseek-v4-pro",
    messages=[{"role": "user", "content": "Plan the migration."}],
    reasoning_effort="high",
    extra_body={"thinking": {"type": "enabled"}},
)
```

If you are just starting, the steps in our walkthrough on how to get a DeepSeek API key cover the console setup and the first $2 top-up that the platform requires before any request will succeed.

V4 model IDs and the legacy migration window

DeepSeek’s current generation, released April 24, 2026, ships as two open-weight MoE models that share the same OpenAI-compatible surface but differ in size and price:

| Model ID | Total / active params | Context | Tier |
|---|---|---|---|
| `deepseek-v4-pro` | 1.6T / 49B | 1M tokens | Frontier |
| `deepseek-v4-flash` | 284B / 13B | 1M tokens | Cost-efficient |

Both default to a 1,000,000-token context window and accept output up to 384,000 tokens. DeepSeek says V4-Pro has 1.6T total parameters with 49B active, while V4-Flash has 284B total parameters with 13B active, with the architecture using hybrid attention, MoE routing, and post-training designed to improve agentic behavior while cutting the compute burden of ultra-long context. The full architectural breakdown lives on the DeepSeek V4 page.

If you have an existing integration on the older IDs, here is the migration table:

| Legacy ID (accepted until 2026-07-24 15:59 UTC) | Currently routes to | Replace with |
|---|---|---|
| `deepseek-chat` | `deepseek-v4-flash` (non-thinking) | `deepseek-v4-flash` |
| `deepseek-reasoner` | `deepseek-v4-flash` (thinking) | `deepseek-v4-flash` + `reasoning_effort="high"` |

DeepSeek’s release notes are explicit on this: keep `base_url` and update `model` to `deepseek-v4-pro` or `deepseek-v4-flash`. The legacy IDs `deepseek-chat` and `deepseek-reasoner`, which currently route to `deepseek-v4-flash` in non-thinking and thinking mode respectively, will be fully retired and inaccessible after July 24, 2026, 15:59 UTC. Migration is a one-line diff per call site.
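The migration table can be applied mechanically to existing request arguments. The sketch below is one way to do it; `LEGACY_TO_V4` and `migrate_request` are hypothetical names. Explicitly disabling thinking for `deepseek-chat` is an assumption made here to preserve its current non-thinking behaviour, since this guide states that V4 defaults to thinking-enabled.

```python
import copy

# Replacement settings derived from the migration table above.
# Assumption: thinking is disabled explicitly for deepseek-chat so that
# behaviour stays non-thinking after the switch.
LEGACY_TO_V4 = {
    "deepseek-chat": {
        "model": "deepseek-v4-flash",
        "extra_body": {"thinking": {"type": "disabled"}},
    },
    "deepseek-reasoner": {
        "model": "deepseek-v4-flash",
        "reasoning_effort": "high",
        "extra_body": {"thinking": {"type": "enabled"}},
    },
}


def migrate_request(kwargs: dict) -> dict:
    """Return a copy of create() kwargs with a legacy model ID replaced."""
    replacement = LEGACY_TO_V4.get(kwargs.get("model"))
    migrated = dict(kwargs)
    if replacement is None:
        return migrated  # not a legacy ID; leave the request untouched
    for key, value in copy.deepcopy(replacement).items():
        if key == "extra_body":
            # Caller-supplied extra_body entries win over the defaults.
            migrated[key] = {**value, **kwargs.get("extra_body", {})}
        else:
            migrated[key] = value
    return migrated


old = {"model": "deepseek-reasoner", "messages": [{"role": "user", "content": "hi"}]}
print(migrate_request(old))
```

The result can be passed on as `client.chat.completions.create(**migrate_request(old))`; requests that already use a V4 ID pass through unchanged.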

Thinking mode is a parameter, not a model

This is the single biggest change for anyone coming from the V3.x era or from OpenAI’s o-series. In V4, thinking is a request parameter on either model, not a separate ID. Three settings are accepted:

- Non-thinking: pass `extra_body={"thinking": {"type": "disabled"}}`. Fastest and cheapest per request. (V4 default is thinking-enabled.)

- Thinking (high): `reasoning_effort="high"` plus `extra_body={"thinking": {"type": "enabled"}}`.

- Thinking-max: `reasoning_effort="max"`. Requires `max_model_len >= 393216` (384K tokens) to avoid truncation.
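The three settings listed above can be wrapped in a small helper so that call sites choose a mode by name. A minimal sketch: `thinking_kwargs` and the labels `"off"`, `"high"` and `"max"` are hypothetical, and each branch carries only the parameters this guide lists for that mode.

```python
def thinking_kwargs(mode: str) -> dict:
    """Map an illustrative mode label to kwargs for chat.completions.create()."""
    if mode == "off":
        return {"extra_body": {"thinking": {"type": "disabled"}}}
    if mode == "high":
        return {
            "reasoning_effort": "high",
            "extra_body": {"thinking": {"type": "enabled"}},
        }
    if mode == "max":
        # The guide lists only reasoning_effort for this mode.
        return {"reasoning_effort": "max"}
    raise ValueError(f"unknown thinking mode: {mode!r}")


print(thinking_kwargs("high"))
```

A call site would then read `client.chat.completions.create(model=..., messages=..., **thinking_kwargs("off"))`.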

When thinking is on, the response carries reasoning_content alongside the final content. DeepSeek’s docs state that in thinking mode, the reasoning trace content is returned via the reasoning_content parameter, at the same level as content. Two operational details that matter:

- Thinking mode does not support the temperature, top_p, presence_penalty, or frequency_penalty parameters; for compatibility with existing software, setting these will not trigger an error but will also have no effect.

- On V4, when a thinking turn includes a `tool_calls` entry, you must round-trip `reasoning_content` back into the next request; omitting it triggers a 400. For pure thinking turns without tool calls, V4 does not require this round-trip; legacy `deepseek-reasoner` rejects `reasoning_content` on input, so strip it only when targeting that legacy ID.
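The round-trip rule in the second bullet can be sketched as a function that builds the assistant entry for the next request. It works on plain dictionaries; `assistant_turn_for_history` and its `legacy_reasoner` flag are hypothetical names, and dropping `reasoning_content` on turns without tool calls is a choice made here, since the text says V4 does not require it in that case.

```python
def assistant_turn_for_history(message: dict, legacy_reasoner: bool = False) -> dict:
    """Build the assistant entry to append to `messages` for the next request."""
    turn = {"role": "assistant", "content": message.get("content")}
    tool_calls = message.get("tool_calls")
    if tool_calls:
        turn["tool_calls"] = tool_calls
        # V4: a thinking turn with tool calls must carry its reasoning back.
        # The legacy reasoner ID rejects the field, so it is stripped there.
        if not legacy_reasoner and message.get("reasoning_content") is not None:
            turn["reasoning_content"] = message["reasoning_content"]
    return turn


with_tools = {
    "role": "assistant",
    "content": None,
    "reasoning_content": "I should look up the weather first.",
    "tool_calls": [{"id": "call_1", "type": "function",
                    "function": {"name": "get_weather", "arguments": "{}"}}],
}
print(assistant_turn_for_history(with_tools))
```

With the SDK, the input dictionary would have to be derived from the response message object first; how `reasoning_content` is exposed on that object is not covered here.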

Parameter compatibility, line by line

| Parameter | OpenAI | DeepSeek (V4) | Notes |
|---|---|---|---|
| `model` | Required | Required | `deepseek-v4-pro` or `deepseek-v4-flash` |
| `messages` | Required | Required | Same role/content shape |
| `temperature` | 0–2 | 0–2 (non-thinking only) | Use 0.0 for code, 1.0 for analysis, 1.3 for chat, 1.5 for creative writing |
| `top_p` | Yes | Yes (non-thinking only) | Ignored in thinking mode |
| `max_tokens` | Yes | Yes (up to 384K on V4) | Set explicitly when using JSON mode |
| `stream` | Yes | Yes | Reasoning streams as `delta.reasoning_content` |
| `response_format` | Yes | Yes | JSON mode; see caveats below |
| `tools` / `tool_choice` | Yes | Yes | OpenAI-format function declarations work as-is |
| `reasoning_effort` | o-series only | V4 both tiers | `"high"` or `"max"` |
| `thinking` | n/a | Pass via `extra_body` | `{"type": "enabled"}` |
| `logprobs` / `top_logprobs` | Yes | Errors in thinking mode | Use only in non-thinking |
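One way to act on the table is to filter request arguments before a thinking-mode call, so that ignored sampling controls do not give a false impression of control and `logprobs` does not trigger an error. A minimal sketch; `prepare_params` and the two tuples are hypothetical names built from the rows above.

```python
# Parameters the guide describes as silently ignored in thinking mode.
IGNORED_IN_THINKING = ("temperature", "top_p", "presence_penalty", "frequency_penalty")
# Parameters the guide describes as erroring in thinking mode.
REJECTED_IN_THINKING = ("logprobs", "top_logprobs")


def prepare_params(params: dict, thinking: bool) -> dict:
    """Return a copy of params with thinking-incompatible keys removed."""
    if not thinking:
        return dict(params)
    dropped = set(IGNORED_IN_THINKING) | set(REJECTED_IN_THINKING)
    return {key: value for key, value in params.items() if key not in dropped}


request = {"model": "deepseek-v4-pro", "temperature": 0.2, "logprobs": True, "max_tokens": 100}
print(prepare_params(request, thinking=True))
```

Dropping the ignored keys is optional, since the server accepts them; removing the rejected ones is what avoids the error.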

For a deeper walk through the request shape, the DeepSeek API documentation explainer covers headers, error shapes, and the /models and /user/balance helper endpoints.

The stateless gotcha

The web chat at chat.deepseek.com keeps your conversation history server-side. The API does not. Every POST /chat/completions call is independent — the server has no memory of previous turns. Your client must resend the full messages array on every request to sustain a multi-turn conversation. This is identical to OpenAI’s behaviour, but worth stating because the chatbot UI obscures it.

Practically, that means a multi-turn loop looks like this:

```python
messages = [{"role": "system", "content": "You are a helpful assistant."}]

while True:
    user_text = input("> ")
    messages.append({"role": "user", "content": user_text})

    # Resend the full history on every call; the server keeps no state.
    resp = client.chat.completions.create(
        model="deepseek-v4-flash",
        messages=messages,
    )

    reply = resp.choices[0].message
    messages.append({"role": "assistant", "content": reply.content})
    print(reply.content)
```

If you used thinking mode, append only `content` to the next request for turns without tool calls. As noted in the thinking-mode section, `reasoning_content` has to be passed back only when the thinking turn included `tool_calls`.

JSON mode, tool calling, FIM, streaming

The advanced features all work through the OpenAI SDK; they just need the right caveats.

JSON mode

Set response_format={"type": "json_object"}. DeepSeek’s docs describe this as designed to return valid JSON, not guaranteed. Three rules keep production code safe:

- Include the word json in your prompt and a small example schema.

- Set `max_tokens` high enough that the JSON cannot be truncated.

- Handle the case where `content` comes back empty.

The mechanics in detail are covered in our DeepSeek API JSON mode guide.
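The third rule, handling empty or invalid output, can be sketched as a defensive parser. `parse_json_reply` is a hypothetical helper; returning `None` for both the empty and the malformed case is a design choice for this example, not something the DeepSeek docs prescribe.

```python
import json


def parse_json_reply(content):
    """Return the parsed object, or None when the reply is empty or not valid JSON."""
    if content is None or not content.strip():
        return None  # JSON mode can come back with empty content
    try:
        return json.loads(content)
    except json.JSONDecodeError:
        return None  # for example, output truncated by a low max_tokens


print(parse_json_reply('{"status": "ok"}'))
print(parse_json_reply(""))
print(parse_json_reply('{"status": '))
```

A caller can treat `None` as a signal to retry, for instance with a larger `max_tokens` or a more explicit prompt.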

Tool calling

OpenAI-format tools declarations work without modification. The model emits tool_calls entries on the assistant message, your code executes them, and you append a {"role": "tool", "tool_call_id": ..., "content": ...} turn before the next request. Both thinking and non-thinking modes support tools.
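The execute-and-append step described above can be sketched without any network access. The message shape follows the OpenAI tool-call format (an `id` plus a `function` object whose `arguments` field is a JSON string); `run_tool_calls`, `get_weather` and the canned forecast are hypothetical and exist only for illustration.

```python
import json


def run_tool_calls(assistant_message: dict, registry: dict) -> list:
    """Execute each tool call and return the `tool` messages to append."""
    tool_messages = []
    for call in assistant_message.get("tool_calls") or []:
        function = call["function"]
        handler = registry[function["name"]]
        arguments = json.loads(function["arguments"] or "{}")
        result = handler(**arguments)
        tool_messages.append({
            "role": "tool",
            "tool_call_id": call["id"],
            "content": json.dumps(result),
        })
    return tool_messages


def get_weather(city: str) -> dict:
    """Illustrative tool; returns a canned value instead of calling a service."""
    return {"city": city, "forecast": "sunny"}


example_message = {
    "role": "assistant",
    "content": None,
    "tool_calls": [{
        "id": "call_1",
        "type": "function",
        "function": {"name": "get_weather", "arguments": "{\"city\": \"Paris\"}"},
    }],
}
print(run_tool_calls(example_message, {"get_weather": get_weather}))
```

The returned list is appended to `messages` after the assistant turn, and the whole history is sent again in the next request.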

FIM completion (Beta)

Fill-in-the-middle is available on V4 in non-thinking mode only, against the https://api.deepseek.com/beta base URL. Useful for IDE-style code suggestions; not useful if you also want step-by-step reasoning.

Streaming

Set stream=true and consume the SSE chunks. The deltas carry delta.content as on OpenAI; in thinking mode they additionally carry delta.reasoning_content. The streaming patterns and back-pressure tips are in our DeepSeek API streaming reference.
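To make the two delta fields concrete, the sketch below joins streamed chunks into a reasoning string and an answer string. It operates on plain dictionaries shaped like the SSE payloads; `accumulate_stream` and `fake_chunks` are hypothetical, and with the SDK the same fields would be read as attributes on chunk objects rather than dictionary keys.

```python
def accumulate_stream(chunks):
    """Join streamed deltas into (reasoning_text, answer_text)."""
    reasoning_parts, content_parts = [], []
    for chunk in chunks:
        for choice in chunk.get("choices", []):
            delta = choice.get("delta") or {}
            if delta.get("reasoning_content"):
                reasoning_parts.append(delta["reasoning_content"])
            if delta.get("content"):
                content_parts.append(delta["content"])
    return "".join(reasoning_parts), "".join(content_parts)


# Hand-written chunks standing in for a real thinking-mode stream.
fake_chunks = [
    {"choices": [{"delta": {"reasoning_content": "Think. "}}]},
    {"choices": [{"delta": {"reasoning_content": "Done."}}]},
    {"choices": [{"delta": {"content": "Hello"}}]},
    {"choices": [{"delta": {"content": ", world"}}]},
    {"choices": [{"delta": {}, "finish_reason": "stop"}]},
]
reasoning, answer = accumulate_stream(fake_chunks)
print(reasoning)
print(answer)
```

Keeping the two buffers separate makes it easy to show the answer to users while logging or discarding the reasoning trace.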

A worked cost example: V4-Flash vs V4-Pro

Pricing as of April 2026, per the official DeepSeek API documentation. Verify on the pricing page before committing — Preview-window rates can move.

| Tier | Cache hit input ($/M) | Cache miss input ($/M) | Output ($/M) |
|---|---|---|---|
| `deepseek-v4-flash` | $0.0028 | $0.14 | $0.28 |
| `deepseek-v4-pro` | $0.003625 (promo, list $0.0145) | $0.435 (promo, list $1.74) | $0.87 (promo, list $3.48) |

Imagine 1,000,000 calls with a 2,000-token system prompt (cached after the first call), a 200-token user message (always uncached — the cache only matches stable prefixes, not new user input), and a 300-token response.

On deepseek-v4-flash:

- Cached input: 2,000 × 1,000,000 = 2,000,000,000 × $0.0028/M = $5.60

- Uncached input: 200 × 1,000,000 = 200,000,000 × $0.14/M = $28.00

- Output: 300 × 1,000,000 = 300,000,000 × $0.28/M = $84.00

- Total: $117.60

On deepseek-v4-pro:

- Cached input: 2,000,000,000 × $0.003625/M (promo) = $7.25 (list $29.00)

- Uncached input: 200,000,000 × $0.435/M (promo) = $87.00 (list $348.00)

- Output: 300,000,000 × $0.87/M (promo) = $261.00 (list $1,044.00)

- Total: $355.25 (V4-Pro 75% promo through 2026-05-31; list $1,421.00)

Pro is roughly 3× the cost of Flash during the V4-Pro 75% promo through 2026-05-31, and ~12× at list ($1,421.00 against $117.60). The off-peak discount that applied in the V3.1/V3.2 era ended on September 5, 2025, and was not reintroduced with V4. For interactive estimates, the DeepSeek pricing calculator takes the same three buckets and produces a per-call number. Repeated prefixes get the cache-hit price tier automatically; the prefix-cache mechanics are covered in DeepSeek context caching.
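The arithmetic above can be reproduced with a few lines of Python. The rates are copied from the pricing table in this section; `PRICES` and `workload_cost` are hypothetical names, and, like the worked example, the sketch bills the system prompt at the cache-hit rate on every call and ignores the single uncached first call.

```python
# USD per 1M tokens, copied from the pricing table above.
PRICES = {
    "flash": {"hit": 0.0028, "miss": 0.14, "out": 0.28},
    "pro_promo": {"hit": 0.003625, "miss": 0.435, "out": 0.87},
    "pro_list": {"hit": 0.0145, "miss": 1.74, "out": 3.48},
}


def workload_cost(tier, calls, cached_in, uncached_in, out_tokens):
    """Total USD for `calls` requests with the given per-call token counts."""
    price = PRICES[tier]
    per_call = (
        cached_in * price["hit"]
        + uncached_in * price["miss"]
        + out_tokens * price["out"]
    )
    return round(calls * per_call / 1_000_000, 2)


scenario = dict(calls=1_000_000, cached_in=2_000, uncached_in=200, out_tokens=300)
totals = {tier: workload_cost(tier, **scenario) for tier in PRICES}
for tier, total in totals.items():
    print(f"{tier}: ${total:,.2f}")
print(f"pro_list / flash: {totals['pro_list'] / totals['flash']:.1f}x")
```

Changing the token counts in `scenario` shows how quickly output tokens dominate the bill once the system prompt is cached.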

Error handling and rate limits

Error codes match the OpenAI envelope, with two DeepSeek-specific quirks worth remembering:

- `402 Insufficient Balance` fires immediately if your account has no credit. Top up before integration testing.

- `422` is the parameter-validation error you will see if you send an unsupported field combination, for example `logprobs` in thinking mode.

- `429` and `5xx` errors should be retried with exponential backoff.

Wrap calls in a retry helper that handles 429 and 5xx with exponential backoff; do not retry other 4xx errors automatically, because they are logic bugs, not transient failures. The full table of codes lives in the DeepSeek API error codes reference.
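A retry helper along those lines might look like the sketch below. `ApiError` is a hypothetical stand-in for whatever exception your client raises with an HTTP status code attached, and the attempt count, base delay and jitter are illustrative values, not DeepSeek recommendations.

```python
import random
import time


class ApiError(Exception):
    """Hypothetical stand-in for an SDK error that carries an HTTP status code."""

    def __init__(self, status_code: int):
        super().__init__(f"HTTP {status_code}")
        self.status_code = status_code


def is_retryable(status_code: int) -> bool:
    """429 and 5xx are treated as transient; other 4xx codes are not retried."""
    return status_code == 429 or 500 <= status_code < 600


def call_with_backoff(fn, max_attempts=5, base_delay=1.0, sleep=time.sleep):
    """Call fn(), retrying transient failures with exponential backoff."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(max_attempts):
        try:
            return fn()
        except ApiError as err:
            last_attempt = attempt == max_attempts - 1
            if last_attempt or not is_retryable(err.status_code):
                raise
            # Delay doubles each attempt (1s, 2s, 4s, ...) plus a little jitter.
            sleep(base_delay * (2 ** attempt) + random.uniform(0, 0.1))
```

Passing `sleep` as a parameter keeps the helper testable without real waiting. In real code the `except` clause would catch the SDK's own status-error type instead of `ApiError`.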

When OpenAI compatibility stops being a perfect fit

The compatibility surface is broad, but it is not 100%. A short, honest list of where it leaks:

- No Responses API. DeepSeek implements Chat Completions. If your code uses OpenAI’s newer `client.responses.create` path, you will need to fall back to `client.chat.completions.create`.

- No Assistants API, no Files API, no embeddings. The OpenAI-compatible surface is chat-completion-shaped, not the full OpenAI platform.

- Thinking-mode parameter quirks. Sampling controls are silently ignored; `logprobs` errors out.

- Sampling defaults differ. DeepSeek recommends temperature=1.0, top_p=1.0; do not import GPT-5.5 or Claude defaults.

- Anthropic SDK works too. If your stack already speaks Claude’s message format, point it at `https://api.deepseek.com/anthropic` rather than rewriting it.

For broader patterns — retries, idempotency, observability — the DeepSeek API best practices page collects what we run in production. And if you are evaluating whether to swap providers at all, DeepSeek vs ChatGPT walks through the trade-offs honestly.

Migration checklist

- Get a key and add at least $2 to your balance; the platform refuses calls without it.

- Change `base_url` to `https://api.deepseek.com` and replace the API key.

- Replace OpenAI model strings with `deepseek-v4-flash` (default) or `deepseek-v4-pro` (frontier-tier work).

- If you have legacy `deepseek-chat` or `deepseek-reasoner` in your code, update before July 24, 2026 15:59 UTC.

- Decide thinking vs non-thinking per call site; gate `reasoning_effort="max"` behind a feature flag because the cost can spike.

- Keep your retry/backoff layer; DeepSeek’s error codes match the OpenAI shape closely enough that existing handlers usually port over unchanged.

- Run a small evaluation harness; even ten representative prompts across both tiers will tell you whether Pro’s quality lift is worth the spend on your data.

For a broader tour of what the API will and won’t do, see the full DeepSeek API docs and guides.

DeepSeek AI Guide is an independent resource and is not affiliated with DeepSeek or its parent company. Model IDs, pricing and API behaviour change; check the official DeepSeek documentation and pricing page before committing to a production decision.

How do I use the OpenAI SDK with DeepSeek?

Install the official openai package, then construct the client with base_url="https://api.deepseek.com" and your DeepSeek API key. Set model to deepseek-v4-flash or deepseek-v4-pro and call client.chat.completions.create() exactly as you would against OpenAI. Pass DeepSeek-specific fields like thinking through extra_body. Full setup steps are in our DeepSeek API getting started tutorial.

Is the DeepSeek API really compatible with OpenAI?

Yes for Chat Completions — the request and response shapes match closely enough that the OpenAI Python and Node SDKs work after changing base_url and api_key. Compatibility does not extend to OpenAI’s Responses API, Assistants, Files, or embeddings; those are not part of DeepSeek’s surface. For a deeper feature-by-feature breakdown, see the DeepSeek API review.

What is the base URL for DeepSeek’s OpenAI-compatible API?

Use https://api.deepseek.com for standard Chat Completions traffic. The OpenAI SDK also accepts https://api.deepseek.com/v1 — the /v1 here is just an SDK convention, not a model version. Beta features like FIM and Chat Prefix Completion live under https://api.deepseek.com/beta. Authentication and key scoping are covered in DeepSeek API authentication.

Can I keep using deepseek-chat and deepseek-reasoner?

Only until July 24, 2026 at 15:59 UTC. After that the legacy IDs are fully retired. Today they route to deepseek-v4-flash in non-thinking and thinking modes respectively, so the easiest migration is to swap the model string to deepseek-v4-flash and add reasoning_effort="high" wherever you currently call the reasoner. The full V4 model lineup is on our DeepSeek V4-Flash page.

Why does my OpenAI code work for DeepSeek chat but not embeddings?

Because DeepSeek’s OpenAI-compatible surface is Chat Completions only. There is no embeddings endpoint, no Assistants, no Files. If your application needs vector embeddings, run them through a separate provider or a self-hosted model and keep DeepSeek for generation. Code patterns and worked examples are collected in DeepSeek API code examples.

Sources & Methodology

Primary sources

- Official: DeepSeek API documentation. Endpoints, request/response shape, model IDs. Last checked: April 30, 2026

- Pricing: DeepSeek API pricing page. Per-1M-token rates, billing units, cache-hit pricing. Last checked: April 30, 2026

- Official: Official DeepSeek API documentation. OpenAI- and Anthropic-compatible surfaces, base URLs, V4 parameters. Last checked: April 30, 2026

Technical references

- Technical report: DeepSeek-V3 technical report (arXiv:2412.19437). V3 architecture and training methodology. Last checked: April 30, 2026

Methodology

Pricing figures were checked against the official DeepSeek pricing page and normalised into estimated monthly costs using sample token usage scenarios. Actual costs may vary depending on caching, input/output ratio, regional availability, and provider updates.

Data confidence

High — figures checked directly against the official pricing page on the review date.

Editorial note

DeepSeek occasionally runs promotional discounts that are not always reflected in the headline page. The pricing dataset on this site auto-refreshes monthly; this article reflects the dataset on the date shown above.