DeepSeek API Function Calling on V4: A Practical Guide

You want the model to fetch live data, hit your internal services, or chain steps through your own code — not hallucinate the answer. That is what DeepSeek API function calling is for, and on V4 it works through the same OpenAI-compatible `tools` array you already use elsewhere. The behaviour changed meaningfully in late 2025 and again with the V4 Preview on April 24, 2026: tool use now works in thinking mode, strict mode is supported on both modes, and the legacy `deepseek-chat` and `deepseek-reasoner` IDs are on a clock. This guide walks through the request shape, a complete Python loop, strict schemas, thinking-mode tool calls, error handling, and a costed worked example so you can ship without surprises.

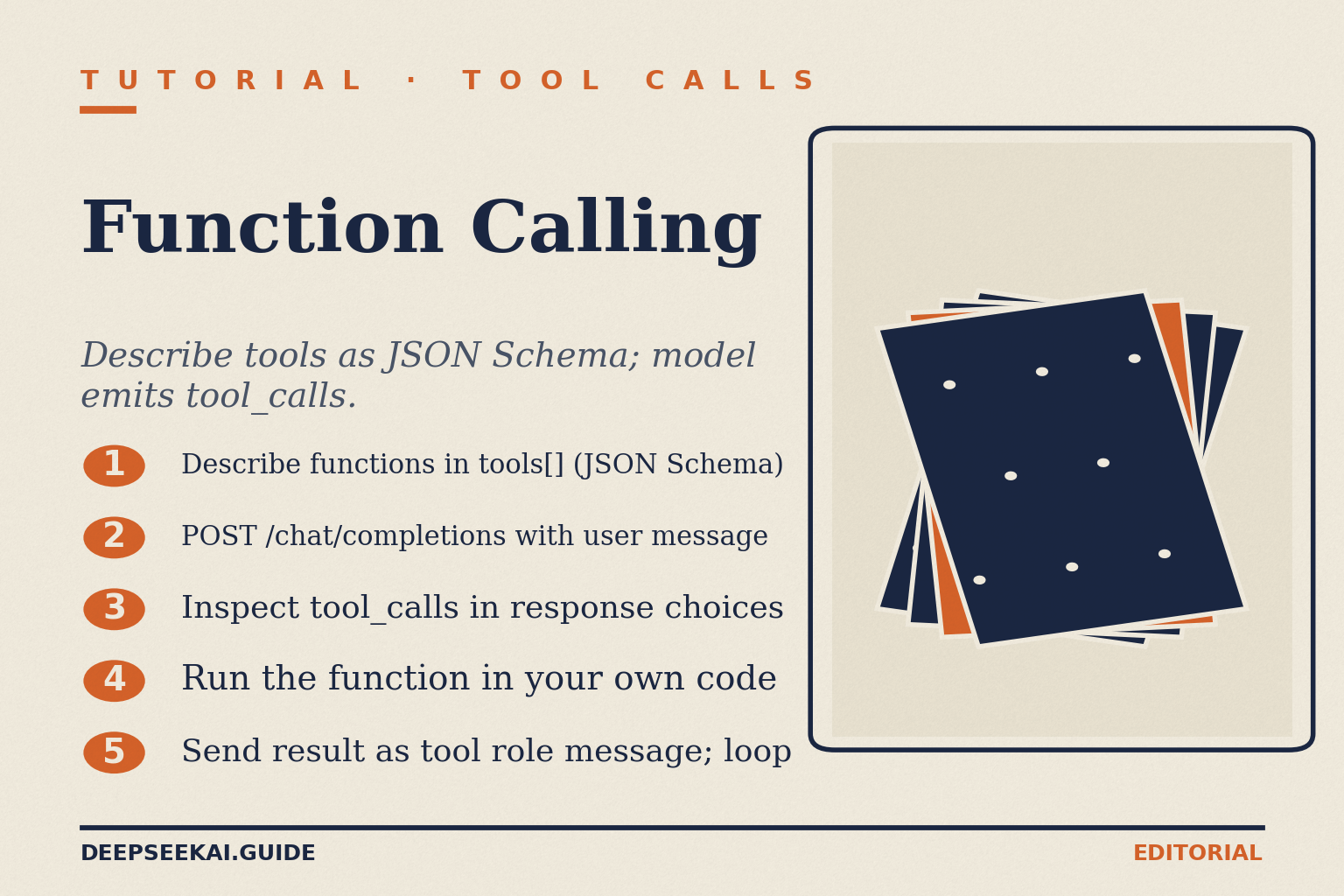

What DeepSeek API function calling actually is

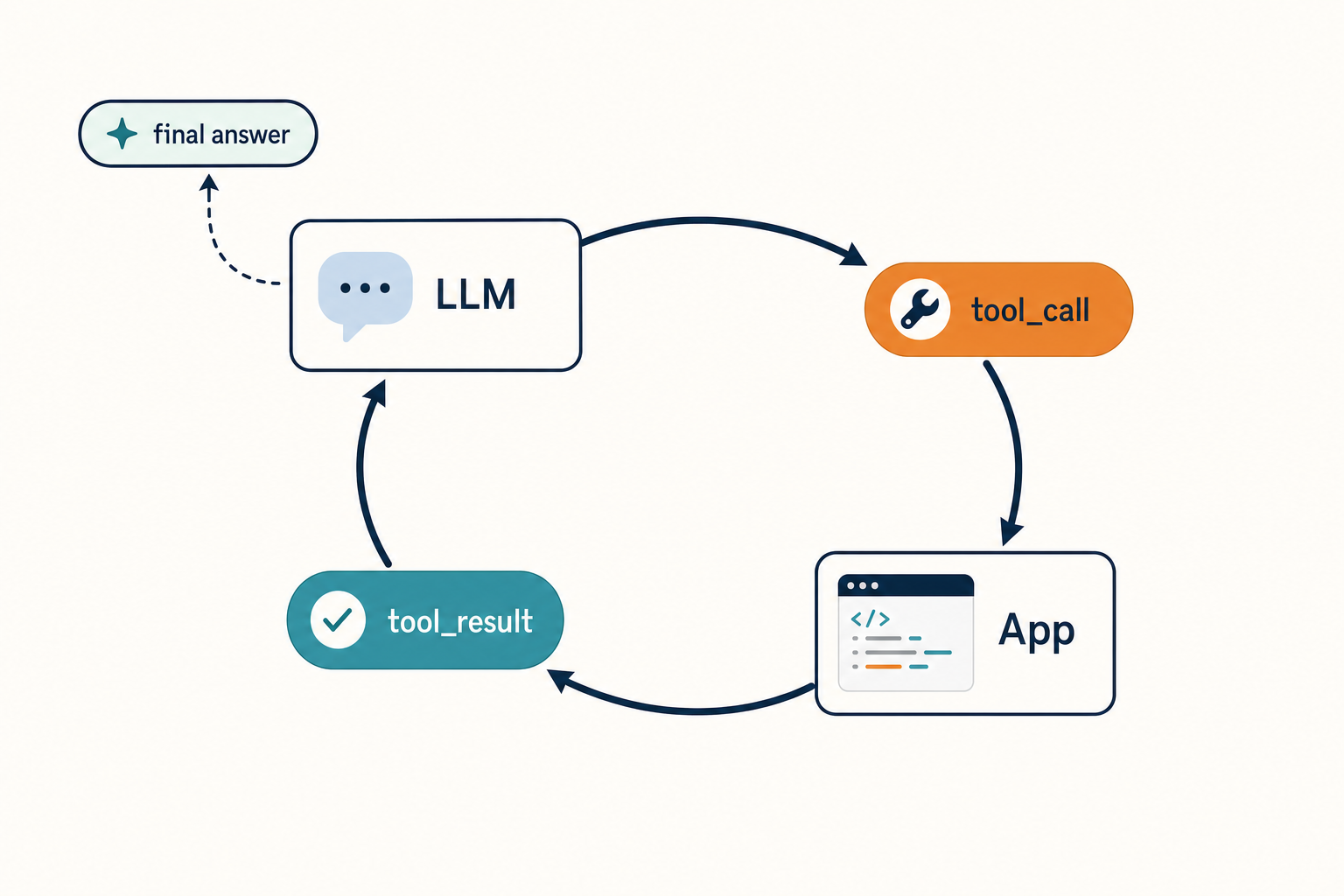

Function calling (DeepSeek’s docs use “Tool Calls” and “Function Calling” interchangeably) is a contract: you describe one or more functions in JSON Schema, send the user message, and the model decides whether to answer directly or return a structured tool-call request. The model only generates the request; your application still has to execute the function and send the result back. Your backend is the execution layer, and the model never touches your APIs or databases on its own.

That separation matters. The model proposes; your code disposes. If you forget to send the tool’s output back into the next request, the conversation stalls.

V4 model IDs and where function calling fits

The current generation is DeepSeek V4 (Preview), released April 24, 2026. It ships as two open-weight Mixture-of-Experts models with the same feature set: deepseek-v4-pro (1.6T total / 49B active) and deepseek-v4-flash (284B / 13B active). Both expose tool calling on the OpenAI-compatible POST /chat/completions endpoint at https://api.deepseek.com. The DeepSeek API uses an API format compatible with OpenAI/Anthropic; by modifying the configuration, you can use the OpenAI/Anthropic SDK or software compatible with the OpenAI/Anthropic API to access the DeepSeek API.

Legacy IDs still work, briefly. The model names deepseek-chat and deepseek-reasoner will be deprecated on 2026/07/24; for compatibility, they correspond to the non-thinking mode and thinking mode of deepseek-v4-flash, respectively. If you maintain an older integration, the migration is a one-line model= swap — base URL unchanged.

| Model ID | Tier | Tool calling | Thinking-mode tools | Strict mode |
|---|---|---|---|---|
| deepseek-v4-pro | Frontier | Yes | Yes | Yes (Beta) |
| deepseek-v4-flash | Cost-efficient | Yes | Yes | Yes (Beta) |
| deepseek-chat (legacy) | Routes to V4-Flash | Yes | No (non-thinking) | Yes |
| deepseek-reasoner (legacy) | Routes to V4-Flash | Yes | Yes | Yes |

Retirement for the two legacy IDs: 2026-07-24 15:59 UTC. After that, requests using them will fail. Plan accordingly. For a deeper map of every model, see the DeepSeek V4 overview and the DeepSeek V4-Flash tier page.
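The legacy mapping and retirement date above can be captured in a small lookup so that older call sites keep working while you migrate. This is a minimal sketch: `resolve_model` and `legacy_id_retired` are hypothetical helper names, and the mapping simply restates what this guide describes rather than anything the API returns.

```python
from datetime import datetime, timezone

# Retirement timestamp quoted in this guide for the two legacy IDs.
LEGACY_RETIREMENT = datetime(2026, 7, 24, 15, 59, tzinfo=timezone.utc)

# Legacy ID -> (V4 model ID, thinking enabled), per the mapping described above.
LEGACY_MODEL_MAP = {
    "deepseek-chat": ("deepseek-v4-flash", False),
    "deepseek-reasoner": ("deepseek-v4-flash", True),
}


def resolve_model(model_id):
    """Return (v4_model_id, thinking_enabled); thinking is None for non-legacy IDs."""
    if model_id in LEGACY_MODEL_MAP:
        return LEGACY_MODEL_MAP[model_id]
    return model_id, None


def legacy_id_retired(now=None):
    """True once the legacy IDs have passed the retirement timestamp."""
    now = now or datetime.now(timezone.utc)
    return now >= LEGACY_RETIREMENT
```

Calling `resolve_model("deepseek-reasoner")` yields the Flash ID plus a flag telling you to enable thinking explicitly on the new request.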

Quickstart: a minimal tool call

The wire format matches OpenAI’s exactly. Define tools in the tools array, send the request, inspect message.tool_calls, run the function in your code, then send the result back as a tool role message. Here is the canonical Python flow, which routes to deepseek-v4-pro in non-thinking mode:

    from openai import OpenAI

    client = OpenAI(
        api_key="<your api key>",
        base_url="https://api.deepseek.com",
    )

    # One function descriptor: name, description, JSON Schema parameters.
    tools = [
        {
            "type": "function",
            "function": {
                "name": "get_weather",
                "description": "Get weather of a location, the user should supply a location first.",
                "parameters": {
                    "type": "object",
                    "properties": {
                        "location": {
                            "type": "string",
                            "description": "The city and state, e.g. San Francisco, CA",
                        }
                    },
                    "required": ["location"],
                },
            },
        }
    ]

    def send(messages):
        resp = client.chat.completions.create(
            model="deepseek-v4-pro",
            messages=messages,
            tools=tools,
        )
        return resp.choices[0].message

    messages = [{"role": "user", "content": "How's the weather in Hangzhou?"}]

    # First request: the model answers with a tool call instead of text.
    msg = send(messages)
    tool = msg.tool_calls[0]

    # Append the assistant turn, then the tool result (hard-coded here).
    messages.append(msg)
    messages.append({"role": "tool", "tool_call_id": tool.id, "content": "24°C"})

    # Second request: the model turns the tool result into a reply.
    final = send(messages)
    print(final.content)

The execution flow: the user asks about the weather in Hangzhou, the model returns the function call get_weather({location: 'Hangzhou'}), your code calls the function and provides the result to the model, and the model replies in natural language: “The current temperature in Hangzhou is 24°C.” If you have not picked up an API key yet, the get a DeepSeek API key walkthrough takes a couple of minutes; the broader OpenAI-shaped flow is covered in DeepSeek OpenAI SDK compatibility.

The request and response shape

Three request fields drive the behaviour:

- `tools`: an array of function descriptors. Each has `type: "function"` and a `function` object with `name`, `description`, and a JSON Schema `parameters` block.
- `tool_choice`: controls invocation. `"none"` means the model will not call any tool and instead generates a message; `"auto"` means the model can pick between generating a message or calling one or more tools; `"required"` means the model must call one or more tools; specifying a particular tool via `{"type": "function", "function": {"name": "my_function"}}` forces the model to call that tool.
- `strict`: set inside a function descriptor to enforce the schema.
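The four `tool_choice` forms listed above are easy to mistype, so it can help to build them in one place. A minimal sketch, where `build_tool_choice` is a hypothetical helper name and not part of any SDK:

```python
def build_tool_choice(mode="auto", tool_name=None):
    """Return a value suitable for the tool_choice request field."""
    if tool_name is not None:
        # Force one specific tool, using the object form described above.
        return {"type": "function", "function": {"name": tool_name}}
    if mode not in {"none", "auto", "required"}:
        raise ValueError(f"unsupported tool_choice mode: {mode!r}")
    return mode
```

Pass the result straight through, for example `tool_choice=build_tool_choice(tool_name="get_weather")`.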

On the response side, when the model wants to invoke a tool it returns finish_reason: "tool_calls" and a tool_calls array on the assistant message. The arguments to call the function are returned as generated by the model in JSON format; note that the model does not always generate valid JSON, and may hallucinate parameters not defined by your function schema — validate the arguments in your code before calling your function. Always wrap json.loads(tool.function.arguments) in a try/except.
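One way to act on that advice is to parse and check the arguments in a single step before any function runs. The sketch below assumes a flat `parameters` schema with `properties` and `required` keys; `parse_tool_arguments` is a hypothetical helper, and it only checks key names, not value types.

```python
import json


def parse_tool_arguments(raw_arguments, parameters_schema):
    """Parse model-generated arguments and check key names against the schema.

    Returns (arguments, error); exactly one of the two is None.
    """
    try:
        arguments = json.loads(raw_arguments)
    except (json.JSONDecodeError, TypeError) as exc:
        return None, f"invalid JSON arguments: {exc}"
    if not isinstance(arguments, dict):
        return None, "arguments must be a JSON object"

    allowed = set(parameters_schema.get("properties", {}))
    required = set(parameters_schema.get("required", []))
    unknown = sorted(set(arguments) - allowed)
    missing = sorted(required - set(arguments))
    if unknown:
        return None, f"unknown parameters: {unknown}"
    if missing:
        return None, f"missing required parameters: {missing}"
    return arguments, None
```

Returning the error as a string rather than raising makes it simple to hand the message back to the model as a tool result.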

Strict mode: enforcing the schema

Strict mode is a Beta feature that closes the validation loop on the server. In strict mode, the model strictly adheres to the format requirements of the Function’s JSON schema when outputting a tool call, ensuring that the model’s output complies with the user’s definition; it is supported by both thinking and non-thinking mode. The server will validate the JSON Schema of the Function provided by the user.

To enable it, add "strict": true to the function definition and lock the schema down — usually that means setting additionalProperties: false:

    {
      "type": "function",
      "function": {
        "name": "get_weather",
        "strict": true,
        "description": "Get weather of a location.",
        "parameters": {
          "type": "object",
          "properties": {
            "location": {"type": "string"}
          },
          "required": ["location"],
          "additionalProperties": false
        }
      }
    }

If your schema uses types DeepSeek’s strict validator does not support, the request errors out before the model runs. That is useful, because it surfaces schema bugs at deploy time rather than mid-conversation. Pair this with DeepSeek API JSON mode when you need plain JSON output without a tool call.
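A local lint can catch loose schemas before the request ever leaves your machine. The sketch below is an assumption about what "locked down" means (every object sets `additionalProperties` to false and lists all of its properties as required); it does not reproduce DeepSeek's server-side validator, and `strict_schema_problems` is a hypothetical helper name.

```python
def strict_schema_problems(schema, path="parameters"):
    """List places where an object schema is not locked down.

    Assumed rules (not taken from the server): every object sets
    additionalProperties to False and marks all its properties as required.
    """
    problems = []
    if schema.get("type") == "object":
        properties = schema.get("properties", {})
        if schema.get("additionalProperties") is not False:
            problems.append(f"{path}: additionalProperties must be false")
        not_required = sorted(set(properties) - set(schema.get("required", [])))
        if not_required:
            problems.append(f"{path}: not listed in required: {not_required}")
        # Recurse into nested object properties.
        for name, sub_schema in properties.items():
            problems.extend(strict_schema_problems(sub_schema, f"{path}.{name}"))
    elif schema.get("type") == "array" and isinstance(schema.get("items"), dict):
        problems.extend(strict_schema_problems(schema["items"], f"{path}[]"))
    return problems
```

Running this in a unit test over every registered tool gives an early warning, though the server's own validation remains the final word.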

Function calling in thinking mode

Thinking mode used to be a separate model. On V4 it is a request parameter: pass reasoning_effort="high" (or "max") plus extra_body={"thinking": {"type": "enabled"}} to either V4 model. The API has supported tool use in thinking mode since DeepSeek-V3.2: before outputting the final answer, the model can perform multiple turns of reasoning and tool calls to improve the quality of the response.
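The two thinking-mode settings named above can be bundled into one set of keyword arguments. A minimal sketch, assuming the parameter names and values are exactly as this guide states them; `thinking_request_kwargs` is a hypothetical helper, and the result is meant to be unpacked into the OpenAI SDK's `client.chat.completions.create(...)`.

```python
def thinking_request_kwargs(model, messages, tools, effort="high"):
    """Build keyword arguments for a thinking-mode chat completion request."""
    if effort not in {"high", "max"}:
        raise ValueError("effort must be 'high' or 'max'")
    return {
        "model": model,
        "messages": messages,
        "tools": tools,
        # Values as described in this guide; verify against the official docs.
        "reasoning_effort": effort,
        "extra_body": {"thinking": {"type": "enabled"}},
    }
```

Usage would look like `client.chat.completions.create(**thinking_request_kwargs("deepseek-v4-flash", messages, tools))`.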

One non-obvious rule trips up most first integrations: unlike turns in thinking mode that do not involve tool calls, for turns that do perform tool calls, the reasoning_content must be fully passed back to the API in all subsequent requests; if your code does not correctly pass back reasoning_content, the API will return a 400 error.

That means the assistant message you append back into messages after a thinking-mode tool call must include reasoning_content alongside the final content. The pattern looks like this:

    # Echo the full assistant turn, reasoning trace included, into history.
    messages.append({
        "role": "assistant",
        "content": resp.choices[0].message.content,
        "reasoning_content": resp.choices[0].message.reasoning_content,
        "tool_calls": resp.choices[0].message.tool_calls,
    })

One more constraint applies in thinking mode: the temperature, top_p, presence_penalty, and frequency_penalty parameters are not supported. For compatibility with existing software, setting them will not trigger an error, but it will have no effect. If you need temperature control (DeepSeek recommends 0.0 for code, 1.0 for data analysis, 1.3 for general chat, 1.5 for creative writing), stay in non-thinking mode. The DeepSeek prompt engineering guide goes deeper on temperature defaults.
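Because sampling parameters are silently ignored in thinking mode, it can be clearer to leave them out of the request altogether. The sketch below encodes the recommended temperatures quoted above; the task labels and the `sampling_kwargs` helper are hypothetical names chosen for illustration.

```python
# Temperatures as quoted in this guide; the dictionary keys are illustrative labels.
RECOMMENDED_TEMPERATURE = {
    "code": 0.0,
    "data_analysis": 1.0,
    "general_chat": 1.3,
    "creative_writing": 1.5,
}


def sampling_kwargs(task, thinking_enabled):
    """Return sampling parameters, or nothing when thinking mode would ignore them."""
    if thinking_enabled:
        return {}
    return {"temperature": RECOMMENDED_TEMPERATURE[task]}
```

Omitting the parameter makes it obvious in logs and code review that temperature is not in play for that request.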

Multi-tool, multi-turn loops

Real workflows chain tools. The model might call get_date, get the answer, then call get_weather with that date. Each round trip is a separate request because the API is stateless — DeepSeek does not remember prior turns; you resend the full messages array every time. The web chat keeps history for you; the API does not. The shape of a robust loop:

- Send messages plus tools.
- If `finish_reason == "tool_calls"`: parse arguments, run each function, append tool results, loop.
- If `finish_reason == "stop"`: return `content` to the user.
- Cap the loop at 5–10 iterations to prevent runaway calls.
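The loop shape above can be sketched as a single function. This is an illustration under stated assumptions, not a reference implementation: `create` is a hypothetical wrapper that takes the messages list and returns a chat-completions response (for example, a closure around `client.chat.completions.create` with the model and tools already bound), and `registry` is your own mapping from tool names to Python callables.

```python
import json


def run_tool_loop(create, messages, registry, max_iterations=8):
    """Drive the send / execute / resend cycle until the model stops calling tools."""
    for _ in range(max_iterations):
        choice = create(messages).choices[0]
        message = choice.message
        if choice.finish_reason != "tool_calls":
            return message.content  # "stop" or any other reason: return the text

        # Record the assistant turn; keep reasoning_content when it is present.
        assistant = {
            "role": "assistant",
            "content": message.content,
            "tool_calls": message.tool_calls,
        }
        reasoning = getattr(message, "reasoning_content", None)
        if reasoning is not None:
            assistant["reasoning_content"] = reasoning
        messages.append(assistant)

        # Execute every requested tool and append one tool message per call.
        for call in message.tool_calls:
            try:
                arguments = json.loads(call.function.arguments)
                result = registry[call.function.name](**arguments)
            except Exception as exc:  # report failures to the model instead of crashing
                result = f"error: {exc!r}"
            messages.append(
                {"role": "tool", "tool_call_id": call.id, "content": str(result)}
            )
    raise RuntimeError("tool loop exceeded max_iterations")
```

Passing `create` in as a callable keeps the loop independent of any particular client, which also makes it easy to exercise with canned responses in tests.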

For a worked end-to-end loop with retries and logging, see DeepSeek API code examples; for a heavier orchestration story, the DeepSeek with LangChain tutorial wires the same pattern through LangChain’s tool nodes.

Error handling patterns

Three failure modes show up in production:

- Invalid JSON arguments. Wrap `json.loads` on `tool.function.arguments`. On failure, send a `tool` message with an error string and let the model retry.
- Hallucinated parameters or unknown tool names. Validate `tool.function.name` against your registry before dispatching.
- 400 in thinking mode after a tool call. Almost always a missing `reasoning_content` on the appended assistant message.
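The first two failure modes can be handled in one dispatch function that always produces a `tool` message, so the model sees the error and can retry. A minimal sketch: `dispatch_tool_call` and `registry` are hypothetical names, and the error strings are illustrative rather than anything the API prescribes.

```python
import json


def dispatch_tool_call(tool_call, registry):
    """Run one tool call and always return a role="tool" message."""
    name = tool_call.function.name
    if name not in registry:
        content = f"error: unknown tool {name!r}"
    else:
        try:
            arguments = json.loads(tool_call.function.arguments)
            content = str(registry[name](**arguments))
        except json.JSONDecodeError as exc:
            content = f"error: arguments were not valid JSON ({exc.msg})"
        except TypeError as exc:
            # Typically raised for hallucinated, missing, or non-object arguments.
            content = f"error: bad arguments ({exc})"
    return {"role": "tool", "tool_call_id": tool_call.id, "content": content}
```

Exceptions raised inside the tool itself are deliberately left uncaught here, so genuine bugs in your own code stay visible.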

Other status codes follow the standard pattern documented in DeepSeek API error codes. Rate limits — relevant when looping tools at scale — are covered in the DeepSeek API rate limits reference.

Costing a tool-calling workload

Tool calls multiply requests because each tool round trip is a fresh POST /chat/completions. Cost the workload across three token buckets: cached input, uncached input, output. Skipping the uncached line is the most common arithmetic error.

Worked example, deepseek-v4-flash: an agent makes 1,000,000 calls. Each call has a 2,000-token system+tool-schema prefix (cached after the first hit), a 200-token user/tool message (uncached miss against that prefix), and a 300-token response.

- Cached input: 2,000 × 1,000,000 = 2.0B tokens × $0.0028/M = $5.60

- Uncached input: 200 × 1,000,000 = 200M tokens × $0.14/M = $28.00

- Output: 300 × 1,000,000 = 300M tokens × $0.28/M = $84.00

- Total: $117.60

The same workload on deepseek-v4-pro at the 75% promotional discount running through 2026-05-31 costs $7.25 + $87.00 + $261.00 = $355.25 (list rates: $29.00 + $348.00 + $1,044.00 = $1,421.00), roughly 3× Flash during the promo and 12× at list. Use Pro when the benchmark uplift on coding or agentic work justifies the spend; default to Flash otherwise.

Thinking mode is the biggest cost lever. Non-thinking skips the reasoning trace entirely and returns tokens at roughly V3.2 speed. Thinking adds a reasoning block that costs extra tokens but improves accuracy on code and math. Maximum-effort thinking produces the scores in DeepSeek’s headline table, burns the most tokens, and is the only mode that requires a 384K+ context budget.

Pricing is current as of April 2026; verify on the DeepSeek API pricing page before committing budget. The DeepSeek context caching doc explains how the cache-hit rate gets applied automatically.
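The three-bucket arithmetic in this section can be reproduced with a few lines of code. In the sketch below the Flash rates are the ones quoted in the worked example; the Pro rates are not quoted directly in this guide and are back-derived from its totals, so treat them as assumptions. `workload_cost` is a hypothetical helper name.

```python
# USD per one million tokens. Flash rates come from the worked example;
# the Pro rates are back-derived from this guide's totals (an assumption).
RATES = {
    "deepseek-v4-flash": {"cached": 0.0028, "uncached": 0.14, "output": 0.28},
    "deepseek-v4-pro (promo)": {"cached": 0.003625, "uncached": 0.435, "output": 0.87},
    "deepseek-v4-pro (list)": {"cached": 0.0145, "uncached": 1.74, "output": 3.48},
}


def workload_cost(rates, calls, cached_tokens, uncached_tokens, output_tokens):
    """Total USD cost for `calls` identical requests, rounded to cents."""
    per_call = (
        cached_tokens * rates["cached"]
        + uncached_tokens * rates["uncached"]
        + output_tokens * rates["output"]
    )
    return round(calls * per_call / 1_000_000, 2)


for name, rates in RATES.items():
    print(name, workload_cost(rates, 1_000_000, 2_000, 200, 300))
```

Swapping in your own token counts per call is usually more informative than the headline per-million rates, because the cached share of the prompt dominates the input side.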

Streaming tool calls

Set stream=True in the Python SDK ("stream": true in the raw JSON body) and the SDK yields delta chunks. Tool call arguments arrive piece by piece as delta.tool_calls[*].function.arguments; accumulate them into a string and parse once the stream finishes that tool call. Reasoning traces stream separately when thinking mode is on: the delta.reasoning_content field carries them, and you can surface them in the UI or drop them. The DeepSeek API streaming reference has a complete delta accumulator.
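A compact accumulator for those deltas might look like the following. It is a sketch that assumes the OpenAI-style chunk layout (`chunk.choices[0].delta`, with tool-call fragments keyed by `index`); `accumulate_stream` is a hypothetical helper name, and the argument strings it returns still need to be parsed and validated.

```python
def accumulate_stream(chunks):
    """Collect streamed deltas into text, reasoning, and complete tool calls."""
    content, reasoning = [], []
    tool_calls = {}  # index -> {"id", "name", "arguments"}
    for chunk in chunks:
        if not chunk.choices:
            continue  # skip chunks that carry no choices
        delta = chunk.choices[0].delta
        if getattr(delta, "content", None):
            content.append(delta.content)
        if getattr(delta, "reasoning_content", None):
            reasoning.append(delta.reasoning_content)
        for piece in getattr(delta, "tool_calls", None) or []:
            slot = tool_calls.setdefault(
                piece.index, {"id": None, "name": "", "arguments": ""}
            )
            if piece.id:
                slot["id"] = piece.id
            if piece.function and piece.function.name:
                slot["name"] += piece.function.name
            if piece.function and piece.function.arguments:
                slot["arguments"] += piece.function.arguments
    ordered = [tool_calls[i] for i in sorted(tool_calls)]
    return "".join(content), "".join(reasoning), ordered
```

Keying fragments by `index` matters when the model requests several tools in one turn, since their argument pieces can interleave.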

Anthropic-compatible alternative

DeepSeek also exposes an Anthropic-compatible surface against the same base URL — useful if your codebase already speaks Anthropic. Tool calling there uses Anthropic’s input_schema shape rather than OpenAI’s parameters. Pick one surface per service and stay consistent; the OpenAI shape is more widely supported in the ecosystem.
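If you maintain tool definitions in the OpenAI shape and need the Anthropic shape as well, the conversion is mostly a rename. The sketch below maps `parameters` to `input_schema` as described above; `to_anthropic_tool` is a hypothetical helper, and how DeepSeek's Anthropic-compatible surface treats extra fields such as `strict` is not covered here.

```python
def to_anthropic_tool(openai_tool):
    """Convert an OpenAI-style tool descriptor to the Anthropic tool shape."""
    function = openai_tool["function"]
    return {
        "name": function["name"],
        "description": function.get("description", ""),
        # Same JSON Schema, different key name.
        "input_schema": function["parameters"],
    }
```

Keeping one canonical definition and deriving the other avoids the two shapes drifting apart.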

When to reach for tool calling

Tool calling earns its keep when the answer depends on data the model cannot have: live prices, your customer’s order, today’s calendar, a calculation that must be exact. It is overkill when you only need structured output, where JSON mode is lighter. A single non-thinking tool call is also not enough when you need genuine agency across many steps with planning; that is where thinking-mode tool calls plus a constrained tool registry pay off.

For broader API patterns and conventions, the DeepSeek API docs and guides hub indexes everything from authentication to webhooks.

DeepSeek AI Guide is an independent resource and is not affiliated with DeepSeek or its parent company. Model IDs, pricing and API behaviour change; check the official DeepSeek documentation and pricing page before committing to a production decision.

How does DeepSeek API function calling work?

You send a tools array describing your functions in JSON Schema. The model decides whether to answer directly or return a tool_calls array with a function name and JSON arguments. Your code executes the function, appends the result as a role: "tool" message, and re-sends the full conversation. The API is stateless, so history must come on every request. See the DeepSeek API documentation for the full schema.

What is strict mode in DeepSeek tool calls?

Strict mode is a Beta flag that forces the model’s tool-call output to conform exactly to your function’s JSON Schema. Set "strict": true in the function definition and the server validates the schema upfront. It is supported by both thinking and non-thinking mode. Use it whenever malformed arguments would break downstream code. The DeepSeek API best practices guide covers schema design.

Can DeepSeek call tools in thinking mode?

Yes, on both V4 models. Set reasoning_effort="high" with extra_body={"thinking": {"type": "enabled"}}. The critical rule: when a thinking turn produces a tool call, you must echo reasoning_content back on the assistant message in the next request, or the API returns a 400 error. Non-tool thinking turns drop the trace silently. Read the V4 thinking-mode walkthrough in the DeepSeek V4-Pro page.

Why is my function calling returning empty tool_calls?

Three usual causes: tool_choice is implicitly "auto" and the model decided text was enough — set "required" to force a call; the function description is too vague for the model to map intent to schema; or you are on a deprecated route. Check the DeepSeek API error codes reference for status-code patterns.

Does function calling work with the OpenAI SDK?

Yes. Set base_url="https://api.deepseek.com", supply your DeepSeek API key, and the official OpenAI Python or Node SDK works against DeepSeek with no other code changes. Existing OpenAI-compatible wrappers, including LangChain, LlamaIndex, and DSPy, work the same way. Full compatibility notes live in the DeepSeek OpenAI SDK compatibility guide.

Sources & Methodology

Primary sources

- Official: DeepSeek API documentation. Endpoints, request/response shape, model IDs. Last checked: April 30, 2026

- Pricing: DeepSeek API pricing page. Per-1M-token rates, billing units, cache-hit pricing. Last checked: April 30, 2026

- Official: DeepSeek API tool-calling documentation. Tools-array schema, tool_calls response shape, strict-mode Beta. Last checked: April 30, 2026

Technical references

- Technical report: DeepSeek-V3 technical report (arXiv:2412.19437). V3 architecture and training methodology. Last checked: April 30, 2026

Methodology

Pricing figures were checked against the official DeepSeek pricing page and normalised into estimated monthly costs using sample token usage scenarios. Actual costs may vary depending on caching, input/output ratio, regional availability, and provider updates.

Data confidence

High — figures checked directly against the official pricing page on the review date.

Editorial note

DeepSeek occasionally runs promotional discounts that are not always reflected in the headline page. The pricing dataset on this site auto-refreshes monthly; this article reflects the dataset on the date shown above.