Understanding DeepSeek API Rate Limits in Production

You wired up `deepseek-v4-flash`, the smoke test passed, and the first real batch fell over with a wall of HTTP 429s. That is the moment most teams discover that DeepSeek API rate limits are not a fixed table of numbers — they are a dynamic concurrency control that flexes with server load and with how aggressively your account is bursting. There is no published “10 RPM, 200K TPM” tier card. The only documented behaviour is that the API throttles when it has to and signals that with a 429, sometimes after long keep-alive waits.

This guide walks through what DeepSeek actually documents, what happens at the wire level, and the production patterns that keep V4 traffic flowing.

What DeepSeek actually documents about rate limits

DeepSeek’s official rate-limit page is unusually short, and that brevity is the point. According to the DeepSeek API documentation, the API dynamically limits user concurrency based on server load, returns an HTTP 429 the moment you reach that limit, and may take some time to respond after a request is accepted. There is no published per-minute request quota, no token-per-minute table, and no tiered plan you can buy your way past.

The FAQ closes the loop on the obvious next question. DeepSeek does not currently support raising the dynamic rate limit on individual accounts, and there is a unified pricing standard with no tiered plans. In practice that means two things for anyone running DeepSeek V4 in production:

- The cap moves. The effective ceiling depends on global traffic at the moment your request lands. Quiet hours behave very differently from peak hours.

- You cannot pay to skip the queue. Spend more, and the same dynamic logic still applies. Engineering around the limit is the only lever you control.

This is a deliberate trade-off. As Simon Willison noted when the policy first surfaced in January 2025, a flexible cap lets teams fire hundreds of parallel requests through quiet periods, which is increasingly a competitive differentiator for large-scale data work. The flip side is that you have no firm ceiling to plan against.

The current API surface: V4, legacy IDs, and where 429s come from

Rate limits apply across the whole DeepSeek V4 generation, which was released on April 24, 2026. V4 ships as two open-weight Mixture-of-Experts models, both addressed through the same OpenAI-compatible endpoint:

- deepseek-v4-pro: frontier tier, 1.6T total / 49B active parameters.
- deepseek-v4-flash: cost-efficient tier, 284B total / 13B active parameters.

Thinking mode is a request parameter on either model, not a separate model ID. Set reasoning_effort="high" with extra_body={"thinking": {"type": "enabled"}}, or reasoning_effort="max" for maximum-effort reasoning. The response then returns reasoning_content alongside the final content. Thinking is enabled by default; pass extra_body={"thinking": {"type": "disabled"}} for non-thinking.
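The request shapes described above can be wrapped in a small helper so thinking and non-thinking calls stay consistent across a codebase. This is a minimal sketch that assumes the parameters exactly as this section names them (reasoning_effort, an extra_body thinking object, and a reasoning_content field on the returned message). The helper names are hypothetical, and the SimpleNamespace object only stands in for a real SDK message so the logic can be checked offline.

```python
from types import SimpleNamespace


def chat_kwargs(model, messages, thinking=True, effort="high"):
    """Build keyword arguments for client.chat.completions.create().

    Hypothetical helper. Thinking is toggled through extra_body, and
    reasoning_effort ("high" or "max") is sent only when thinking is on.
    """
    if effort not in ("high", "max"):
        raise ValueError("effort must be 'high' or 'max'")
    kwargs = {"model": model, "messages": messages}
    if thinking:
        kwargs["reasoning_effort"] = effort
        kwargs["extra_body"] = {"thinking": {"type": "enabled"}}
    else:
        # Thinking is on by default, so non-thinking must be requested explicitly.
        kwargs["extra_body"] = {"thinking": {"type": "disabled"}}
    return kwargs


def split_reasoning(message):
    """Return (reasoning_content, content); reasoning is None when absent."""
    return getattr(message, "reasoning_content", None), message.content


# Offline check: SimpleNamespace stands in for resp.choices[0].message.
fake_message = SimpleNamespace(reasoning_content="step 1...", content="42")
print(split_reasoning(fake_message))  # ('step 1...', '42')
```

With a real client you would call `client.chat.completions.create(**chat_kwargs("deepseek-v4-pro", msgs, effort="max"))` and pass `resp.choices[0].message` to `split_reasoning`. Static type checkers may flag "max" because the OpenAI SDK's type hints list OpenAI's own effort levels.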

If you maintain older integrations: the legacy deepseek-chat and deepseek-reasoner IDs still work, currently routing to deepseek-v4-flash in non-thinking and thinking modes respectively (these are the only legacy IDs in the retirement notice; deepseek-coder is not a current hosted endpoint). They are fully retired on 2026-07-24 at 15:59 UTC. After that date, requests using those IDs will fail. Migrating is a one-line model= swap; base_url does not change. For the wider migration playbook, see our DeepSeek API documentation overview.
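To make the swap preserve behaviour exactly, you also have to pin the thinking mode, because V4 enables thinking by default. Here is a minimal sketch that assumes the legacy routing described in this section (deepseek-chat to V4-Flash non-thinking, deepseek-reasoner to V4-Flash thinking). The mapping and helper names are hypothetical.

```python
# Legacy ID -> (V4 model, thinking mode), per the routing described above.
LEGACY_MODEL_MAP = {
    "deepseek-chat": ("deepseek-v4-flash", "disabled"),
    "deepseek-reasoner": ("deepseek-v4-flash", "enabled"),
}


def migrate_request(kwargs):
    """Return a copy of create() kwargs with a legacy model ID swapped for V4.

    Hypothetical helper. The thinking mode is pinned explicitly so the call
    behaves like the legacy ID did; base_url is not touched.
    """
    model = kwargs.get("model")
    if model not in LEGACY_MODEL_MAP:
        return dict(kwargs)  # already a V4 ID (or unknown): leave as-is
    new_model, mode = LEGACY_MODEL_MAP[model]
    migrated = dict(kwargs, model=new_model)
    extra = dict(migrated.get("extra_body") or {})
    extra["thinking"] = {"type": mode}
    migrated["extra_body"] = extra
    return migrated
```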

Quickstart: a request that respects the dynamic limit

Chat requests hit POST /chat/completions, the OpenAI-compatible endpoint. The minimum viable Python call uses the OpenAI SDK with DeepSeek’s base URL:

```python
from openai import OpenAI

client = OpenAI(
    base_url="https://api.deepseek.com",
    api_key="YOUR_DEEPSEEK_KEY",
)

resp = client.chat.completions.create(
    model="deepseek-v4-flash",
    messages=[
        {"role": "system", "content": "You are a precise assistant."},
        {"role": "user", "content": "Summarise this changelog in 3 bullets."},
    ],
    max_tokens=512,
    temperature=1.3,
    timeout=120,  # generous read timeout; see keep-alive notes below
)

print(resp.choices[0].message.content)
```

The equivalent curl call follows the same pattern: POST to https://api.deepseek.com/chat/completions with a Bearer token. DeepSeek also publishes an Anthropic-compatible surface under the same host (https://api.deepseek.com/anthropic), so the Anthropic SDK works by swapping base_url and api_key. Either way, the rate-limit behaviour is identical. If you are still wiring up keys, the guide on how to get a DeepSeek API key walks through the console.
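For clients that call the endpoint without an SDK, the curl-equivalent request can be sketched with the standard library. This assumes the JSON body mirrors the quickstart's create() arguments, and the function names are hypothetical. Error handling is omitted: urlopen raises urllib.error.HTTPError for a 429, so a backoff loop around this code would catch that instead of the SDK's RateLimitError.

```python
import json
import urllib.request

API_URL = "https://api.deepseek.com/chat/completions"


def build_chat_request(api_key, payload):
    """Build the same POST a curl call would send: JSON body plus Bearer token."""
    return urllib.request.Request(
        API_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def parse_chat_body(raw):
    """Decode a non-streaming response body.

    Blank keep-alive lines sent while the request was queued arrive ahead of
    the JSON object; strip() discards them before parsing.
    """
    return json.loads(raw.decode("utf-8").strip())


def post_chat(api_key, payload, timeout=120):
    # urlopen's timeout applies to each blocking socket operation, not to the
    # whole request, so keep-alive lines keep resetting it while queued.
    req = build_chat_request(api_key, payload)
    with urllib.request.urlopen(req, timeout=timeout) as resp:
        return parse_chat_body(resp.read())
```

`post_chat(key, {"model": "deepseek-v4-flash", "messages": msgs})` returns the decoded JSON, with the reply text at `["choices"][0]["message"]["content"]`.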

What “dynamic concurrency” feels like at the wire level

The behaviour that catches most teams out is not the 429 itself — it is what happens before the 429. While your request is waiting to be scheduled, DeepSeek keeps the TCP connection alive rather than dropping it. For non-streaming requests, the server returns empty lines while waiting for the request to be scheduled; for streaming requests it returns SSE keep-alive comments (: keep-alive), and clients parsing the HTTP response themselves must handle these empty lines or comments appropriately.
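If you parse the stream yourself, handling keep-alives comes down to skipping two kinds of line. This minimal sketch assumes OpenAI-style chunks, with one JSON object per `data:` line and a final `data: [DONE]`. It deliberately ignores multi-line data fields and other SSE features that a full parser would support.

```python
import json


def iter_sse_events(lines):
    """Yield decoded JSON payloads from an SSE stream, skipping keep-alives.

    `lines` is any iterable of decoded text lines. Blank lines and comment
    lines starting with ':' (such as ': keep-alive') carry no data and are
    ignored. The OpenAI-style '[DONE]' sentinel ends the stream.
    """
    for line in lines:
        line = line.rstrip("\r\n")
        if not line or line.startswith(":"):
            continue  # event separator or keep-alive comment
        if not line.startswith("data:"):
            continue  # other SSE fields (event:, id:) are not used here
        data = line[len("data:"):].strip()
        if data == "[DONE]":
            return
        yield json.loads(data)
```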

Two consequences worth designing for:

- Long-tail latencies are real. A request that “succeeds” might still have sat in the queue for minutes during peak hours. Set generous read timeouts and make sure your reverse proxy, serverless runtime, or API gateway does not cut the connection prematurely.

- If inference has not started after roughly 10 minutes, the server closes the connection. Treat this as the practical upper bound for a single in-flight request and design your retry logic accordingly.
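The roughly 10-minute close suggests one more client-side guard. Track how long a request has produced nothing but keep-alives, and give up on your own schedule. This sketch rests on a few assumptions. Lines arrive as decoded text, and the 540-second budget is a hypothetical value set a little under the server's cutoff. The check also only runs when a line arrives, so a completely silent socket still relies on the read timeout.

```python
import time


class QueueWaitExceeded(TimeoutError):
    """Raised when only keep-alives have arrived for longer than the budget."""


def first_payload_line(lines, budget_s=540.0, clock=time.monotonic):
    """Consume keep-alive lines until the first real line arrives.

    Returns (line, seconds_waited). Raises QueueWaitExceeded if only blank
    lines or ': keep-alive' comments have been seen for longer than budget_s.
    budget_s is a hypothetical default just under the ~10 minute server close.
    """
    start = clock()
    for line in lines:
        waited = clock() - start
        stripped = line.strip()
        if stripped and not stripped.startswith(":"):
            return line, waited  # inference has started
        if waited > budget_s:
            raise QueueWaitExceeded(f"still queued after {waited:.0f}s")
    raise ConnectionError("stream ended before any data arrived")
```

Logging `seconds_waited` at different times of day also gives you a direct measure of how long requests queue during peak hours.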

What triggers a 429

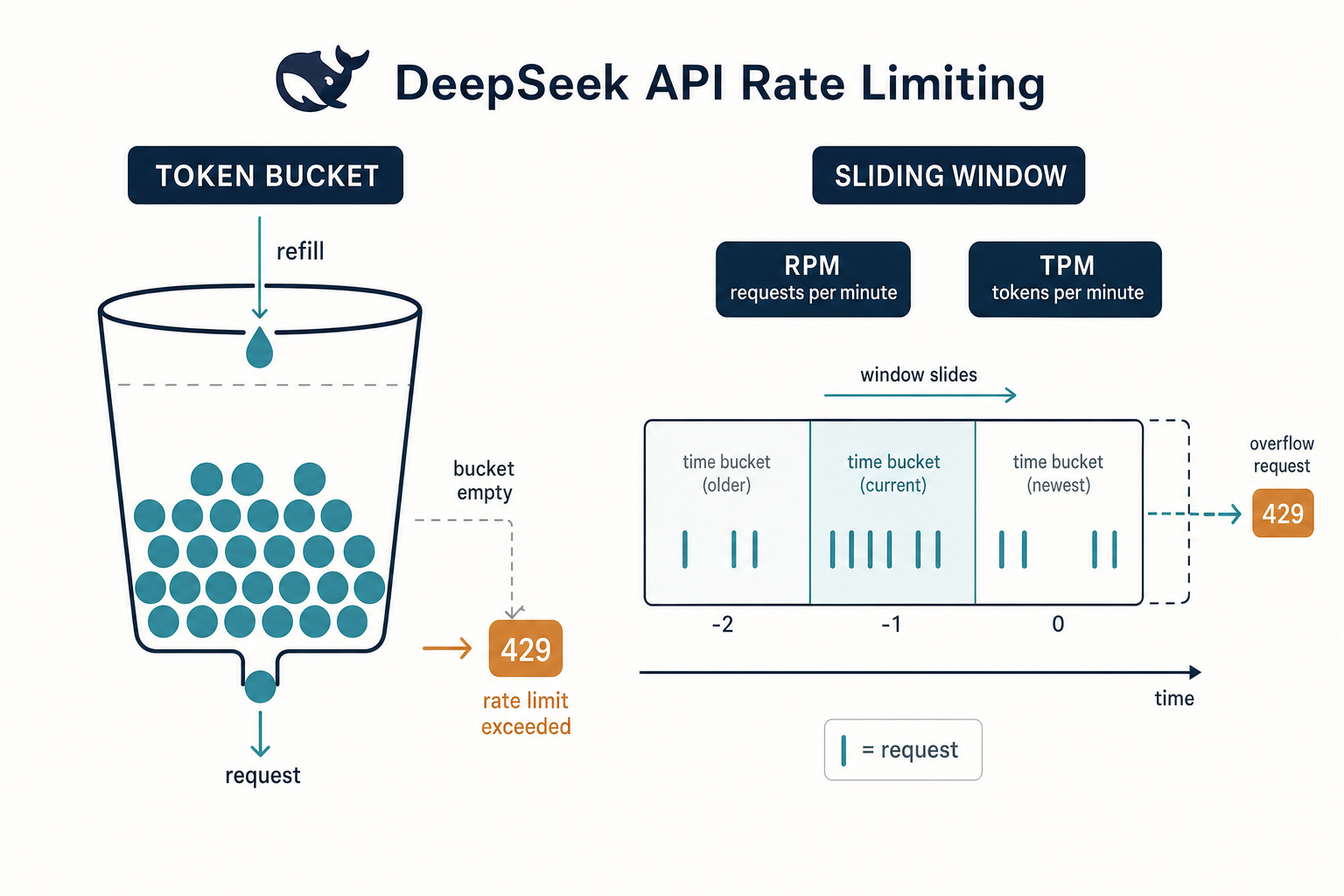

The official cause is concurrency, not raw request volume. Common triggers in practice include sending bursts of requests in short intervals, single-IP / single-key concentration, ignoring Retry-After hints, and concurrent in-flight requests exceeding what the dynamic limit allows at that moment. Third-party reports are consistent with the docs: DeepSeek does not impose a fixed strict cap, but if the service is under heavy load or one account makes an unusually high number of calls, the system may flag and throttle it, so sending too many requests at once still triggers 429s.
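When a 429 does carry a Retry-After header, honour it before falling back to your own backoff schedule. The docs quoted here do not say when DeepSeek sends the header, so treat its presence as an assumption. This sketch accepts any mapping with `.get()`, such as the case-insensitive headers on `e.response` for the OpenAI SDK's RateLimitError. The helper name is hypothetical.

```python
from datetime import datetime, timezone
from email.utils import parsedate_to_datetime


def retry_after_seconds(headers, default=None, now=None):
    """Return the wait in seconds requested by a Retry-After header, if any.

    Handles both forms HTTP allows: delay-seconds ("30") and an HTTP-date
    ("Wed, 21 Oct 2026 07:28:00 GMT"). Returns `default` when the header is
    missing or cannot be parsed.
    """
    value = headers.get("Retry-After")
    if value is None:
        return default
    value = value.strip()
    if value.isdigit():
        return float(value)
    try:
        when = parsedate_to_datetime(value)
    except (TypeError, ValueError):  # older Pythons raise TypeError
        return default
    if when.tzinfo is None:
        when = when.replace(tzinfo=timezone.utc)  # treat naive dates as UTC
    now = now or datetime.now(timezone.utc)
    return max(0.0, (when - now).total_seconds())
```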

A simple mental model: think of the limit as a token bucket whose refill rate the platform adjusts in real time. You will rarely hit it on a single sequential client. You will hit it the first time you fan out 200 parallel calls through a thread pool with no governor.
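You can also turn this mental model into a client-side pacer that smooths bursts before they leave your process. This is a sketch of a standard token bucket, not a model of DeepSeek's server. The rate and capacity values are yours to choose, and lowering `rate` after a 429 is one assumed way to adapt.

```python
import threading
import time


class TokenBucket:
    """Client-side pacer: lets bursts of up to `capacity` requests through,
    then releases further requests at `rate` per second."""

    def __init__(self, rate, capacity, clock=time.monotonic, sleep=time.sleep):
        if rate <= 0 or capacity < 1:
            raise ValueError("rate must be > 0 and capacity >= 1")
        self.rate = float(rate)
        self.capacity = float(capacity)
        self.tokens = float(capacity)  # start full so an initial burst passes
        self.clock = clock
        self.sleep = sleep
        self.updated = clock()
        self.lock = threading.Lock()

    def _refill(self):
        now = self.clock()
        elapsed = now - self.updated
        self.tokens = min(self.capacity, self.tokens + elapsed * self.rate)
        self.updated = now

    def acquire(self):
        """Block until one token is available, then consume it."""
        while True:
            with self.lock:
                self._refill()
                if self.tokens >= 1:
                    self.tokens -= 1
                    return
                wait = (1 - self.tokens) / self.rate  # time until next token
            self.sleep(wait)
```

Call `bucket.acquire()` before each request. A bucket with `rate=2` and `capacity=5` lets five requests through at once, then roughly two per second.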

Production patterns that survive 429s

Because there is no number to plan against, the only sustainable approach is to make your client well-behaved. Four patterns cover the vast majority of real workloads.

1. Exponential backoff with jitter

The canonical retry loop on a 429 is exponential backoff: wait, retry, double the wait on each subsequent failure, and add a small random offset (jitter) so synchronised clients do not retry in lockstep. A workable Python implementation:

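```python
import random
import time

from openai import InternalServerError, OpenAI, RateLimitError

# max_retries=0 disables the SDK's built-in retries so this loop is the only
# retry layer; otherwise the two compound.
client = OpenAI(base_url="https://api.deepseek.com", api_key="...", max_retries=0)


def call_with_backoff(messages, max_retries=6, base_delay=1.0, max_delay=60.0):
    delay = base_delay
    for attempt in range(max_retries):
        try:
            return client.chat.completions.create(
                model="deepseek-v4-flash",
                messages=messages,
                max_tokens=512,
            )
        except (RateLimitError, InternalServerError):  # 429 and 5xx
            if attempt == max_retries - 1:
                raise  # out of attempts: surface the error to the caller
            # Sleep for the current delay plus up to 50% random jitter.
            time.sleep(delay + random.uniform(0, delay * 0.5))
            delay = min(delay * 2, max_delay)  # double, but never past the cap
```

Cap the maximum delay (60–120 seconds is sensible; max_delay does that here) and the retry count. The pattern matches DeepSeek's own guidance on transient 429 and 5xx errors, and it mirrors the exponential-backoff retries built into most API client libraries.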

2. A bounded concurrency pool

Backoff alone is reactive. The proactive companion is a semaphore that caps how many requests you ever have in flight at once. Start at 8–16 concurrent requests, monitor the 429 rate, and tune from there. This is more effective than tuning RPM, because the limit DeepSeek enforces is concurrency-shaped to begin with.
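Here is a minimal sketch of that governor using only the standard library. MAX_IN_FLIGHT and the helper names are hypothetical, and `call` stands in for whatever function issues the API request (for example call_with_backoff above).

```python
import threading
from concurrent.futures import ThreadPoolExecutor

MAX_IN_FLIGHT = 8  # starting point from the text; tune against your 429 rate

_slots = threading.BoundedSemaphore(MAX_IN_FLIGHT)


def guarded(call, *args, **kwargs):
    """Run `call` only while holding one of MAX_IN_FLIGHT slots."""
    with _slots:
        return call(*args, **kwargs)


def run_all(call, jobs, workers=64):
    """Fan out `jobs` (a list of argument tuples) through a thread pool.

    The pool may be larger than MAX_IN_FLIGHT: the semaphore, not the pool,
    decides how many requests are in flight at once.
    """
    with ThreadPoolExecutor(max_workers=workers) as pool:
        futures = [pool.submit(guarded, call, *job) for job in jobs]
        return [f.result() for f in futures]
```

This sketch keeps the cap in a shared semaphore rather than only in `max_workers`. That way, several pools or background jobs in the same process still share one in-flight limit.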

3. Circuit breaker for sustained failure

When 25–30% of calls in a rolling minute return 429 or 5xx, stop sending for a cool-off window (30–120 seconds), then send one or two canary requests. Resume full traffic only when canaries succeed. This prevents retry storms during DeepSeek incidents and keeps your own queues from filling. A helpful side effect: a banner saying “API is throttling: we’ll resume shortly” is honest UX and far better than a spinner that pretends progress.
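Here is a minimal single-threaded sketch of that breaker, using the thresholds from the text: 25% failures in a rolling minute, a cool-off window, then two canaries. The `min_calls` floor is an added assumption, there so that one failure in three calls does not trip the breaker. Wrap the methods in a lock before sharing an instance across threads.

```python
import time
from collections import deque


class CircuitBreaker:
    """Rolling-window circuit breaker with a canary (half-open) phase.

    closed:    all traffic flows; outcomes are tracked over `window_s`.
    open:      nothing is sent until `cooloff_s` has elapsed.
    half_open: up to `canaries` probe requests are allowed; if all succeed
               the breaker closes, if any fails it reopens.
    """

    def __init__(self, threshold=0.25, window_s=60.0, cooloff_s=60.0,
                 min_calls=20, canaries=2, clock=time.monotonic):
        self.threshold = threshold
        self.window_s = window_s
        self.cooloff_s = cooloff_s
        self.min_calls = min_calls  # hypothetical floor: ignore tiny samples
        self.canaries = canaries
        self.clock = clock
        self.events = deque()  # (timestamp, failed) pairs inside the window
        self.state = "closed"
        self.opened_at = 0.0
        self.canaries_sent = 0
        self.canaries_ok = 0

    def _open(self, now):
        self.state, self.opened_at = "open", now

    def allow(self):
        """Return True if a request may be sent now."""
        if self.state == "closed":
            return True
        if self.state == "open":
            if self.clock() - self.opened_at < self.cooloff_s:
                return False
            self.state, self.canaries_sent, self.canaries_ok = "half_open", 0, 0
        if self.canaries_sent < self.canaries:  # half-open: probes only
            self.canaries_sent += 1
            return True
        return False

    def record(self, failed):
        """Record one outcome; failed=True for a 429 or 5xx."""
        now = self.clock()
        if self.state == "half_open":
            if failed:
                self._open(now)  # a canary failed: start another cool-off
            else:
                self.canaries_ok += 1
                if self.canaries_ok >= self.canaries:
                    self.state = "closed"
                    self.events.clear()
            return
        if self.state == "open":
            return  # late result from a call sent before the breaker opened
        self.events.append((now, failed))
        while self.events and now - self.events[0][0] > self.window_s:
            self.events.popleft()
        failures = sum(1 for _, f in self.events if f)
        if (len(self.events) >= self.min_calls
                and failures / len(self.events) >= self.threshold):
            self._open(now)
```

In the request path, check `breaker.allow()` first. If it returns False, show the "API is throttling" banner and skip the call. Otherwise, send the request and report the outcome with `breaker.record(...)`.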

4. Cut the work, not just the rate

Every token you do not send is a token you cannot be throttled on. Two levers help here:

- Context caching. A repeated system prompt drops to the cache-hit price tier automatically. Pair this with the DeepSeek context caching guide to structure prompts so the cache actually hits.

- Streaming. Streaming does not raise the limit, but it gets useful tokens to the user sooner and surfaces keep-alive comments while a request is queued, so you can tell a waiting request from a dead one. The DeepSeek API streaming guide covers the SSE shape.
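Structuring prompts for caching mostly means keeping the stable part at the front and byte-identical across calls. This sketch uses a hypothetical system prompt and helper names. `cache_hit_ratio` assumes the `prompt_cache_hit_tokens` and `prompt_cache_miss_tokens` usage fields described in DeepSeek's context-caching documentation, so check the field names against a real response.

```python
STATIC_SYSTEM_PROMPT = "You are a precise assistant."  # hypothetical; keep byte-identical


def build_messages(user_text, history=()):
    """Put the unchanging prefix first and the variable part last, so
    consecutive requests share the longest possible identical prefix."""
    return [
        {"role": "system", "content": STATIC_SYSTEM_PROMPT},
        *history,
        {"role": "user", "content": user_text},
    ]


def cache_hit_ratio(usage):
    """Share of prompt tokens served from cache, given a usage dict.

    Assumes DeepSeek's prompt_cache_hit_tokens / prompt_cache_miss_tokens
    fields; returns 0.0 when neither is present.
    """
    hit = usage.get("prompt_cache_hit_tokens", 0)
    miss = usage.get("prompt_cache_miss_tokens", 0)
    total = hit + miss
    return hit / total if total else 0.0
```

With the OpenAI SDK, `resp.usage.model_dump()` should produce a dict this function accepts. A ratio that stays near zero can mean something variable, such as a timestamp or request ID, has crept into the prefix.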

Headers and parameters worth knowing

A short reference for the parameters that interact with rate-limit behaviour on V4. Both V4 tiers default to a 1,000,000-token context window with output up to 384,000 tokens, so most “limits” you hit will be concurrency, not size.

| Parameter | What it does | Notes for rate-limit work |
|---|---|---|
| max_tokens | Cap on output length | Lower this for chatty endpoints; long generations hold a slot longer. |
| temperature | Sampling randomness | 0.0 for code/maths, 1.3 for chat/translation, 1.5 for creative writing (DeepSeek's official guidance). |
| top_p | Nucleus sampling | Use as an alternative to temperature, not both at extremes. |
| stream | SSE streaming | Keeps the connection warm via keep-alive comments under load. |
| reasoning_effort | "high" or "max" for thinking mode | Thinking calls take longer per request, so they consume your concurrency budget more aggressively. |
| response_format | JSON mode ({"type": "json_object"}) | Designed to return valid JSON, not guaranteed: handle empty content, prompt with the word "json" plus a sample schema, and set max_tokens high enough to avoid truncation. |
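The JSON-mode row translates into two small habits. Build the request with the safeguards, and treat empty or truncated replies as retryable. The helper names and the default max_tokens of 2048 in this sketch are assumptions. It also relies on `finish_reason == "length"` being the OpenAI-compatible signal that output hit max_tokens.

```python
import json


def json_mode_kwargs(model, task, schema_example, max_tokens=2048):
    """Build create() kwargs for JSON mode: mention "json" in the prompt,
    show a sample schema, and leave headroom in max_tokens."""
    system = "Reply in json only, matching this example: " + json.dumps(schema_example)
    return {
        "model": model,
        "messages": [
            {"role": "system", "content": system},
            {"role": "user", "content": task},
        ],
        "response_format": {"type": "json_object"},
        "max_tokens": max_tokens,
    }


def parse_json_reply(content, finish_reason):
    """Return parsed JSON, or None when the reply is unusable and should be retried."""
    if finish_reason == "length":
        return None  # cut off by max_tokens: the JSON is likely incomplete
    if not content or not content.strip():
        return None  # JSON mode can occasionally return empty content
    try:
        return json.loads(content)
    except json.JSONDecodeError:
        return None
```

With a real response, pass `choice.message.content` and `choice.finish_reason` from `resp.choices[0]`.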

Two notes the docs imply but do not always state explicitly. First, the API is stateless — clients must resend the full conversation history with every request, unlike the web chat which keeps state for the session. Second, FIM (Fill-In-the-Middle) completion is Beta and works in non-thinking mode only.
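Because the API is stateless, conversation memory is the client's job. This minimal sketch uses a hypothetical Conversation class and a `send` callable you supply. It does not cover whether or how to carry reasoning_content between turns, so this version stores only the final answer.

```python
class Conversation:
    """Keeps the message history client-side and resends all of it each
    turn, since the API itself remembers nothing between requests.

    `send` is any callable that takes a messages list and returns the reply
    text, e.g. a thin wrapper around client.chat.completions.create().
    """

    def __init__(self, send, system_prompt):
        self.send = send
        self.messages = [{"role": "system", "content": system_prompt}]

    def ask(self, user_text):
        self.messages.append({"role": "user", "content": user_text})
        reply = self.send(list(self.messages))  # full history every time
        # Only the final answer is stored; reasoning_content is not appended.
        self.messages.append({"role": "assistant", "content": reply})
        return reply
```

Each turn makes the request larger, so long conversations hold a concurrency slot longer and cost more input tokens.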

How rate limits interact with cost: a worked example

Throttling is not just a latency problem: it shapes how you should size budgets. The math below uses deepseek-v4-flash rates from DeepSeek's pricing page as of April 2026 (verify current rates on the official page; for an in-depth breakdown see our DeepSeek API pricing guide).

Scenario: 1,000,000 calls per month with a 2,000-token system prompt (cached after the first call), a 200-token user message (uncached on each call), and a 300-token response.

| Bucket | Tokens | Rate (USD / 1M) | Cost |
|---|---|---|---|
| Input (cache hit) | 2,000,000,000 | $0.0028 | $5.60 |
| Input (cache miss) | 200,000,000 | $0.14 | $28.00 |
| Output | 300,000,000 | $0.28 | $84.00 |
| Total | | | $117.60 |

Same workload on deepseek-v4-pro at the 75% promo through 2026-05-31 (cache hit $0.003625, miss $0.435, output $0.87) lands at $355.25, about 3× the Flash bill (list rates $0.0145 / $1.74 / $3.48 give $1,421.00, about 12× Flash). The rate-limit angle: if Pro's per-call latency is higher under load, you also burn more concurrency-seconds per request. Many teams keep Flash as the default for high-volume paths and reserve Pro for the small fraction of requests that genuinely benefit from frontier-tier reasoning.
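The arithmetic behind the table and the Pro comparison is easy to rerun as rates change. This small sketch uses Decimal to avoid floating-point rounding. The rate tuples are the April 2026 figures quoted above, so refresh them from the pricing page before relying on the output.

```python
from decimal import ROUND_HALF_UP, Decimal

MILLION = Decimal(1_000_000)


def monthly_cost(calls, cached_in, uncached_in, out, rates):
    """Estimate monthly USD cost. Token counts are per call; `rates` is a
    (cache_hit, cache_miss, output) tuple of Decimal prices per 1M tokens."""
    hit, miss, output = rates
    total = (
        Decimal(calls * cached_in) / MILLION * hit
        + Decimal(calls * uncached_in) / MILLION * miss
        + Decimal(calls * out) / MILLION * output
    )
    return total.quantize(Decimal("0.01"), rounding=ROUND_HALF_UP)


FLASH = (Decimal("0.0028"), Decimal("0.14"), Decimal("0.28"))
PRO_PROMO = (Decimal("0.003625"), Decimal("0.435"), Decimal("0.87"))
PRO_LIST = (Decimal("0.0145"), Decimal("1.74"), Decimal("3.48"))

for name, rates in [("flash", FLASH), ("pro promo", PRO_PROMO), ("pro list", PRO_LIST)]:
    print(name, monthly_cost(1_000_000, 2_000, 200, 300, rates))
```

Running it reproduces the figures above: 117.60, 355.25 and 1421.00 USD.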

Status pages, monitoring, and when to escalate

Sustained 429s that do not respond to backoff usually mean one of three things:

- A platform incident. Check DeepSeek’s status page first. If it is red, your retries are wasted work.

- Your client is misbehaving. Audit for unbounded fan-out, missing backoff, or a stuck retry loop that has been firing for hours.

- Your workload genuinely exceeds dynamic capacity at that hour. Smooth the load (queue), shift it to a quieter window, or split traffic across providers as a fallback. DeepSeek explicitly does not raise individual-account caps, so contacting support to “request a higher tier” is not a path that exists.
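A provider fallback can be as small as an ordered list of callables. This sketch uses hypothetical provider names and `send` functions. It complements backoff and the circuit breaker rather than replacing them. Only the exception types you pass in `retryable` trigger a fallback.

```python
class AllProvidersFailed(Exception):
    """Raised when every provider in the chain was throttled or errored."""


def call_with_fallback(providers, messages, retryable):
    """Try each (name, send) pair in order and return (name, reply).

    `send` is any callable taking a messages list. Exceptions whose types
    are listed in the `retryable` tuple (for example the SDK's
    RateLimitError) move on to the next provider; anything else propagates.
    """
    errors = []
    for name, send in providers:
        try:
            return name, send(messages)
        except retryable as exc:
            errors.append((name, exc))
    raise AllProvidersFailed(errors)
```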

For deeper troubleshooting on every status code DeepSeek emits, the DeepSeek API error codes reference is the companion piece to this article. Teams running DeepSeek alongside other providers should also review our DeepSeek API best practices for a fuller production checklist, and the broader DeepSeek API docs and guides hub for the rest of the developer surface.

What this means in practice

Treat DeepSeek’s rate limits like weather, not architecture. The platform documents the behaviour (dynamic concurrency, 429 on contention, keep-alives during waits, no per-account dial), and the rest is on you: bounded concurrency, exponential backoff with jitter, a circuit breaker for sustained failure, and prompt designs that lean on context caching. Do those four things and 429s become routine background noise rather than incidents. Do none of them and you will rediscover the limit every Tuesday afternoon when traffic spikes.

DeepSeek AI Guide is an independent resource and is not affiliated with DeepSeek or its parent company. Model IDs, pricing and API behaviour change; check the official DeepSeek documentation and pricing page before committing to a production decision.

Frequently asked questions

What are the DeepSeek API rate limits in requests per minute?

DeepSeek does not publish a fixed requests-per-minute or tokens-per-minute number. The official documentation describes a dynamic concurrency limit that adjusts to server load, returning HTTP 429 when you exceed it. There are no tiered plans, and individual accounts cannot have their cap raised on request. Engineer for backoff and bounded concurrency rather than a fixed RPM target. See the DeepSeek API documentation overview for context.

How do I fix a DeepSeek 429 “Too Many Requests” error?

On a 429, pause and retry with exponential backoff and jitter — start at one second, double on each retry, cap around 60–120 seconds, and limit total attempts. Pair that with a bounded concurrency pool (start at 8–16 in-flight requests) and a circuit breaker if 25–30% of calls in a rolling minute fail. Our DeepSeek API error codes guide covers the full status-code taxonomy.

Does DeepSeek V4 have different rate limits than V3.2?

The dynamic-concurrency policy is the same across the platform — there is no separate V4 rate-limit table. Both deepseek-v4-pro and deepseek-v4-flash share the policy, as do the legacy deepseek-chat and deepseek-reasoner IDs (which route to V4-Flash until the 2026-07-24 15:59 UTC retirement). For tier differences that do matter — context, output, pricing — see DeepSeek V4-Flash and DeepSeek V4-Pro.

Why does my DeepSeek request hang for minutes before returning?

That is the documented keep-alive behaviour, not a bug. While your request waits to be scheduled, DeepSeek sends empty lines (non-streaming) or SSE : keep-alive comments (streaming) to keep the TCP connection open. If inference has not started after roughly 10 minutes the server closes the connection. Set generous read timeouts and ensure your gateway does not cut long-running responses. The DeepSeek API streaming guide covers the SSE format.

Can I pay for higher DeepSeek API rate limits?

No. DeepSeek's FAQ states there are no tiered plans and the dynamic limit is not raised on individual accounts. Pricing is unified across all customers: the difference between deepseek-v4-flash and deepseek-v4-pro is per-token cost, not throughput allocation. Smooth your traffic, structure prompts so repeated prefixes hit DeepSeek context caching, and use a fallback provider for hard SLA work. For full pricing detail see the DeepSeek API pricing guide.

Sources & Methodology

Primary sources

- Official: DeepSeek API documentation. Endpoints, request/response shape, model IDs. Last checked: April 30, 2026.

- Pricing: DeepSeek API pricing page. Per-1M-token rates, billing units, cache-hit pricing. Last checked: April 30, 2026.

- Official: DeepSeek API rate-limit documentation. Dynamic concurrency policy, 429 behaviour, no tiered plans. Last checked: April 30, 2026.

- Pricing: DeepSeek API pricing page. V4-Flash worked-cost example rates. Last checked: April 30, 2026.

- Official: DeepSeek status page. Triage step for sustained 429s during platform incidents. Last checked: April 30, 2026.

Technical references

- Technical report: DeepSeek-V3 technical report (arXiv:2412.19437). V3 architecture and training methodology. Last checked: April 30, 2026.

Context sources

- Analysis: Simon Willison, DeepSeek API rate-limit policy (Jan 2025). Rationale for the flexible cap and the competitive-differentiation argument. Last checked: April 30, 2026.

Methodology

Pricing figures were checked against the official DeepSeek pricing page and normalised into estimated monthly costs using sample token usage scenarios. Actual costs may vary depending on caching, input/output ratio, regional availability, and provider updates.

Data confidence

High — figures checked directly against the official pricing page on the review date.

Editorial note

DeepSeek occasionally runs promotional discounts that are not always reflected in the headline page. The pricing dataset on this site auto-refreshes monthly; this article reflects the dataset on the date shown above.