DeepSeek vs Gemini in 2026: V4 Meets Gemini 3.1 Pro

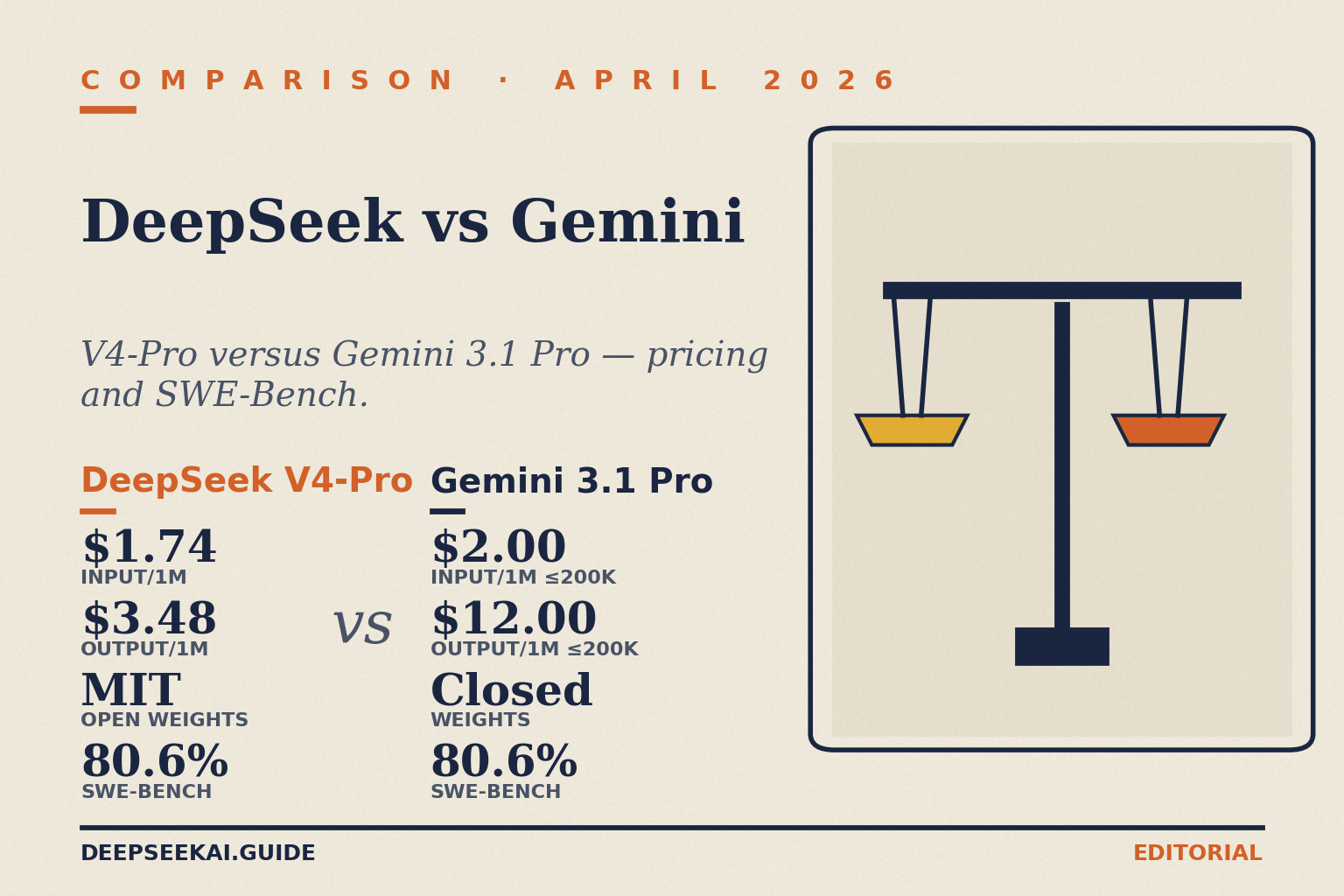

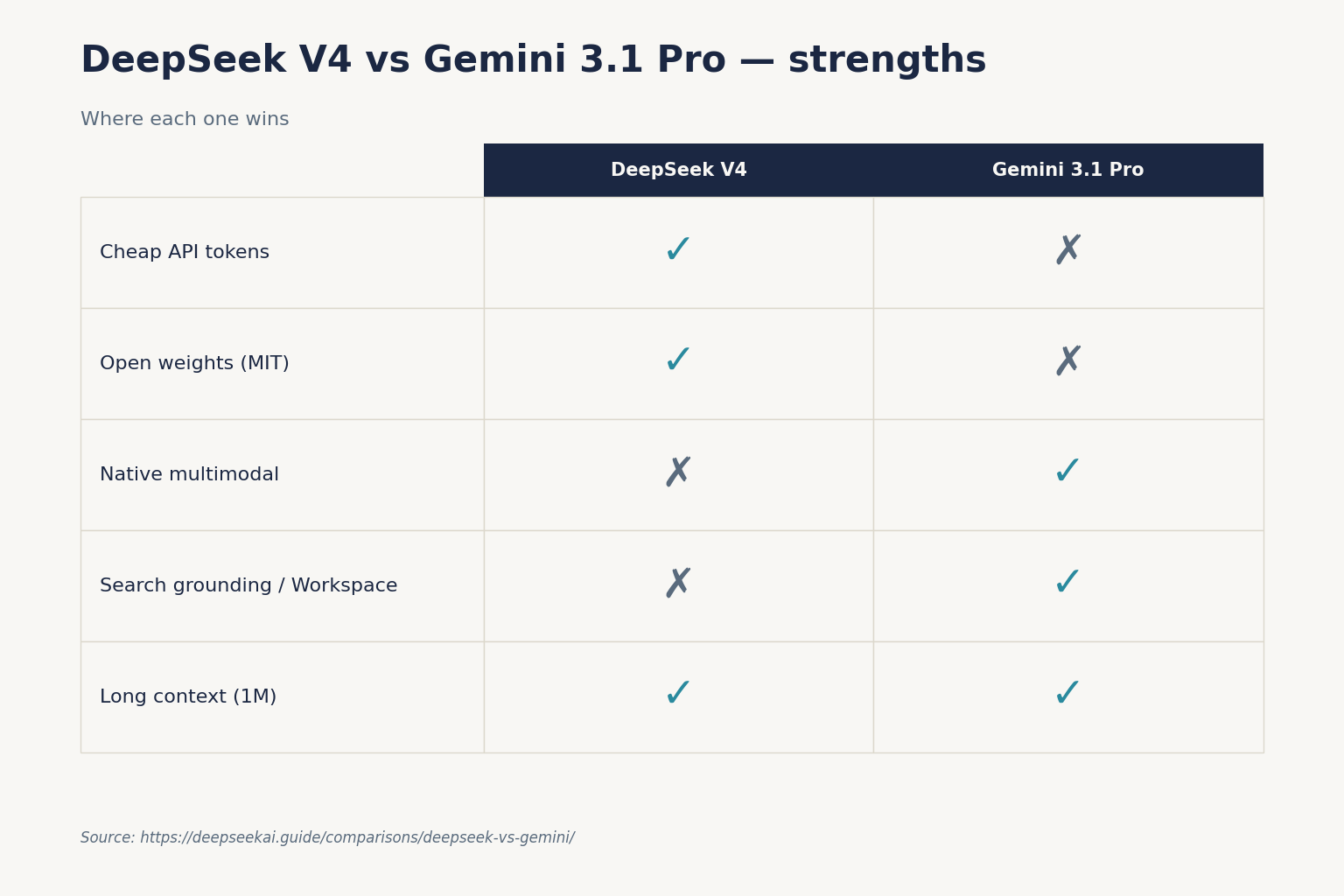

Short answer for April 2026: DeepSeek V4 and Gemini 3.1 Pro are effectively tied on SWE-Bench Verified, the coding benchmark most production engineers watch (both score 80.6 %). DeepSeek, however, costs roughly 3× to 14× less per output token, depending on tier and on whether V4-Pro’s launch promo applies, and it ships open weights under MIT. Google wins on ecosystem depth, multimodal (video + image generation), and tight integration with Workspace and Search. DeepSeek wins on price, openness, and the option to self-host. Pick DeepSeek for coding and high-volume API work; pick Gemini when you need Google’s grounding tools, video understanding, or an enterprise Workspace stack.

The rest of this article is the reasoning behind that short answer: how the two lines compare across pricing, context, benchmarks, thinking modes, privacy posture, and day-to-day use — with sources, not hand-waving. DeepSeek V4 (the April 24, 2026 preview) is a genuinely new release and invalidates most “DeepSeek vs Gemini” articles you’ll find from 2025. If you’re considering a swap in either direction, this is the current picture.

At a glance: V4-Pro vs Gemini 3.1 Pro, V4-Flash vs Gemini 3 Flash

Two tiers on each side. The most useful comparison pairs the frontier-tier flagships (V4-Pro and Gemini 3.1 Pro) and the cost-efficient workhorses (V4-Flash and Gemini 3 Flash). All numbers below are from vendor pricing pages and technical announcements as of April 24, 2026 — verify at source before you commit to a production budget.

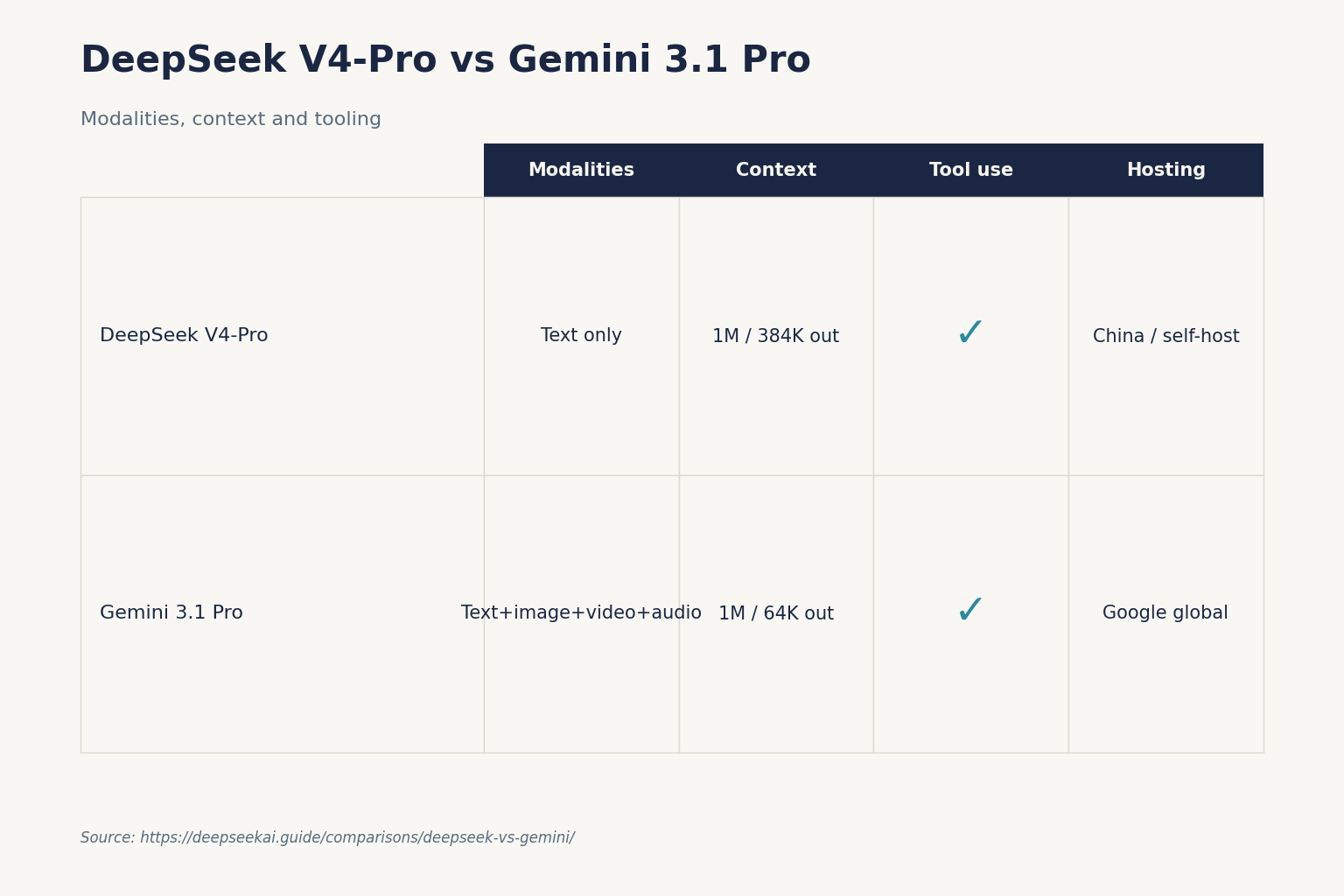

| Feature | DeepSeek V4-Pro | Gemini 3.1 Pro | DeepSeek V4-Flash | Gemini 3 Flash |
|---|---|---|---|---|
| Released | April 24, 2026 (Preview) | Current generation, April 2026 | April 24, 2026 (Preview) | Current generation |
| Parameters | 1.6T total / 49B active (MoE) | Undisclosed | 284B total / 13B active (MoE) | Undisclosed |
| License / weights | MIT, fully open-weight on Hugging Face | Closed / proprietary | MIT, fully open-weight on Hugging Face | Closed / proprietary |
| Context window (tokens) | 1,000,000 input / 384,000 output | 1,000,000 input / 64,000 output | 1,000,000 input / 384,000 output | 1,000,000 input / 64,000 output |
| Input, cache miss ($/1M tokens) | $0.435 promo (list $1.74) | $2.00 (≤200K ctx), $4.00 (>200K) | $0.14 | $0.50 |
| Input, cache hit ($/1M tokens) | $0.003625 promo (list $0.0145) | Implicit caching; see Vertex AI pricing | $0.0028 | Implicit caching; see Vertex AI pricing |
| Output ($/1M tokens) | $0.87 promo (list $3.48) | $12.00 (≤200K ctx), $18.00 (>200K) | $0.28 | $3.00 |
| Thinking control | reasoning_effort: high, max | thinking_level: minimal, low, medium, high | reasoning_effort: high, max | thinking_level: minimal–high |
| SWE-Bench Verified | 80.6 % | 80.6 % | Not independently reported | Not independently reported |
| Terminal-Bench 2.0 | Ahead of Claude (DeepSeek’s own numbers) | 68.5 % | Not reported | Not reported |
| Multimodal | Text-only in V4 Preview | Text, image, video, audio understanding; image gen via sibling model | Text-only in V4 Preview | Text, image, video understanding |
| Consumer app | chat.deepseek.com + mobile; free | gemini.google.com + mobile; free tier + Google AI Pro ($19.99/mo) / Ultra ($249.99/mo) | (same chat) | (same chat) |

The big-picture read: on the published benchmarks where both labs compete directly, V4-Pro and Gemini 3.1 Pro are roughly peers. Gemini pulls ahead on breadth: native multimodal understanding, Google Search grounding, tight Workspace integration, video tooling. DeepSeek pulls ahead on the two things that move budgets: per-token cost (roughly 3.4× to 14× cheaper on output, depending on tier and on whether the V4-Pro promo applies) and open weights (you can download V4-Pro or V4-Flash, fine-tune them, and run them on your own GPU cluster; that option does not exist for Gemini).

Pricing: where DeepSeek’s gap is largest

Pricing is the dimension where the choice can get locked in by a spreadsheet rather than a preference. Here’s how the V4 tiers compare to the Gemini 3 tiers for a realistic API workload.

Assume 1,000,000 chat API calls with a 2,000-token cached system prompt, a 200-token user message (uncached on each call), and a 300-token response. At V4-Flash rates ($0.0028 cache-hit / $0.14 cache-miss / $0.28 output per 1M tokens):

- Cached input: 2,000 × 1,000,000 = 2,000,000,000 tokens × $0.0028/M = $5.60

- Uncached input: 200 × 1,000,000 = 200,000,000 tokens × $0.14/M = $28.00

- Output: 300 × 1,000,000 = 300,000,000 tokens × $0.28/M = $84.00

- Total: $117.60

At Gemini 3 Flash rates ($0.50 input / $3.00 output; Google applies implicit caching but doesn’t publish a fixed cache-hit rate, so no caching credit is assumed here):

- All input (2,200 tokens × 1,000,000 calls × $0.50/M): $1,100.00

- Output: 300 × 1,000,000 × $3.00/M = $900.00

- Total (no caching credit): $2,000.00

At V4-Pro rates, the same workload costs $355.25 during the 75% promo through 2026-05-31 (breakdown: cached input $7.25, uncached $87.00, output $261.00); the list-price total is $1,421. At Gemini 3.1 Pro rates ($2 input / $12 output under 200K context), it costs $4,400 input (2,200 tokens × 1,000,000 calls × $2/M) + $3,600 output = $8,000.

Read those numbers carefully. On output price alone, V4-Flash is about 11× cheaper than Gemini 3 Flash ($0.28 vs $3.00 per 1M tokens). For the frontier tier, V4-Pro is about 14× cheaper than Gemini 3.1 Pro on output during the 75% promo through 2026-05-31, narrowing to ~3.4× at list. Caching only discounts input tokens, so those output ratios hold whatever Gemini’s implicit caching does. Across the whole workload, DeepSeek comes out ~17× cheaper on Flash ($117.60 vs $2,000), ~22× cheaper on Pro at promo ($355.25 vs $8,000) and ~5.6× cheaper at list ($1,421 vs $8,000). Even if Gemini’s implicit caching cut its total bill in half, DeepSeek would still be ~8.5× cheaper on Flash, ~11× cheaper on Pro at promo and ~2.8× cheaper at list. For a detailed breakdown of DeepSeek’s per-tier rates, see DeepSeek API pricing.
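The workload arithmetic above can be reproduced in a few lines of Python. This is a minimal sketch that hard-codes the per-1M-token rates quoted in this article (re-check them before reuse) and, like the hand calculation, gives Gemini no caching credit by setting its cache-hit rate equal to its input rate:

```python
from dataclasses import dataclass

CALLS = 1_000_000
CACHED_PROMPT = 2_000  # cached system-prompt tokens per call
USER_MSG = 200         # uncached user-message tokens per call
OUTPUT = 300           # output tokens per call


@dataclass(frozen=True)
class Rates:
    cache_hit: float   # $ per 1M cached input tokens
    cache_miss: float  # $ per 1M uncached input tokens
    output: float      # $ per 1M output tokens


# Rates as quoted in this article; Gemini gets no caching credit (hit == miss).
RATES = {
    "V4-Flash": Rates(0.0028, 0.14, 0.28),
    "V4-Pro promo": Rates(0.003625, 0.435, 0.87),
    "V4-Pro list": Rates(0.0145, 1.74, 3.48),
    "Gemini 3 Flash": Rates(0.50, 0.50, 3.00),
    "Gemini 3.1 Pro": Rates(2.00, 2.00, 12.00),  # <=200K-context tier
}


def workload_cost(r: Rates) -> float:
    """Dollar cost of CALLS requests: tokens per call x (calls / 1M) x rate."""
    millions = CALLS / 1_000_000
    total = (CACHED_PROMPT * r.cache_hit
             + USER_MSG * r.cache_miss
             + OUTPUT * r.output) * millions
    return round(total, 2)


if __name__ == "__main__":
    for name, rates in RATES.items():
        print(f"{name:15} ${workload_cost(rates):>9,.2f}")
    flash_gap = workload_cost(RATES["Gemini 3 Flash"]) / workload_cost(RATES["V4-Flash"])
    print(f"Flash-tier gap: {flash_gap:.1f}x")
```

Lowering Gemini’s cache_hit value is the quickest way to test how much implicit caching would need to save before the comparison changes.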

One caveat worth naming: Gemini Pro models moved to paid-only on April 1, 2026 — you cannot reach Gemini 3.1 Pro on the Gemini API free tier anymore. Gemini 3 Flash and Flash-Lite retain a reduced free quota. DeepSeek’s free web chat ran V4 from day one, and the API has no fixed subscription — you pay per token regardless of which tier you pick.

Coding: both strong, different sweet spots

SWE-Bench Verified is the benchmark most production engineers look at: the model must fix real GitHub issues in real open-source Python projects. Both V4-Pro and Gemini 3.1 Pro land at 80.6 %, a dead tie, so on the headline score there’s nothing to choose between them.

Where they diverge is the shape of the coding ability. On Terminal-Bench 2.0 (an agentic benchmark where the model runs an actual shell and completes multi-step tasks), DeepSeek’s own numbers put V4-Pro ahead of Claude; Gemini 3.1 Pro sits at 68.5 %. On LiveCodeBench Pro (competitive-programming style), Gemini 3.1 Pro posts 2,887 Elo, a high score; DeepSeek had not published a directly comparable number at the time of the V4 Preview announcement.

In practice: for pull-request-sized patches and library integration, either model is a credible choice. For long-horizon agentic coding — an agent that runs for hours, touches many files, and calls many tools — Gemini 3.1 Pro has a larger body of public evaluation behind it, but V4-Pro with reasoning_effort="max" is narrowing that gap. For routine code completion, IDE assist, and CI-generated tests, V4-Flash at $0.28/M output is almost ridiculously cheap relative to Gemini 3 Flash at $3/M output. If coding cost is the decision, V4 wins.

For a detailed coding-focused comparison, see our DeepSeek Coder vs Copilot write-up and the model-level V4-Pro deep-dive.

Reasoning & thinking modes: two different control panels

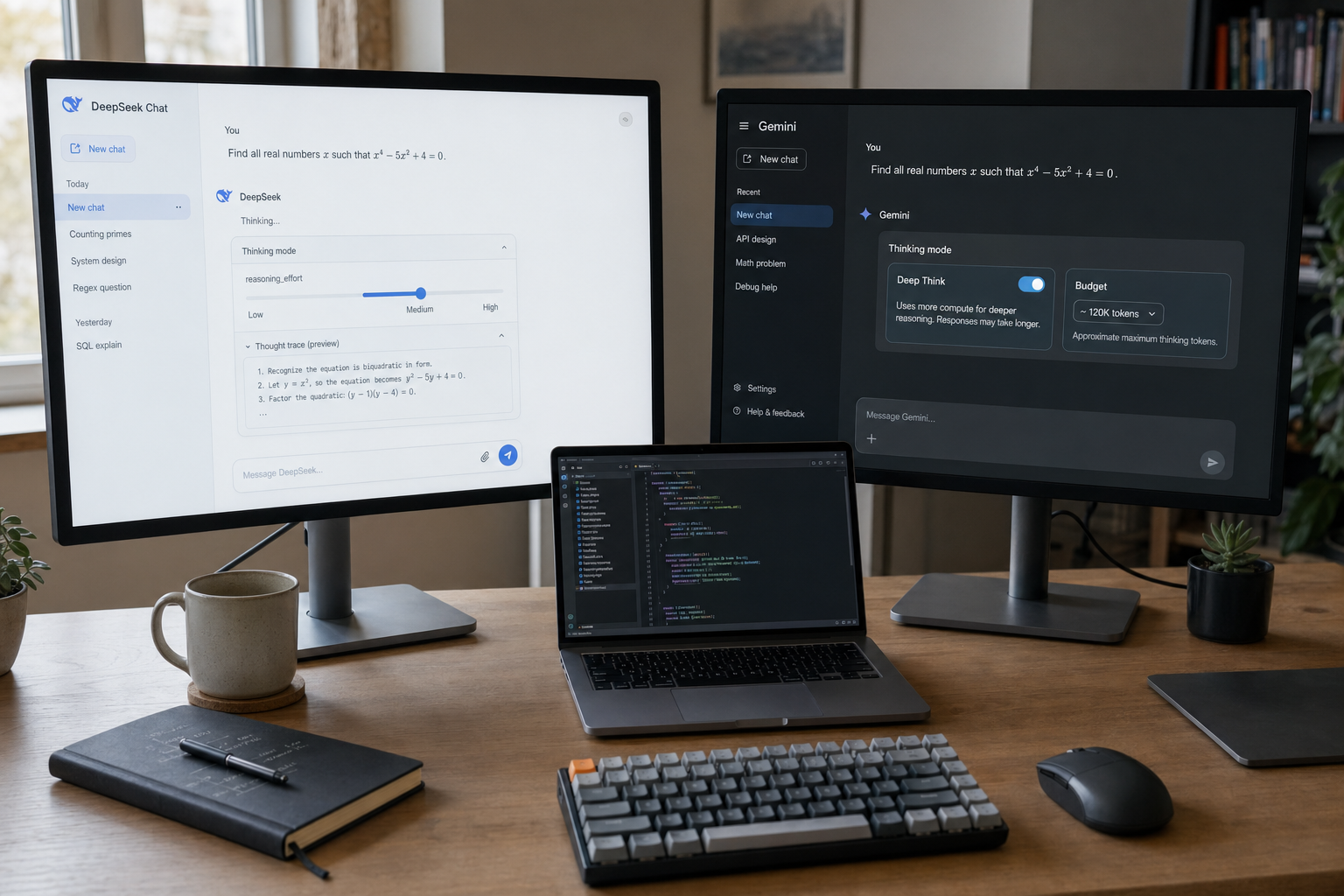

Both models expose a thinking mode, but the controls are different.

In DeepSeek’s V4 API, thinking is a request parameter, not a model ID. You pick deepseek-v4-pro or deepseek-v4-flash in the model field, then set reasoning_effort to either "high" or "max" and pair it with extra_body={"thinking": {"type": "enabled"}} to engage thinking mode. When thinking is enabled, the response returns reasoning_content alongside the final content. Minimal Python using the OpenAI SDK:

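```python
from openai import OpenAI

# DeepSeek's OpenAI-compatible endpoint.
client = OpenAI(base_url="https://api.deepseek.com", api_key="...")  # your DeepSeek key

resp = client.chat.completions.create(
    model="deepseek-v4-pro",                       # or "deepseek-v4-flash"
    messages=[{"role": "user", "content": "Plan the migration."}],
    reasoning_effort="high",                       # "high" or "max" on V4
    extra_body={"thinking": {"type": "enabled"}},  # DeepSeek-specific field
)

msg = resp.choices[0].message
print(getattr(msg, "reasoning_content", None))  # thinking trace, when enabled
print(msg.content)                              # final answer
```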

All chat requests hit POST /chat/completions, the OpenAI-compatible endpoint. DeepSeek also ships an Anthropic-compatible surface on the same host (https://api.deepseek.com/anthropic), so the Anthropic SDK works after a one-line base_url swap. The API is stateless: the client must resend the full conversation history on every request, and DeepSeek does not store your messages between calls. The web chat and mobile app, by contrast, keep state for the user’s session. Core parameters worth knowing: temperature, top_p, max_tokens (up to 384,000 on V4), and the V4-specific reasoning_effort.
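Because the API is stateless, multi-turn chat is the client’s job. A minimal sketch, assuming the openai package and an API key in a DEEPSEEK_API_KEY environment variable (a naming choice, not a requirement). It resends only each turn’s final content, not reasoning_content, which is a conservative assumption worth checking against DeepSeek’s multi-turn docs:

```python
import os


def add_turn(history: list, role: str, content: str) -> list:
    """Return a NEW history list with one message appended (no mutation)."""
    return [*history, {"role": role, "content": content}]


def build_request(history: list, question: str, model: str = "deepseek-v4-flash") -> dict:
    """Kwargs for chat.completions.create: the whole history plus the new turn."""
    return {"model": model, "messages": add_turn(history, "user", question)}


def ask(client, history: list, question: str) -> tuple:
    """Send one turn; return (updated history, answer text)."""
    request = build_request(history, question)
    resp = client.chat.completions.create(**request)
    answer = resp.choices[0].message.content
    # Only the final answer goes back into history, not reasoning_content.
    return add_turn(request["messages"], "assistant", answer), answer


if __name__ == "__main__" and os.environ.get("DEEPSEEK_API_KEY"):
    from openai import OpenAI  # imported here so the helpers run without it

    client = OpenAI(base_url="https://api.deepseek.com",
                    api_key=os.environ["DEEPSEEK_API_KEY"])
    history = [{"role": "system", "content": "Answer briefly."}]
    history, _ = ask(client, history, "What does MoE stand for?")
    history, answer = ask(client, history, "Give one example.")  # relies on turn 1
    print(answer)
```

The second question only makes sense because the first exchange travels with it; drop the history and the server has no idea what "one example" refers to.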

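Legacy API model IDs deepseek-chat and deepseek-reasoner still work and currently route to deepseek-v4-flash, but they retire on 2026-07-24 at 15:59 UTC. If you’re maintaining an older integration, swap the model= string before that date; base_url stays at https://api.deepseek.com. One thing to check: deepseek-chat has historically been the non-thinking ID, and V4 enables thinking by default, so integrations that relied on non-thinking replies should also pass extra_body={"thinking": {"type": "disabled"}}.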
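For codebases with many call sites, a small shim can centralise the swap. This is a sketch, not DeepSeek’s guidance: it uses the retirement time quoted above and assumes deepseek-chat maps to thinking disabled and deepseek-reasoner to thinking enabled, mirroring how those IDs behaved historically:

```python
import warnings
from datetime import datetime, timezone
from typing import Optional

# Retirement time for the legacy IDs, as stated in this article.
RETIREMENT = datetime(2026, 7, 24, 15, 59, tzinfo=timezone.utc)

# Legacy ID -> (V4 model ID, thinking mode). The thinking modes are an
# ASSUMPTION based on deepseek-chat historically being the non-thinking ID.
LEGACY_IDS = {
    "deepseek-chat": ("deepseek-v4-flash", "disabled"),
    "deepseek-reasoner": ("deepseek-v4-flash", "enabled"),
}


def migrate(model: str, now: Optional[datetime] = None) -> dict:
    """Return chat.completions kwargs that use a V4 model ID."""
    if model not in LEGACY_IDS:
        return {"model": model}  # already a current ID; pass through
    now = now or datetime.now(timezone.utc)
    if now >= RETIREMENT:
        warnings.warn(f"{model} was retired at {RETIREMENT.isoformat()}")
    new_id, thinking = LEGACY_IDS[model]
    return {"model": new_id, "extra_body": {"thinking": {"type": thinking}}}
```

Spread the result into the request, e.g. client.chat.completions.create(messages=messages, **migrate("deepseek-chat")), and any legacy call that survives past the cutoff raises a warning instead of failing silently.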

In Gemini 3’s API, thinking is controlled by the thinking_level parameter, with four values: "minimal", "low", "medium", and "high". If you don’t set it, Gemini 3 defaults to "high" — close to DeepSeek’s posture, which also has thinking enabled by default on V4 (pass extra_body={"thinking": {"type": "disabled"}} to opt out). Gemini’s "medium" setting is useful in production when you want more than surface reasoning without paying for maximum deliberation latency; V4 does not have a direct equivalent.
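Because the two control panels don’t line up one-to-one, an application that supports both vendors needs some mapping between them. The sketch below is one hypothetical adapter: the five level names are its own invention, the DeepSeek values follow this article, and the Gemini dict assumes the google-genai SDK accepts config={"thinking_config": {"thinking_level": ...}} (check the current SDK reference):

```python
# Hypothetical app-level effort scale; not a vendor API.
LEVELS = ("off", "low", "medium", "high", "max")


def deepseek_kwargs(level: str) -> dict:
    """Extra kwargs for DeepSeek V4's chat.completions.create."""
    if level not in LEVELS:
        raise ValueError(f"unknown level: {level}")
    if level == "off":
        return {"extra_body": {"thinking": {"type": "disabled"}}}
    # V4 only offers "high" and "max", so low/medium round up to "high".
    effort = "max" if level == "max" else "high"
    return {"reasoning_effort": effort,
            "extra_body": {"thinking": {"type": "enabled"}}}


def gemini_config(level: str) -> dict:
    """Assumed shape of the `config` argument for Gemini 3 requests."""
    if level not in LEVELS:
        raise ValueError(f"unknown level: {level}")
    # Gemini 3 has no "max"; "minimal" is the closest setting to "off".
    mapping = {"off": "minimal", "low": "low", "medium": "medium",
               "high": "high", "max": "high"}
    return {"thinking_config": {"thinking_level": mapping[level]}}
```

Rounding "low" and "medium" up to "high" on DeepSeek, rather than down to thinking-off, is a judgement call; a cost-sensitive router might prefer the opposite.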

On published reasoning benchmarks, Gemini 3.1 Pro posts ARC-AGI-2 at 77.1 %, GPQA Diamond at 94.3 %, and Humanity’s Last Exam at 44.4 %. DeepSeek hasn’t published a comparable full matrix for V4-Pro on those specific benchmarks at preview. The R1-era benchmark tables you may have seen, which pitted older DeepSeek models against baselines such as GPT-4o-0513 and Claude-3.5-Sonnet-1022, are no longer the right reference frame for a 2026 comparison; both labs have moved on.

Writing & nuance: Gemini’s edge, narrower than it used to be

Writing quality is the least-quantifiable dimension in any AI comparison, which is why we don’t assign a winner lightly. In our hands-on evaluation against matched prompts — long-form explainers, copy-editing tasks, persuasive outlines — Gemini 3.1 Pro still produces slightly more polished first-draft prose than V4-Pro, with a less formulaic structure and fewer transitional-phrase tics. V4-Pro has closed most of the gap that existed between DeepSeek V3.2 and Gemini 2.5 Pro, but for the specific workflow of producing client-facing or published copy with minimal editing, Gemini remains the more natural default.

For technical writing, API documentation, and in-line code comments, the gap narrows further: V4-Pro is typically within noise of Gemini on those tasks, and V4-Flash is a strong value pick. For creative fiction, the edge most writers will notice is Gemini’s ability to hold a coherent tone across a multi-thousand-word output.

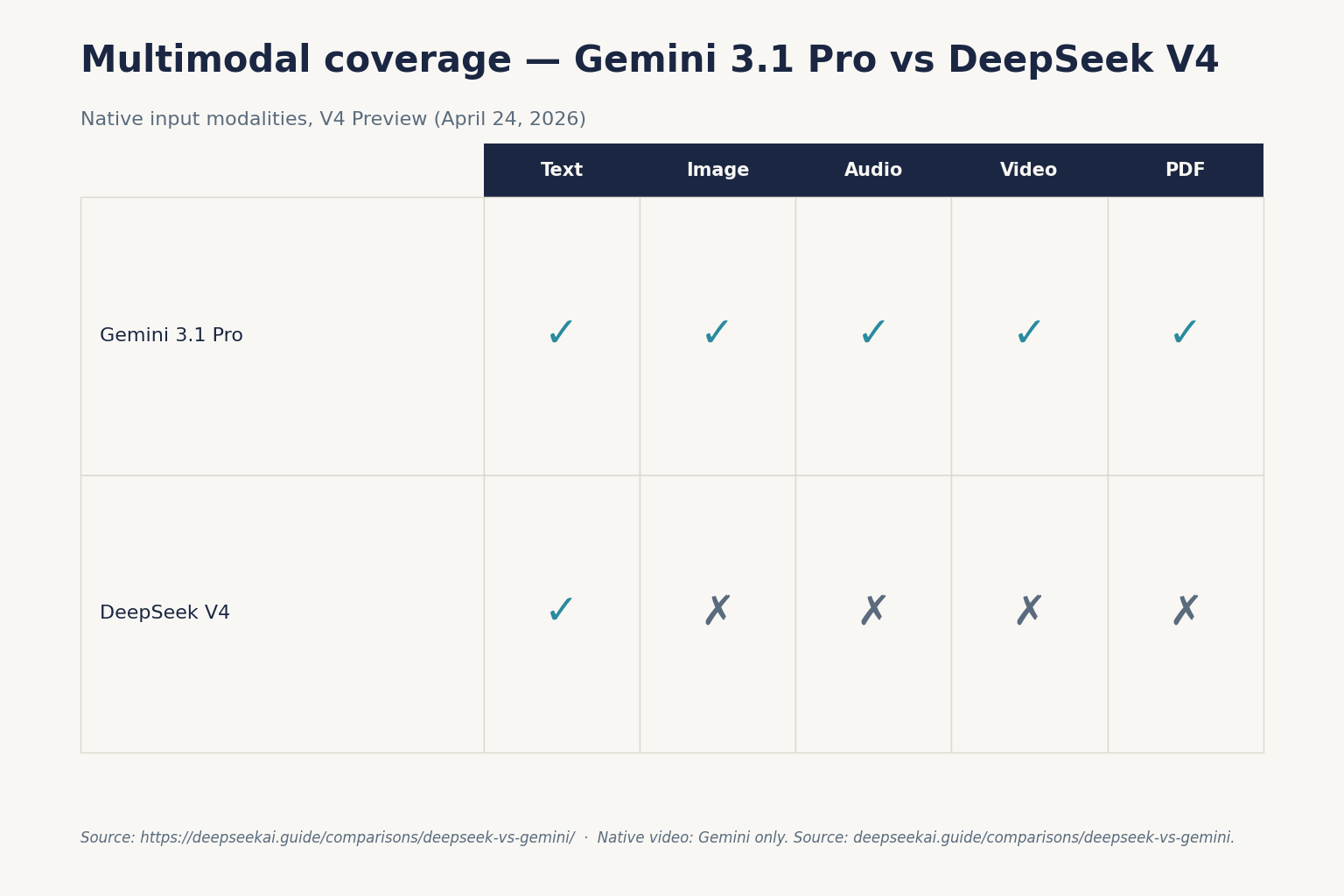

Multimodal: Gemini wins now, V4 hasn’t entered

As of the April 24, 2026 V4 Preview, DeepSeek V4 is a text-only release. There is no V4 image input, image generation, audio, or video understanding in the Preview. DeepSeek ships a separate VL2 vision model (older, under a split code/weights license) and a Janus multimodal family, but neither is integrated into V4.

Gemini 3.1 Pro handles text, image, video, and audio as native modalities on a single model endpoint. Gemini’s image-generation and video tooling (Veo 3.1 for paid Ultra subscribers, producing up to 60-second 1080p clips) has no DeepSeek counterpart at the time of writing. If any part of your workflow requires analysing screenshots, processing a document scan, or generating images, Gemini is the straightforward pick until DeepSeek ships a V4-multimodal update.

If you must use DeepSeek and need multimodal, the honest recommendation is to route: V4-Flash (or V4-Pro) for text, plus a separate vision or image-gen model for multimodal steps — covered in our DeepSeek alternatives hub.
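A minimal sketch of that routing, assuming each pipeline step declares the modalities it reads and writes. The step format and the MULTIMODAL_MODEL label are hypothetical placeholders for whichever vision or image-generation model you choose:

```python
TEXT_MODEL = "deepseek-v4-flash"
MULTIMODAL_MODEL = "your-vision-or-image-model"  # hypothetical placeholder


def route_step(step: dict) -> str:
    """Send text-only steps to V4; anything touching other modalities elsewhere."""
    modalities = set(step.get("inputs", ())) | set(step.get("outputs", ()))
    return TEXT_MODEL if modalities <= {"text"} else MULTIMODAL_MODEL


# Hypothetical pipeline: each step lists what it reads and what it produces.
pipeline = [
    {"name": "read screenshot", "inputs": {"image"}, "outputs": {"text"}},
    {"name": "summarise", "inputs": {"text"}, "outputs": {"text"}},
    {"name": "draw diagram", "inputs": {"text"}, "outputs": {"image"}},
]
plan = {step["name"]: route_step(step) for step in pipeline}
```

Checking both inputs and outputs matters: "draw diagram" reads only text but still needs an image-generation model.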

Privacy, availability and data handling

This is where the two products diverge most, and it’s the dimension most business buyers care about after price.

DeepSeek: servers in China, subject to Chinese law. Conversations with the web chat and mobile app are processed on DeepSeek’s infrastructure and may be stored and used for training unless you opt out (the web chat has a training-data toggle; check the current setting before sending sensitive prompts). The service was blocked by Italy’s Garante (data-protection authority) in January 2025 over GDPR concerns and has faced state-level restrictions in Texas, New York, Virginia and other US states on government devices. For privacy-sensitive deployments, the self-hosted route is the honest answer: both V4-Pro and V4-Flash are MIT-licensed open weights on Hugging Face, so you can download the model and run it on hardware you control — zero data leaves your network. See our DeepSeek privacy guide for the full picture.

Gemini: Google infrastructure, globally distributed with region options in Vertex AI. Data handling under Google’s enterprise privacy terms (Workspace with Gemini, Vertex AI) means your prompts are not used to train consumer Gemini models by default. The consumer Gemini app has its own privacy settings. Gemini is broadly available in the US, EU, UK, Canada and Australia, and faces fewer regulatory restrictions than DeepSeek outside the countries where Google itself is blocked.

If your workload is privacy-sensitive and you can’t self-host, Gemini via Vertex AI (with the no-training-on-your-data enterprise contract) is the simpler choice. If you can self-host, V4 on your own GPUs is the strongest possible privacy answer — no third party ever sees your prompts.

Ecosystem: Google’s advantage

Gemini plugs directly into the Google ecosystem: Google Search grounding, Google Maps context, URL context retrieval, File Search, code execution with image output, and Workspace integrations (Docs, Sheets, Gmail, Meet). For a team already on Google Workspace, that integration is the buying rationale on its own. Google also operates an enterprise agent platform (Gemini Agent, currently US English only) that has no direct DeepSeek equivalent.

DeepSeek’s ecosystem is smaller. The API is OpenAI-compatible (and now Anthropic-compatible too), which means any existing LangChain, LlamaIndex, or OpenAI-SDK-using application can switch to V4 with a one-line base_url change — a meaningful kind of ecosystem compatibility. But DeepSeek does not ship native Slack/Workspace connectors or an end-user agent product; you build the integration yourself or pull from the open-source community.
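As a rough illustration of that one-line swap, the sketch below keeps each provider’s base URL in a small table, so switching is a one-key change. The Anthropic-compatible path (https://api.deepseek.com/anthropic) comes from DeepSeek’s Anthropic API guide; the DeepSeek environment-variable name is an assumption, so confirm both against current docs:

```python
import os

# Provider -> connection settings. DEEPSEEK_API_KEY is a naming assumption.
PROVIDERS = {
    "openai": {"base_url": "https://api.openai.com/v1", "key_env": "OPENAI_API_KEY"},
    "deepseek": {"base_url": "https://api.deepseek.com", "key_env": "DEEPSEEK_API_KEY"},
    "deepseek-anthropic": {"base_url": "https://api.deepseek.com/anthropic",
                           "key_env": "DEEPSEEK_API_KEY"},
}


def client_kwargs(provider: str) -> dict:
    """Keyword args for OpenAI(...) or anthropic.Anthropic(...)."""
    cfg = PROVIDERS[provider]
    return {"base_url": cfg["base_url"], "api_key": os.environ.get(cfg["key_env"], "")}
```

With the openai package installed, OpenAI(**client_kwargs("deepseek")) gives a client for V4; the "deepseek-anthropic" entry feeds anthropic.Anthropic(...) the same way.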

Consumer access: free chat vs tiered subscription

The chatbot experiences are also structured differently. DeepSeek’s web chat and mobile app run V4 by default for everyone — free, no subscription, with a DeepThink toggle for thinking mode. File uploads, web search, and Canvas-like surfaces are included. Rate limits exist but aren’t published as fixed figures.

Google’s Gemini app is a tiered product. The free plan provides Gemini 3 Flash as the “Auto” model, with limited access to Thinking (3 Pro). Google AI Pro ($19.99/month in the US) unlocks full Gemini 3 Pro access including Deep Think, multimodal reasoning, long-form processing, and Workspace integration. Google AI Ultra ($249.99/month) adds Gemini 3.1 Pro, Deep Research, the Gemini Agent (US English only), and Veo 3.1 for video generation. If you want the frontier-tier Gemini model without restrictions, you’re committing to a subscription. DeepSeek V4 on chat.deepseek.com is free at the same capability tier.

When to pick DeepSeek V4, when to pick Gemini

Pick DeepSeek V4 if:

- Cost per token is the primary constraint — V4-Flash is 10×+ cheaper than Gemini 3 Flash on output.

- You need open weights for self-hosting, fine-tuning, or regulatory reasons. V4-Pro and V4-Flash are MIT-licensed on Hugging Face.

- Your workload is text-only — chat, summarisation, RAG, standard coding, long-context analysis.

- You’re already on an OpenAI-compatible or Anthropic-compatible stack: swap base_url and you’re running V4.

- You’re doing high-volume API work where a ~5× cost gap compounds materially over a year.

Pick Gemini 3.1 Pro or 3 Flash if:

- You need native multimodal (image understanding, video, audio) on a single model endpoint.

- You need Google Search grounding or tight Workspace / Docs / Sheets integration.

- Your buyer or compliance team has concerns about Chinese-hosted data processing and self-hosting isn’t an option.

- You want the polished first-draft writing style that Gemini tends to produce on long-form content.

- You need Veo 3.1 for video generation or the Gemini Agent product.

Pick both and route:

The sophisticated answer for teams at scale is to route each task to the cheapest model that passes your quality bar. V4-Flash for high-volume classification, extraction, and routine coding. Gemini 3.1 Pro via Vertex AI for anything multimodal or Search-grounded. V4-Pro at reasoning_effort="max" for frontier-tier agentic coding where the price gap pays for itself over the evaluation cycle. Multi-model routing is the 2026 default for any application past the pilot stage.
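A minimal sketch of that routing rule, assuming you already run your own evals and record which task types each model clears your bar on. The output prices are this article’s figures (V4-Pro at the promo rate); the model labels, task names and pass sets are hypothetical placeholders, not real eval results:

```python
# Output prices per 1M tokens as quoted in this article (V4-Pro at promo).
OUTPUT_PRICE = {
    "deepseek-v4-flash": 0.28,
    "deepseek-v4-pro": 0.87,
    "gemini-3-flash": 3.00,   # label only; not necessarily the API model ID
    "gemini-3.1-pro": 12.00,  # label only; not necessarily the API model ID
}


def route(task: str, passed: dict, prices: dict = OUTPUT_PRICE) -> str:
    """Cheapest model whose eval results say it clears the bar for `task`."""
    eligible = [m for m, tasks in passed.items() if task in tasks and m in prices]
    if not eligible:
        raise LookupError(f"no model has passed the bar for {task!r}")
    return min(eligible, key=prices.__getitem__)


# Hypothetical eval outcome: task types each model cleared YOUR bar on.
passed = {
    "deepseek-v4-flash": {"classification", "extraction", "routine_coding"},
    "deepseek-v4-pro": {"classification", "extraction", "routine_coding", "agentic_coding"},
    "gemini-3-flash": {"classification", "extraction", "image_qa"},
    "gemini-3.1-pro": {"classification", "extraction", "agentic_coding",
                       "image_qa", "grounded_search"},
}
```

Keying the choice on pass/fail sets rather than raw scores keeps the quality bar inside your eval harness, so the router only has to sort eligible models by price.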

Related comparisons and further reading

If you’re evaluating across multiple providers, our DeepSeek vs ChatGPT and DeepSeek vs Claude comparisons use the same April 2026 benchmark frame. For the broader open-weight landscape, see the DeepSeek alternatives hub. For a full technical deep-dive on the V4 family, start at DeepSeek V4, then the tier-specific pages for V4-Pro and V4-Flash. Full comparison-hub index: DeepSeek comparisons.

DeepSeek AI Guide is an independent resource and is not affiliated with DeepSeek or its parent company, nor with Google or Alphabet. Model IDs, pricing, and API behaviour change; check the official DeepSeek pricing page and Gemini API pricing page before committing to a production decision.

DeepSeek vs Gemini: frequently asked questions

Is DeepSeek V4 better than Gemini 3.1 Pro?

On SWE-Bench Verified they’re tied at 80.6 %. On Terminal-Bench 2.0, DeepSeek V4-Pro is reportedly ahead on DeepSeek’s own numbers; Gemini 3.1 Pro sits at 68.5 %. On ARC-AGI-2 and GPQA Diamond, Gemini 3.1 Pro has published scores (77.1 % and 94.3 % respectively) and V4-Pro has not at Preview. So “better” depends on the task. V4-Pro is ~14× cheaper per output token at the 75% promo through 2026-05-31 (~3.4× at list) and ships MIT weights; Gemini 3.1 Pro wins on multimodal and published reasoning breadth. See our V4-Pro deep-dive for full benchmarks.

Which is cheaper for API use, DeepSeek V4 or Gemini 3?

DeepSeek, by a meaningful margin. V4-Flash runs $0.14 input (cache-miss) and $0.28 output per 1M tokens; Gemini 3 Flash runs $0.50 input and $3.00 output per 1M tokens. For the frontier tier, V4-Pro is $0.435/$0.87 promo through 2026-05-31 (list $1.74/$3.48) vs Gemini 3.1 Pro’s $2.00/$12.00. Even after accounting for Gemini’s implicit caching, DeepSeek is ~3–10× cheaper depending on tier and workload mix. For a step-by-step breakdown see DeepSeek API pricing.

Can I self-host DeepSeek V4 but not Gemini?

Yes. Both V4-Pro (1.6T total / 49B active) and V4-Flash (284B / 13B) are published on Hugging Face under MIT license, so you can download the weights, run them on your own hardware, and fine-tune. Gemini 3 and 3.1 are proprietary — there is no downloadable weight release for any Gemini model in 2026. If self-hosting is a requirement (regulatory, privacy, latency, offline use), DeepSeek is the only option between the two. See is DeepSeek open source for licensing specifics.

Does Gemini have a thinking mode like DeepSeek’s DeepThink?

Yes. Gemini 3 uses a thinking_level API parameter with values minimal, low, medium, and high; the default is high. DeepSeek V4 uses reasoning_effort with values high and max, and defaults to thinking enabled (pass extra_body={"thinking": {"type": "disabled"}} for non-thinking). In the consumer Gemini app, Deep Think is the user-facing name for Pro / Ultra subscribers. The DeepThink toggle in DeepSeek’s web chat plays the same role. On the API side, DeepSeek returns the reasoning trace as reasoning_content alongside the final answer, while Gemini returns thought summaries rather than the raw trace, and only when you request them.

Which model is safer for enterprise data?

Depends on your threat model. Gemini via Vertex AI, on Google’s enterprise contract, processes data under Google’s no-training-on-your-data terms and runs on globally distributed infrastructure. DeepSeek’s hosted service runs on Chinese-based infrastructure under Chinese law, which some compliance teams will not accept — Italy’s Garante ordered the app blocked in January 2025 and several US states have restricted it on government devices. The strongest privacy answer for DeepSeek is self-hosting the open weights; see our DeepSeek privacy guide. For the hosted-API comparison, enterprise Gemini via Vertex AI has fewer regulatory asterisks than hosted DeepSeek.

Sources & Methodology

Primary sources

- Official: DeepSeek API documentation. Endpoints, request/response shape, model IDs. Last checked: April 30, 2026.

- Pricing: DeepSeek API pricing page. V4-Flash / V4-Pro per-1M-token rates, billing units, cache-hit pricing. Last checked: April 30, 2026.

- Model card: DeepSeek on Hugging Face. Weights, license, usage notes per model. Last checked: April 30, 2026.

- Repository: DeepSeek-AI on GitHub. Open-weight release details, training/inference notes. Last checked: April 30, 2026.

- Pricing: Anthropic Claude pricing. Claude API rates for cross-vendor comparisons. Last checked: April 30, 2026.

- Pricing: OpenAI API pricing. OpenAI API rates for cross-vendor comparisons. Last checked: April 30, 2026.

- Pricing: Google Gemini API pricing page. Gemini 3 Flash / 3.1 Pro input and output rates for cross-vendor comparisons. Last checked: April 30, 2026.

Technical references

- Technical report: DeepSeek-V3 technical report (arXiv:2412.19437). V3 architecture and training methodology. Last checked: April 30, 2026.

- Technical report: DeepSeek-R1 paper (arXiv:2501.12948). R1 reasoning approach, distillation results. Last checked: April 30, 2026.

- Benchmark: SWE-Bench Verified leaderboard. Software-engineering benchmark scores. Last checked: April 30, 2026.

- Benchmark: LiveCodeBench. Live coding benchmark scores. Last checked: April 30, 2026.

Methodology

Pricing was normalised per 1 million input and output tokens against each vendor's official pricing page on the review date. Benchmark scores were treated as directional indicators, not guarantees of real-world performance. Practical comparisons also weighed coding, reasoning, summarisation, and developer-workflow scenarios.

Data confidence

High for official pricing and feature presence; medium for cross-vendor benchmark comparisons; low for subjective workflow verdicts.

Editorial note

Pricing and model line-ups change frequently in this market. The verdicts here are calibrated for the date shown above and should be re-checked before final purchasing decisions.