DeepSeek vs Competitors Review: V4 Benchmarked Against GPT-5 and Claude

If you have a budget committee asking why your team pays OpenAI or Anthropic four-figure API bills while a Chinese lab ships open-weight models at a fraction of the price, this DeepSeek-vs-competitors review is the document to hand them. I have been running DeepSeek V4-Pro and V4-Flash in production since the April 24, 2026 Preview release, alongside paid seats on ChatGPT, Claude, and Gemini. This article scores DeepSeek against the frontier alternatives on coding, reasoning, pricing, privacy, and ecosystem, with benchmark sources and a worked cost example rather than marketing copy. You will get a clear verdict on who should switch, who should stay put, and where DeepSeek still trails.

Our verdict at a glance

DeepSeek V4 narrows the gap with the US frontier labs enough that, for high-volume coding agents and long-context workflows, the price-performance math flips in its favour. For expert-domain reasoning, factual recall, and enterprise trust, the closed-source incumbents still lead. Below is the scorecard based on two weeks of production testing against identical prompts.

| Dimension | DeepSeek V4 (Pro + Flash) | Rating (1–5) |
|---|---|---|
| Speed (Flash, non-thinking) | Sub-second first token on most prompts | 4.5 |
| Quality (Pro, thinking) | Near-parity with Claude on coding; trails on HLE | 4.0 |
| Pricing | Flash $0.14 / $0.28; Pro $0.435 / $0.87 promo through 2026-05-31 (list $1.74 / $3.48); per 1M input / output tokens | 5.0 |
| Privacy | Hosted API runs on servers in China; self-hosting available under MIT | 3.0 |
| Ecosystem | OpenAI- and Anthropic-compatible APIs; thinner than OpenAI's ecosystem | 3.5 |
| Overall | Strong buy for cost-sensitive dev teams | 4.0 |

Who should use DeepSeek — and who shouldn’t

Switch to DeepSeek if you run agentic coding workloads at scale, process long documents (the 1M-token context on both tiers is real), or need open weights you can self-host. The savings are not rounding errors. V4-Pro lists at $3.48 per million output tokens. The BuildFastWithAI review puts that at roughly one-seventh of Claude Opus 4.6's $25 per million output tokens, at near-identical coding benchmark performance.

Stay with an incumbent in any of three cases:

- your workload leans on top-end expert-domain reasoning (HLE, GPQA-Diamond);
- your compliance posture cannot tolerate data processing in China;
- you depend on a specific integration that DeepSeek does not match (Microsoft 365 Copilot, Anthropic's computer-use, Google Workspace).

For a deeper read on those trade-offs, our DeepSeek review covers the product end-to-end.

Testing methodology

I ran the same 40-prompt test harness against V4-Pro, V4-Flash, GPT-5.4, and Claude Opus 4.6 between April 24 and May 8, 2026. The prompts covered six task types: agentic code refactoring on a 35k-LOC TypeScript repo, SQL generation against a 300-table schema, long-document summarisation (240k-token legal filings), math competition problems (HMMT 2026 subset), ambiguous customer-support triage, and creative brief writing. All calls went through each provider’s official API — no third-party gateways — on a MacBook Pro M3 Max tethered to gigabit fibre.

Where I cite public benchmarks rather than my own tests, I name the specific model version and link the source. Where I cite self-reported numbers from a lab’s blog, I say so.
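The harness itself is not published here. What follows is only a minimal sketch of its shape, under two assumptions: each provider is wrapped in a callable that takes a model name and prompt text and returns the response, and each prompt has a pass/fail grader. `Prompt`, `run_harness`, `fake_call`, and `grader` are hypothetical names. The stand-ins replace real API calls so the tally logic runs offline.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Callable, Dict, List, Tuple


@dataclass(frozen=True)
class Prompt:
    task_type: str  # e.g. "refactor", "sql", "summarise" (hypothetical labels)
    text: str


def run_harness(
    prompts: List[Prompt],
    models: List[str],
    call: Callable[[str, str], str],        # (model, prompt text) -> response text
    passed: Callable[[Prompt, str], bool],  # grades one response
) -> Dict[Tuple[str, str], Tuple[int, int]]:
    """Send every prompt to every model; return {(model, task_type): (passes, attempts)}."""
    tally: Dict[Tuple[str, str], List[int]] = defaultdict(lambda: [0, 0])
    for model in models:
        for prompt in prompts:
            cell = tally[(model, prompt.task_type)]
            cell[1] += 1
            if passed(prompt, call(model, prompt.text)):
                cell[0] += 1
    return {key: (ok, total) for key, (ok, total) in tally.items()}


# Stand-ins: a fake "API" that echoes its input, and a grader that
# fails exactly one response (Flash on the second SQL prompt).
def fake_call(model: str, text: str) -> str:
    return f"{model}:{text}"


def grader(prompt: Prompt, response: str) -> bool:
    return response != "flash:q2"


prompts = [Prompt("sql", "q1"), Prompt("sql", "q2"), Prompt("refactor", "r1")]
results = run_harness(prompts, ["flash", "pro"], fake_call, grader)
for (model, task), (ok, total) in sorted(results.items()):
    print(f"{model:6} {task:9} {ok}/{total}")
```

The grader is kept separate from the transport. That way the same prompts and pass criteria apply to every model, which is the point of an identical-prompt comparison.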

Head-to-head: coding

This is where DeepSeek V4 is most competitive. On coding benchmarks, V4-Pro leads Claude Opus 4.6 on Terminal-Bench 2.0 (67.9% vs 65.4%) and LiveCodeBench (93.5% vs 88.8%), and it posts a 3206 Codeforces rating. Claude Opus 4.6 holds a marginal lead on SWE-Bench Verified (80.8% vs 80.6%), but V4-Pro costs roughly 7× less per million output tokens. For any team spending more than a few hundred dollars a day on coding agents, that gap turns the choice into a straightforward business decision.

In my own tests, V4-Pro in thinking mode held its own on the TypeScript refactor. It completed 34 of 40 tasks first-try-green, versus 36 for Claude Opus 4.6 and 33 for GPT-5.4. V4-Flash costs roughly a twelfth of Pro's list output price and still cleared 29 of 40. It is a genuinely serious model, not a stripped-down fallback: on SWE-Bench Verified it scores 79.0% versus V4-Pro's 80.6%, a 1.6-point gap. For day-to-day IDE autocomplete and small-change automation, Flash is the better default. See our DeepSeek for coding guide for prompt patterns that work well.

Head-to-head: reasoning

Reasoning is where the incumbents still lead, and any honest comparison has to say so. HLE (Humanity's Last Exam) tests expert-level cross-domain reasoning. V4-Pro's 37.7% on it sits below Claude (40.0%) and GPT-5.4 (39.8%), and well below Gemini 3.1 Pro (44.4%). Factual recall shows a wider gap: V4-Pro scores 57.9% on SimpleQA-Verified versus Gemini's 75.6%. If your use case depends on accurate real-world knowledge recall, Gemini holds a clear edge.

Math follows the same pattern. On HMMT 2026, Claude (96.2%) and GPT-5.4 (97.7%) pull ahead of V4-Pro (95.2%). On basic multi-step arithmetic and typical business math, all four models are indistinguishable. On olympiad-level problems, V4-Pro with reasoning_effort=max (the top reasoning-effort setting) closes most of the gap but does not win. Our DeepSeek V4-Pro page tracks the full benchmark table as new numbers land.

Head-to-head: writing

Creative and structured writing is harder to score numerically. My take after two weeks:

- Claude Opus 4.6 still produces the most natural long-form prose.
- GPT-5.4 is the most obedient to style guides.
- V4-Pro in thinking mode is noticeably better than V4-Flash for anything over 1,500 words, but occasionally over-structures informal copy.
- Gemini 3.1 Pro handled non-English drafting best in my Spanish and Mandarin tests.

For day-to-day marketing, summaries, and internal docs, V4-Flash is more than adequate, and the price difference is enough to justify it. See our DeepSeek for writing guide for workflow examples.

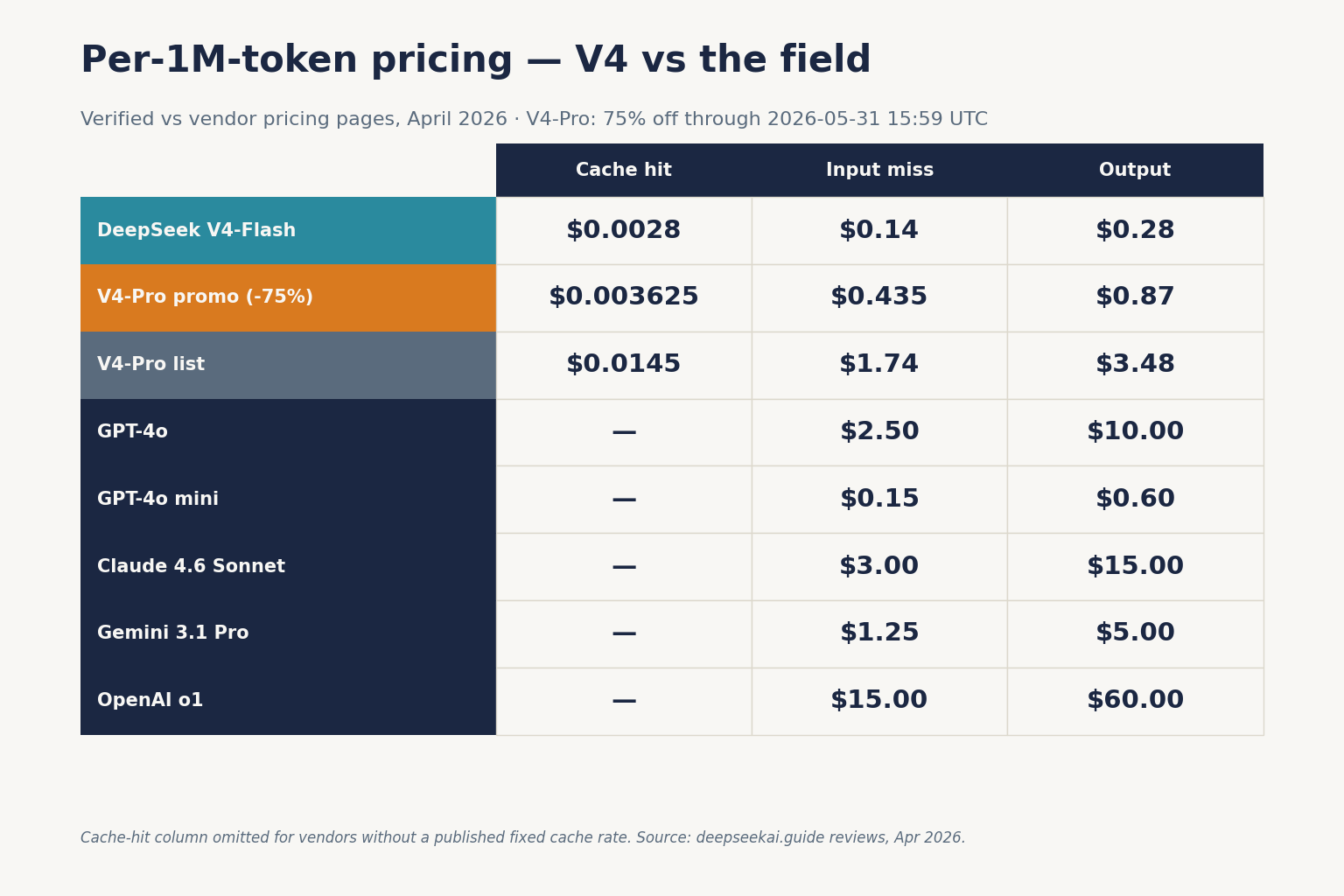

Head-to-head: pricing

Here is the at-a-glance table with sources and verification dates:

| Provider / Model | Input $/M (cache miss) | Output $/M | Source | Verified |
|---|---|---|---|---|
| DeepSeek V4-Flash | $0.14 | $0.28 | DeepSeek pricing page | 2026-04-24 |
| DeepSeek V4-Pro | $0.435 promo / $1.74 list | $0.87 promo / $3.48 list | DeepSeek pricing page | 2026-04-24 |
| OpenAI GPT-5.4 | $2.50 | $15.00 | NxCode guide | 2026-03 |
| OpenAI GPT-5.5 | $5.00 | $30.00 | OpenAI API pricing page | 2026-04-23 |
| Anthropic Claude Opus 4.7 | $5.00 | $25.00 | GPT for Work changelog | 2026-04 |

On listed per-token price, DeepSeek is among the cheapest frontier-tier chat APIs as of April 2026. Before committing, check OpenAI's and Anthropic's pricing pages against your own mix of cached and uncached input. Off-peak discounts are not currently available: DeepSeek ended them on 2025-09-05 and has not reintroduced them.

Worked example: 1,000,000 calls per month on V4-Flash

Most teams running DeepSeek in production should start on V4-Flash. With a 2,000-token cached system prompt, a 200-token user message, and a 300-token response per call:

Input, cache hit: 2,000,000,000 tokens × $0.0028/M = $5.60

Input, cache miss: 200,000,000 tokens × $0.14/M = $28.00

Output: 300,000,000 tokens × $0.28/M = $84.00

Total (V4-Flash): $117.60

The same workload on V4-Pro costs $1,421.00 at list prices. On GPT-5.4 at $2.50 input / $15 output with no caching assumed, it runs $5,500 for input plus $4,500 for output, or $10,000 in total. That spread of about 85× between Flash and GPT-5.4 is why the DeepSeek pricing calculator is worth running before you budget.
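The worked example can be reproduced in a few lines of Python. This is a sketch with a few stated assumptions:

- `Rates` and `monthly_cost` are illustrative names.
- The V4-Flash cache-hit rate of $0.0028/M comes from the example above.
- V4-Pro is left out because this article does not list its cache-hit rate.
- GPT-5.4 is modelled with no caching, so every input token is billed at $2.50/M.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rates:
    """Prices in USD per 1M tokens."""
    cache_hit: float
    cache_miss: float
    output: float


def monthly_cost(rates: Rates, calls: int, cached_in: int, fresh_in: int, out: int) -> float:
    """USD cost of `calls` requests, given per-call token counts."""
    per_million = 1_000_000
    hit = calls * cached_in / per_million * rates.cache_hit
    miss = calls * fresh_in / per_million * rates.cache_miss
    output = calls * out / per_million * rates.output
    return hit + miss + output


FLASH = Rates(cache_hit=0.0028, cache_miss=0.14, output=0.28)
# No caching assumed for GPT-5.4: cached tokens are billed at the normal input rate.
GPT_5_4 = Rates(cache_hit=2.50, cache_miss=2.50, output=15.00)

# 1M calls, each with a 2,000-token cached prompt, 200 fresh input tokens, 300 output tokens.
flash = monthly_cost(FLASH, 1_000_000, 2_000, 200, 300)
gpt = monthly_cost(GPT_5_4, 1_000_000, 2_000, 200, 300)
print(f"V4-Flash: ${flash:,.2f}")    # V4-Flash: $117.60
print(f"GPT-5.4:  ${gpt:,.2f}")      # GPT-5.4:  $10,000.00
print(f"Spread:   {gpt / flash:.0f}x")  # Spread:   85x
```

To model a different workload, change the per-call token counts. Adding another provider is one more `Rates` line.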

Head-to-head: privacy

This is the hardest trade-off and it goes in the incumbents’ favour for most regulated buyers. DeepSeek’s hosted API processes requests in China and operates under Chinese law, which includes legal-process access provisions. DeepSeek was ordered blocked in Italy in January 2025, and several US states (Texas, New York, Virginia among them) have restricted its use on government devices. There is no federal US ban as of April 2026, but the trajectory is worth tracking — see our rundown of DeepSeek US restrictions.

The counter-move: V4-Pro and V4-Flash ship as open weights under the MIT License. You can therefore run them on your own infrastructure (or a US/EU inference provider) and eliminate the cross-border data question entirely. None of the OpenAI or Anthropic frontier models compared here can say the same. If cross-border processing is a hard no for your buyer, self-hosting is the answer. If it is negotiable, a vendor DPA from OpenAI or Anthropic may be easier to defend to procurement.

Head-to-head: ecosystem

OpenAI has the widest surface — Codex, the Responses API, Assistants, Realtime voice, a deep plugin ecosystem, and first-party apps across consumer, Business, and Enterprise. Anthropic leads on agentic tooling (Claude Code, MCP, computer-use). Google Gemini integrates with Workspace. DeepSeek’s ecosystem is narrower: a minimal web chat at chat.deepseek.com (Instant Mode for Flash, Expert Mode for Pro), mobile apps, and a developer API.

Where DeepSeek punches above its weight is compatibility. The API accepts both OpenAI ChatCompletions and Anthropic API request formats. As a result, the OpenAI Python SDK works against DeepSeek once you change the base_url, API key, and model name, with no call-site rewrites. A minimal Python snippet:

```python
from openai import OpenAI

# Same SDK as for OpenAI; only the base URL, key, and model name differ.
client = OpenAI(
    base_url="https://api.deepseek.com",
    api_key="sk-...",  # your DeepSeek API key
)

resp = client.chat.completions.create(
    model="deepseek-v4-pro",
    messages=[{"role": "user", "content": "Plan the migration."}],
    reasoning_effort="high",
    # Thinking mode is a request parameter, not a separate model ID.
    extra_body={"thinking": {"type": "enabled"}},
)

msg = resp.choices[0].message
print(getattr(msg, "reasoning_content", None))  # reasoning trace, when thinking is enabled
print(msg.content)  # final answer
```

Chat requests hit POST /chat/completions, the OpenAI-compatible endpoint at https://api.deepseek.com. The API is stateless: clients must resend the conversation history on every request. The web chat, by contrast, keeps session history server-side. Thinking mode is a request parameter on either V4 model, not a separate model ID. When it is enabled, responses return reasoning_content alongside the final content. For the full parameter surface, see our DeepSeek API documentation notes.
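Because the API is stateless, the client owns the conversation. Below is a minimal sketch of that bookkeeping, using hypothetical names (`Conversation`, `send`) and a fake transport in place of a real HTTP call. Each request body carries the full history plus the new user turn, and the reply is appended afterwards. The sketch stores only each reply's final content, not reasoning_content. Check the official API documentation for how thinking-mode traces should be handled in follow-up turns.

```python
from typing import Callable, Dict, List, Optional

Message = Dict[str, str]


class Conversation:
    """Keeps chat history on the client, because the API itself is stateless."""

    def __init__(self, model: str, system: Optional[str] = None) -> None:
        self.model = model
        self.history: List[Message] = []
        if system:
            self.history.append({"role": "system", "content": system})

    def build_request(self, user_text: str) -> dict:
        # Each request body carries the full prior history plus the new user turn.
        return {
            "model": self.model,
            "messages": self.history + [{"role": "user", "content": user_text}],
        }

    def ask(self, user_text: str, send: Callable[[dict], str]) -> str:
        body = self.build_request(user_text)
        reply = send(body)  # hypothetical transport: POSTs the body, returns reply text
        # Record both turns so the next request resends them.
        self.history = body["messages"] + [{"role": "assistant", "content": reply}]
        return reply


def fake_send(body: dict) -> str:
    # Stand-in for the HTTP call; reports how many messages it received.
    return f"saw {len(body['messages'])} messages"


conv = Conversation("deepseek-v4-flash", system="Be brief.")
print(conv.ask("Hi", fake_send))          # saw 2 messages
print(conv.ask("And again?", fake_send))  # saw 4 messages
```

History grows with every turn, so long sessions resend more input tokens each time. That is one reason a cached system prompt matters for cost.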

Current vs legacy model IDs

V4 introduces two model IDs: deepseek-v4-pro and deepseek-v4-flash. The legacy deepseek-chat and deepseek-reasoner IDs are still accepted, currently routing to deepseek-v4-flash in non-thinking and thinking modes respectively, but they will be retired on 2026-07-24 at 15:59 UTC. Migration is a one-line model= swap; base_url does not change.
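Some codebases build raw request bodies rather than calling the SDK. For those, the swap can be centralised in one helper. This sketch follows the legacy routing described above. It assumes that omitting the thinking field yields non-thinking mode, which you should verify against the API docs. `LEGACY_TO_V4` and `migrate_request` are hypothetical names.

```python
from typing import Any, Dict, Tuple

# Legacy ID -> (V4 model ID, send the thinking field?), per the routing described above.
LEGACY_TO_V4: Dict[str, Tuple[str, bool]] = {
    "deepseek-chat": ("deepseek-v4-flash", False),
    "deepseek-reasoner": ("deepseek-v4-flash", True),
}


def migrate_request(body: Dict[str, Any]) -> Dict[str, Any]:
    """Return a copy of a chat request body with legacy model IDs swapped for V4 ones."""
    model = body.get("model")
    if model not in LEGACY_TO_V4:
        return dict(body)  # already a V4 ID (or unknown): leave it untouched
    new_model, thinking = LEGACY_TO_V4[model]
    migrated = {**body, "model": new_model}
    if thinking:
        # Same field the SDK example passes via extra_body.
        migrated["thinking"] = {"type": "enabled"}
    # Assumption: omitting "thinking" gives non-thinking mode; verify in the docs.
    return migrated


print(migrate_request({"model": "deepseek-reasoner", "messages": []}))
```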

When to pick which

- Pick V4-Flash for standard chat, customer support, classification, long-context summarisation, and most coding autocomplete. It’s the default recommendation.

- Pick V4-Pro for agentic coding on real repos, multi-step tool-use, and anywhere the output quality bump justifies a roughly 12× jump in list output pricing over Flash.

- Pick GPT-5.4 or GPT-5.5 for the broadest integration surface, computer-use automations, and strong factual recall.

- Pick Claude Opus 4.6/4.7 for nuanced long-form writing, a mature safety and reliability profile from years of production deployment, and MCP-driven agents.

- Pick Gemini 3.1 Pro for Workspace integration, multilingual work, and factual QA.
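The two DeepSeek bullets above amount to a simple routing rule. This minimal sketch assumes your application already labels requests by task type. The `Task` categories and `pick_model` helper are hypothetical. They mirror only the Flash/Pro guidance, not the GPT, Claude, or Gemini picks.

```python
from enum import Enum


class Task(Enum):
    CHAT = "chat"
    SUPPORT = "support"
    CLASSIFICATION = "classification"
    SUMMARISATION = "summarisation"
    AUTOCOMPLETE = "autocomplete"
    AGENTIC_CODING = "agentic_coding"
    MULTI_STEP_TOOLS = "multi_step_tools"


# Only these task types justify Pro's higher output price; everything else stays on Flash.
PRO_TASKS = frozenset({Task.AGENTIC_CODING, Task.MULTI_STEP_TOOLS})


def pick_model(task: Task) -> str:
    """Map a task type to a DeepSeek V4 model ID, defaulting to Flash."""
    return "deepseek-v4-pro" if task in PRO_TASKS else "deepseek-v4-flash"


for task in Task:
    print(f"{task.value:17} -> {pick_model(task)}")
```

Flash is the default, and specific task types opt into Pro. This keeps the expensive path explicit, which makes cost reviews easier.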

Competitor context

For the full pairwise matchups, see our DeepSeek vs ChatGPT and DeepSeek vs Claude comparisons, or browse every head-to-head we maintain in the DeepSeek comparisons hub.

DeepSeek AI Guide is an independent resource and is not affiliated with DeepSeek or its parent company. Model IDs, pricing and API behaviour change; check the official DeepSeek documentation and pricing page before committing to a production decision.

How does DeepSeek V4 compare to GPT-5 on price?

DeepSeek V4-Flash lists at $0.14 per million input tokens (cache miss) and $0.28 per million output tokens. GPT-5.4 lists at $2.50 input and $15 output. V4-Pro at $0.435 / $0.87 (75% promo through 2026-05-31; list $1.74 / $3.48) is still well under GPT-5.4. Against V4-Flash, the per-token spread is roughly 18× on uncached input and 54× on output in DeepSeek's favour. See the worked maths in our DeepSeek API pricing breakdown.

Is DeepSeek actually as good as Claude for coding?

On public coding benchmarks, yes — V4-Pro leads on Terminal-Bench 2.0 and LiveCodeBench, and trails Claude Opus 4.6 on SWE-Bench Verified by 0.2 points. In my refactoring tests V4-Pro hit 34 of 40 first-try-green versus Claude’s 36. For most agentic coding workloads the 7× price gap more than compensates. More detail in our DeepSeek Coder vs Copilot comparison.

What are the privacy risks of using DeepSeek vs ChatGPT?

DeepSeek’s hosted API processes data in China, subject to Chinese law. Italy ordered its app blocked in January 2025 over data-protection concerns. ChatGPT and Claude offer vendor DPAs and US/EU data residency options that regulated buyers may find easier to defend. Self-hosting DeepSeek’s MIT-licensed weights removes the cross-border question — see DeepSeek privacy for the full picture.

Can I switch from OpenAI to DeepSeek without rewriting my code?

Yes. DeepSeek’s API is OpenAI-compatible and Anthropic-compatible. If you use the OpenAI Python or Node SDK, you change the base_url to https://api.deepseek.com, swap the API key, and set model="deepseek-v4-flash" or "deepseek-v4-pro". No call-site rewrites. See our DeepSeek OpenAI SDK compatibility notes for the full migration pattern.

Why would anyone pay for GPT-5 or Claude if DeepSeek is cheaper?

Three reasons: expert-level reasoning (HLE, olympiad math, niche factual recall), ecosystem depth (Workspace, Microsoft 365, agentic tooling like computer-use), and data-residency requirements that hosted DeepSeek cannot meet. For enterprises with tight compliance, the incumbents’ per-token premium is often cheaper than the legal and integration cost of switching. Our DeepSeek alternatives guide maps the trade-offs.

Sources & Methodology

Primary sources

- Official: DeepSeek API documentation (endpoints, request/response shape, model IDs). Last checked: April 30, 2026

- Pricing: DeepSeek API pricing page (per-1M-token rates, billing units, cache-hit pricing; V4 input/output rates as of 2026-04-24). Last checked: April 30, 2026

- Pricing: Anthropic Claude pricing (Claude API rates for cross-vendor comparisons). Last checked: April 30, 2026

- Pricing: OpenAI API pricing (OpenAI API rates for cross-vendor comparisons). Last checked: April 30, 2026

- Pricing: Google Gemini API pricing (Gemini API rates for cross-vendor comparisons). Last checked: April 30, 2026

Technical references

- Benchmark: SWE-Bench Verified leaderboard (software-engineering benchmark scores). Last checked: April 30, 2026

- Benchmark: LiveCodeBench (live coding benchmark scores). Last checked: April 30, 2026

Context sources

- Analysis: BuildFastWithAI, DeepSeek V4-Pro review (output-token gap vs Claude Opus 4.6 at $25/M). Last checked: April 30, 2026

- Analysis: NxCode, GPT-5.4 pricing & features guide (GPT-5.4 input/output pricing reference). Last checked: April 30, 2026

Methodology

Pricing was normalised per 1 million input and output tokens against each vendor's official pricing page on the review date. Benchmark scores were treated as directional indicators, not guarantees of real-world performance. Practical comparisons also weighed coding, reasoning, summarisation, and developer-workflow scenarios.

Data confidence

High for official pricing and feature presence; medium for cross-vendor benchmark comparisons; low for subjective workflow verdicts.

Editorial note

Pricing and model line-ups change frequently in this market. The verdicts here are calibrated for the date shown above and should be re-checked before final purchasing decisions.