The Best Chinese AI Models in 2026: A Practitioner’s Comparison

Which Chinese lab actually ships the model you should put in production today? That’s the question I get most often, and the honest answer changed twice in the last quarter. In April 2026 the field is no longer a one-horse race: DeepSeek’s V4 release sits next to Moonshot’s Kimi K2.6, Alibaba’s Qwen 3.6 family, Zhipu’s GLM-5/5.1 and MiniMax’s M2.5, each strong in a different lane. I’ve run all of them in real workloads (production chat, agentic coding, long-document analysis) and tracked their pricing pages weekly. This guide ranks them by what matters for English-speaking developers and teams: price-per-token, benchmark evidence, licensing, and where each one actually wins. You’ll get a comparison table, a worked cost example, and clear “pick this if…” guidance.

The shortlist: five labs worth your attention

If you’re building on a Chinese model in 2026, five labs cover almost every credible option. The other names (Baichuan, 01.AI, ByteDance Doubao, Tencent Hunyuan) ship interesting work, but the labs below are the ones competing on the open-weight frontier and exposing public APIs in English.

- DeepSeek — Hangzhou-based, the price leader, two open-weight V4 tiers under MIT.

- Moonshot AI (Kimi) — Beijing-based, agentic coding specialists, K2.6 open weights.

- Alibaba (Qwen) — broad family, strong open-source heritage, mixing open and proprietary tiers.

- Zhipu AI (GLM / Z.ai) — reasoning- and knowledge-leaning, GLM-5 and GLM-5.1 trained on Huawei Ascend.

- MiniMax — Shanghai-based, agentic and coding-tuned, aggressive pricing on M2.5.

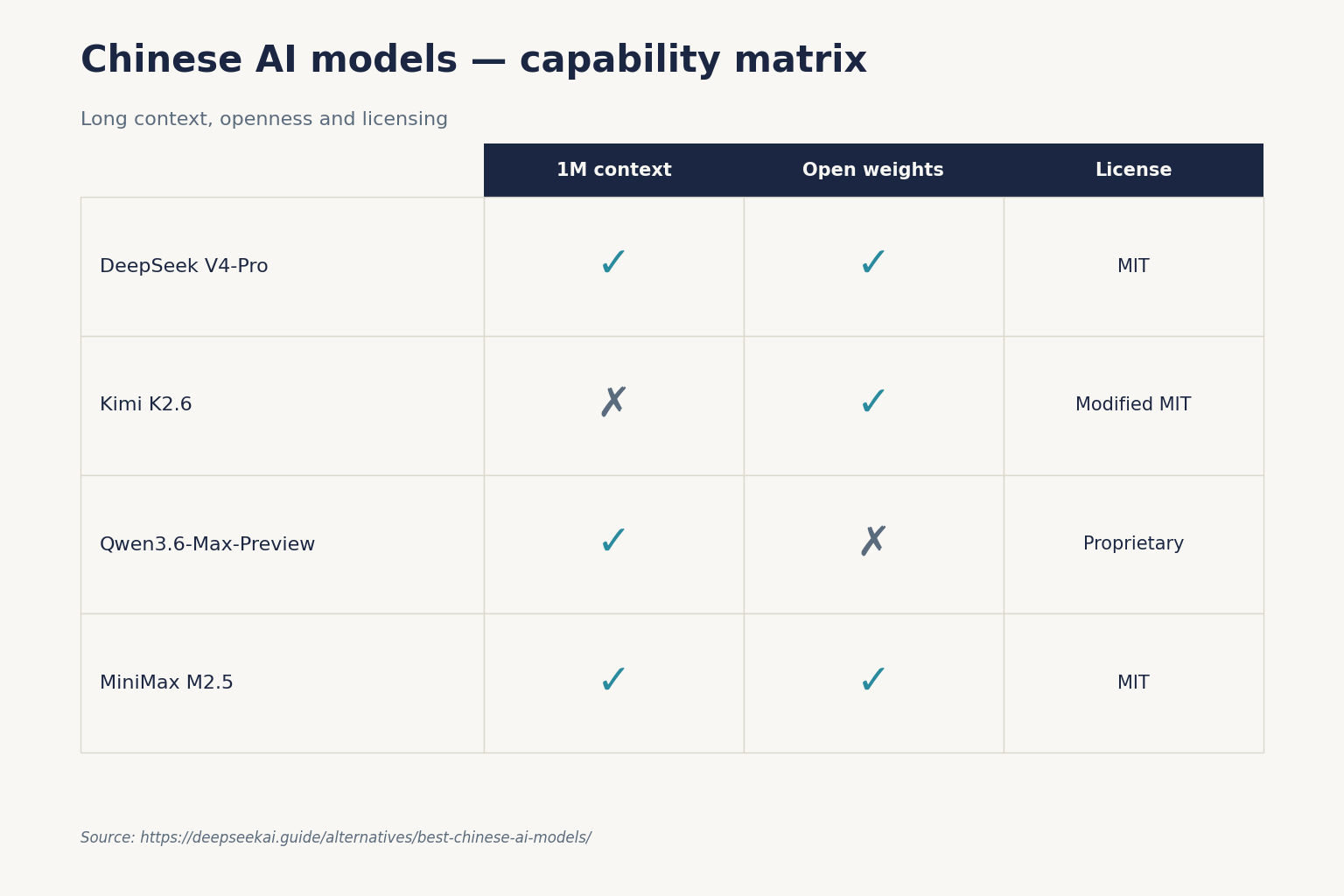

At-a-glance comparison (April 2026)

Every row uses figures I could verify against an official page or a primary news source within the last month. Cross-version comparisons (e.g., DeepSeek V4-Flash vs Kimi K2.6) are unavoidable here — these are the current generations of each lab as of April 25, 2026. Pricing is per 1M tokens in USD.

| Model | Lab | Architecture | Context (tokens) | Input, cache miss ($/1M) | Output ($/1M) | Weights |
|---|---|---|---|---|---|---|
| DeepSeek-V4-Flash | DeepSeek | 284B total / 13B active MoE | 1,000,000 | $0.14 | $0.28 | MIT |
| DeepSeek-V4-Pro | DeepSeek | 1.6T total / 49B active MoE | 1,000,000 | $0.435 promo / $1.74 list | $0.87 promo / $3.48 list | MIT |
| Kimi K2.6 | Moonshot AI | 1T total / 32B active MoE, 384 experts | 256,000 | $0.60 | $4.00 | Modified MIT |
| Qwen3.6-Max-Preview | Alibaba | Proprietary frontier tier | 1,000,000 | See provider | See provider | Proprietary, hosted only |
| Qwen3.6-35B-A3B | Alibaba | 35B total / 3B active MoE | Up to 256K | Self-host | Self-host | Apache 2.0 |
| GLM-5 | Zhipu AI | 745B total / ~44B active MoE, 256 experts (8 active) | ~202K | ~$0.60 | ~$2.20 | MIT (zai-org/GLM-5) |
| MiniMax M2.5 | MiniMax | 230B MoE | Up to 1M | $0.30 | $1.20 | MIT |

Sources: DeepSeek pricing page (V4 Flash/Pro rates); Alibaba Cloud Model Studio model docs; Moonshot, Zhipu and MiniMax pricing pages, accessed April 2026. Verify before committing — the V4 Preview window is days old at publication.
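To put rows with different input and output prices on one axis, one option is a blended price per 1M tokens at an assumed traffic mix. The sketch below uses the cache-miss input and output prices from the table (V4-Pro at list, GLM-5 at its approximate figures). The 75% input share and the names `PRICES`, `blended_price` and `rank_by_blended_price` are illustrative assumptions, not something any of the labs publish.

```python
# Prices per 1M tokens in USD, copied from the table: (input cache miss, output).
# GLM-5 figures are approximate in the table; V4-Pro uses list pricing.
PRICES = {
    "DeepSeek-V4-Flash": (0.14, 0.28),
    "DeepSeek-V4-Pro (list)": (1.74, 3.48),
    "Kimi K2.6": (0.60, 4.00),
    "GLM-5": (0.60, 2.20),
    "MiniMax M2.5": (0.30, 1.20),
}


def blended_price(input_price: float, output_price: float, input_share: float = 0.75) -> float:
    """Weighted average price per 1M tokens; input_share is an assumed traffic mix."""
    return input_price * input_share + output_price * (1.0 - input_share)


def rank_by_blended_price(prices: dict, input_share: float = 0.75) -> list:
    """Return (model, blended price) pairs sorted from cheapest to most expensive."""
    blended = {name: blended_price(i, o, input_share) for name, (i, o) in prices.items()}
    return sorted(blended.items(), key=lambda item: item[1])


if __name__ == "__main__":
    for name, price in rank_by_blended_price(PRICES):
        print(f"{name:<24} ${price:.3f} per 1M blended tokens")
```

Changing `input_share` to match your own traffic can reorder the middle of the ranking, since Kimi K2.6 and GLM-5 share an input price but differ on output.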

DeepSeek V4 — the price leader

DeepSeek released V4 (Preview) on April 24, 2026, the day before this article published. It ships as two open-weight MoE models under MIT: deepseek-v4-pro (1.6T total / 49B active) and deepseek-v4-flash (284B / 13B active). Both default to a 1,000,000-token context window with up to 384,000 tokens of output, which is a real step up from V3.2’s 128K.

Thinking mode in V4 is a request parameter, not a separate model ID. You hit the OpenAI-compatible POST /chat/completions endpoint and toggle reasoning via reasoning_effort plus an extra_body flag — the API returns reasoning_content alongside the final content. Legacy IDs deepseek-chat and deepseek-reasoner still work but route to V4-Flash and retire on 2026-07-24 at 15:59 UTC.
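If you still have the legacy IDs in config, a small migration guard can pin them to the explicit V4 ID ahead of the cutoff. This is a minimal sketch: the mapping and retirement time come from the paragraph above, while `resolve_model_id` and `legacy_ids_retired` are hypothetical helper names, not part of any DeepSeek or OpenAI SDK.

```python
from datetime import datetime, timezone
from typing import Optional

# Legacy IDs currently route to V4-Flash (per the paragraph above).
LEGACY_IDS = {
    "deepseek-chat": "deepseek-v4-flash",
    "deepseek-reasoner": "deepseek-v4-flash",
}
# Stated retirement time for the legacy IDs: 2026-07-24 15:59 UTC.
LEGACY_RETIREMENT = datetime(2026, 7, 24, 15, 59, tzinfo=timezone.utc)


def resolve_model_id(model: str) -> str:
    """Rewrite a legacy ID to its V4 replacement; pass other IDs through unchanged."""
    return LEGACY_IDS.get(model, model)


def legacy_ids_retired(now: Optional[datetime] = None) -> bool:
    """True once the stated retirement time has been reached."""
    now = now or datetime.now(timezone.utc)
    return now >= LEGACY_RETIREMENT


print(resolve_model_id("deepseek-reasoner"))
print(resolve_model_id("deepseek-v4-pro"))
```

Resolving IDs in one place means the cutoff becomes a config change rather than a production incident.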

Pricing is what makes V4 hard to beat for chat workloads: V4-Flash lists $0.0028 cache hit / $0.14 cache miss / $0.28 output per 1M tokens. V4-Pro lists $0.0145 / $1.74 / $3.48 (currently 75% off through 2026-05-31, making the effective rates $0.003625 / $0.435 / $0.87). For the standard chatbot/agent use case, V4-Flash undercuts every other lab in this article on output rates. For deeper coverage, see our DeepSeek V4-Flash and DeepSeek V4-Pro pages, or the broader DeepSeek models hub.

```python
from openai import OpenAI

# DeepSeek exposes an OpenAI-compatible endpoint, so the stock client works.
client = OpenAI(base_url="https://api.deepseek.com", api_key="...")

resp = client.chat.completions.create(
    model="deepseek-v4-flash",
    messages=[{"role": "user", "content": "Summarise this RFC."}],
)
print(resp.choices[0].message.content)
```

The API is stateless: you resend the conversation history with every request. The web chat and mobile app maintain session history; the API does not.
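Because the API is stateless, the client has to carry the transcript itself. Here is a minimal sketch of that bookkeeping with no network call. The `Conversation` class and its methods are hypothetical names, and the message dictionaries assume the OpenAI-style `role`/`content` shape used in the request above.

```python
from dataclasses import dataclass, field


@dataclass
class Conversation:
    """Client-side transcript; the full list is resent on every request."""
    system_prompt: str
    messages: list = field(default_factory=list)

    def add_user(self, text: str) -> None:
        self.messages.append({"role": "user", "content": text})

    def add_assistant(self, text: str) -> None:
        self.messages.append({"role": "assistant", "content": text})

    def payload(self) -> list:
        """Messages to pass as `messages=` in the next chat completion call."""
        return [{"role": "system", "content": self.system_prompt}, *self.messages]


convo = Conversation(system_prompt="You are a concise technical assistant.")
convo.add_user("Summarise this RFC.")
convo.add_assistant("It defines a retry policy for idempotent requests.")
convo.add_user("Which status codes does it cover?")
# The second request carries every prior turn, not just the latest question.
print(len(convo.payload()))  # 4
```

Keeping the system prompt first and unchanged matches the cached-system-prompt assumption in the worked cost example later in this guide.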

Moonshot Kimi K2.6 — the agentic coding specialist

If your workload is autonomous coding agents that run for hours, Kimi K2.6 is the most credible Chinese option in April 2026. Moonshot open-sourced K2.6 on April 20, 2026, targeting long-running coding agents, front-end generation, and massively parallel agent swarms.

Architecture: 1 trillion total parameters with 32 billion active per forward pass, 384 experts with 8 activated per token, and a 256K-token context window. Benchmarks Moonshot reports against current frontier competitors:

- SWE-Bench Pro: 58.6, vs 57.7 for GPT-5.4 (xhigh), 53.4 for Claude Opus 4.6 (max effort), and 54.2 for Gemini 3.1 Pro (thinking high).

- SWE-Bench Verified: 80.2.

- Terminal-Bench 2.0 with the Terminus-2 agent framework: 66.7, vs 65.4 for GPT-5.4 and Claude Opus 4.6, and 68.5 for Gemini 3.1 Pro.

- Humanity’s Last Exam (HLE-Full) with tools: 54.0, leading every model in Moonshot’s comparison including GPT-5.4 (52.1), Claude Opus 4.6 (53.0), and Gemini 3.1 Pro (51.4).

Pricing is the catch: at $0.60 input / $4.00 output per 1M tokens, Kimi K2.6 is roughly 4× DeepSeek V4-Flash on input and 14× on output. You pay for the agentic capability. Weights are published on Hugging Face under a Modified MIT License, so self-hosting is on the table for teams with the GPUs.

Alibaba Qwen 3.6 — the broadest family

Alibaba’s Qwen lineup is the broadest of any Chinese lab — from 0.6B dense models to a proprietary frontier tier. The April 2026 line-up matters because Alibaba’s strategy has shifted: Alibaba released Qwen 3.6-Max-Preview on April 21, 2026, the most powerful model the company has shipped, topping six major coding benchmarks and posting gains in world knowledge and instruction following over Qwen 3.6-Plus.

The trade-off: it is a proprietary, hosted model with no open weights, though its API is compatible with the OpenAI and Anthropic specifications. This also marks a shift in Alibaba’s business model, since the company had been known for releasing its most powerful models as open source by default. The lower-end Qwen 3.6 models, including Qwen3.6-35B-A3B under the Apache 2.0 license, remain open.

If permissive licensing matters to you, Qwen’s Apache 2.0 footprint is the most commercially flexible of the Chinese options. If you want the Max tier’s benchmark numbers, you’re back on a hosted API.

Zhipu GLM-5 / GLM-5.1 — reasoning and knowledge

Zhipu (now branding the platform as Z.ai) released GLM-5 in February 2026 and GLM-5.1 on March 27, 2026. The training story is geopolitically interesting: GLM-5 and 5.1 were trained entirely on Huawei Ascend hardware, 100,000 Ascend 910B chips, with no Nvidia GPUs.

Architecturally, GLM-5 has 745B total parameters, about twice as many as its predecessor GLM-4.7. It has 78 hidden layers and 256 experts, 8 of them activated per token (about 44B active parameters), and supports a maximum context window of 202K tokens.

Where GLM-5 wins is reasoning and factual reliability. On Zhipu’s own evaluation, GLM-5 scores 92.7% on AIME 2026, 86.0% on GPQA-Diamond, and 50.4 on Humanity’s Last Exam with tools, surpassing Opus 4.5’s 43.4 and GPT-5.2’s 45.5. Verify those numbers against Artificial Analysis or the model card before quoting them in production decisions.

MiniMax M2.5 — the cheap agent runner

MiniMax’s M2.5 sits between DeepSeek and Kimi on price and leans hard into coding. MiniMax M2.5 Standard costs $0.30 per million input tokens and $1.20 per million output tokens. The Lightning version costs $0.30 input and $2.40 output per million tokens, with double the speed.

On benchmarks, MiniMax M2.5 hits 80.2% on SWE-bench Verified, essentially matching the best closed models. Keep that in context: it ties Kimi K2.6’s 80.2 on the same benchmark, so the deciding factor between the two is the cost gap ($1.20 vs $4.00 per 1M output tokens). M2.5 is text-only at the API surface, so if your pipeline needs vision, look at GLM-5 or Qwen instead.

Worth flagging: Alibaba shut down the free tier of Qwen Code just days after MiniMax rewrote its open-source license to block commercial use without written authorization — both moves signal a shift toward monetized, proprietary offerings. Read the licence on the version you actually download.

A worked cost example: 1M agent calls on V4-Flash

To make the price differences concrete, here’s the standard workload — 1,000,000 API calls with a 2,000-token cached system prompt, a 200-token user message (uncached miss), and a 300-token response — costed against deepseek-v4-flash:

```
Cached input  : 2,000,000,000 tokens × $0.0028/M = $5.60
Uncached input:   200,000,000 tokens × $0.14/M   = $28.00
Output        :   300,000,000 tokens × $0.28/M   = $84.00
                                                   -------
Total                                              $117.60
```

The same workload on deepseek-v4-pro lands at $1,421.00 at list rates ($0.0145 / $1.74 / $3.48), roughly 12× the V4-Flash spend, or $355.25 at the promotional rates ($0.003625 / $0.435 / $0.87, 75% off through 2026-05-31), roughly 3×. On Kimi K2.6 (no cache pricing here, so I treat all 2,200 input tokens as uncached at $0.60), input comes to $1,320 and the 300 output tokens at $4.00 add $1,200, for ~$2,520 across 1M calls. Cache discipline and tier choice matter more than benchmark deltas for most production budgets. See our DeepSeek pricing calculator to reproduce this against your own traffic shape.
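The arithmetic is easy to reproduce in a few lines of Python. The rates are the ones quoted in this guide; the `Rates` type and `workload_cost` function are hypothetical names for illustration. The promotional V4-Pro rates are derived as 25% of list, and Kimi K2.6 is modelled with no cache discount, which is an assumption.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rates:
    """USD per 1M tokens."""
    cache_hit: float
    cache_miss: float
    output: float


def workload_cost(rates: Rates, calls: int, cached: int, uncached: int, output: int) -> float:
    """Total USD for `calls` requests with the given per-call token counts."""
    per_call = cached * rates.cache_hit + uncached * rates.cache_miss + output * rates.output
    return calls * per_call / 1_000_000


V4_FLASH = Rates(0.0028, 0.14, 0.28)
V4_PRO_LIST = Rates(0.0145, 1.74, 3.48)
V4_PRO_PROMO = Rates(*(r * 0.25 for r in (0.0145, 1.74, 3.48)))  # 75% off list
KIMI_K26 = Rates(0.60, 0.60, 4.00)  # assumption: all input billed at $0.60

for name, rates in [("V4-Flash", V4_FLASH), ("V4-Pro list", V4_PRO_LIST),
                    ("V4-Pro promo", V4_PRO_PROMO), ("Kimi K2.6", KIMI_K26)]:
    total = workload_cost(rates, calls=1_000_000, cached=2_000, uncached=200, output=300)
    print(f"{name:<13} ${total:,.2f}")
```

Under these inputs the V4-Pro total is $1,421.00 at list rates and $355.25 at the promotional rates. Swap in your own per-call token counts to see how much of the bill the cached prefix actually saves.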

Picking the right model

- Cheapest production chat: DeepSeek V4-Flash. Output at $0.28/M is hard to match.

- Best long-running coding agent: Kimi K2.6. Strong SWE-Bench Pro and HLE-with-tools numbers, designed for multi-hour runs.

- Strongest reasoning + factual reliability: GLM-5/5.1, especially for research and technical documentation.

- Most permissive open licence: Qwen3.6-35B-A3B (Apache 2.0).

- Best frontier-tier benchmarks against Western models: DeepSeek V4-Pro on SWE-Bench Verified or Qwen3.6-Max-Preview on coding/agent benchmarks (proprietary, hosted).

- Cheapest agentic coding API: MiniMax M2.5 Standard at $0.30/$1.20.

Caveats every reader should keep in mind

Three honest warnings before you commit:

- Data residency. All five labs run their hosted services primarily out of Chinese data centres, so expect higher latency from North America or Europe. Conversations are processed under Chinese law. Read your security review carefully if you handle regulated data.

- Regulatory status changes. Italy’s Garante restricted the DeepSeek app in January 2025; several US states limit Chinese model use on government devices. Check current status for your jurisdiction before procurement.

- Licences vary by release. “Open-weight” is not “MIT”. Kimi K2.6’s weights are under a Modified MIT License, Qwen 3.6’s flagship is hosted-only, and DeepSeek’s V4 weights are MIT — but older models like Coder-V2 split MIT code from a separate DeepSeek Model License on weights. Read the repo before you redistribute.

For deeper one-on-one comparisons, see DeepSeek vs Qwen, DeepSeek vs Kimi and DeepSeek vs GLM. The full DeepSeek alternatives hub also covers non-Chinese options like Llama and Mistral.

DeepSeek AI Guide is an independent resource and is not affiliated with DeepSeek or its parent company. Model IDs, pricing and API behaviour change; check the official DeepSeek documentation and pricing page before committing to a production decision.

What are the best Chinese AI models in 2026?

The strongest options as of April 2026 are DeepSeek V4 (V4-Flash for cost, V4-Pro for the frontier tier), Moonshot’s Kimi K2.6 for agentic coding, Alibaba’s Qwen 3.6 family (open and proprietary tiers), Zhipu’s GLM-5/5.1 for reasoning, and MiniMax M2.5 for cheap coding agents. Pick by workload, not brand — see our AI comparison hub for head-to-head detail.

Which Chinese AI model is the cheapest to use?

DeepSeek V4-Flash is the cheapest of the major hosted APIs as of April 2026, listing $0.0028 cache-hit / $0.14 cache-miss input and $0.28 output per 1M tokens. MiniMax M2.5 Standard at $0.30/$1.20 is the next cheapest. Compare against current rates on the DeepSeek API pricing page before estimating spend.

Are Chinese AI models open source?

Many are, but licences vary. DeepSeek V4-Pro and V4-Flash ship under MIT for both code and weights. Kimi K2.6 uses a Modified MIT licence. Qwen3.6-35B-A3B is Apache 2.0, but Qwen3.6-Max-Preview is proprietary. GLM-5 weights are MIT on Hugging Face. Always read the specific repo — see our guide on whether DeepSeek is open source.

How does DeepSeek V4 compare to Kimi K2.6 for coding?

Kimi K2.6 currently leads on agentic benchmarks, with 58.6 on SWE-Bench Pro and 80.2 on SWE-Bench Verified, and is designed for multi-hour autonomous runs with up to 300 sub-agents. DeepSeek V4-Pro posted 80.6% on SWE-Bench Verified at launch; its output rate is $3.48 per 1M tokens at list ($0.87 promotional) against Kimi’s $4.00, while V4-Flash at $0.28 is roughly 14× cheaper than Kimi on output. For deeper analysis see our DeepSeek for coding writeup.

Can I use Chinese AI models safely for business data?

It depends on your data classification and jurisdiction. Hosted APIs from these labs run primarily from Chinese data centres, with conversations subject to local law. Regulated data (health, legal, government) generally should not transit those endpoints; consider self-hosting open weights on infrastructure you control. Our DeepSeek privacy guide walks through the trade-offs.

Sources & Methodology

Primary sources

- Official: DeepSeek API documentation. Endpoints, request/response shape, model IDs. Last checked: April 30, 2026.

- Pricing: DeepSeek API pricing page. Per-1M-token rates, billing units, cache-hit pricing. Last checked: April 30, 2026.

- Model card: DeepSeek on Hugging Face. Weights, license, usage notes per model. Last checked: April 30, 2026.

- Pricing: Anthropic Claude pricing. Claude API rates for cross-vendor comparisons. Last checked: April 30, 2026.

- Pricing: OpenAI API pricing. OpenAI API rates for cross-vendor comparisons. Last checked: April 30, 2026.

- Pricing: Google Gemini API pricing. Gemini API rates for cross-vendor comparisons. Last checked: April 30, 2026.

- Official: Alibaba Cloud Model Studio model docs. Qwen / Alibaba Cloud model architecture and pricing. Last checked: April 30, 2026.

Technical references

- Technical report: DeepSeek-V3 technical report (arXiv:2412.19437). V3 architecture and training methodology. Last checked: April 30, 2026.

- Benchmark: SWE-Bench Verified leaderboard. Software-engineering benchmark scores. Last checked: April 30, 2026.

Context sources

- Official: Moonshot Kimi K2.6 benchmark report. SWE-Bench Pro/Verified, Terminal-Bench, HLE-with-tools claims. Last checked: April 30, 2026.

- Official: Zhipu GLM-5 / GLM-5.1 evaluation report. AIME 2026 / GPQA-Diamond / HLE numbers; trained on Ascend. Last checked: April 30, 2026.

- Benchmark: Artificial Analysis cross-lab benchmark referee. Independent verification source for vendor numbers. Last checked: April 30, 2026.

Methodology

Pricing was normalised per 1 million input and output tokens against each vendor's official pricing page on the review date. Benchmark scores were treated as directional indicators, not guarantees of real-world performance. Practical comparisons also weighed coding, reasoning, summarisation, and developer-workflow scenarios.

Data confidence

High for official pricing and feature presence; medium for cross-vendor benchmark comparisons; low for subjective workflow verdicts.

Editorial note

Pricing and model line-ups change frequently in this market. The verdicts here are calibrated for the date shown above and should be re-checked before final purchasing decisions.