How to Use DeepSeek on Mac: Web, App, Local and API

If you own a MacBook Air, a Mac mini, or a Mac Studio and want to use DeepSeek without juggling a Linux box, you have four working paths: the web chat in Safari, the official iOS app running on Apple Silicon, a locally hosted open-weight model through Ollama or LM Studio, and the developer API. Running DeepSeek on Mac in 2026 is easier than it was a year ago: V4 launched as an open-weight family on April 24, 2026, and tooling on Apple Silicon has matured around it. This guide walks through each option, including realistic hardware expectations for local inference, sample API code, current pricing, and the trade-offs nobody mentions until you hit them.

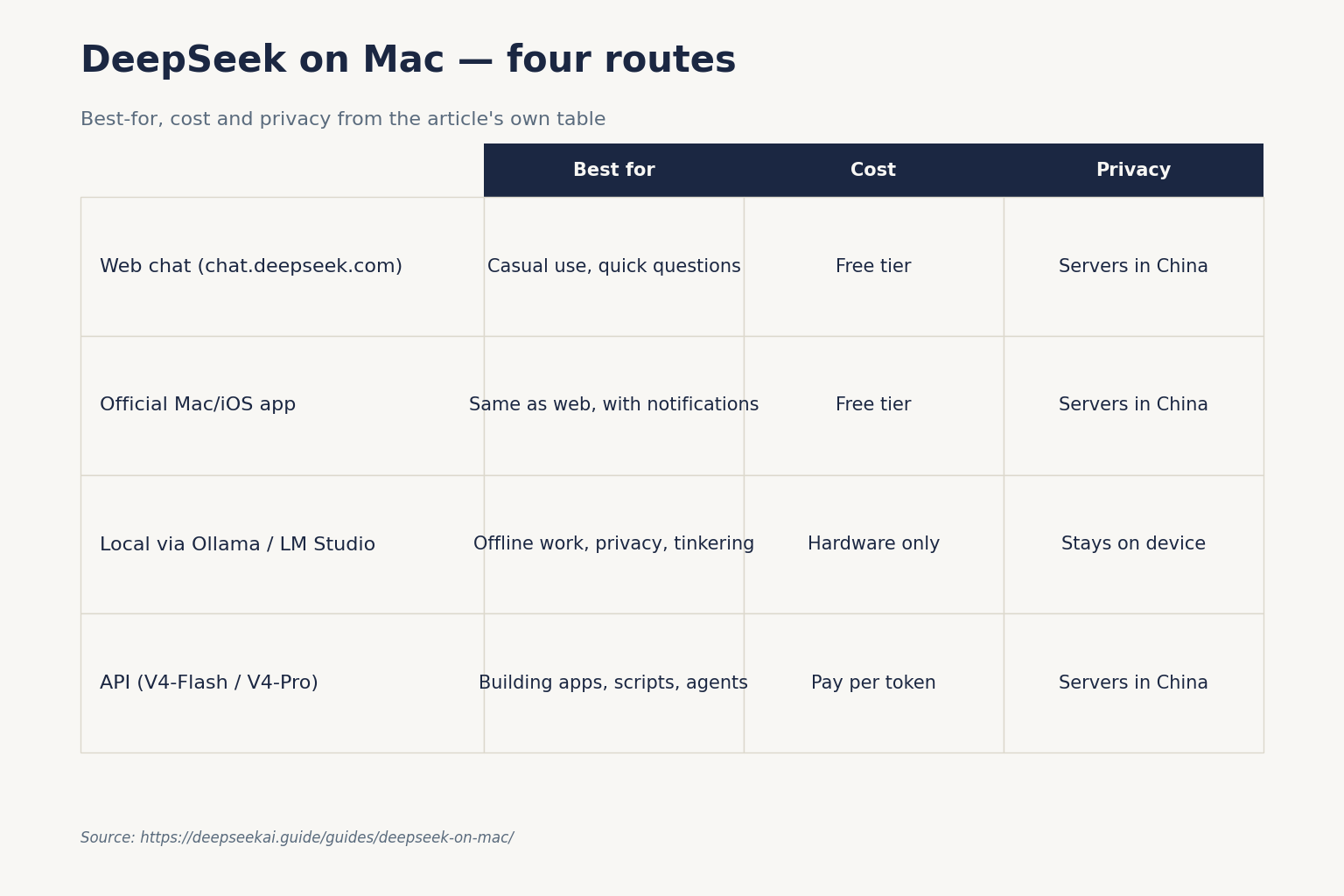

The four ways to run DeepSeek on a Mac

Before you install anything, pick the right access path. Each one optimises for a different goal.

| Method | Best for | Cost | Privacy | Setup time |
|---|---|---|---|---|
| Web chat (chat.deepseek.com) | Casual use, quick questions | Free tier | Servers in China | 2 minutes |
| Official Mac/iOS app | Same as web, with notifications | Free tier | Servers in China | 5 minutes |
| Local via Ollama / LM Studio | Offline work, privacy, tinkering | Hardware only | Stays on device | 20–60 minutes |
| API (V4-Flash / V4-Pro) | Building apps, scripts, agents | Pay per token | Servers in China | 10 minutes |

The right choice depends on three questions: do you need offline access, do you handle sensitive data, and do you want to write code against it. If you answered no to all three, the web or app is the easiest start.

Option 1: DeepSeek web chat in Safari, Chrome or Arc

The simplest route is chat.deepseek.com in any modern browser on macOS. Sign in with email, Google or Apple ID. The web chat now defaults to DeepSeek V4 with a DeepThink toggle that switches between non-thinking and thinking mode. There is no install, no Rosetta concern, no Apple Silicon vs Intel question — it runs wherever Safari runs.

Things to know about the web surface:

- Conversation history is preserved server-side per account, unlike the API.

- File uploads (PDFs, images) are supported on the web; large attachments may be summarised before processing.

- The DeepThink toggle adds latency but improves multi-step reasoning quality.

- For an account walkthrough, see the sign up for DeepSeek guide.

If you mostly chat and occasionally paste code, the web is enough. Move to a heavier option only when you have a reason to.

Option 2: The official DeepSeek app on Apple Silicon

DeepSeek publishes an official iOS app that runs natively on M-series Macs through Apple’s “Designed for iPad” compatibility layer. Open the App Store on macOS, search “DeepSeek – AI Assistant”, and confirm the publisher is DeepSeek before installing, because counterfeits have appeared. The guide to verifying the official DeepSeek app covers the spoofing risk in detail.

The app shares your account and chat history with the web. It adds push notifications and a slightly better mobile-style UI, but it does not expose anything the web doesn’t. Intel Macs cannot run the iOS app — they are limited to the web. For an iOS-specific walkthrough that mostly applies to Apple Silicon Macs, see DeepSeek on iPhone.

Option 3: Run DeepSeek locally on Mac with Ollama or LM Studio

Local inference is where Apple Silicon earns its keep. The unified memory architecture on M-series chips means a 24 GB MacBook Pro can hold model weights that would need a discrete GPU on a PC. The catch: V4-Pro (1.6T total) and V4-Flash (284B) are too large for any consumer Mac. Local users run the older R1 distilled models or the original DeepSeek Coder family.

Recommended local models by Mac

| Mac configuration | Recommended model | Quantisation | Approx. RAM use |
|---|---|---|---|
| M1/M2/M3 8 GB | R1-Distill-Qwen-1.5B | Q4_K_M | ~2 GB |
| M-series 16 GB | R1-Distill-Qwen-7B | Q4_K_M | ~5 GB |
| M-series 24–32 GB | R1-Distill-Llama-8B or R1-Distill-Qwen-14B | Q4_K_M / Q5 | ~9–12 GB |
| M-Pro/M-Max 64 GB | R1-Distill-Qwen-32B | Q4_K_M | ~22 GB |
| M-Ultra / Studio 128 GB+ | R1-Distill-Llama-70B | Q4_K_M | ~45 GB |

For a sizing check before you download 40 GB of weights, run the numbers through the DeepSeek hardware calculator.
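If you want a rough offline estimate, the RAM figures above follow from simple arithmetic: parameter count times bits per weight, plus room for the KV cache and runtime. The sketch below reproduces that reasoning under loudly stated assumptions: roughly 4.8 bits per weight for Q4_K_M, a 20% allowance for cache and runtime, and only about 65% of unified memory treated as comfortably available to the model. All three constants are illustrative rules of thumb rather than measurements, the parameter counts are approximate, and the function names are hypothetical.

```python
# Rough sizing sketch for quantised local models on Apple Silicon.
# ASSUMPTIONS (illustrative rules of thumb, not measurements):
#   - Q4_K_M averages about 4.8 bits per weight
#   - KV cache and runtime add about 20% on top of the weights
#   - only ~65% of unified memory is comfortably usable by the model
BITS_PER_WEIGHT_Q4_K_M = 4.8
OVERHEAD_FACTOR = 1.2
USABLE_FRACTION = 0.65


def estimate_ram_gb(params_billion: float,
                    bits_per_weight: float = BITS_PER_WEIGHT_Q4_K_M,
                    overhead_factor: float = OVERHEAD_FACTOR) -> float:
    """Approximate footprint in GB: quantised weights plus cache/runtime overhead."""
    # 1e9 params * bits / 8 bits-per-byte / 1e9 bytes-per-GB simplifies to this:
    weights_gb = params_billion * bits_per_weight / 8
    return weights_gb * overhead_factor


def fits(params_billion: float, unified_memory_gb: float,
         usable_fraction: float = USABLE_FRACTION) -> bool:
    """True if the estimated footprint fits in the usable share of unified memory."""
    return estimate_ram_gb(params_billion) <= unified_memory_gb * usable_fraction


if __name__ == "__main__":
    # Approximate parameter counts (billions) for some R1 distills.
    distills = {"Qwen-1.5B": 1.8, "Qwen-7B": 7.6, "Qwen-14B": 14.8,
                "Qwen-32B": 32.8, "Llama-70B": 70.6}
    for ram in (8, 16, 24, 64, 128):
        ok = [name for name, size in distills.items() if fits(size, ram)]
        print(f"{ram:>3} GB Mac: {', '.join(ok)}")
```

With these constants, the largest model that fits at each memory size matches the table's recommendation. Adjust the constants if your own Mac behaves differently, for example with longer contexts, which grow the KV cache.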

Ollama install on macOS

Ollama is the path of least resistance. Install it once, then pull models on demand. In Terminal:

```shell
brew install ollama
ollama serve &
ollama pull deepseek-r1:7b
ollama run deepseek-r1:7b
```

That gives you a local REPL. Models are stored in ~/.ollama/models. Ollama exposes a local OpenAI-compatible API on http://localhost:11434/v1, so any tool that speaks OpenAI’s wire format will work against it. The full walkthrough, including model variants and GPU offload settings, is in the running DeepSeek on Ollama tutorial.
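Because the local server speaks the OpenAI wire format, you do not need an SDK to try it. The minimal sketch below uses only Python's standard library to post a chat request to http://localhost:11434/v1/chat/completions. It assumes `ollama serve` is running and deepseek-r1:7b has been pulled; the helper names are hypothetical.

```python
import json
import urllib.request

# Ollama's OpenAI-compatible endpoint on its default port.
OLLAMA_BASE_URL = "http://localhost:11434/v1"


def build_chat_request(prompt: str, model: str = "deepseek-r1:7b",
                       base_url: str = OLLAMA_BASE_URL) -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style chat-completions POST request."""
    body = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "stream": False,  # one JSON response instead of server-sent events
    }
    return urllib.request.Request(
        f"{base_url}/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask_local(prompt: str, model: str = "deepseek-r1:7b") -> str:
    """Send the request to a running `ollama serve` and return the reply text."""
    request = build_chat_request(prompt, model)
    # Generous timeout: the first request may include loading weights into memory.
    with urllib.request.urlopen(request, timeout=600) as response:
        data = json.load(response)
    return data["choices"][0]["message"]["content"]
```

With the server running, `print(ask_local("Explain unified memory in two sentences."))` should print the model's reply. Because the request shape is the standard OpenAI one, the same code can target any OpenAI-compatible server by changing `base_url`.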

LM Studio for a graphical option

LM Studio is a native macOS app with a model browser, chat UI, and a built-in local server. It is friendlier than Ollama if you prefer not to live in Terminal, and it surfaces quantisation options clearly. Same underlying GGUF weights, same local-only privacy posture. For a broader local setup that covers both, see the install DeepSeek locally tutorial.

Realistic expectations: an R1-Distill-7B model on an M2 with 16 GB delivers around 25–40 tokens per second in my testing. A 32B model on an M2 Max 64 GB drops to roughly 8–12 tokens per second. Both are usable; neither matches the cloud V4 models on quality. Local distill models are good for privacy-first workflows and offline coding assistance, not frontier reasoning.
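To check throughput numbers like these on your own Mac, Ollama's native /api/generate endpoint reports eval_count (generated tokens) and eval_duration (nanoseconds) in its non-streaming response, so decode speed is a single division. The sketch below assumes a local `ollama serve` on the default port and the deepseek-r1:7b tag; the function names and default prompt are illustrative.

```python
import json
import urllib.request


def tokens_per_second(stats: dict) -> float:
    """Decode speed from Ollama's eval_count and eval_duration (nanoseconds)."""
    return stats["eval_count"] / (stats["eval_duration"] / 1e9)


def benchmark(model: str = "deepseek-r1:7b",
              prompt: str = "Explain unified memory on Apple Silicon.",
              host: str = "http://localhost:11434") -> float:
    """Run one non-streaming generation and return generated tokens per second."""
    body = json.dumps({"model": model, "prompt": prompt, "stream": False}).encode("utf-8")
    request = urllib.request.Request(f"{host}/api/generate", data=body,
                                     headers={"Content-Type": "application/json"})
    # Generous timeout: the first call may include loading weights into memory.
    with urllib.request.urlopen(request, timeout=600) as response:
        return tokens_per_second(json.load(response))
```

With the server running, `print(f"{benchmark():.1f} tokens/s")` prints the decode rate. Because eval_duration covers only generation, model loading (reported separately as load_duration) does not distort the figure.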

Option 4: The DeepSeek V4 API from your Mac

If you are building anything — a script, a CLI tool, a Raycast extension, a custom Obsidian plugin — the API is the right surface. DeepSeek V4 launched on April 24, 2026 as a family of two open-weight MoE models: deepseek-v4-pro (1.6T total, 49B active) for frontier-tier work, and deepseek-v4-flash (284B total, 13B active) for cost-efficient workloads. Both run under the MIT license. Both default to a 1,000,000-token context window with output up to 384,000 tokens.

Chat requests hit POST /chat/completions, the OpenAI-compatible endpoint at https://api.deepseek.com. DeepSeek also ships an Anthropic-compatible surface on the same host (under https://api.deepseek.com/anthropic), so you can swap in the Anthropic SDK if your codebase already uses it.

Quickstart on macOS

Install the OpenAI Python SDK in a virtualenv, then point it at DeepSeek:

```shell
python3 -m venv ~/.venvs/deepseek
source ~/.venvs/deepseek/bin/activate
pip install openai
export DEEPSEEK_API_KEY="sk-..."
```

A minimal Python script:

```python
import os

from openai import OpenAI

# Point the OpenAI SDK at DeepSeek's OpenAI-compatible endpoint.
client = OpenAI(
    base_url="https://api.deepseek.com",
    api_key=os.environ["DEEPSEEK_API_KEY"],
)

resp = client.chat.completions.create(
    model="deepseek-v4-flash",
    messages=[{"role": "user", "content": "Summarise the M4 Max in one paragraph."}],
    temperature=1.3,
    max_tokens=400,
)

print(resp.choices[0].message.content)
```

Two things to remember. First, the API is stateless: DeepSeek does not store turns server-side, so your client must resend the full messages array with each request. Second, thinking mode is a parameter on either V4 model, not a separate ID. To enable it, add reasoning_effort="high" with extra_body={"thinking": {"type": "enabled"}}; the response then returns reasoning_content alongside the final content. For maximum reasoning depth, use reasoning_effort="max" and ensure max_tokens is high enough.
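Because the API is stateless, the client owns the history. A minimal sketch of that bookkeeping: a hypothetical `Conversation` class appends each user and assistant turn and sends the whole list every time, and a hypothetical `openai_completer` factory wires it to an OpenAI-SDK client like the one above. The thinking-mode arguments are copied from the description in this guide; verify them against the current API documentation before relying on them.

```python
from typing import Callable, Dict, List, Optional

Message = Dict[str, str]
Completer = Callable[[List[Message]], str]  # full history in, reply text out


def thinking_kwargs(effort: str = "high") -> dict:
    """Extra create() arguments that switch on thinking mode (as described above)."""
    return {"reasoning_effort": effort,
            "extra_body": {"thinking": {"type": "enabled"}}}


class Conversation:
    """Client-side chat history for a stateless chat-completions API."""

    def __init__(self, complete: Completer, system_prompt: Optional[str] = None):
        self.complete = complete
        self.messages: List[Message] = []
        if system_prompt:
            self.messages.append({"role": "system", "content": system_prompt})

    def ask(self, user_text: str) -> str:
        self.messages.append({"role": "user", "content": user_text})
        # Send a copy of the entire history: the server remembers nothing.
        reply = self.complete(list(self.messages))
        # Store only the final answer, not any reasoning_content.
        self.messages.append({"role": "assistant", "content": reply})
        return reply


def openai_completer(client, model: str = "deepseek-v4-flash",
                     thinking: bool = False, max_tokens: int = 2000) -> Completer:
    """Wrap an OpenAI-SDK client (pointed at DeepSeek) as a Completer."""
    extra = thinking_kwargs() if thinking else {}

    def complete(messages: List[Message]) -> str:
        resp = client.chat.completions.create(
            model=model, messages=messages, max_tokens=max_tokens, **extra)
        return resp.choices[0].message.content

    return complete
```

Usage with the `client` from the quickstart: `chat = Conversation(openai_completer(client, thinking=True), "Answer briefly.")`, then call `chat.ask(...)` for each turn. Injecting the completer as a plain function keeps the history logic testable without network access.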

Legacy IDs and the migration window

If you have older Mac scripts using deepseek-chat or deepseek-reasoner, they still work — both currently route to deepseek-v4-flash (non-thinking and thinking respectively). They will be retired on 2026-07-24 at 15:59 UTC. Migration is a one-line model= swap; base_url does not change. The full DeepSeek API documentation covers the parameter set in detail.
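If you maintain several old scripts, centralising the swap keeps the behaviour of each legacy ID explicit. A small sketch, assuming the routing described above (deepseek-chat as V4-Flash non-thinking, deepseek-reasoner as V4-Flash thinking) and the thinking parameters this guide describes; treating deepseek-reasoner as reasoning_effort="high" is an assumption, and the mapping and helper name are hypothetical.

```python
from typing import Any, Dict, Tuple

# Legacy ID -> (V4 model ID, thinking enabled?), per the routing described above.
LEGACY_MODELS: Dict[str, Tuple[str, bool]] = {
    "deepseek-chat": ("deepseek-v4-flash", False),
    "deepseek-reasoner": ("deepseek-v4-flash", True),
}


def migrated_kwargs(model_id: str) -> Dict[str, Any]:
    """Return create() kwargs that reproduce a legacy model ID on V4.

    Unknown IDs (including current V4 IDs) pass through with no thinking flags.
    """
    new_id, thinking = LEGACY_MODELS.get(model_id, (model_id, False))
    kwargs: Dict[str, Any] = {"model": new_id}
    if thinking:
        # ASSUMPTION: "high" effort approximates the old reasoner behaviour.
        kwargs["reasoning_effort"] = "high"
        kwargs["extra_body"] = {"thinking": {"type": "enabled"}}
    return kwargs
```

A call site then becomes `client.chat.completions.create(messages=messages, **migrated_kwargs("deepseek-reasoner"))`, and removing the mapping after the retirement date is a single edit.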

Useful parameters and features

- temperature: DeepSeek’s official guidance is 0.0 for code/maths, 1.0 for data analysis, 1.3 for general conversation and translation, and 1.5 for creative writing.

- max_tokens: cap on output length, up to 384K on V4. Always set this when using JSON mode.

- response_format={"type": "json_object"}: JSON mode is designed to return valid JSON but does not guarantee it. Include the word “json” and a small example schema in your prompt, and handle occasional empty content.

- Tool calling, streaming (stream=True), context caching, FIM completion (Beta; requires thinking: {"type": "disabled"}), and Chat Prefix Completion (Beta) are all supported.
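The temperature guidance and JSON-mode advice above translate into a few lines of Python. In this sketch the task keys, the example schema in the system prompt, and the helper names are illustrative assumptions. The returned dict is meant to be passed as `client.chat.completions.create(**kwargs)` with the client from the quickstart, and the parser returns None on empty or invalid content so the caller can retry.

```python
import json
from typing import Any, Dict, Optional

# DeepSeek's temperature guidance as quoted above; the task keys are illustrative.
TEMPERATURE_BY_TASK: Dict[str, float] = {
    "code": 0.0,
    "maths": 0.0,
    "data_analysis": 1.0,
    "conversation": 1.3,
    "translation": 1.3,
    "creative_writing": 1.5,
}

# JSON mode works best when the prompt says "json" and shows an example shape.
# This example schema is hypothetical.
JSON_SYSTEM_PROMPT = (
    "Reply with json only, shaped like this example: "
    '{"chip": "M2 Max", "summary": "one sentence"}'
)


def json_mode_kwargs(user_prompt: str, task: str = "data_analysis",
                     model: str = "deepseek-v4-flash",
                     max_tokens: int = 800) -> Dict[str, Any]:
    """Arguments for client.chat.completions.create() with JSON mode enabled."""
    return {
        "model": model,
        "messages": [
            {"role": "system", "content": JSON_SYSTEM_PROMPT},
            {"role": "user", "content": user_prompt},
        ],
        "response_format": {"type": "json_object"},
        "temperature": TEMPERATURE_BY_TASK[task],
        "max_tokens": max_tokens,  # too low a cap can cut the JSON off mid-object
    }


def parse_json_reply(content: Optional[str]) -> Optional[Any]:
    """Parse a JSON-mode reply; return None for empty or invalid content."""
    if content is None or not content.strip():
        return None
    try:
        return json.loads(content)
    except json.JSONDecodeError:
        return None
```

Typical use: `resp = client.chat.completions.create(**json_mode_kwargs("Describe the M2 Max."))`, then `data = parse_json_reply(resp.choices[0].message.content)`, retrying or raising `max_tokens` when `data` is None.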

Pricing on a Mac developer’s workload

API costs depend entirely on which tier you choose. As of April 2026 (always confirm on the DeepSeek API pricing page before committing):

| Tier | Input cache hit | Input cache miss | Output |
|---|---|---|---|
| deepseek-v4-flash | $0.0028 / 1M | $0.14 / 1M | $0.28 / 1M |
| deepseek-v4-pro | $0.0145 / 1M (list; promo $0.003625 through 2026-05-31) | $0.435 promo / $1.74 list per 1M | $0.87 promo / $3.48 list per 1M |

Worked example for a Mac-side coding assistant making 1,000,000 calls with a 2,000-token cached system prompt, a 200-token uncached user message, and a 300-token response, running on deepseek-v4-flash:

```text
Cached input  : 2,000,000,000 tokens × $0.0028/M = $5.60
Uncached input:   200,000,000 tokens × $0.14/M   = $28.00
Output        :   300,000,000 tokens × $0.28/M   = $84.00
                                                   -------
Total                                              $117.60
```

The same workload on deepseek-v4-pro totals $1,421.00 at list prices (about $355.25 at the promo rates), roughly 12× the V4-Flash cost and justified only when frontier-tier benchmark performance matters. Off-peak discounts ended on 2025-09-05 and have not returned, so don’t budget for them.
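The arithmetic above is easy to rerun for your own traffic. The sketch below hard-codes the April 2026 rates from the pricing table (treat them as a snapshot to refresh, not live data) and uses `Decimal` to avoid floating-point rounding in money sums; the tier labels and function name are illustrative.

```python
from decimal import Decimal
from typing import Dict

MILLION = Decimal(1_000_000)

# USD per 1M tokens, copied from the pricing table above (April 2026 snapshot).
PRICES: Dict[str, Dict[str, Decimal]] = {
    "v4-flash":     {"hit": Decimal("0.0028"),   "miss": Decimal("0.14"),  "out": Decimal("0.28")},
    "v4-pro list":  {"hit": Decimal("0.0145"),   "miss": Decimal("1.74"),  "out": Decimal("3.48")},
    "v4-pro promo": {"hit": Decimal("0.003625"), "miss": Decimal("0.435"), "out": Decimal("0.87")},
}


def workload_cost(calls: int, cached_in: int, uncached_in: int, output: int,
                  rates: Dict[str, Decimal]) -> Decimal:
    """Total USD for `calls` requests with the given per-call token counts."""
    parts = ((cached_in, rates["hit"]),
             (uncached_in, rates["miss"]),
             (output, rates["out"]))
    return sum((Decimal(calls * tokens) / MILLION * rate for tokens, rate in parts),
               Decimal(0))


if __name__ == "__main__":
    # The worked example: 1M calls, 2,000 cached + 200 uncached input, 300 output.
    for tier, rates in PRICES.items():
        cost = workload_cost(1_000_000, 2_000, 200, 300, rates)
        print(f"{tier:<13} ${cost:,.2f}")
```

With the worked example's inputs it returns $117.60 for V4-Flash, $1,421.00 for V4-Pro at list prices, and $355.25 at the promo rates.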

Common Mac-specific issues and fixes

| Symptom | Likely cause | Fix |
|---|---|---|
| Ollama stalls on first prompt | Model still loading into memory | Wait 10–30 seconds; check Activity Monitor for memory pressure |
| “Out of memory” on M1 8 GB | Model too large for unified RAM | Drop to a smaller distill (1.5B) or use the API instead |
| App Store app won’t install | Intel Mac, no Apple Silicon | Use the web chat in Safari |
| API request returns 401 | Missing or wrong key | Re-export DEEPSEEK_API_KEY; rotate in the console |
| Empty JSON response | Model truncation or prompt without “json” | Raise max_tokens; add example schema to prompt |

For a broader catalogue, see the DeepSeek troubleshooting guide.
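Two rows of the table can be checked from a script before you start debugging model behaviour. The sketch below, with hypothetical helper names, flags a missing or badly quoted DEEPSEEK_API_KEY (a variable exported in one Terminal window is not visible in another, or in apps launched from the Dock) and tests whether a local Ollama server answers on its default port via the /api/tags endpoint, which lists pulled models. A clean result does not rule out a revoked or mistyped key; that still only shows up as a 401 from the API.

```python
import os
import urllib.request
from typing import Mapping, Optional


def api_key_problem(env: Mapping[str, str] = os.environ) -> Optional[str]:
    """Describe an obvious local problem with DEEPSEEK_API_KEY, or return None."""
    key = env.get("DEEPSEEK_API_KEY")
    if not key:
        return "DEEPSEEK_API_KEY is not set in this environment"
    if key != key.strip() or key[0] in "\"'":
        return "DEEPSEEK_API_KEY has stray whitespace or quotes"
    return None


def ollama_running(host: str = "http://localhost:11434", timeout: float = 2.0) -> bool:
    """True if a local Ollama server answers on /api/tags."""
    try:
        with urllib.request.urlopen(f"{host}/api/tags", timeout=timeout) as resp:
            return resp.status == 200
    except OSError:  # connection refused, timeout, or HTTP error
        return False


if __name__ == "__main__":
    print("API key:", api_key_problem() or "looks set")
    print("Ollama: ", "running" if ollama_running() else "not reachable")
```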

Privacy and where your data goes

The web, app and API all send conversations to DeepSeek’s infrastructure in China. That is fine for casual use; it is not appropriate for client-confidential legal work, medical records, or anything covered by GDPR data-residency clauses unless your employer has explicitly approved it. The local Ollama or LM Studio path keeps every byte on your Mac — that’s the privacy trade-off worth the extra setup time. The DeepSeek privacy guide goes into the contractual details.

Which path should you pick?

- Casual user, just curious: the web chat. Move on with your day.

- Mac power user wanting offline access: Ollama with R1-Distill-Qwen-7B on 16 GB or larger.

- Developer building tools: V4-Flash via the API; upgrade specific calls to V4-Pro when you have a benchmark reason.

- Privacy-sensitive work: local only; do not use the cloud surfaces.

For a more general beginner pathway, the DeepSeek beginners guide is a softer entry point. If you want to compare DeepSeek’s Mac story against ChatGPT’s, the DeepSeek vs ChatGPT comparison covers ecosystem differences honestly. And for a curated index of every guide on this site, the DeepSeek beginner guides hub is the catalog.

DeepSeek AI Guide is an independent resource and is not affiliated with DeepSeek or its parent company. Model IDs, pricing and API behaviour change; check the official DeepSeek documentation and pricing page before committing to a production decision.

Is there a native DeepSeek app for macOS?

DeepSeek does not publish a dedicated AppKit Mac app. On Apple Silicon Macs (M1 and later) you can install the official iOS DeepSeek app from the Mac App Store via “Designed for iPad” compatibility — confirm the publisher before installing, since spoofs exist. Intel Macs do not support this and should use the web chat. The DeepSeek app guide covers verification.

How do I run DeepSeek locally on a MacBook?

Install Ollama with brew install ollama, pull a distill model with ollama pull deepseek-r1:7b, then run it. A 16 GB Apple Silicon Mac handles 7B models comfortably; 32B needs 64 GB. The full V4 models are too large for any Mac. Step-by-step setup lives in the install DeepSeek locally tutorial.

Can I use the DeepSeek API from a Mac terminal?

Yes. Install the OpenAI Python SDK with pip, set DEEPSEEK_API_KEY as an environment variable, and point base_url at https://api.deepseek.com. Chat requests hit POST /chat/completions. The API is stateless, so resend message history each call. The DeepSeek API getting started tutorial walks through the full setup.

What hardware do I need to run DeepSeek on Mac?

For the web or app, any modern Mac works. For local inference, Apple Silicon with 16 GB unified memory handles 7B-class distill models; 32 GB handles 14B; 64 GB handles 32B comfortably. Intel Macs can run small distill models on CPU but performance is poor. Check sizing in the DeepSeek hardware calculator before downloading weights.

Does DeepSeek on Mac cost anything?

The web chat and official app have a free tier with no documented daily cap. Local Ollama and LM Studio are free aside from electricity. The API charges per token: V4-Flash is $0.14 input miss / $0.28 output per million tokens; V4-Pro is currently $0.435 / $0.87 during the 75% promo through 2026-05-31 (list $1.74 / $3.48). Always confirm on the live DeepSeek API pricing page before budgeting.

Sources & Methodology

Primary sources

- Official: DeepSeek API documentation. Endpoints, request/response shape, model IDs. Last checked: April 30, 2026

- Pricing: DeepSeek API pricing page. Per-1M-token rates, billing units, cache-hit pricing. Last checked: April 30, 2026

Technical references

- Technical report: DeepSeek-R1 paper (arXiv:2501.12948). R1 reasoning approach, distillation results. Last checked: April 30, 2026

Methodology

Information in this article was checked against the official DeepSeek documentation, the article's cited primary sources, and the deepseekai.guide pricing dataset on the date shown above. Where third-party numbers are quoted, the originating source is linked inline so the reader can verify and re-check.

Data confidence

High for facts cited inline with primary-source links; medium for context narrative.

Editorial note

AI tooling moves quickly. If you spot an outdated number or a broken link, the contact link in the footer is the fastest way to flag it for the editorial team.