Using DeepSeek for Ecommerce: Workflows That Actually Ship

You have 40,000 SKUs with thin descriptions, a support inbox that grows faster than your team, and a marketing calendar that never lets up. Picking DeepSeek for ecommerce work is mostly a cost-and-control decision: the models are open-weight, the API is OpenAI-compatible, and the per-token rates are low enough that bulk catalog jobs become a line item rather than a project. I run DeepSeek V4 in production across two stores — a 12,000-SKU apparel catalog and a smaller homewares brand — and this article is the workflow set I actually use. You will see the prompts, the cost math at V4-Flash and V4-Pro rates, the integration code, and where I still reach for a different tool.

The concrete problem ecommerce teams face

Most online stores share a predictable stack of text problems. Product copy is inconsistent across categories because it was written by 14 different freelancers over five years. Customer reviews pile up faster than anyone can read them, so trends in sizing complaints or shipping issues surface only when they hit the support queue. Translation backlogs block international expansion. Support tickets repeat the same 30 questions endlessly. Category pages read like keyword soup, and the SEO team wants meta descriptions for 8,000 URLs by Friday.

Each of those is a text-in, text-out task at scale — exactly the shape of work where a cheap, capable LLM beats hiring more people or buying narrow SaaS tools. The reason DeepSeek shows up in this conversation in 2026 is straightforward economics. DeepSeek V4, released April 24, 2026, ships in two open-weight Mixture-of-Experts tiers under the MIT license: deepseek-v4-pro (1.6T total / 49B active parameters) and deepseek-v4-flash (284B / 13B active). Both models default to a 1,000,000-token context window with output up to 384,000 tokens, which matters when you want to pass a 5,000-product CSV in one request. For full background on the family, see our overview of DeepSeek V4.

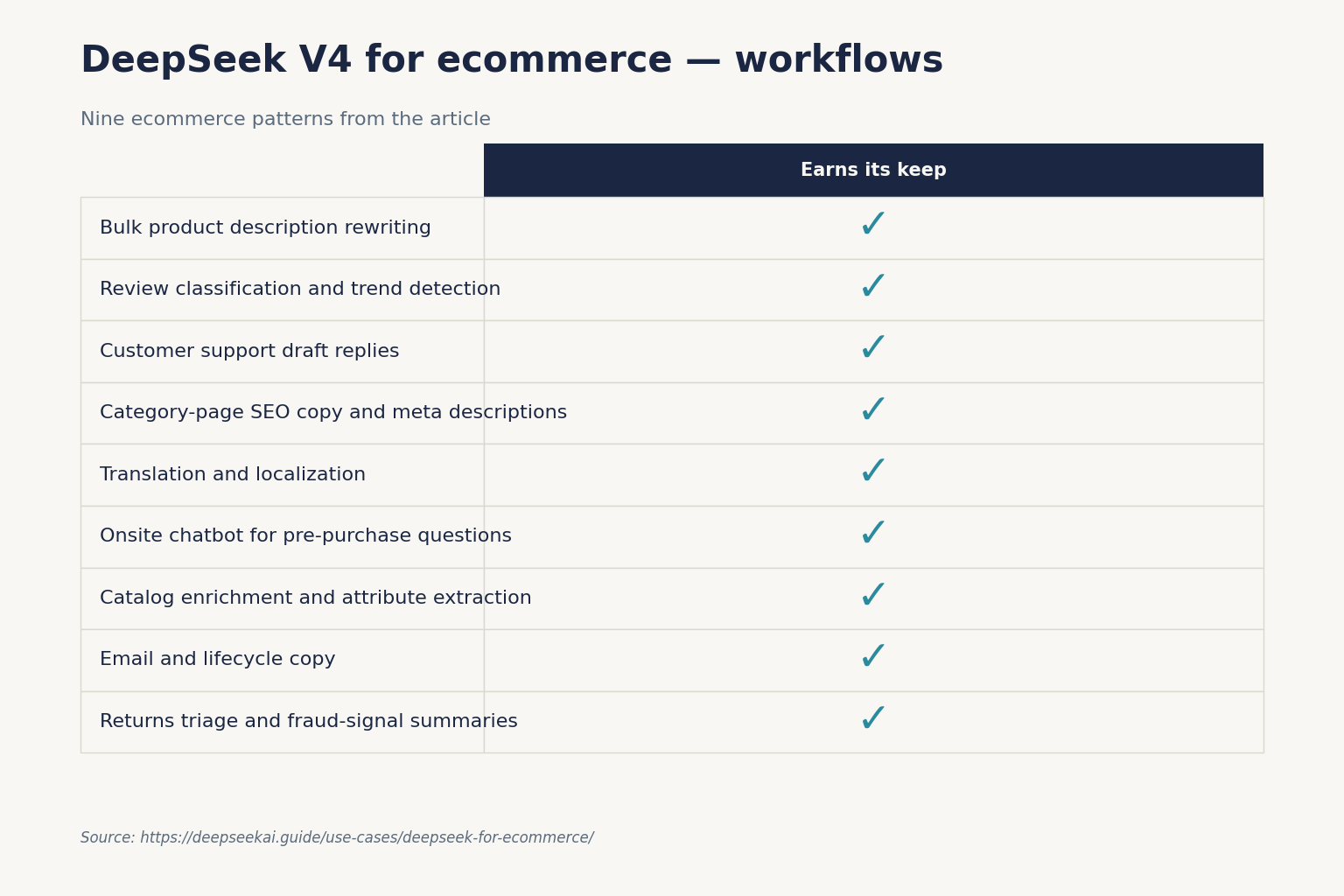

How DeepSeek helps: nine workflows with prompts

I will walk through the workflows in order of return on effort. The first three pay for themselves in week one.

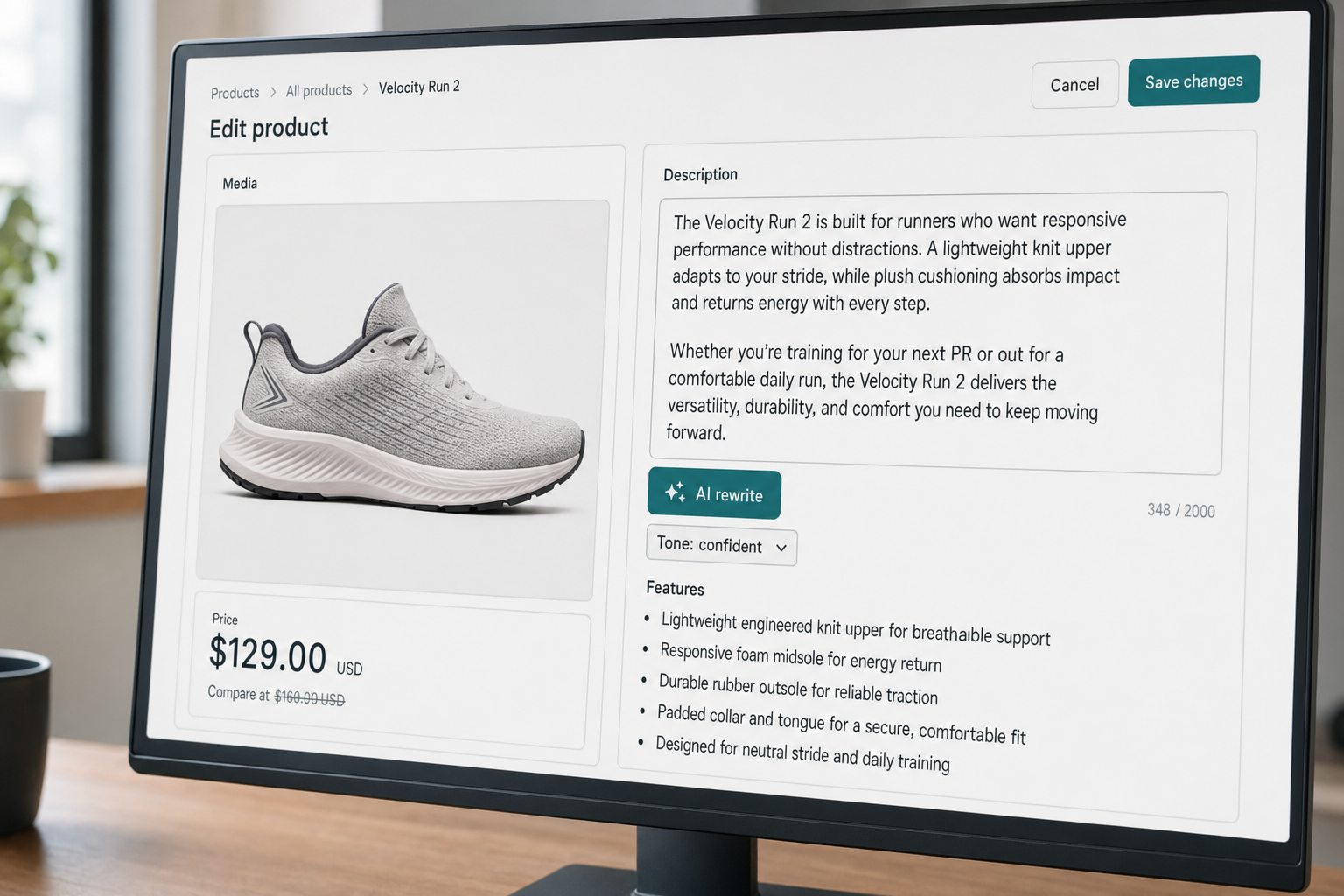

1. Bulk product description rewriting

Take a CSV of SKU, title, attributes, and a thin existing description. Send it to deepseek-v4-flash in non-thinking mode with a system prompt that fixes tone, length, and brand vocabulary. A working prompt skeleton:

System: You write product descriptions for [Brand]. Tone: warm, plainspoken, never superlative. Length: 60-90 words. Always include the primary material, fit/size note, and one care instruction. Output JSON with keys: sku, description.

User: Rewrite descriptions for these SKUs. Return one JSON object per SKU.

[CSV rows]

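At the V4-Flash rates used in the cost section below ($0.0028 cache hit / $0.14 cache miss / $0.28 output per 1M tokens), rewriting 10,000 descriptions with a 400-token system prompt (cached), 250 tokens of input per SKU, and a 120-token response comes to roughly $0.70 in token charges: about $0.01 of cached input, $0.35 of uncached input, and $0.34 of output. Even with retries and prompt iteration, that is well under the price of a single freelance day.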
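A catalog of this size rarely goes out as one request in practice, so the CSV is usually split into batches first. The sketch below is one way to do that, assuming the file has a header row; the function name `chunk_csv` and the default of 50 rows per chunk are illustrative choices, not part of any DeepSeek tooling.

```python
import csv
import io
from typing import Iterator, List


def _to_csv(header: List[str], rows: List[List[str]]) -> str:
    """Serialize a header plus rows back into CSV text."""
    out = io.StringIO()
    writer = csv.writer(out, lineterminator="\n")
    writer.writerow(header)
    writer.writerows(rows)
    return out.getvalue()


def chunk_csv(csv_text: str, rows_per_chunk: int = 50) -> Iterator[str]:
    """Yield CSV strings of at most rows_per_chunk data rows.

    The header is repeated in every chunk so each request is
    self-describing when it reaches the model.
    """
    reader = csv.reader(io.StringIO(csv_text))
    header = next(reader, None)
    if header is None:  # empty input: nothing to yield
        return
    batch: List[List[str]] = []
    for row in reader:
        if not row:  # skip blank lines
            continue
        batch.append(row)
        if len(batch) == rows_per_chunk:
            yield _to_csv(header, batch)
            batch = []
    if batch:
        yield _to_csv(header, batch)
```

Each yielded string can be used as the user message for one request. Smaller chunks make it easier to retry a single failed batch without redoing the whole catalog.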

2. Review classification and trend detection

Pipe new reviews through a classifier prompt with fixed labels (sizing, shipping, quality, fit, expectation, other) plus a sentiment score and a one-line summary. Use JSON mode so the output drops straight into a database. Aggregate weekly. The first time you do this, you will find two or three issues your support team has been quietly absorbing.
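Before classifier output reaches the database, it helps to reject anything that falls outside the fixed label set. The following is a minimal sketch of that check plus the weekly aggregation; the JSON keys `label`, `sentiment`, and `summary`, and the sentiment range of -1 to 1, are assumptions made for this example rather than anything the API prescribes.

```python
import json
from collections import Counter
from typing import Iterable, Optional

# The fixed labels named in the workflow above.
ALLOWED_LABELS = {"sizing", "shipping", "quality", "fit", "expectation", "other"}


def parse_review_result(raw: str) -> Optional[dict]:
    """Return a validated record, or None if the reply is unusable."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError:
        return None
    if not isinstance(data, dict):
        return None
    label = data.get("label")
    sentiment = data.get("sentiment")
    summary = data.get("summary")
    if not isinstance(label, str) or label not in ALLOWED_LABELS:
        return None
    # bool is a subclass of int, so exclude it explicitly.
    if isinstance(sentiment, bool) or not isinstance(sentiment, (int, float)):
        return None
    if not -1 <= sentiment <= 1:  # assumed score range
        return None
    if not isinstance(summary, str):
        return None
    return {"label": label, "sentiment": sentiment, "summary": summary}


def weekly_counts(results: Iterable[dict]) -> Counter:
    """Count validated reviews per label for the weekly rollup."""
    return Counter(r["label"] for r in results)
```

Records that come back as `None` are worth logging rather than silently dropping, since a rising rejection count is often the first sign the prompt needs adjusting.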

3. Customer support draft replies

Wire DeepSeek into Zendesk or Front via webhook. For each new ticket, retrieve the three closest help-center articles (a basic embedding-based RAG setup — see our DeepSeek RAG tutorial), pass them as context, and ask V4-Flash to draft a reply. Agents edit and send. This is also where the DeepSeek for customer support playbook goes deeper on macros and escalation rules.
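The retrieval step can be sketched without committing to a particular vector store. The example below assumes you already have an embedding vector for the ticket and for each help-center article (the embedding provider is not specified here and is left to you), and ranks articles by cosine similarity. The names `top_k_articles` and `build_draft_messages` are hypothetical.

```python
import math
from typing import List, Sequence, Tuple


def cosine(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine similarity; returns 0.0 if either vector has zero length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def top_k_articles(
    ticket_vec: Sequence[float],
    articles: List[Tuple[str, Sequence[float]]],
    k: int = 3,
) -> List[str]:
    """Return the titles of the k articles closest to the ticket."""
    ranked = sorted(articles, key=lambda a: cosine(ticket_vec, a[1]), reverse=True)
    return [title for title, _ in ranked[:k]]


def build_draft_messages(ticket_text: str, article_texts: List[str]) -> List[dict]:
    """Assemble the chat messages: retrieved articles as context, ticket last."""
    context = "\n\n---\n\n".join(article_texts)
    return [
        {
            "role": "system",
            "content": "Draft a support reply using only the help-center "
            "articles provided. If they do not answer the question, say so.\n\n"
            + context,
        },
        {"role": "user", "content": ticket_text},
    ]
```

A linear scan like this is fine for a few hundred articles; a larger knowledge base would likely want a proper vector index instead.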

4. Category-page SEO copy and meta descriptions

Generate a 120-word intro paragraph and a 150-character meta description for each category, given the category name, top 10 products, and three target keywords. Constrain length with max_tokens and validate the meta description length in post-processing. The full pattern is in DeepSeek for SEO.
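Because max_tokens limits tokens rather than characters, the character check has to happen after generation. Here is one possible post-processing step, assuming a 150-character ceiling and a preference for cutting at a word boundary; `fit_meta_description` is an illustrative name.

```python
def fit_meta_description(text: str, limit: int = 150) -> str:
    """Collapse whitespace and trim to at most `limit` characters.

    Trimming happens at the last word boundary that fits, so the
    result never ends mid-word unless a single word exceeds the limit.
    """
    text = " ".join(text.split())
    if len(text) <= limit:
        return text
    # Look one character past the limit so a word ending exactly
    # at the limit is kept whole.
    window = text[: limit + 1]
    if " " in window:
        cut = window[: window.rfind(" ")]
    else:
        cut = text[:limit]
    return cut.rstrip(" ,;:-")
```

Descriptions that lose a lot of text to trimming are usually better regenerated with a tighter length instruction than shipped truncated.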

5. Translation and localization

Translate descriptions, size charts, and email flows into the languages your store actually serves. Set temperature=1.3 per DeepSeek’s guidance for translation tasks. For European Portuguese vs Brazilian Portuguese, or US vs UK English spelling and units, give the model an explicit instruction in the system prompt — it follows locale rules well when told plainly.

6. Onsite chatbot for pre-purchase questions

This is where many teams overspend. A pre-purchase chatbot does not need frontier reasoning. V4-Flash in non-thinking mode, grounded in your catalog and policies via RAG, handles “does this run small?” and “when will it ship to Ireland?” reliably. Save V4-Pro for harder cases.

7. Catalog enrichment and attribute extraction

Given a freeform description, extract structured attributes (material, color family, fit, occasion, season). JSON mode plus a tight schema turns this into a deterministic-feeling job. This is what fills the holes in your filtered navigation.
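A tight schema only constrains the shape of the output, so extracted values still need checking against what your navigation actually supports. The sketch below shows one way to split model output into accepted and rejected attributes; the attribute names and allowed values in `ALLOWED` are made-up examples and would come from your own taxonomy.

```python
from typing import Any, Dict, Tuple

# Hypothetical allow-list; replace with your store's real taxonomy.
ALLOWED: Dict[str, set] = {
    "material": {"cotton", "linen", "wool", "polyester"},
    "fit": {"slim", "regular", "relaxed"},
    "season": {"spring", "summer", "autumn", "winter", "all-season"},
}


def validate_attributes(extracted: Dict[str, Any]) -> Tuple[Dict[str, str], Dict[str, Any]]:
    """Split extracted attributes into (accepted, rejected).

    Accepted values are normalized to lowercase with whitespace trimmed.
    Unknown keys and values outside the allow-list are rejected with
    their original value so a human can review them.
    """
    accepted: Dict[str, str] = {}
    rejected: Dict[str, Any] = {}
    for key, value in extracted.items():
        allowed = ALLOWED.get(key)
        normalized = value.strip().lower() if isinstance(value, str) else None
        if allowed is not None and normalized in allowed:
            accepted[key] = normalized
        else:
            rejected[key] = value
    return accepted, rejected
```

Only the accepted dictionary should be written to the catalog; the rejected one is a useful review queue and a hint about gaps in the allow-list.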

8. Email and lifecycle copy

Welcome series, abandoned cart, win-back, post-purchase. Generate variants per segment, then A/B test. Pair this with the patterns in DeepSeek for marketing.

9. Returns triage and fraud-signal summaries

For each return request, summarize the customer’s reason, flag inconsistencies (claimed never received vs delivery confirmation), and suggest a resolution path. This is one of the few places I switch to deepseek-v4-pro with reasoning_effort="high" — the cost premium is worth it when the model needs to weigh contradictory evidence.
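To make the model switch concrete, here is a rough sketch of how the request for a triage call might be assembled. It uses the model ID, reasoning_effort value, and thinking setting described in this article; the function name `build_triage_request`, the prompt wording, and the evidence fields are assumptions for illustration.

```python
from typing import Dict


def build_triage_request(
    return_reason: str, delivery_status: str, order_notes: str = ""
) -> Dict[str, object]:
    """Build keyword arguments for client.chat.completions.create().

    Only the evidence fields vary per call; the instructions stay fixed
    so the model weighs the same questions for every return.
    """
    evidence = (
        f"Customer's stated reason: {return_reason}\n"
        f"Carrier/delivery record: {delivery_status}\n"
        f"Order notes: {order_notes or 'none'}"
    )
    return {
        "model": "deepseek-v4-pro",
        "messages": [
            {
                "role": "system",
                "content": "Summarize the return reason, list any "
                "inconsistencies between the customer's claim and the "
                "records, and suggest a resolution path.",
            },
            {"role": "user", "content": evidence},
        ],
        # Thinking-mode settings as described in the API notes of this article.
        "reasoning_effort": "high",
        "extra_body": {"thinking": {"type": "enabled"}},
    }
```

The result can be passed as `client.chat.completions.create(**build_triage_request(...))`. Keeping the request construction in a pure function makes it easy to unit-test prompts without spending tokens.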

The minimal API integration

Chat requests hit POST /chat/completions, the OpenAI-compatible endpoint. The API is stateless — you must resend the full conversation history on every request, in contrast to the web chat and mobile app, which persist session history for you. Here is a Python snippet using the OpenAI SDK:

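```python
from openai import OpenAI

# SYSTEM_PROMPT and csv_chunk are defined elsewhere: the system prompt
# shown earlier and one batch of CSV rows, respectively.

client = OpenAI(
    base_url="https://api.deepseek.com",
    api_key="YOUR_KEY",  # load from an environment variable in real code
)

resp = client.chat.completions.create(
    model="deepseek-v4-flash",
    messages=[
        {"role": "system", "content": SYSTEM_PROMPT},
        {"role": "user", "content": csv_chunk},
    ],
    temperature=0.0,  # deterministic-leaning output for data-style jobs
    max_tokens=8000,  # generous, so the JSON is not cut off mid-object
    response_format={"type": "json_object"},  # JSON mode
)
```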

Notes on the parameters that matter for ecommerce work:

- temperature: 0.0 for code/data extraction, 1.0 for review analysis, 1.3 for translation and conversational copy, 1.5 for creative writing.

- max_tokens: set generously when using JSON mode; truncated JSON is invalid JSON.

- reasoning_effort: omit for fast/cheap calls; set to "high" with extra_body={"thinking": {"type": "enabled"}} on V4 when you need the model to plan. Thinking mode returns reasoning_content alongside the final content.

- JSON mode is designed to return valid JSON, not guaranteed. Include the word “json” in your prompt with a small example schema, set a high enough max_tokens, and handle the rare empty-content response.

- Streaming via stream=true is what you want for the onsite chatbot. Tool calling handles add-to-cart, check-stock, and start-return functions.
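Since JSON mode can still return empty or truncated content, the calling code needs a defensive parse. Below is a minimal sketch of that handling with a bounded retry; `parse_json_reply` and `call_with_retry` are hypothetical helpers, and `call_model` stands in for whatever function wraps your actual API request and returns the message content.

```python
import json
from typing import Callable, Optional


def parse_json_reply(content: Optional[str]) -> Optional[dict]:
    """Return the parsed object, or None for empty/invalid/non-object replies."""
    if not content or not content.strip():
        return None
    try:
        data = json.loads(content)
    except json.JSONDecodeError:  # includes truncated JSON
        return None
    return data if isinstance(data, dict) else None


def call_with_retry(
    call_model: Callable[[], Optional[str]], attempts: int = 3
) -> Optional[dict]:
    """Call the model up to `attempts` times until a valid JSON object arrives."""
    for _ in range(attempts):
        parsed = parse_json_reply(call_model())
        if parsed is not None:
            return parsed
    return None  # caller decides whether to queue for manual review
```

Capping the retries matters for cost control: a prompt that fails three times in a row is more likely a prompt problem than bad luck.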

If you are migrating from the legacy IDs, deepseek-chat and deepseek-reasoner still work but currently route to deepseek-v4-flash and will be retired on 2026-07-24 at 15:59 UTC. Update the model field; base_url does not change. The DeepSeek OpenAI SDK compatibility page covers the swap. DeepSeek also exposes an Anthropic-compatible surface against the same base URL if your stack already uses that SDK.

What it actually costs at V4-Flash rates

Worked example for a mid-sized store running the bulk-description, classification, and chatbot workloads on deepseek-v4-flash. Assume one million calls per month with a 2,000-token cached system prompt, a 200-token user message, and a 300-token response:

| Bucket | Tokens (1M calls) | Rate (per 1M) | Subtotal |
|---|---|---|---|
| Input, cache hit | 2,000,000,000 | $0.0028 | $5.60 |
| Input, cache miss | 200,000,000 | $0.14 | $28.00 |
| Output | 300,000,000 | $0.28 | $84.00 |
| Total | | | $117.60 |

For comparison, the same workload on deepseek-v4-pro at list rates ($0.0145 cache hit / $1.74 cache miss / $3.48 output per 1M tokens) runs $29.00 + $348.00 + $1,044.00 = $1,421.00, roughly 12× the Flash cost. The promotional rates of $0.435 / $0.87 per 1M cache-miss input and output tokens (75% off through 2026-05-31) bring those two buckets down to $87.00 + $261.00 = $348.00, which is still about 3× the Flash total. Use Pro selectively. The pattern I follow: Flash by default, Pro only for returns triage and any agentic workflow that touches inventory or refunds. The DeepSeek API pricing page has the live rate card, and the DeepSeek pricing calculator handles custom workload math.
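The arithmetic behind these totals is simple enough to script for your own workload. The sketch below multiplies token volumes by the per-1M rates quoted in this section; the rates are copied from the article and may be out of date, and the function name `monthly_cost` is illustrative.

```python
from typing import Dict

# Per-1M-token rates as quoted in this article; check the live rate card.
FLASH = {"cache_hit": 0.0028, "cache_miss": 0.14, "output": 0.28}
PRO_LIST = {"cache_hit": 0.0145, "cache_miss": 1.74, "output": 3.48}


def monthly_cost(
    calls: int,
    cached_tokens: int,
    uncached_tokens: int,
    output_tokens: int,
    rates: Dict[str, float],
) -> Dict[str, float]:
    """Return the cost per bucket and the total, in USD, rounded to cents."""
    per_million = 1_000_000
    buckets = {
        "cache_hit": calls * cached_tokens / per_million * rates["cache_hit"],
        "cache_miss": calls * uncached_tokens / per_million * rates["cache_miss"],
        "output": calls * output_tokens / per_million * rates["output"],
    }
    buckets["total"] = sum(buckets.values())
    return {name: round(value, 2) for name, value in buckets.items()}


flash = monthly_cost(1_000_000, 2_000, 200, 300, FLASH)
pro = monthly_cost(1_000_000, 2_000, 200, 300, PRO_LIST)
print(flash["total"], pro["total"])
```

For the one-million-call workload above, this gives a Flash total of 117.6 and a Pro list-rate total of 1421.0, matching the figures in the text.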

Context caching kicks in automatically when DeepSeek detects a repeated prefix in messages. Structuring your system prompt as a stable prefix — brand voice, schema definitions, examples — and putting per-call variables (the SKU data, the customer message) at the end is the single biggest cost lever you have. See DeepSeek context caching for the implementation pattern.
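In code, the stable-prefix idea mostly comes down to message ordering. The following sketch keeps everything that never changes in one system message and puts the per-call data last; the three prefix strings are placeholders, and how much of a prefix must match for a cache hit is governed by DeepSeek's caching rules, which this example does not model.

```python
from typing import Dict, List

# Placeholder content: in practice these are your real voice rules,
# output schema, and few-shot examples.
BRAND_VOICE = "Tone: warm, plainspoken, never superlative."
SCHEMA = 'Output JSON with keys: "sku", "description".'
EXAMPLES = 'Example: {"sku": "A1", "description": "..."}'

# Built once and reused byte-for-byte on every request.
STABLE_PREFIX = "\n\n".join([BRAND_VOICE, SCHEMA, EXAMPLES])


def build_messages(per_call_payload: str) -> List[Dict[str, str]]:
    """Stable system prompt first, variable data last."""
    return [
        {"role": "system", "content": STABLE_PREFIX},
        {"role": "user", "content": per_call_payload},
    ]
```

The main thing to avoid is interpolating per-call values (a timestamp, a customer name) into the system prompt, since that changes the prefix on every request.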

Limitations to plan around

- Image understanding is not in V4 yet. If product photo analysis (alt-text generation, on-model vs flat-lay classification, defect detection from returns photos) is core to your workflow, you currently need a separate vision model. DeepSeek VL2 exists but is on a different release track.

- Real-time inventory and pricing decisions need tool calling done right. The model will confidently make up stock numbers if you do not ground every answer in a tool call against your actual systems.

- Data residency. API calls are processed on DeepSeek’s servers, which are subject to Chinese law. For EU stores handling personal data, that is a real GDPR conversation — review the position in DeepSeek privacy and decide based on your data classification.

- Hallucinated product attributes. JSON mode does not guarantee factual accuracy. Always validate extracted attributes against an allow-list before writing them to the catalog.

- Brand voice drift over long sessions. The 1M-token context is generous, but long multi-turn conversations still drift. Pin the voice rules in the system prompt and re-anchor in long sessions.
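For the inventory point in particular, grounding means the stock figure comes from your system and the model only relays it. Here is a small sketch of a tool definition in the OpenAI-compatible function-calling format, plus a dispatcher that executes the call. The `STOCK` dictionary is a stand-in for a real inventory lookup, and the tool name `check_stock` and its fields are assumptions for this example.

```python
import json
from typing import Dict

# Hypothetical stand-in for a real inventory system.
STOCK: Dict[str, int] = {"TEE-001": 14, "MUG-220": 0}

# Tool schema passed in the `tools` list of a chat completion request.
CHECK_STOCK_TOOL = {
    "type": "function",
    "function": {
        "name": "check_stock",
        "description": "Look up current units in stock for a SKU.",
        "parameters": {
            "type": "object",
            "properties": {"sku": {"type": "string"}},
            "required": ["sku"],
        },
    },
}


def check_stock(sku: str) -> dict:
    """Return stock from the source of truth, never from the model."""
    if sku not in STOCK:
        return {"sku": sku, "found": False}
    return {"sku": sku, "found": True, "units": STOCK[sku]}


def run_tool_call(name: str, arguments_json: str) -> str:
    """Execute a tool call and return the JSON string for the 'tool' message."""
    if name != "check_stock":
        return json.dumps({"error": f"unknown tool: {name}"})
    try:
        args = json.loads(arguments_json)
    except json.JSONDecodeError:
        return json.dumps({"error": "invalid arguments"})
    sku = args.get("sku") if isinstance(args, dict) else None
    if not isinstance(sku, str):
        return json.dumps({"error": "missing sku"})
    return json.dumps(check_stock(sku))
```

Returning an explicit `found: False` rather than a zero gives the model something truthful to say about unknown SKUs instead of implying the item is sold out.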

When to reach for something else

Honest comparison matters here, because no single model wins every ecommerce task.

| Task | My pick | Why |
|---|---|---|
| Bulk text generation, classification, translation | DeepSeek V4-Flash | Cost per token is hard to beat at this quality tier |
| Agentic workflows touching refunds, inventory | DeepSeek V4-Pro or Claude | Frontier-tier reasoning earns its keep when stakes are high |
| Image understanding (alt-text, defect detection) | Gemini or GPT-5 family | Mature multimodal pipelines today |
| Voice support agents | OpenAI Realtime API | Lowest-latency speech stack currently |
| On-prem deployment with no third-party data sharing | DeepSeek V4 open weights or Llama | MIT-licensed weights mean you can self-host |

For deeper head-to-heads, see DeepSeek vs ChatGPT and DeepSeek vs Claude.

Getting started for an ecommerce team

- Create an API key and load $20 of credit to run pilots without budget approval friction. Walkthrough: get a DeepSeek API key.

- Pick one workflow from the nine above. Bulk description rewriting is the easiest to measure.

- Build the prompt and run it on 100 SKUs in a notebook. Have a copywriter rate the output blind against the originals.

- Move to a batch script with caching and JSON output. Iterate the prompt until rejection rate is under 10 percent.

- Wire it into your PIM or Shopify metafields. Keep a human in the loop for the first 1,000 items.

- Add monitoring: track rejection rate, average tokens per call, and cost per accepted output.
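The three monitoring numbers in the last step can be computed from a simple per-call log. This is a minimal sketch assuming you record total tokens, cost, and the reviewer's accept/reject decision for each call; the `CallRecord` fields are hypothetical and would map to whatever your logging already captures.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass
class CallRecord:
    total_tokens: int  # prompt + completion tokens for the call
    cost_usd: float    # what the call cost
    accepted: bool     # did a reviewer (or validator) accept the output?


def summarize(records: List[CallRecord]) -> Dict[str, Optional[float]]:
    """Compute rejection rate, average tokens per call, and cost per accepted output."""
    n = len(records)
    if n == 0:
        return {
            "rejection_rate": 0.0,
            "avg_tokens_per_call": 0.0,
            "cost_per_accepted": None,
        }
    accepted = sum(1 for r in records if r.accepted)
    total_cost = sum(r.cost_usd for r in records)
    return {
        "rejection_rate": (n - accepted) / n,
        "avg_tokens_per_call": sum(r.total_tokens for r in records) / n,
        # Rejected calls still cost money, so divide total spend by accepted outputs.
        "cost_per_accepted": total_cost / accepted if accepted else None,
    }
```

Cost per accepted output is the number worth watching most closely, because it rises both when prices change and when prompt quality slips.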

The first workflow takes about a week to ship end-to-end. The second one takes two days, because most of the plumbing is now reusable. By workflow four you stop counting hours.

For a broader read on similar ecommerce-adjacent applications, browse the full set of DeepSeek use cases.

DeepSeek AI Guide is an independent resource and is not affiliated with DeepSeek or its parent company. Model IDs, pricing and API behaviour change; check the official DeepSeek documentation and pricing page before committing to a production decision.

How does DeepSeek for ecommerce compare to ChatGPT for product description writing?

For bulk product copy, the two produce comparable quality at the brief I tested (60–90 word descriptions, fixed tone). DeepSeek V4-Flash wins on cost — roughly 5–10× cheaper per million output tokens than mainstream alternatives at frontier-quality tier. ChatGPT wins on ecosystem (image inputs, plugins). For a side-by-side, see DeepSeek vs ChatGPT.

Can DeepSeek integrate with Shopify or WooCommerce directly?

There is no first-party Shopify app from DeepSeek. You connect via the API using webhooks, a middleware layer (n8n, Make, a custom Node service), or the metafield bulk import. Most teams I work with run a small Node or Python service that listens to Shopify webhooks and calls the DeepSeek API. Start with DeepSeek Node.js integration for the wiring.

Is DeepSeek safe to use for customer data in an online store?

API requests are processed on DeepSeek’s servers in China and may be retained according to their policies and applicable law. For most product-catalog text that is not a concern, but personal customer data (names, addresses, payment details) needs a privacy review and likely redaction before sending. The trade-offs are covered in DeepSeek privacy.

What does it cost to run DeepSeek for an ecommerce chatbot at scale?

For a chatbot handling one million conversations per month with a cached system prompt of 2,000 tokens, a 200-token user message, and a 300-token reply, V4-Flash costs about $117.60/month total ($5.60 cached input + $28 uncached input + $84 output). The same workload on V4-Pro is about $1,421 at list rates. The DeepSeek pricing calculator handles your specific numbers.

Why use DeepSeek V4-Flash instead of V4-Pro for ecommerce work?

V4-Flash is roughly 10× cheaper on output tokens and handles 90 percent of ecommerce text tasks — descriptions, classification, translation, support drafts — at quality indistinguishable from Pro in blind reviews I have run. Reserve V4-Pro for agentic workflows that touch refunds, inventory decisions, or multi-step planning. Background on both tiers is on the DeepSeek V4-Flash page.

Sources & Methodology

Primary sources

- Official: DeepSeek API documentation. Endpoints, request/response shape, model IDs. Last checked: April 30, 2026

- Pricing: DeepSeek API pricing page. Per-1M-token rates, billing units, cache-hit pricing. Last checked: April 30, 2026

Methodology

Information in this article was checked against the official DeepSeek documentation, the article's cited primary sources, and the deepseekai.guide pricing dataset on the date shown above. Where third-party numbers are quoted, the originating source is linked inline so the reader can verify and re-check.

Data confidence

High for facts cited inline with primary-source links; medium for context narrative.

Editorial note

AI tooling moves quickly. If you spot an outdated number or a broken link, the contact link in the footer is the fastest way to flag it for the editorial team.