Using DeepSeek for Healthcare: Workflows, Costs, and Cautions

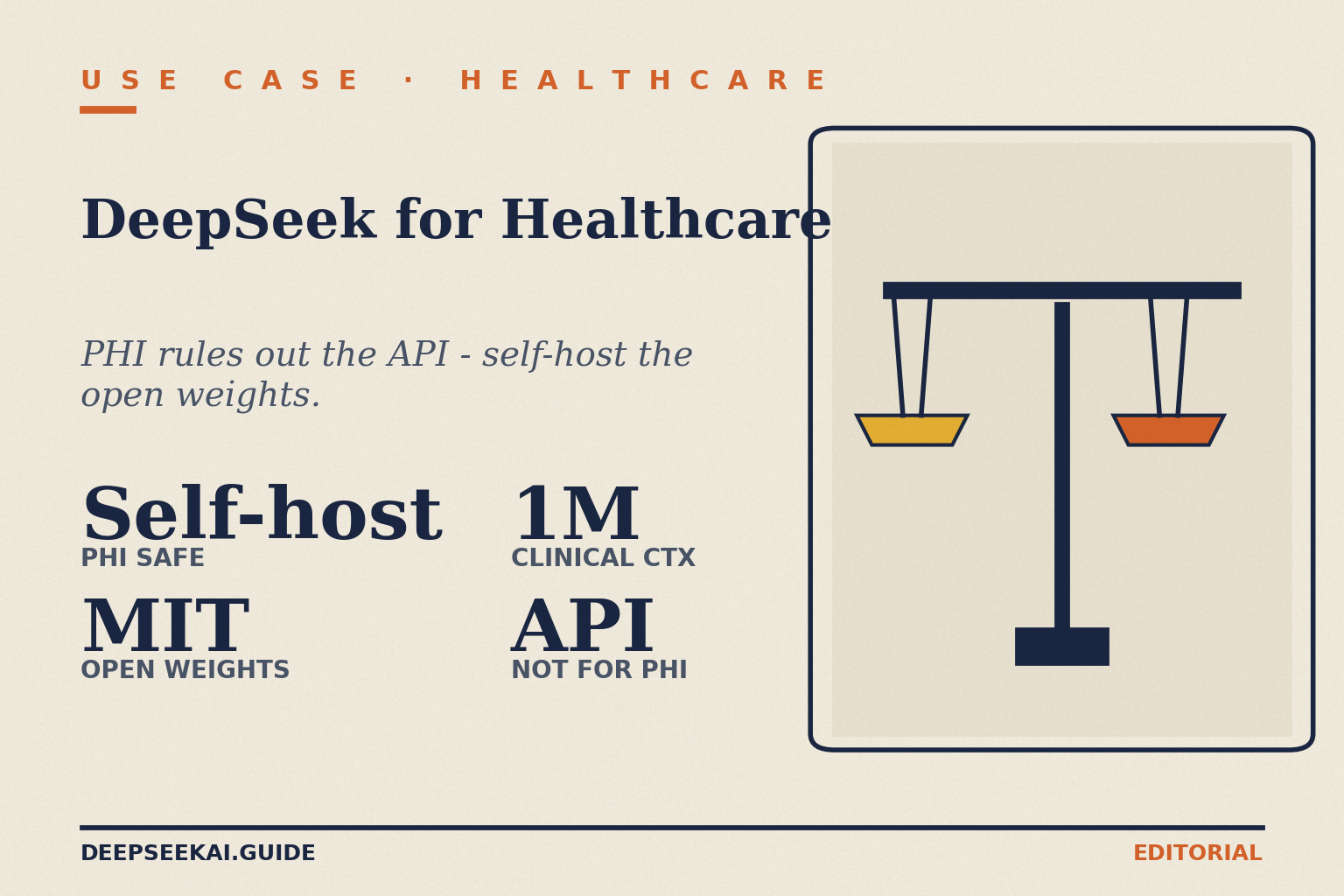

Can a hospital research team, a private clinic, or a digital health startup actually use DeepSeek for healthcare work without putting patient data — or its budget — at risk? The honest answer is: yes for some tasks, no for others, and the line runs through how you deploy it. DeepSeek’s web app and hosted API send conversations to servers in China, which is a non-starter for protected health information (PHI). The open weights, run locally, are a different story — and with V4 they fit a million-token clinical context onto reasonable hardware. This article maps the workflows where DeepSeek genuinely earns its place, the ones where it does not, and the prompts, costs, and guardrails that separate the two.

The concrete problem: AI in clinical work is mostly a privacy problem

Clinicians, researchers, and health-tech teams all face the same wall when adopting general-purpose chatbots: the data they want to process is regulated. In the United States that means HIPAA; in the UK and Ireland, UK GDPR and the EU GDPR; in Australia, the Privacy Act and APPs; in Canada, PIPEDA and provincial equivalents. None of these regimes care that the model is clever — they care where the bytes go.

That framing matters because the most common questions about DeepSeek for healthcare have different answers depending on which DeepSeek you mean:

- The hosted DeepSeek chat and API — convenient, cheap, but routes data to servers subject to Chinese law. Independent compliance reviewers have flagged this as incompatible with HIPAA-covered workflows on PHI.

- The open-weight models, run locally — the same architecture, but the data never leaves your infrastructure. This is what most published medical work, including the PMC editorial on DeepSeek in healthcare, points to as the realistic path for clinical use.

The current generation is DeepSeek V4, released April 24, 2026, in two open-weight Mixture-of-Experts tiers: deepseek-v4-pro (1.6T total / 49B active parameters) and deepseek-v4-flash (284B / 13B active). Both ship under the MIT license, both default to a 1,000,000-token context window, and both can output up to 384,000 tokens. Thinking mode is a request parameter on either tier, not a separate model.

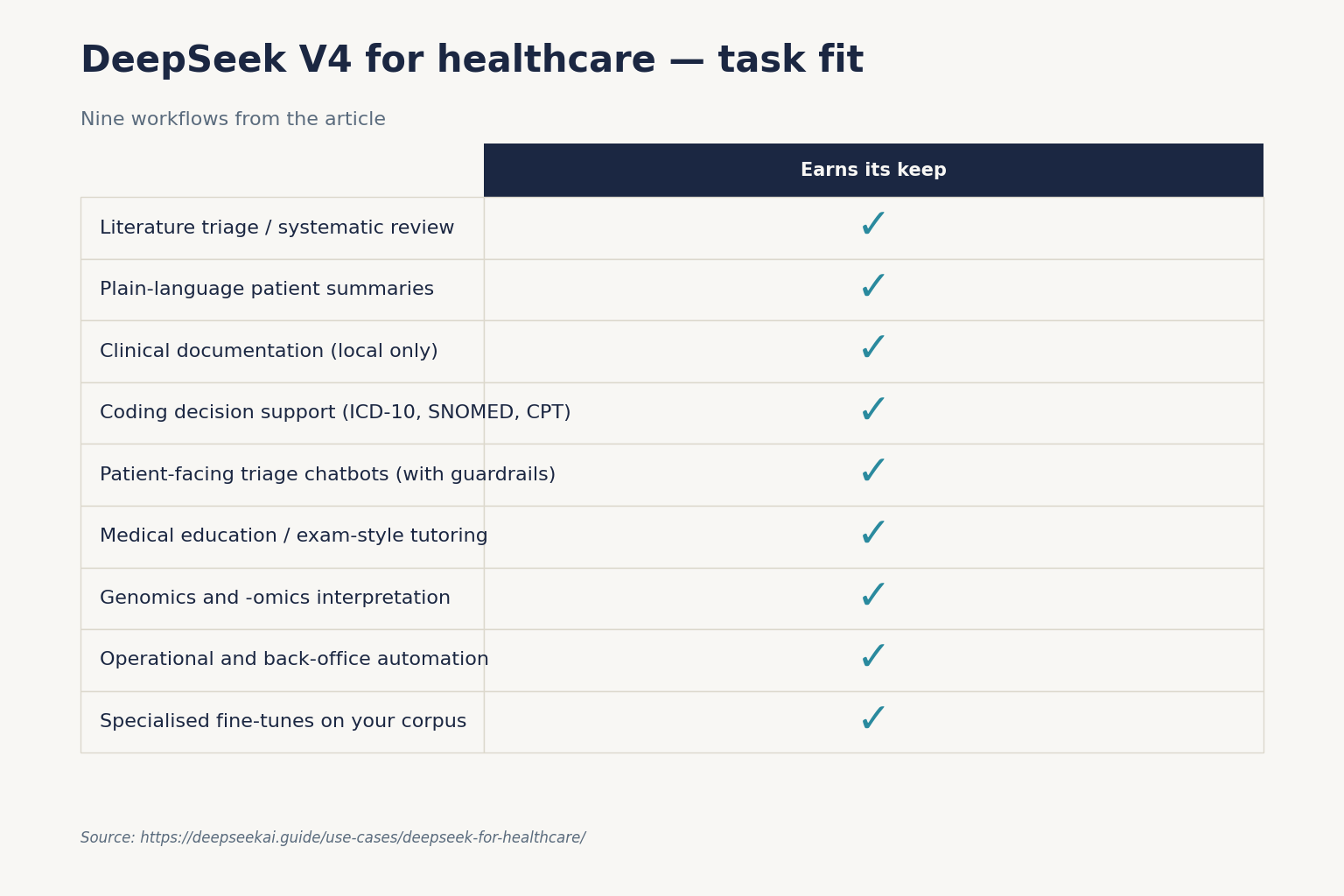

Where DeepSeek earns its place in healthcare

Below are nine specific workflows I have either tested or seen run reliably in clinical-adjacent settings. The split between “do this on the hosted API” and “do this only on local weights” is deliberate. Treat any patient-identifiable text as PHI by default.

1. Literature triage and systematic-review scaffolding

Million-token context lets you paste a stack of abstracts (or full open-access papers) and ask the model to extract PICO elements, risk-of-bias signals, or effect sizes into a structured table. This is non-PHI work, so the hosted API is fine.

Prompt template:

    You are a research assistant supporting a systematic review on [topic].
    For each article below, return JSON with: title, design, n, population,
    intervention, comparator, primary_outcome, effect_estimate, risk_of_bias_notes.
    If a field is missing, return null. Do not infer values.

    [paste 30-100 abstracts]

Pair this with DeepSeek API JSON mode for clean output. JSON mode is designed to return valid JSON but does not guarantee it: include the word “json” in your prompt, supply a small example schema, and set max_tokens high enough to avoid truncation.
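The JSON-mode advice above can be sketched as a request builder plus a defensive parser. This is a minimal sketch, not the official client pattern: it assumes JSON mode is switched on with the OpenAI-compatible `response_format={"type": "json_object"}` parameter, and the function names, the `articles` wrapper key, and the `max_tokens` value are illustrative choices of mine.

```python
import json

# Field names copied from the prompt template above.
FIELDS = [
    "title", "design", "n", "population", "intervention",
    "comparator", "primary_outcome", "effect_estimate", "risk_of_bias_notes",
]


def build_request(abstracts: str, model: str = "deepseek-v4-flash") -> dict:
    """Build keyword arguments for client.chat.completions.create()."""
    example = {field: None for field in FIELDS}  # small example schema
    system = (
        "You are a research assistant supporting a systematic review. "
        "Return a json object with one key, 'articles', holding a list of "
        "objects shaped like this example: " + json.dumps(example) + ". "
        "If a field is missing, return null. Do not infer values."
    )
    return {
        "model": model,
        "messages": [
            {"role": "system", "content": system},
            {"role": "user", "content": abstracts},
        ],
        "response_format": {"type": "json_object"},  # assumption: JSON-mode switch
        "max_tokens": 8000,  # assumption: sized generously to avoid truncation
        "temperature": 0.0,
    }


def parse_articles(raw: str) -> list[dict]:
    """Parse the reply defensively, since valid JSON is not guaranteed."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise ValueError("reply was not valid JSON; retry or raise max_tokens") from exc
    articles = data.get("articles") if isinstance(data, dict) else None
    if not isinstance(articles, list):
        raise ValueError("reply has no 'articles' list")
    # Keep only the expected keys; absent fields become None rather than guesses.
    return [{f: a.get(f) for f in FIELDS} for a in articles if isinstance(a, dict)]
```

With a real client, the call would be `client.chat.completions.create(**build_request(text))`, followed by `parse_articles(resp.choices[0].message.content)`. Raising on malformed output, rather than silently repairing it, keeps extraction errors visible to the reviewer.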

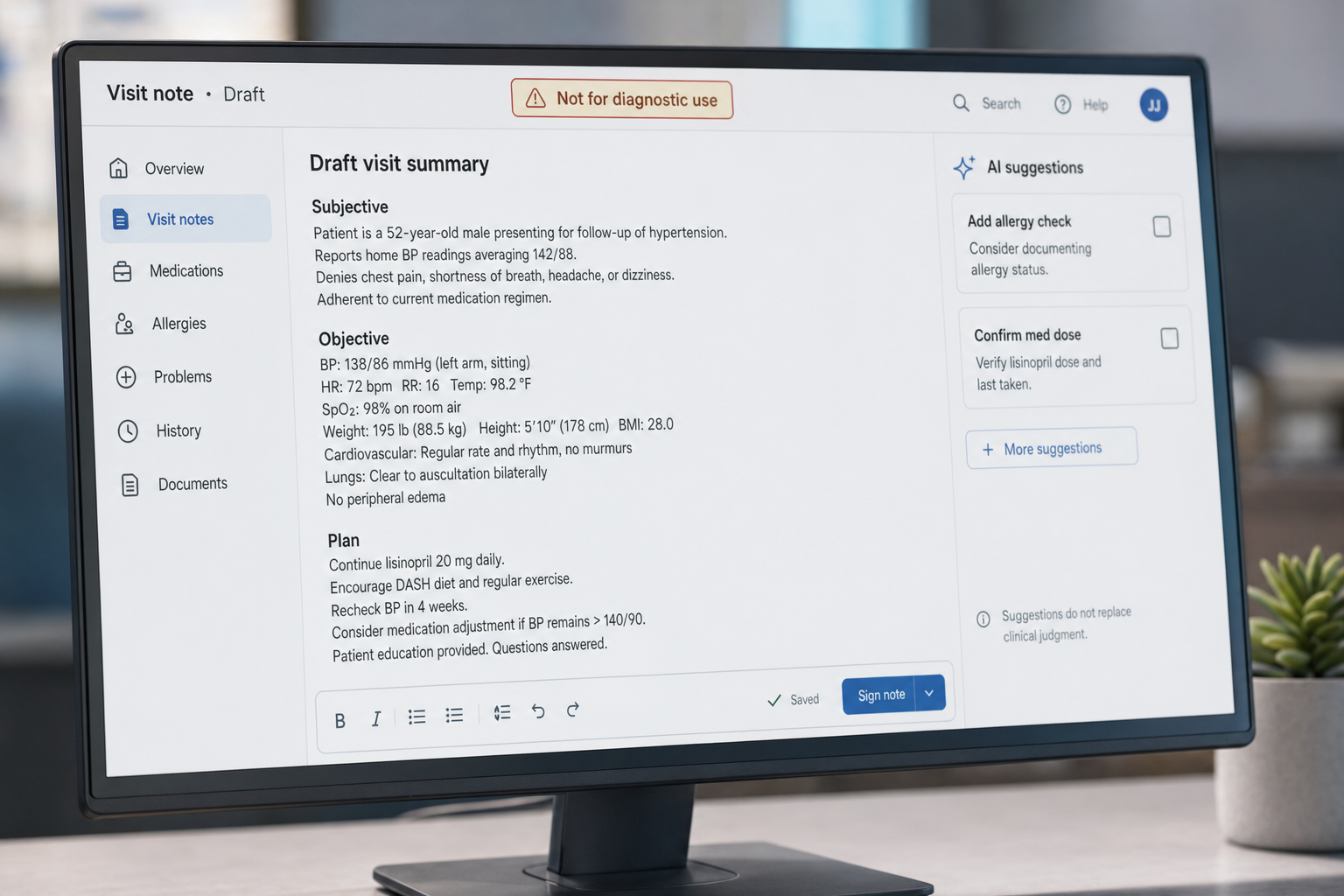

2. Drafting plain-language patient summaries

Discharge instructions, post-procedure leaflets, medication explainers — fed in as de-identified templates, V4-Flash drafts a Year 8 reading-level version in seconds. A clinician must edit before it ever reaches a patient. The model is a first-draft engine, not an authority.

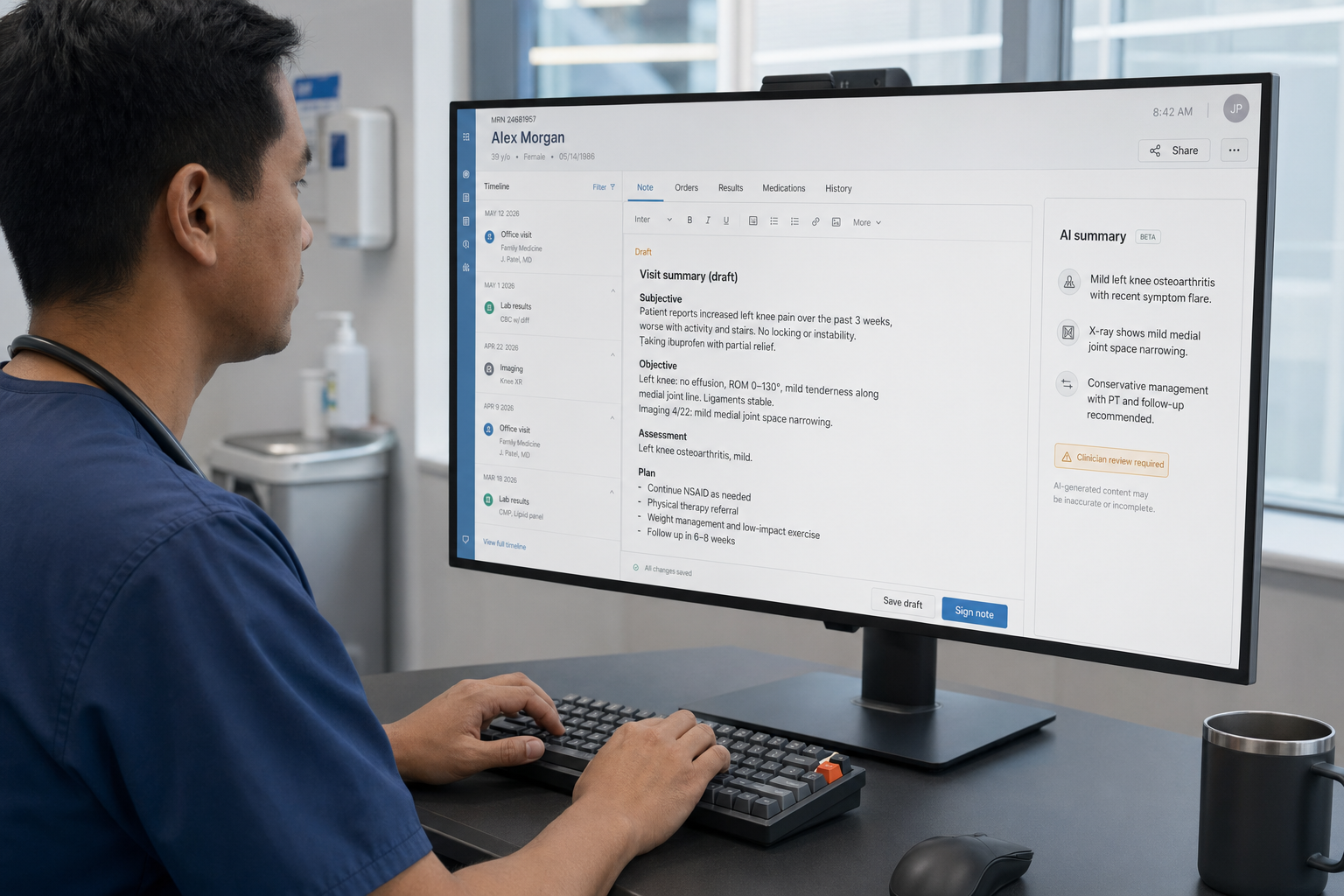

3. Clinical documentation assistance — local only

Turning a recorded consultation transcript into a SOAP note is exactly the kind of task LLMs do well, but the transcript is PHI. Run a quantised deepseek-v4-flash on hospital infrastructure (or a HIPAA-eligible cloud you control) and the data never leaves the boundary. Offline deployment is the feature most cited in the academic discussion of DeepSeek’s healthcare fit.
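One way to enforce the "local only" rule in code is a routing guard that decides where a request may go before any client is created. The sketch below is illustrative only. The local URL assumes an Ollama-style OpenAI-compatible server on its default port, and the local model tag is hypothetical; substitute whatever your own deployment exposes.

```python
from dataclasses import dataclass

HOSTED_BASE_URL = "https://api.deepseek.com"   # hosted, OpenAI-compatible API
LOCAL_BASE_URL = "http://localhost:11434/v1"   # assumption: local Ollama-style server
LOCAL_MODEL = "deepseek-v4-flash-local"        # hypothetical local model tag


@dataclass(frozen=True)
class Endpoint:
    base_url: str
    model: str


def choose_endpoint(contains_phi: bool, allow_hosted: bool = True) -> Endpoint:
    """Pick where a request may go; PHI is never routed to the hosted API."""
    if contains_phi or not allow_hosted:
        return Endpoint(LOCAL_BASE_URL, LOCAL_MODEL)
    return Endpoint(HOSTED_BASE_URL, "deepseek-v4-flash")


# A consultation transcript is PHI, so it resolves to the local endpoint.
target = choose_endpoint(contains_phi=True)
```

Defaulting `contains_phi` to `True` at the call site whenever the data class is unknown matches the "treat patient-identifiable text as PHI by default" rule above.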

4. Coding decision support (ICD-10, SNOMED, CPT)

Given a de-identified narrative, V4 maps phrases to candidate codes with confidence notes. Clinicians and coders verify; the model handles the rote lookup.

5. Patient-facing triage chatbots (with strong guardrails)

For self-service symptom triage, a tightly scoped DeepSeek deployment can route patients to “see a GP today / call 111 / call 999” categories. The system prompt must refuse diagnosis, limit topics, and log every conversation for audit. Use function calling to hand off to a human queue on red-flag symptoms.
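The handoff can be sketched as a tool definition in the OpenAI-compatible `tools` format plus a handler that validates and logs every call. The tool name, its fields, and the fail-safe rule are hypothetical choices for illustration, not part of any DeepSeek specification.

```python
import json

# Hypothetical tool definition; pass it as tools=[HANDOFF_TOOL] on the request.
HANDOFF_TOOL = {
    "type": "function",
    "function": {
        "name": "handoff_to_human",
        "description": "Escalate to a human queue when a red-flag symptom is reported.",
        "parameters": {
            "type": "object",
            "properties": {
                "urgency": {"type": "string", "enum": ["gp_today", "call_111", "call_999"]},
                "reason": {"type": "string"},
            },
            "required": ["urgency", "reason"],
        },
    },
}

ALLOWED_URGENCY = {"gp_today", "call_111", "call_999"}


def handle_tool_call(name: str, arguments: str, audit_log: list) -> dict:
    """Validate a tool call from the model and record it for audit."""
    if name != "handoff_to_human":
        raise ValueError(f"unexpected tool: {name}")
    args = json.loads(arguments)  # tool arguments arrive as a JSON string
    urgency = args.get("urgency")
    if urgency not in ALLOWED_URGENCY:
        # Design choice: an unrecognised urgency escalates to the highest level.
        urgency = "call_999"
    entry = {"tool": name, "urgency": urgency, "reason": args.get("reason", "")}
    audit_log.append(entry)
    return entry
```

In a live loop, `name` and `arguments` would come from `resp.choices[0].message.tool_calls[i].function`. Validating against an allow-list matters because the model's arguments are text it generated, not trusted input.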

6. Medical education and exam-style tutoring

Trainees can use the hosted chat to walk through differential diagnoses, drug interactions, or clinical-reasoning exercises, provided the cases are anonymised or fictional. Thinking mode (reasoning_effort="high") returns reasoning_content alongside the final content, which is genuinely useful when learners want to see the chain of reasoning, not just the answer.
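For a tutoring interface, it helps to keep the reasoning and the final answer apart so learners can reveal the reasoning on demand. The sketch below assumes thinking mode is requested with `reasoning_effort` plus an `extra_body` flag, and that the reply message exposes `reasoning_content` and `content` attributes; the helper names are mine.

```python
from types import SimpleNamespace


def thinking_kwargs(effort: str = "high") -> dict:
    """Extra keyword arguments that switch a request into thinking mode."""
    return {
        "reasoning_effort": effort,
        "extra_body": {"thinking": {"type": "enabled"}},
    }


def split_reply(message) -> tuple[str, str]:
    """Return (reasoning, answer); reasoning is '' when thinking was off."""
    reasoning = getattr(message, "reasoning_content", None) or ""
    answer = getattr(message, "content", None) or ""
    return reasoning, answer


# Stand-in for resp.choices[0].message, so the sketch runs without a network call.
demo = SimpleNamespace(
    reasoning_content="Step 1: list the candidate causes ...",
    content="Most likely explanation: ...",
)
reasoning, answer = split_reply(demo)
```

With a real client, the request would look like `client.chat.completions.create(model=..., messages=..., **thinking_kwargs())`. Using `getattr` with a default keeps the helper safe for non-thinking replies, where the reasoning attribute may be absent.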

7. Genomics and -omics interpretation

Long-context models pair well with variant tables, GWAS summaries, and pathway analyses. DeepSeek for research covers the broader pattern; the healthcare-specific twist is that you almost always want this in your own VPC.

8. Operational and back-office automation

Prior-auth letters, appeals, payer-policy summarisation, and rota optimisation. These are non-clinical text problems where DeepSeek is competitive on cost and quality. Many of the same patterns from DeepSeek for business apply.

9. Specialised fine-tunes on your own corpus

The MIT-licensed weights mean you can fine-tune on de-identified records inside your boundary. Independent groups have already done this — PatientSeek, an open-source med-legal model, fine-tunes a DeepSeek reasoner on a large corpus of patient records and runs locally for medical summaries and Q&A.

What it actually costs to run V4 against medical text

Cost calculations must enumerate all three token buckets and name the tier. Here is a worked example for an ambulatory clinic running 50,000 daily LLM calls — say, draft-note generation and coding — with a 3,000-token system prompt (cached), a 1,500-token user message (uncached), and a 600-token response. Tier: deepseek-v4-flash.

| Bucket | Tokens / day | Rate (per 1M) | Daily cost |
|---|---|---|---|
| Input, cache hit | 150,000,000 | $0.0028 | $0.42 |
| Input, cache miss | 75,000,000 | $0.14 | $10.50 |
| Output | 30,000,000 | $0.28 | $8.40 |
| Total | | | $19.32 |

That is roughly $580/month (30 days) for a workload that would cost a multiple of that on most frontier-tier APIs. But those are hosted rates, and that is the entire point of this article: for PHI you should be costing self-hosted GPU time instead, which is a different calculation. Use the DeepSeek pricing calculator for hosted estimates and the DeepSeek hardware calculator for local-deployment sizing.
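Recomputing each bucket from the rate card is a cheap sanity check on any estimate like this one. The sketch below takes the per-call token counts and per-million rates quoted in the worked example; the function name and dictionary keys are illustrative, and the 30-day month is an assumption.

```python
def daily_cost(calls: int, cached_in: int, uncached_in: int, out: int, rates: dict) -> dict:
    """Return per-bucket and total daily cost in USD.

    Token counts are per call; rates are USD per 1M tokens.
    """
    per_million = calls / 1_000_000
    buckets = {
        "cache_hit": cached_in * per_million * rates["cache_hit"],
        "cache_miss": uncached_in * per_million * rates["cache_miss"],
        "output": out * per_million * rates["output"],
    }
    buckets["total"] = sum(buckets.values())
    return buckets


# deepseek-v4-flash rates as quoted in the table; verify before relying on them.
FLASH_RATES = {"cache_hit": 0.0028, "cache_miss": 0.14, "output": 0.28}

costs = daily_cost(calls=50_000, cached_in=3_000, uncached_in=1_500,
                   out=600, rates=FLASH_RATES)
for name, usd in costs.items():
    print(f"{name:>10}: ${usd:,.2f}")
print(f"{'monthly':>10}: ${costs['total'] * 30:,.2f}")  # assumes a 30-day month
```

Swapping in a different rate dictionary reprices the same workload on another tier without touching the arithmetic.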

If your workload genuinely needs the frontier tier (complex multi-document reasoning across thousands of pages, agentic chart review), V4-Pro lists at $0.0145 cache-hit / $1.74 cache-miss / $3.48 output per million tokens, currently discounted to $0.003625 / $0.435 / $0.87 during the 75% promo through 2026-05-31. Verify both rate cards on DeepSeek API pricing before committing.

A minimal, OpenAI-compatible setup

Chat requests hit POST /chat/completions, the OpenAI-compatible endpoint. The API is stateless: the client must resend the full conversation history with every request, unlike the web app, which maintains session history for the user. Below is a minimal Python example that calls V4-Flash in non-thinking mode.

    from openai import OpenAI

    # The hosted endpoint is OpenAI-compatible, so the standard client works.
    client = OpenAI(
        base_url="https://api.deepseek.com",
        api_key="YOUR_KEY",
    )

    resp = client.chat.completions.create(
        model="deepseek-v4-flash",
        messages=[
            {"role": "system", "content": "You draft de-identified discharge summaries. Refuse PHI."},
            {"role": "user", "content": "Summarise the following case as a Year 8 patient leaflet: ..."},
        ],
        temperature=0.3,   # low temperature for clinical-style prose
        max_tokens=2000,   # cap the reply length deliberately
    )

    print(resp.choices[0].message.content)

Useful parameters in healthcare prompts:

- temperature: 0.0 for code or numeric extraction, 0.3–0.7 for clinical-style prose, 1.3 for general dialogue (DeepSeek’s official guidance for translation and conversation).

- max_tokens: set deliberately; 384,000 is the V4 ceiling.

- reasoning_effort: "high" with extra_body={"thinking": {"type": "enabled"}} when you want the model to plan before answering.

- Tool calling and streaming: both supported; streaming pairs well with chatbot UIs that surface partial answers.

- Context caching: applies automatically when the provider detects a repeated prefix; see DeepSeek context caching.
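Because the API is stateless, a multi-turn chatbot has to carry its own history. A minimal sketch of that bookkeeping follows; the `Conversation` class and the stub sender are hypothetical, and the sender is injected so the example runs without a network call.

```python
from typing import Callable


class Conversation:
    """Client-side history for a stateless chat API."""

    def __init__(self, system_prompt: str, send: Callable[[list[dict]], str]):
        # `send` takes the full message list and returns the assistant's text.
        self._send = send
        self.messages = [{"role": "system", "content": system_prompt}]

    def ask(self, user_text: str) -> str:
        self.messages.append({"role": "user", "content": user_text})
        reply = self._send(list(self.messages))  # whole history, every turn
        self.messages.append({"role": "assistant", "content": reply})
        return reply


def echo_sender(messages: list[dict]) -> str:
    """Stub sender that reports how many messages it received."""
    return f"received {len(messages)} messages"


chat = Conversation("You answer questions about fictional cases.", echo_sender)
first = chat.ask("Summarise case A.")   # sender sees system + user
second = chat.ask("Now shorten it.")    # sender sees all four messages so far
```

With a real client, `send` could be `lambda msgs: client.chat.completions.create(model="deepseek-v4-flash", messages=msgs).choices[0].message.content`. Keeping the system prompt as an unchanging first message also gives automatic context caching a stable prefix to match.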

If you maintain older code against legacy IDs, deepseek-chat and deepseek-reasoner currently route to deepseek-v4-flash until they are retired on 2026-07-24 at 15:59 UTC. Migration is a one-line model= swap; base_url does not change.

Limitations you must respect

DeepSeek does not become safe for clinical use just because the prompt is well-crafted. The published literature is clear about this.

- Privacy. DeepSeek’s privacy policy reserves the right to use user-generated content, with no documented opt-out for healthcare professionals or institutions on the hosted service. That is a HIPAA non-starter for PHI on the hosted endpoints.

- Hallucinations. Like all LLMs, DeepSeek can produce plausible but incorrect output — including invented citations, dosages, or guideline references. The PMC editorial flags hallucinations and bias as core risks requiring human oversight before any clinical application.

- Knowledge cutoff. The model’s training data has a cutoff. Treatment guidelines change. Always verify against current evidence sources.

- Regulatory status. The Italian Garante blocked the DeepSeek app over data-protection concerns in early 2025, and several US states restrict its use on government devices. Check current rules in your jurisdiction before any deployment touches public-sector healthcare.

- Not a medical device. Nothing in this article makes DeepSeek a regulated medical device. If your use case meets the FDA, MHRA, TGA, or Health Canada definition of clinical decision support that requires authorisation, an off-the-shelf LLM is not it.

Where you should reach for something else

Honest comparison matters more in healthcare than in most domains. A few cases where DeepSeek is not the best tool:

- Vetted clinical decision support: purpose-built systems like UpToDate, BMJ Best Practice, or vendor-validated CDS modules in your EHR. They are slower and pricier, but they come with vendor accountability and maintained, up-to-date content that an LLM lacks.

- Radiology and pathology image reading: use specialised, regulator-cleared image AI, not a general LLM. DeepSeek’s vision models can describe images but should not drive diagnoses.

- HIPAA-covered hosted AI: if you need a hosted API on PHI without operating your own GPUs, consider providers that sign a BAA and retain US data residency. That is a different procurement track from adopting DeepSeek.

- Highly conversational patient companions: Claude often produces gentler, better-bounded patient-facing text out of the box. See DeepSeek vs Claude.

- Mainstream EHR integrations: if your team needs Microsoft 365, Epic, or Cerner connectors today, the broader ecosystem around DeepSeek vs ChatGPT still favours the incumbents.

Getting started for clinical and research teams

- Decide your data class. If any input is PHI, plan for a self-hosted deployment from day one.

- Pilot on de-identified data. Use the hosted API for prompt design and evaluation against a small test set.

- Bring a clinician into the loop. Every output destined for a patient or chart needs a human reviewer. Log everything.

- Stand up a local environment. Follow the guides on installing DeepSeek locally and running DeepSeek on Ollama for V4-Flash on a workstation, or scale to a multi-GPU server using the DeepSeek system requirements.

- Build retrieval, not just chat. A RAG pipeline over your de-identified corpus (see the DeepSeek RAG tutorial) is more useful than free-form Q&A.

- Validate continuously. Maintain an eval set of curated medical Q&A and re-run it on every model or prompt change.
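The "validate continuously" step can start as a very small harness that is re-run on every model or prompt change. The sketch below scores answers by required keywords, which is a deliberately crude stand-in for clinician grading; the eval items, function names, and stub model are illustrative.

```python
from typing import Callable

# Tiny illustrative eval set; a real one would be clinician-curated and far larger.
EVAL_SET = [
    {"question": "Which vitamin deficiency causes scurvy?",
     "must_include": ["vitamin c"]},
    {"question": "What does SOAP stand for in a SOAP note?",
     "must_include": ["subjective", "objective", "assessment", "plan"]},
]


def run_eval(model_fn: Callable[[str], str], cases: list[dict]) -> dict:
    """Score a model function by keyword presence; return pass rate and failures."""
    failures = []
    for case in cases:
        answer = model_fn(case["question"]).lower()
        if not all(term in answer for term in case["must_include"]):
            failures.append(case["question"])
    passed = len(cases) - len(failures)
    return {"pass_rate": passed / len(cases) if cases else 0.0, "failures": failures}


def stub_model(question: str) -> str:
    """Offline stand-in for a real model call; answers only one question."""
    if "scurvy" in question:
        return "Vitamin C deficiency."
    return "I am not sure."


report = run_eval(stub_model, EVAL_SET)
```

Replacing `stub_model` with a function that calls your deployed endpoint turns this into a regression check. Storing each `report` alongside the model ID and prompt version makes drift visible over time.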

For more domain-adjacent patterns, browse the broader set of DeepSeek use cases.

DeepSeek AI Guide is an independent resource and is not affiliated with DeepSeek or its parent company. Model IDs, pricing and API behaviour change; check the official DeepSeek documentation and pricing page before committing to a production decision.

Is DeepSeek HIPAA-compliant for handling patient data?

The hosted DeepSeek chat and API are not HIPAA-compliant for protected health information. DeepSeek does not sign a Business Associate Agreement, and conversations route to servers in China. The open-weight models, run inside your own HIPAA-eligible infrastructure, can be part of a compliant workflow because the data never leaves your boundary. See DeepSeek privacy for details.

Can DeepSeek diagnose medical conditions?

No. DeepSeek is a general-purpose language model, not a regulated medical device, and it is not authorised by the FDA, MHRA, or equivalent agencies for diagnosis. It can support clinicians with literature triage, documentation drafting, and education, but every clinically relevant output needs a qualified human reviewer. The DeepSeek limitations page covers the broader caveats.

How does DeepSeek compare to ChatGPT for medical research?

Both can summarise literature and structure data competently. DeepSeek V4’s million-token context and MIT-licensed weights make it stronger for long-document review and on-premise deployment; ChatGPT has a deeper integration ecosystem and BAAs available on its enterprise tiers. The right choice depends on data sensitivity and budget. See DeepSeek vs ChatGPT.

What does it cost to run DeepSeek locally for a clinic?

A quantised deepseek-v4-flash can run on a single high-memory workstation; deepseek-v4-pro needs serious multi-GPU hardware. Hardware capex typically lands in the low five figures for Flash and well into six figures for Pro at scale, plus power and ops. Use the DeepSeek hardware calculator to size your specific workload before procurement.

Why use the open-weight V4 instead of a hosted medical AI service?

Three reasons: data residency (everything stays inside your network), cost predictability (no per-token bill scaling with usage), and the ability to fine-tune on your de-identified corpus under the MIT license. Hosted medical-AI vendors offer convenience and BAAs in return; pick based on which you value more. The DeepSeek V4 page covers what you get with the open weights.

Sources & Methodology

Primary sources

- Official: DeepSeek API documentation. Endpoints, request/response shape, model IDs. Last checked: April 30, 2026.

- Pricing: DeepSeek API pricing page. Per-1M-token rates, billing units, cache-hit pricing. Last checked: April 30, 2026.

Context sources

- Analysis: Hathr.ai, "DeepSeek AI is dangerous for healthcare". Independent compliance review flagging hosted DeepSeek vs HIPAA. Last checked: April 30, 2026.

- Analysis: PMC editorial, "DeepSeek in healthcare" (PMC11836063). Academic discussion of offline deployment, hallucination risk, Garante block. Last checked: April 30, 2026.

- Analysis: "PatientSeek: open-source med-legal DeepSeek reasoning model" (Medium). Example of a healthcare-specific DeepSeek fine-tune. Last checked: April 30, 2026.

Methodology

Information in this article was checked against the official DeepSeek documentation, the article's cited primary sources, and the deepseekai.guide pricing dataset on the date shown above. Where third-party numbers are quoted, the originating source is linked inline so the reader can verify and re-check.

Data confidence

High for facts cited inline with primary-source links; medium for context narrative.
