Using DeepSeek for Data Analysis: A Hands-On 2026 Guide

If you spend your week wrangling CSVs, writing SQL, and reverse-engineering colleagues’ notebooks, you have probably wondered whether DeepSeek is finally cheap and capable enough to take over the boring half of data analysis. I ran DeepSeek-V4-Pro (1.6T parameters, 49B activated) and DeepSeek-V4-Flash (284B parameters, 13B activated), both of which support a one-million-token context, against my usual analytics workload for two weeks. The answer is yes for most tasks and no for a specific few. This guide walks through the workflows that actually pay off (schema inference, SQL generation, pandas refactors, statistical narrative, and JSON-mode extraction) with real prompts, code, and per-task cost math. By the end you will know which V4 tier to point at which job, where to trust the output, and where to keep your hands on the wheel.

The concrete problem analysts face

Most data work is not modelling. It is the unglamorous middle: reading someone else’s schema, guessing why a column is 17% null, writing the join that nobody documented, and turning a dashboard into three sentences a director will actually read. That work is repetitive, context-heavy, and exactly the shape of problem an LLM with a long context window handles well — provided it is cheap enough to use casually and accurate enough to not waste your afternoon debugging hallucinated column names.

Two things changed in April 2026. First, DeepSeek-V4 ships as two MoE models, Pro (1.6T total/49B active parameters) and Flash (284B/13B active), both with a one-million-token context and a hybrid attention mechanism that combines Compressed Sparse Attention and Heavily Compressed Attention to improve long-context efficiency. Second, the pricing collapsed. Per million tokens, V4-Pro is currently $0.435 input and $0.87 output under a 75% promo running through 2026-05-31 (list $1.74 / $3.48), and V4-Flash is $0.14 input and $0.28 output. That pricing is what makes “throw the whole CSV at it” a reasonable default for a working analyst.

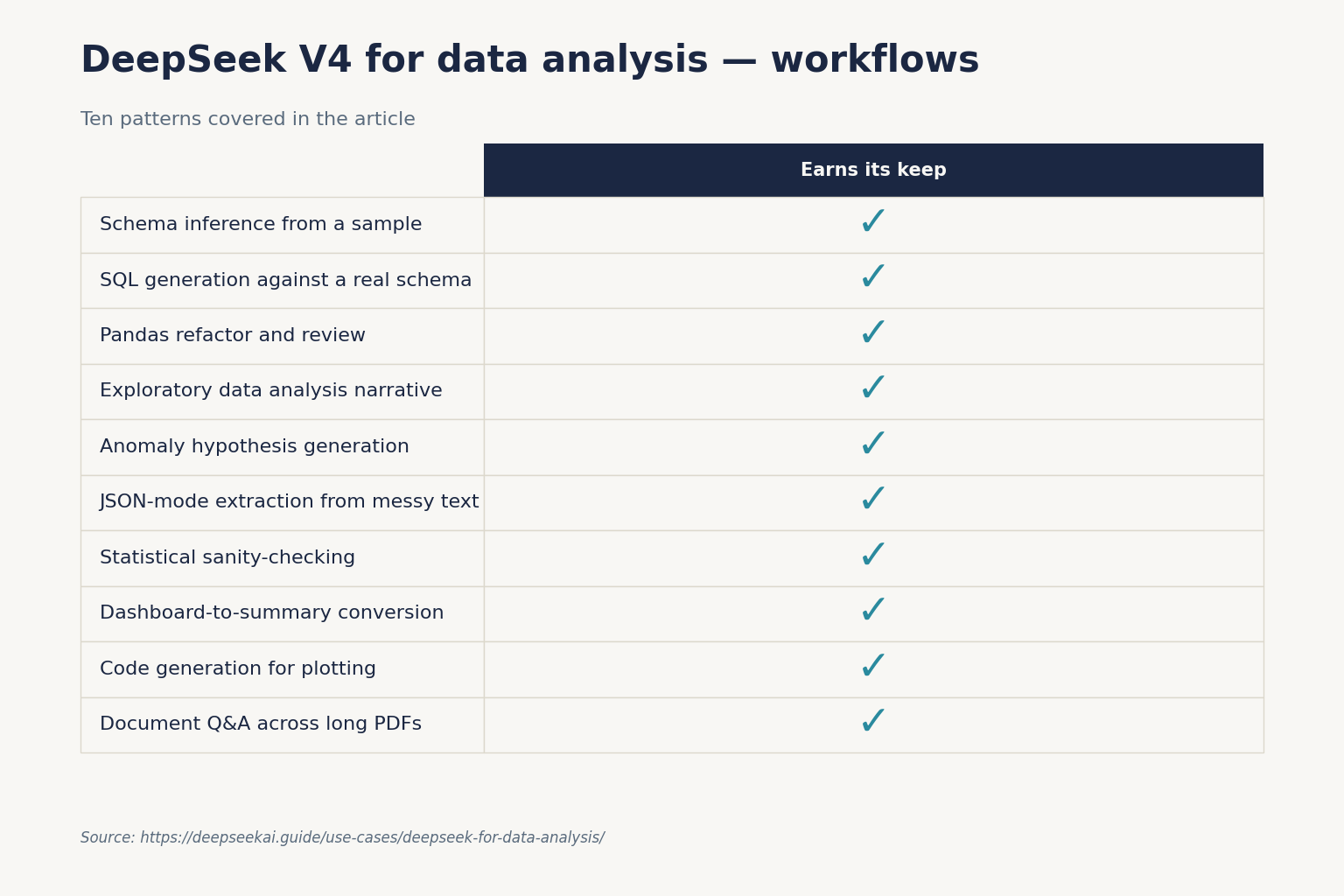

How DeepSeek helps: ten workflows that actually pay off

These are workflows I run weekly, with the prompts I use and the model tier I default to. The tier matters: V4-Flash is fine for 80% of analytical chores; V4-Pro earns its keep on multi-step reasoning over messy data.

1. Schema inference from a sample

Paste the first 200 rows of an unknown CSV and ask: “Infer column types, propose primary and foreign keys, flag columns that look like coded enumerations, and list three plausible business meanings for the column ‘flag_v2’.” V4-Flash handles this in one shot. If you have an actual data dictionary, paste it in too; at 1M context you have room for the whole thing.
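If the CSV is too large to open comfortably, a few lines of Python can cut the sample and wrap it in the prompt for you. This is a minimal stdlib sketch, not an official helper: `build_schema_prompt` is a hypothetical name, and it assumes the file has a header row.

```python
import csv
import io
from itertools import islice


def build_schema_prompt(csv_file, n_rows=200):
    """Build a schema-inference prompt from the header plus the first n_rows of a CSV.

    csv_file is any open text file object; the instructions mirror the prompt above.
    """
    reader = csv.reader(csv_file)
    header = next(reader)  # assumes the first line holds column names
    sample = list(islice(reader, n_rows))  # stop after n_rows data rows

    # Re-serialise the sample so quoting and delimiters stay consistent.
    buf = io.StringIO()
    writer = csv.writer(buf, lineterminator="\n")
    writer.writerow(header)
    writer.writerows(sample)

    instructions = (
        "Infer column types, propose primary and foreign keys, flag columns that "
        "look like coded enumerations, and list three plausible business meanings "
        "for the column 'flag_v2'."
    )
    return f"{instructions}\n\nSample ({len(sample)} rows):\n{buf.getvalue()}"
```

In practice you would open the file with `open(path, newline="")`, pass the handle in, and send the returned string as the user message.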

2. SQL generation against a real schema

Paste your CREATE TABLE statements (or information_schema output) and ask in plain English. Set temperature=0.0 for the most repeatable SQL; this matches DeepSeek’s official guidance for code and maths tasks. Always read the JOIN conditions before running the query: the model will confidently invent column names if your schema is ambiguous.
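One cheap guard against invented identifiers is to compile the generated query against your real DDL before it touches production data. The sketch below uses Python's built-in `sqlite3` module: it creates the empty tables in memory and runs `EXPLAIN` on the query, which fails with an error such as "no such column" when a name has been made up. `check_sql_against_schema` is a hypothetical helper, and SQLite's dialect is an assumption; Postgres- or BigQuery-specific syntax can fail here even when the query is fine on your warehouse.

```python
import sqlite3


def check_sql_against_schema(ddl, sql):
    """Return None if `sql` compiles against the tables in `ddl`, else the error text."""
    conn = sqlite3.connect(":memory:")
    try:
        conn.executescript(ddl)  # create empty tables from the real DDL
        conn.execute("EXPLAIN " + sql)  # compiles the query without reading any data
        return None
    except sqlite3.Error as exc:
        return str(exc)  # e.g. "no such column: o.order_total"
    finally:
        conn.close()
```

Treat it as a sanity filter rather than proof of correctness: SQLite can read an unknown double-quoted identifier as a string literal, and a query that compiles can still join on the wrong key.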

3. Pandas refactor and review

Paste a 200-line analysis notebook and ask for a vectorised version with the same output. V4-Flash is the right tier here. A useful prompt suffix: “List any places where the rewrite changes behaviour at edge cases (NaN, empty groups, ties).” That single sentence catches the silent bugs.
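The model's list of behaviour changes is still a claim you need to test. A minimal, stdlib-only sketch of that test: run the old and new implementation on the same edge-case inputs and collect every disagreement. The two mean functions are hypothetical stand-ins for notebook code; with real DataFrames you would compare outputs with `pandas.testing.assert_frame_equal` instead of `==`.

```python
import math


def same_result(a, b):
    """Compare two results, treating NaN as equal to NaN."""
    if isinstance(a, float) and isinstance(b, float) and math.isnan(a) and math.isnan(b):
        return True
    return a == b


def run_safely(fn, args):
    """Call fn(*args); an exception is recorded as a result so it can be compared too."""
    try:
        return fn(*args)
    except Exception as exc:
        return f"raised {type(exc).__name__}"


def edge_case_diffs(original, rewrite, cases):
    """Return (case_name, original_result, rewrite_result) for every disagreement."""
    diffs = []
    for name, args in cases.items():
        before, after = run_safely(original, args), run_safely(rewrite, args)
        if not same_result(before, after):
            diffs.append((name, before, after))
    return diffs


# Hypothetical "original": skips NaN and returns NaN for an empty input.
def mean_original(values):
    kept = [v for v in values if not math.isnan(v)]
    return sum(kept) / len(kept) if kept else float("nan")


# Hypothetical "rewrite": shorter, but silently changes NaN and empty-input behaviour.
def mean_rewrite(values):
    return sum(values) / len(values)


cases = {
    "plain": ([1.0, 2.0, 3.0],),
    "with_nan": ([1.0, float("nan"), 3.0],),
    "empty": ([],),
}
for name, before, after in edge_case_diffs(mean_original, mean_rewrite, cases):
    print(f"{name}: original={before!r}, rewrite={after!r}")
```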

4. Exploratory data analysis narrative

Feed a df.describe() output plus a few value_counts() tables. Ask for a 200-word “what is unusual here” summary. This is where you want temperature=1.0 — DeepSeek’s recommended setting for data analysis tasks — to get a more useful spread of hypotheses.

5. Anomaly hypothesis generation

Give it a time series and a list of known events. Ask: “For each spike larger than 2σ, propose three plausible causes and rank by which is most testable from the data we have.” V4-Pro with thinking enabled is worth the extra cost here.
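It is worth finding the spikes yourself before asking for causes, so the model reasons about points you chose rather than its own reading of a chart. A minimal sketch with Python's `statistics` module; the baseline (whole-series mean and population standard deviation) is an assumption, since “2σ” alone does not fix one, and the function name is hypothetical.

```python
import statistics


def spikes_over_k_sigma(series, k=2.0):
    """Return (index, value) for points more than k standard deviations above the mean.

    Baseline: mean and population standard deviation of the whole series.
    Dips below the mean are ignored; compare abs(x - mu) if you want those too.
    """
    mu = statistics.fmean(series)
    sigma = statistics.pstdev(series)
    if sigma == 0:
        return []  # a flat series has no spikes
    return [(i, x) for i, x in enumerate(series) if x - mu > k * sigma]
```

Paste the returned indices (or their dates) alongside your list of known events, so each proposed cause can be checked against something concrete. For trending data, a rolling-window baseline is a common alternative.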

6. JSON-mode extraction from messy text

This is the workflow that most often replaces a regex-and-prayer script. A minimal Python example using the OpenAI SDK against the POST /chat/completions endpoint:

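```python
import json
import os
from pathlib import Path

from openai import OpenAI

client = OpenAI(
    base_url="https://api.deepseek.com",
    api_key=os.environ["DEEPSEEK_API_KEY"],  # assumed env var name; any secret store works
)

invoice_text = Path("invoice.txt").read_text()  # hypothetical input: raw text of one invoice

resp = client.chat.completions.create(
    model="deepseek-v4-flash",
    response_format={"type": "json_object"},
    max_tokens=2000,  # high enough that the JSON object is not cut off mid-way
    temperature=1.0,
    messages=[
        {"role": "system",
         "content": "Return json matching this schema: "
                    "{invoice_id: str, total: float, line_items: [...]}"},
        {"role": "user", "content": invoice_text},
    ],
)

# May raise json.JSONDecodeError if the content is empty or invalid.
data = json.loads(resp.choices[0].message.content)
```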

Three caveats the docs are explicit about: JSON mode is designed to return valid JSON but does not guarantee it, and the model can occasionally return empty content; your prompt must include the word “json” and a small example schema; and max_tokens must be high enough that the response cannot be truncated mid-object.
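Given those caveats, a minimal validator plus a single retry might look like the sketch below. `parse_invoice` and `extract_with_one_retry` are hypothetical names, the required keys mirror the example schema in the system prompt, and `call_model` is any zero-argument function that makes one API call and returns `resp.choices[0].message.content`.

```python
import json

REQUIRED_KEYS = {"invoice_id", "total", "line_items"}  # from the example schema


def parse_invoice(content):
    """Return the parsed dict, or None if content is empty, invalid JSON, or missing keys."""
    if not content or not content.strip():
        return None  # JSON mode can occasionally return empty content
    try:
        data = json.loads(content)
    except json.JSONDecodeError:
        return None  # e.g. a response truncated mid-object
    if not isinstance(data, dict) or not REQUIRED_KEYS.issubset(data):
        return None
    return data


def extract_with_one_retry(call_model):
    """Call the model, validate, and retry exactly once before giving up."""
    for _ in range(2):  # first attempt plus a single retry
        data = parse_invoice(call_model())
        if data is not None:
            return data
    raise ValueError("no valid JSON after one retry; route this record to manual review")
```

To use it, wrap the request above in a lambda that returns the message content and pass that in; records that still fail land in a review queue instead of silently corrupting your table.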

7. Statistical sanity-checking

Paste a paragraph of analysis (“conversion lifted 14% after the redesign”) plus the source numbers. Ask the model to identify which claims are supported, which require a significance test you have not run, and which are flat-out wrong. V4-Pro thinking mode catches subtle errors V4-Flash misses.
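Because the model cannot run the test itself, here is the one you would most often need for a claim like “conversion lifted 14%”: a two-sided, two-proportion z-test. This is a stdlib sketch under the usual assumptions (independent visitors, samples large enough for the normal approximation), and the visitor counts in the usage lines are made up for illustration.

```python
import math


def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Two-sided z-test for a difference in conversion rates, using a pooled standard error.

    Returns (relative_lift, z, p_value), where relative_lift is (p_b - p_a) / p_a.
    """
    p_a, p_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / se
    p_value = math.erfc(abs(z) / math.sqrt(2))  # two-sided tail of the standard normal
    return (p_b - p_a) / p_a, z, p_value


# Made-up counts: the same 14% relative lift at two sample sizes.
big = two_proportion_z_test(500, 10_000, 570, 10_000)
small = two_proportion_z_test(50, 1_000, 57, 1_000)
print(f"n=10,000 per arm: lift={big[0]:.0%}, p={big[2]:.3f}")
print(f"n=1,000 per arm:  lift={small[0]:.0%}, p={small[2]:.3f}")
```

The same 14% lift clears the 5% significance level with 10,000 visitors per arm and is nowhere near it with 1,000, which is exactly the distinction the audit prompt asks the model to flag.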

8. Dashboard-to-summary conversion

Screenshot the dashboard, dump the underlying numbers as a table, ask for an executive summary in three bullets and one risk. Treat the bullets as a draft, not the final write-up.

9. Code generation for plotting

“Write matplotlib code to make a small-multiples chart with one subplot per region, shared y-axis, and the COVID period shaded grey.” V4-Flash, temperature=0.0. Faster than digging through Stack Overflow.

10. Document Q&A across long PDFs

Convert PDFs to text, paste the lot in, ask questions. The 1M-token context handles a year of board packs comfortably. For repeat queries against the same corpus, context caching pays off — see the cost section below.
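PDF-to-text conversion is outside this guide's scope. Assuming it has already produced one `.txt` file per document in a single folder, the sketch below assembles them into a messages list. Reading files in sorted order with fixed separators keeps the system message byte-identical between questions, which is what lets repeat queries hit the prefix cache. `build_corpus_messages` and the folder layout are hypothetical.

```python
from pathlib import Path


def build_corpus_messages(text_dir, question):
    """Return a messages list: every .txt file in text_dir as a stable system prefix,
    followed by the question as the only part that changes between calls."""
    parts = []
    # sorted() fixes the file order, so the same folder always yields the same bytes
    for path in sorted(Path(text_dir).glob("*.txt")):
        body = path.read_text(encoding="utf-8")
        parts.append(f"=== {path.name} ===\n{body}")
    corpus = "\n\n".join(parts)
    return [
        {"role": "system", "content": "Answer only from the documents below.\n\n" + corpus},
        {"role": "user", "content": question},
    ]
```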

Picking a tier: V4-Flash vs V4-Pro for analytics

| Task | Tier | Why |
|---|---|---|
| SQL drafts, pandas refactors, JSON extraction | V4-Flash | Cheap, fast, good enough |
| EDA narrative, schema inference | V4-Flash | Volume work, low risk per call |
| Multi-step causal hypotheses | V4-Pro (thinking) | Reasoning depth matters |
| Statistical claim audits | V4-Pro (thinking) | Fewer subtle false-positives |
| Long-doc Q&A with caching | Either; start Flash | Cache hit makes price negligible |
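If you call the API from scripts, the same table can live in code as a small routing map. This is a hypothetical sketch: the task labels are illustrative, while the model IDs, temperatures and thinking switch are the ones quoted elsewhere in this guide. Thinking-mode routes leave temperature unset, which is my assumption, since the guide only quotes temperatures for non-thinking calls.

```python
# Request options that switch on thinking mode (as shown in the thinking-mode section).
THINKING = {"extra_body": {"thinking": {"type": "enabled"}}}

# Hypothetical task labels mapped to keyword arguments for chat.completions.create().
ROUTES = {
    "sql_draft": {"model": "deepseek-v4-flash", "temperature": 0.0},
    "pandas_refactor": {"model": "deepseek-v4-flash", "temperature": 0.0},
    "json_extraction": {"model": "deepseek-v4-flash", "temperature": 1.0},
    "eda_narrative": {"model": "deepseek-v4-flash", "temperature": 1.0},
    "schema_inference": {"model": "deepseek-v4-flash", "temperature": 1.0},
    "causal_hypotheses": {"model": "deepseek-v4-pro", **THINKING},
    "claim_audit": {"model": "deepseek-v4-pro", **THINKING},
    "long_doc_qa": {"model": "deepseek-v4-flash", "temperature": 1.0},
}


def request_kwargs(task):
    """Return a copy of the options for `task`; unknown tasks default to V4-Flash."""
    return dict(ROUTES.get(task, {"model": "deepseek-v4-flash"}))
```

A call then reads `client.chat.completions.create(messages=messages, **request_kwargs("claim_audit"))`, which keeps the tier decision in one place when the table changes.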

The cost math, with both tiers

Here is a realistic month for a single analyst running 5,000 calls: a 3,000-token cached system prompt (schema + instructions), 500-token user message per call, 800-token average response. Both totals enumerate all three buckets — cache-hit input, cache-miss input, and output — because the user message on each call is still a miss against the cached prefix.

Worked example: deepseek-v4-flash

```
Cache-hit input : 3,000 × 5,000 = 15,000,000 tokens × $0.0028/M = $0.04
Cache-miss input:   500 × 5,000 =  2,500,000 tokens × $0.14/M   = $0.35
Output          :   800 × 5,000 =  4,000,000 tokens × $0.28/M   = $1.12
                                                                 ------
Total                                                             $1.51
```

Worked example: deepseek-v4-pro

```
Cache-hit input : 15,000,000 tokens × $0.0145/M (list; promo $0.003625 through 2026-05-31) = $0.22
Cache-miss input:  2,500,000 tokens × $1.74/M (list)                                     = $4.35
Output          :  4,000,000 tokens × $3.48/M (list)                                     = $13.92
                                                                                           ------
Total                                                                                      $18.49
```
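To rerun the worked examples with your own volumes, the arithmetic fits in a few lines of Python. The function name is hypothetical, and the rates are the ones quoted in this section (V4-Pro at list price), so update them from the pricing page before relying on the output.

```python
def monthly_cost(calls, cached_prefix_tokens, user_tokens, output_tokens,
                 hit_rate, miss_rate, output_rate):
    """Return (cache_hit, cache_miss, output, total) in USD; rates are USD per 1M tokens."""
    per_million = 1_000_000
    hit = calls * cached_prefix_tokens * hit_rate / per_million
    miss = calls * user_tokens * miss_rate / per_million  # user message misses the cache
    out = calls * output_tokens * output_rate / per_million
    return hit, miss, out, hit + miss + out


# 5,000 calls: 3,000-token cached prefix, 500-token user message, 800-token reply.
flash = monthly_cost(5_000, 3_000, 500, 800, hit_rate=0.0028, miss_rate=0.14, output_rate=0.28)
pro = monthly_cost(5_000, 3_000, 500, 800, hit_rate=0.0145, miss_rate=1.74, output_rate=3.48)
for name, parts in (("deepseek-v4-flash", flash), ("deepseek-v4-pro", pro)):
    print(name, " + ".join(f"${p:.2f}" for p in parts[:3]), f"= ${parts[3]:.2f}")
```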

Cache-hit pricing is automatic: every request with a repeated prefix against the same account benefits with no opt-in, but prefixes must be at least 1,024 tokens long and match byte-for-byte. Pin your system prompt above that threshold and keep it stable. For pricing comparisons against competitors, see our DeepSeek API pricing breakdown and run your own numbers in the DeepSeek pricing calculator.
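A cheap way to notice when a “stable” system prompt has quietly changed is to log a fingerprint of it on every deploy. The sketch below hashes the prompt with SHA-256 and makes a rough token estimate. The ratio of about 0.3 tokens per English character is an approximation, not DeepSeek's tokenizer, so treat `likely_cacheable` as a hint and confirm cache hits in the usage block of real responses. The function name is hypothetical.

```python
import hashlib


def prefix_report(system_prompt, chars_per_token=3.3):
    """Fingerprint a system prompt and roughly estimate whether it clears 1,024 tokens.

    Any byte-level edit changes the digest, which also means the cached prefix
    no longer matches. chars_per_token is a rough assumption for English text.
    """
    digest = hashlib.sha256(system_prompt.encode("utf-8")).hexdigest()
    approx_tokens = int(len(system_prompt) / chars_per_token)
    return {
        "sha256": digest[:12],  # short form is enough for a deploy log
        "approx_tokens": approx_tokens,
        "likely_cacheable": approx_tokens >= 1_024,  # threshold quoted in this guide
    }
```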

Setting it up: from API key to first query

The chat endpoint is POST /chat/completions, the OpenAI-compatible surface at https://api.deepseek.com. Both V4 models support the OpenAI ChatCompletions format and the Anthropic API format, so whichever SDK you already use will work — swap base_url and api_key, point model at deepseek-v4-flash or deepseek-v4-pro, done.

The API is stateless: each call must include the full message history. The web chat at chat.deepseek.com keeps session state for you; the API does not. If you are migrating a legacy integration, note that deepseek-chat and deepseek-reasoner currently route to deepseek-v4-flash in non-thinking and thinking mode respectively, and will be fully retired and inaccessible after July 24, 2026, 15:59 UTC. Migrating is a one-line model= change; no base URL update is needed. Step-by-step setup lives in the DeepSeek API getting started tutorial.
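Because the API keeps no state, your code owns the history. A minimal sketch: `Conversation` and `make_send_fn` are hypothetical names, the client is duck-typed (any OpenAI-compatible client pointed at https://api.deepseek.com), and the history keeps only each reply's final `content`, not any reasoning trace.

```python
class Conversation:
    """Keep the whole message history client-side, because the API stores none."""

    def __init__(self, system_prompt, send_fn):
        # send_fn: any callable that takes a messages list and returns reply text
        self.messages = [{"role": "system", "content": system_prompt}]
        self.send_fn = send_fn

    def ask(self, text):
        self.messages.append({"role": "user", "content": text})
        # Every call resends the full history; nothing is remembered server-side.
        reply = self.send_fn(list(self.messages))
        # Store only the final answer text, not any reasoning trace.
        self.messages.append({"role": "assistant", "content": reply})
        return reply


def make_send_fn(client, model="deepseek-v4-flash"):
    """Adapt an OpenAI-compatible client to the send_fn shape Conversation expects."""

    def send(messages):
        resp = client.chat.completions.create(model=model, messages=messages)
        return resp.choices[0].message.content

    return send
```

With the client from the JSON example, `Conversation(system_prompt, make_send_fn(client))` gives you a follow-up-friendly session; the growing message list is also why a stable system prompt at the front pays off through caching.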

Thinking mode for analytical reasoning

For statistical audits and multi-step hypotheses, enable thinking. Thinking mode is a request parameter on either V4 model, not a separate model ID. The response returns reasoning_content alongside the final content:

```python
# client: the OpenAI client configured for https://api.deepseek.com, as above
resp = client.chat.completions.create(
    model="deepseek-v4-pro",
    messages=[...],
    reasoning_effort="high",
    extra_body={"thinking": {"type": "enabled"}},
)

message = resp.choices[0].message
trace = message.reasoning_content  # the reasoning trace
answer = message.content           # the final answer
```

Use reasoning_effort="max" (the Think Max reasoning mode) only when correctness justifies the extra tokens, and in that mode set the context window to at least 384K tokens to avoid truncation. More integration recipes are in the DeepSeek Python integration guide.

Limitations that matter for analysts

- Hallucinated column and table names. If your schema is ambiguous or you paste only sample rows, the model invents plausible identifiers. Always paste the real DDL.

- Arithmetic on long tables. Do not ask the model to “sum this column with 50,000 rows.” Have it write the SQL or pandas code, then run it yourself.

- Statistical tests are described, not run. The model can name the right test and explain the assumptions; verifying with actual data needs Python or R.

- Long-context retrieval trails Claude. Claude still outperforms V4-Pro on long-context retrieval benchmarks; if your workload depends on needle-in-a-haystack retrieval across a million tokens, the per-token savings may not make up for the quality gap.

- No native code execution. DeepSeek does not ship a sandboxed interpreter. Pair it with a local Jupyter kernel or a tool-calling harness.

- Privacy. API requests are processed on DeepSeek’s servers in China. Do not paste regulated personal data, customer PII or commercially sensitive datasets without checking your compliance posture; see DeepSeek privacy for the trade-offs.

Honest alternatives for specific sub-tasks

- If you need a sandboxed Python interpreter built in: ChatGPT’s Advanced Data Analysis is still the smoothest one-click experience; see our DeepSeek vs ChatGPT comparison.

- If your work is dominated by long-document retrieval: Claude is worth pricing alongside; see DeepSeek vs Claude.

- If you want fully local inference for sensitive data: a quantised V4-Flash on a single workstation is now plausible — start with running DeepSeek on Ollama.

- For a managed retrieval layer: wire DeepSeek into a retrieval-augmented generation (RAG) stack rather than relying on raw context; the DeepSeek RAG tutorial walks through one.

Getting started for a working analyst

- Sign up and grab a key; see how to get a DeepSeek API key.

- Install the OpenAI Python SDK and point base_url at https://api.deepseek.com.

- Default to deepseek-v4-flash with temperature=1.0 for analytical work and temperature=0.0 for code or SQL.

- Build a stable, >1,024-token system prompt that includes your schema, conventions, and any glossary. The cache-hit discount is the single biggest cost lever.

- Reach for deepseek-v4-pro with thinking only when V4-Flash output disappoints. Most days it will not.

For a wider tour of analytical and adjacent workflows, browse the DeepSeek use cases hub, and if your analysis bleeds into modelling work, the DeepSeek for research piece picks up where this one ends.

DeepSeek AI Guide is an independent resource and is not affiliated with DeepSeek or its parent company. Model IDs, pricing and API behaviour change; check the official DeepSeek documentation and pricing page before committing to a production decision.

Can DeepSeek replace a Python data analyst?

No, but it absorbs a meaningful slice of the busywork. DeepSeek is good at drafting SQL, refactoring pandas, narrating exploratory analyses, and extracting structured fields from messy text. It cannot run code, verify statistics on real data, or replace judgement about what is worth analysing in the first place. Use it as a fast junior, not a decision-maker. Walk through concrete prompt patterns in the DeepSeek prompt engineering guide.

What temperature should I use for data analysis tasks?

DeepSeek’s official guidance recommends temperature=1.0 for data analysis and data-cleaning workflows, 0.0 for SQL or Python code generation, and 1.3 for general conversation and translation. Higher temperatures help when you want a spread of hypotheses; zero is right when the answer must be repeatable and runnable. More parameter detail is in the DeepSeek API best practices reference.

How much does DeepSeek cost for a typical analyst’s workload?

For around 5,000 calls a month with a cached system prompt, V4-Flash comes in under $2 and V4-Pro under $25. The dominant cost is output tokens, so cap max_tokens and ask for terse responses. Build a stable system prompt above 1,024 tokens to qualify for the cache-hit rate. Run your own scenarios in the DeepSeek cost estimator.

Is DeepSeek’s JSON mode safe for production extraction pipelines?

It is designed to return valid JSON, not guaranteed. Always include the word “json” in your prompt with a small example schema, set max_tokens high enough to avoid truncation, and handle the case where the response is empty or invalid. Wrap calls in a validator and a single retry. Setup details live in the DeepSeek API JSON mode reference.

Does DeepSeek work with pandas notebooks and Jupyter?

Yes, via the API. DeepSeek does not ship its own interpreter, but you can call it from any notebook with the OpenAI Python SDK and pipe responses straight into your dataframes. The pattern is: model writes the code, you run it locally, paste any errors back. The DeepSeek Python integration tutorial covers the wiring end-to-end.

Sources & Methodology

Primary sources

- Official: DeepSeek API documentation (endpoints, request/response shape, model IDs). Last checked: April 30, 2026.

- Pricing: DeepSeek API pricing page (per-1M-token rates, billing units, cache-hit pricing). Last checked: April 30, 2026.

Methodology

Information in this article was checked against the official DeepSeek documentation, the article's cited primary sources, and the deepseekai.guide pricing dataset on the date shown above. Where third-party numbers are quoted, the originating source is linked inline so the reader can verify and re-check.

Data confidence

High for facts cited inline with primary-source links; medium for context narrative.

Editorial note

AI tooling moves quickly. If you spot an outdated number or a broken link, the contact link in the footer is the fastest way to flag it for the editorial team.