DeepSeek for Lawyers: A Practitioner’s Guide to AI in Legal Work

Can a Chinese open-weight model help you draft a discovery response, summarise a 400-page deposition, or pressure-test a contract clause without inventing case law? That is the question every partner asking about DeepSeek for lawyers actually wants answered. The honest reply: yes for some tasks, absolutely not for others, and the line between them matters more than the brochure-grade benchmark numbers.

I have used DeepSeek in production through V3, V3.2, R1 and now V4, alongside ChatGPT, Claude and Gemini, on real legal-adjacent workloads — contracts, regulatory memos, deposition prep. This guide walks through the workflows where DeepSeek genuinely earns its place in a legal stack, the workflows where it shouldn’t get near a court filing, and the prompts, costs and verification steps that separate the two.

The concrete problem: lawyers need volume without fabrication

Legal work is text-heavy and citation-sensitive. A single matter can produce thousands of pages of discovery; a transactional close can hinge on a redline buried on page 84 of a master services agreement. Generative AI is genuinely useful at this scale — and genuinely dangerous when it invents a case.

The cautionary record is now well-established. In Mata v. Avianca, two New York attorneys submitted a brief containing six fabricated case citations from ChatGPT; Judge P. Kevin Castel dismissed the case and ordered the plaintiff’s attorneys to pay a $5,000 fine, noting numerous inconsistencies in the opinion summaries and describing one of the legal analyses as “gibberish.” That was June 2023. The problem has not gone away — a database maintained by Damien Charlotin documents the progression from a handful of cases in 2023 to over 300 identified instances of AI hallucinations in court filings, and federal courts continued sanctioning attorneys through 2025 and into 2026.

The lesson is not “don’t use AI.” It is: pick the right tool for the right task, verify everything that touches a tribunal, and understand what your model can and cannot do. DeepSeek’s V4 generation, released April 24, 2026, changes the calculus for legal teams in two specific ways: a 1-million-token context window as the default, and pricing low enough to make per-document workflows economically rational.

What DeepSeek V4 brings to a legal stack

DeepSeek published the V4 Preview on April 24, 2026 — an open-weight Mixture-of-Experts series. Two models ship: V4-Pro at 1.6 trillion total parameters with 49 billion activated per token, and V4-Flash at 284 billion total / 13 billion active. Both support native 1M context, and both are open weights. For lawyers, three properties matter:

- 1,000,000-token context on both tiers, with output up to 384,000 tokens. A full deposition transcript, a master agreement plus all schedules, or a year of board minutes all fit in a single prompt without retrieval gymnastics.

- Two thinking modes on either tier, set as a request parameter rather than a separate model. You can ask V4-Flash for a quick summary or V4-Pro to reason carefully through a multi-step argument.

- Open weights under MIT. A firm with the hardware can host the Flash variant on its own infrastructure, keeping privileged material off any third-party server.

For an architectural deep-dive, see our page on DeepSeek V4; for the cost-efficient tier most firms will start with, the DeepSeek V4-Flash page covers parameters and trade-offs in detail.

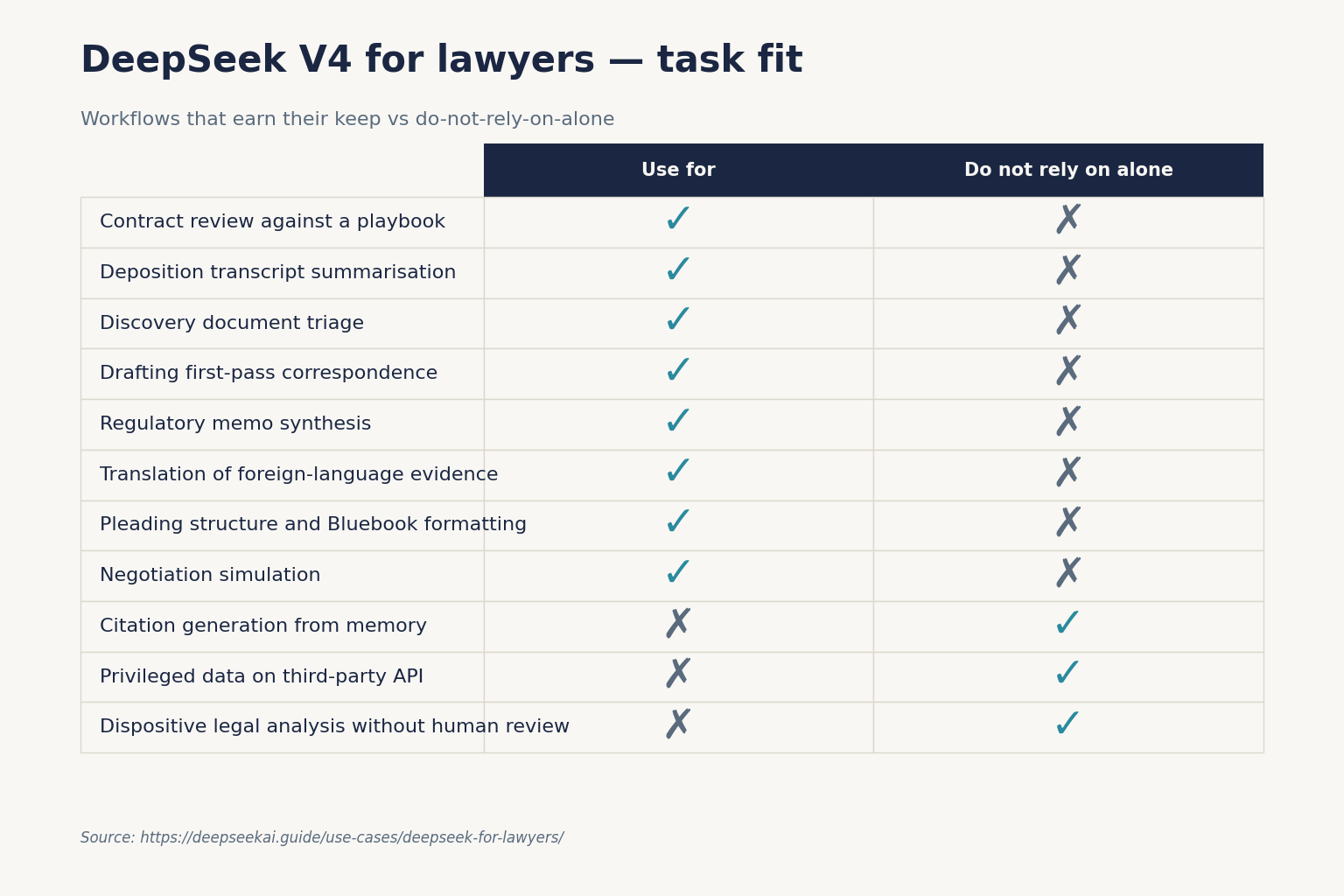

Ten workflows where DeepSeek earns its keep

These are the legal-adjacent tasks where I have personally seen V4 produce work I would build on (after review). Each comes with a prompt scaffold you can adapt.

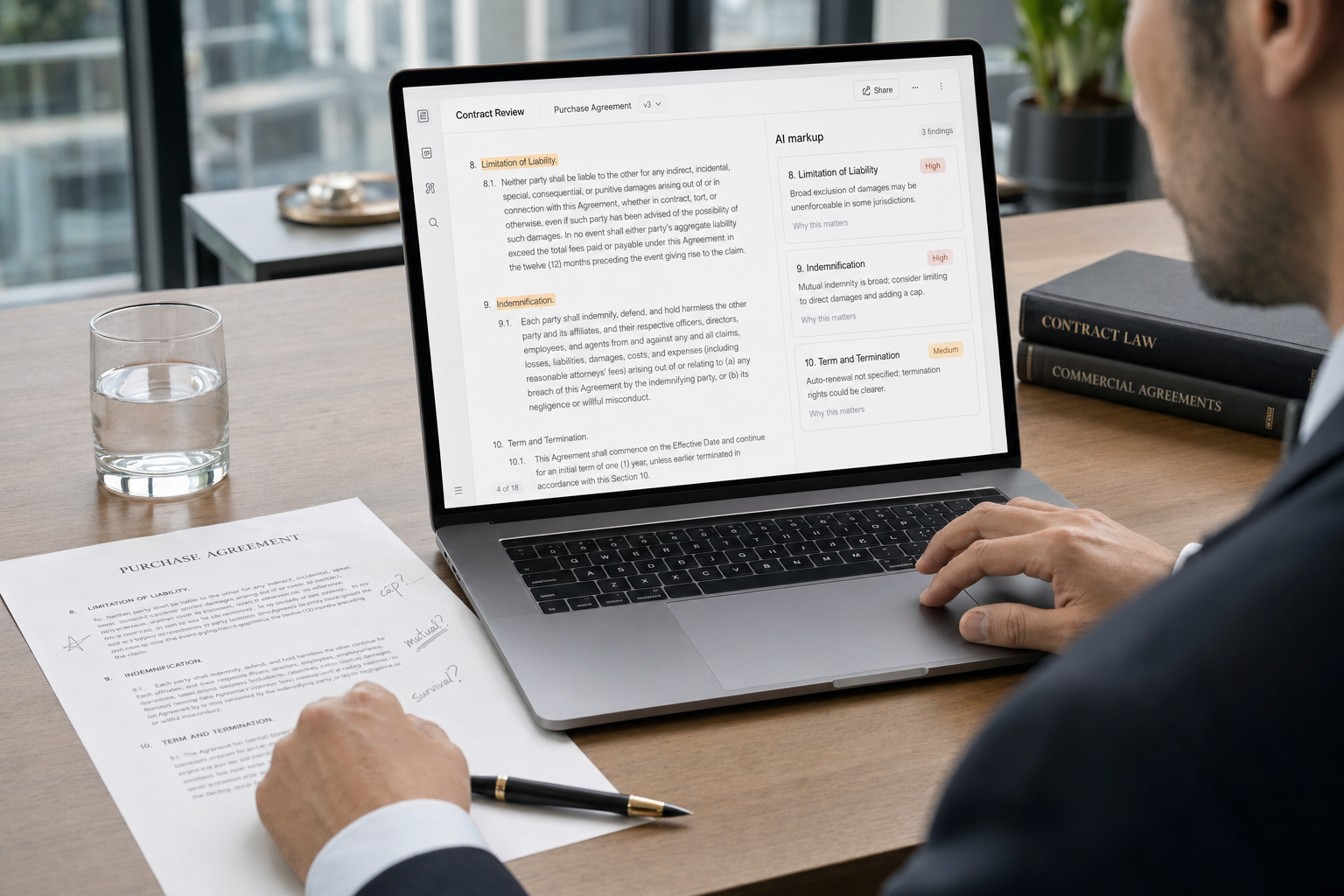

1. Contract review against a playbook

Paste the agreement and your firm’s clause playbook into a single V4-Pro request with thinking mode enabled. Ask for a clause-by-clause comparison, flagging deviations and proposing fallback language. The 1M context handles even the most baroque syndicated-loan documents in one shot.

    System: You are reviewing a draft agreement against [Firm] playbook.
    For each clause, output: clause name, deviation from playbook (if any),
    risk level (low/medium/high), suggested redline. Do not invent law.
    User: [paste full agreement] [paste playbook]

2. Deposition transcript summarisation

Feed the full transcript and ask for a topic-indexed summary with line-and-page cites. V4 is reliable at extractive tasks like this because the source text is in the context — it is summarising, not recalling.
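One way to make those cites checkable is to label every transcript line before sending it, then confirm that each cite in the returned summary points at a line that actually exists. The sketch below is illustrative only: the `pages` structure, the `page:line` cite format and both function names are assumptions, not anything DeepSeek prescribes, and the pattern will also match clock times such as 10:30.

```python
import re

# Assumed cite format: "12:4" or "12:4-9" (page:line or page:line-line).
CITE_RE = re.compile(r"\b(\d+):(\d+)(?:-(\d+))?\b")


def label_transcript(pages: dict[int, list[str]]) -> str:
    """Prefix every line with [page:line] so the model can cite it."""
    out = []
    for page in sorted(pages):
        for line_no, text in enumerate(pages[page], start=1):
            out.append(f"[{page}:{line_no}] {text}")
    return "\n".join(out)


def invalid_cites(summary: str, pages: dict[int, list[str]]) -> list[str]:
    """Return cites in the summary that point outside the transcript."""
    bad = []
    for match in CITE_RE.finditer(summary):
        page, start = int(match.group(1)), int(match.group(2))
        end = int(match.group(3)) if match.group(3) else start
        n_lines = len(pages.get(page, []))
        # A cite is valid only if the whole line range exists on that page.
        if not (1 <= start <= end <= n_lines):
            bad.append(match.group(0))
    return bad
```

An empty list from `invalid_cites` only shows that the cites resolve; a reviewer still needs to confirm that the cited lines say what the summary claims.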

3. Discovery document triage

Classify documents as responsive, non-responsive, or privileged-flag-for-review. Run V4-Flash in non-thinking mode for cost; reserve V4-Pro for ambiguous edge cases. Pair with our retrieval-augmented generation walkthrough if your corpus exceeds even a million tokens.
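The Flash-first, Pro-on-ambiguity pattern can be sketched as two small helpers. This is a minimal illustration under stated assumptions: the JSON reply shape (`label`, `confidence`), the 0.8 threshold and the function names are hypothetical, and the first pass assumes that omitting the `thinking` parameter leaves thinking off, which you should confirm against the API documentation. No network call is made here; `build_request` only prepares keyword arguments for `client.chat.completions.create()`.

```python
import json

LABELS = {"responsive", "non_responsive", "privileged_review"}

TRIAGE_PROMPT = (
    "Classify the document for discovery. Reply in json as "
    '{"label": "responsive|non_responsive|privileged_review", '
    '"confidence": 0.0-1.0}.'
)


def build_request(document: str, escalate: bool = False) -> dict:
    """Keyword arguments for client.chat.completions.create()."""
    request = {
        "model": "deepseek-v4-pro" if escalate else "deepseek-v4-flash",
        "messages": [
            {"role": "system", "content": TRIAGE_PROMPT},
            {"role": "user", "content": document},
        ],
        "response_format": {"type": "json_object"},
        "temperature": 0.0,
    }
    if escalate:
        # Thinking only on the escalated pass, to keep the bulk run cheap.
        request["extra_body"] = {"thinking": {"type": "enabled"}}
    return request


def needs_escalation(reply_text: str, threshold: float = 0.8) -> bool:
    """True when the first-pass answer is unusable or not confident enough."""
    try:
        data = json.loads(reply_text)
        label, confidence = data["label"], float(data["confidence"])
    except (ValueError, KeyError, TypeError):
        return True
    return label not in LABELS or confidence < threshold
```

Model-reported confidence is a rough signal at best, so anything flagged as privileged should still go to a human reviewer regardless of the score.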

4. Drafting first-pass correspondence

Demand letters, status updates to clients, scheduling letters — anything where the structure is templated and the facts come from you. Set temperature=1.3 for natural prose.

5. Regulatory memo synthesis

Provide the relevant statute, regulation and any published guidance as context. Ask V4-Pro in thinking mode to produce an issue-spotting memo. Critically: provide the source text yourself. Do not ask the model to recall what the regulation says.

6. Translation of foreign-language evidence

DeepSeek handles translation competently across major languages. Useful for cross-border matters where you need a working translation before commissioning a certified one.

7. Pleading structure and Bluebook formatting

Have V4 reformat a draft to local rules — headings, citation style, signature blocks. Mechanical work that humans get wrong when tired.

8. Negotiation simulation

Role-play opposing counsel before a mediation. Feed the model the issues list and ask it to argue the other side. Surfaces weaknesses in your own position cheaply.

9. Internal client intake summarisation

Convert a long intake interview transcript into a structured matter-opening memo with conflict-check fields populated.

10. Time-entry narrative generation

From a list of activities, generate compliant time entries that match firm style. Saves the dreaded Friday-afternoon billing exercise.

For a wider lens on adjacent disciplines, our DeepSeek for legal research page covers research-specific patterns in more depth.

API mechanics every legal-tech lead should understand

Chat requests hit POST /chat/completions, the OpenAI-compatible endpoint. The API is stateless — you must resend the full conversation history on every request, unlike the web/app at chat.deepseek.com which maintains session history server-side. A minimal Python call using the OpenAI SDK looks like this:

    from openai import OpenAI

    # DeepSeek's API is OpenAI-compatible, so the stock SDK works once
    # base_url points at DeepSeek.
    client = OpenAI(
        base_url="https://api.deepseek.com",
        api_key="YOUR_KEY",
    )

    resp = client.chat.completions.create(
        model="deepseek-v4-pro",
        messages=[
            {"role": "system", "content": "You are a contracts paralegal."},
            {"role": "user", "content": "Summarise the indemnity clause: ..."},
        ],
        reasoning_effort="high",
        extra_body={"thinking": {"type": "enabled"}},  # turn thinking mode on
        temperature=0.0,  # favour repeatable output for clause analysis
        max_tokens=8000,
    )

With thinking enabled, the response returns reasoning_content alongside the final content. Set temperature=0.0 for clause analysis and citation-sensitive tasks; reserve 1.3 for client-facing prose. If you maintain older integrations, the legacy deepseek-chat and deepseek-reasoner IDs currently route to deepseek-v4-flash in non-thinking and thinking modes respectively, and will be fully retired after July 24, 2026, 15:59 UTC. Migration is a one-line model= swap; base_url does not change. DeepSeek also exposes an Anthropic-compatible surface against the same base URL if your shop standardises on that SDK.
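Because the API is stateless, the caller owns the transcript and has to resend it on every turn. A minimal sketch of that bookkeeping follows; the `Conversation` class and the `send` callable are hypothetical names, and `send` stands in for the real SDK call so the block runs without a network connection.

```python
from typing import Callable

Message = dict[str, str]


class Conversation:
    """Holds the message history that a stateless chat API needs resent."""

    def __init__(self, system_prompt: str) -> None:
        self.messages: list[Message] = [
            {"role": "system", "content": system_prompt}
        ]

    def ask(self, question: str, send: Callable[[list[Message]], str]) -> str:
        """Append the question, send the FULL history, store the answer."""
        self.messages.append({"role": "user", "content": question})
        answer = send(list(self.messages))  # copy so send() cannot mutate it
        self.messages.append({"role": "assistant", "content": answer})
        return answer
```

With the OpenAI SDK, `send` could be a small wrapper that calls `client.chat.completions.create(model=..., messages=msgs)` and returns `.choices[0].message.content`. Only the final content is stored here; whether reasoning_content should ever be sent back is something to check in the official documentation.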

For a step-by-step setup, see our DeepSeek API getting started tutorial. Other parameters worth knowing: top_p, stream for token-by-token UI updates, JSON mode (response_format={"type": "json_object"}) for structured outputs, and tool calling for agent-style workflows. JSON mode is designed to return valid JSON but does not guarantee it: handle occasional empty outputs, include the word “json” and an example schema in the prompt, and set max_tokens high enough to avoid truncation.
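The defensive handling that JSON mode calls for can be sketched as a parse-validate-retry loop. The required keys below are a hypothetical clause-review schema, and `fetch` is an assumed zero-argument callable that performs one API request and returns the reply text; neither comes from the DeepSeek documentation.

```python
import json
from typing import Callable, Optional

REQUIRED_KEYS = {"clause", "risk_level", "redline"}  # hypothetical schema


def parse_json_reply(text: Optional[str]) -> Optional[dict]:
    """Return the parsed object, or None if the reply should be retried."""
    if not text or not text.strip():
        return None  # empty output
    try:
        data = json.loads(text)
    except json.JSONDecodeError:
        return None  # malformed or truncated (for example, max_tokens too low)
    if not isinstance(data, dict) or not REQUIRED_KEYS <= data.keys():
        return None  # valid JSON, but not the shape that was requested
    return data


def ask_until_valid(fetch: Callable[[], Optional[str]], attempts: int = 3) -> dict:
    """Call fetch() up to `attempts` times until a usable reply arrives."""
    for _ in range(attempts):
        parsed = parse_json_reply(fetch())
        if parsed is not None:
            return parsed
    raise ValueError(f"no valid JSON after {attempts} attempts")
```

Raising after a bounded number of attempts keeps a bad document from silently looping and running up token costs.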

Cost: what 100,000 contracts a year actually costs

Pricing as of April 2026, per the official DeepSeek pricing page:

| Model | Input, cache hit (per 1M tokens) | Input, cache miss (per 1M tokens) | Output (per 1M tokens) |
|---|---|---|---|
| deepseek-v4-flash | $0.0028 | $0.14 | $0.28 |
| deepseek-v4-pro | $0.0145 list ($0.003625 promo through 2026-05-31) | $1.74 list ($0.435 promo) | $3.48 list ($0.87 promo) |

A worked example. Suppose a mid-size firm runs 100,000 contract reviews per year. Each review uses a 4,000-token cached system prompt (the playbook), a 20,000-token uncached user message (the contract), and produces a 3,000-token output. On deepseek-v4-flash:

- Cached input: 4,000 × 100,000 = 400,000,000 tokens × $0.0028/M = $1.12

- Uncached input: 20,000 × 100,000 = 2,000,000,000 tokens × $0.14/M = $280.00

- Output: 3,000 × 100,000 = 300,000,000 tokens × $0.28/M = $84.00

- Total: $365.12 per year.

The same workload on deepseek-v4-pro:

- Cached input: 400,000,000 × $0.0145/M (list; promo $0.003625 through 2026-05-31) = $5.80

- Uncached input: 2,000,000,000 × $1.74/M (list) = $3,480.00

- Output: 300,000,000 × $3.48/M (list) = $1,044.00

- Total: $4,529.80 per year.

Pro is roughly 12× more expensive on this workload. Use Flash for triage and high-volume tasks; reserve Pro for the matters where reasoning depth justifies the spend. Run your own numbers with the DeepSeek pricing calculator.
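To re-run the arithmetic against your own document profile, a small helper like the one below may be useful. It is a sketch, not a billing tool: the rates are copied from the pricing table in this guide, the `Rates` and `annual_cost` names are hypothetical, and it assumes every read of the playbook prompt is a cache hit, which slightly understates real spend.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rates:
    """USD per 1M tokens, copied from the pricing table in this guide."""
    cache_hit: float
    cache_miss: float
    output: float


FLASH = Rates(cache_hit=0.0028, cache_miss=0.14, output=0.28)
PRO_LIST = Rates(cache_hit=0.0145, cache_miss=1.74, output=3.48)


def annual_cost(rates: Rates, reviews: int, cached_tokens: int,
                uncached_tokens: int, output_tokens: int) -> float:
    """Yearly spend in USD for a fixed per-review token profile."""
    per_review = (
        cached_tokens * rates.cache_hit
        + uncached_tokens * rates.cache_miss
        + output_tokens * rates.output
    ) / 1_000_000  # rates are quoted per million tokens
    return round(per_review * reviews, 2)


if __name__ == "__main__":
    profile = dict(reviews=100_000, cached_tokens=4_000,
                   uncached_tokens=20_000, output_tokens=3_000)
    flash = annual_cost(FLASH, **profile)
    pro = annual_cost(PRO_LIST, **profile)
    print(f"Flash ${flash:,.2f}  Pro ${pro:,.2f}  ratio {pro / flash:.1f}x")
```

Swapping in the promotional Pro rates, or a longer average contract, is a one-line change to the constants or the profile.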

Limitations: where DeepSeek shouldn’t go near your filings

Three categories of legal task where DeepSeek — and frankly any general-purpose LLM — is the wrong tool.

Citation generation from memory

Never ask the model to find cases. Ever. At least six attorneys have been sanctioned for filing AI-generated fake case citations as of March 2026, including the widely reported Mata v. Avianca case where lawyers submitted fabricated cases generated by ChatGPT. The fix is structural: do legal research in Westlaw, Lexis or Bloomberg Law, then bring verified citations into your DeepSeek prompt as context. The model can synthesise; it cannot reliably recall.
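The "verified citations in, nothing else out" rule can be partly enforced in code by checking that every citation in a draft appears in the list you supplied. The sketch below is an assumption-laden illustration: the regular expression only recognises a handful of US federal reporter formats, and the function names are hypothetical. It is a tripwire, not a substitute for checking each citation in Westlaw or Lexis.

```python
import re

# Rough pattern for a few US federal reporters (U.S., S. Ct., F., F.2d,
# F.3d, F.4th, F. Supp., F. Supp. 2d/3d). It will miss many other formats.
REPORTER_CITE = re.compile(
    r"\b\d{1,4}\s+"
    r"(?:U\.S\.|S\.\s*Ct\.|F\.\s*Supp\.(?:\s*[23]d)?|F\.(?:[23]d|4th)?)"
    r"\s+\d{1,5}\b"
)


def normalise(cite: str) -> str:
    """Collapse runs of whitespace so line breaks do not hide a match."""
    return " ".join(cite.split())


def unverified_citations(draft: str, verified: set[str]) -> list[str]:
    """Citations found in the draft that are not on the verified list."""
    allowed = {normalise(c) for c in verified}
    found = {normalise(m.group(0)) for m in REPORTER_CITE.finditer(draft)}
    return sorted(found - allowed)
```

Any non-empty result means the draft contains a citation the model was never given, which should block the draft from going further until a human has looked at it.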

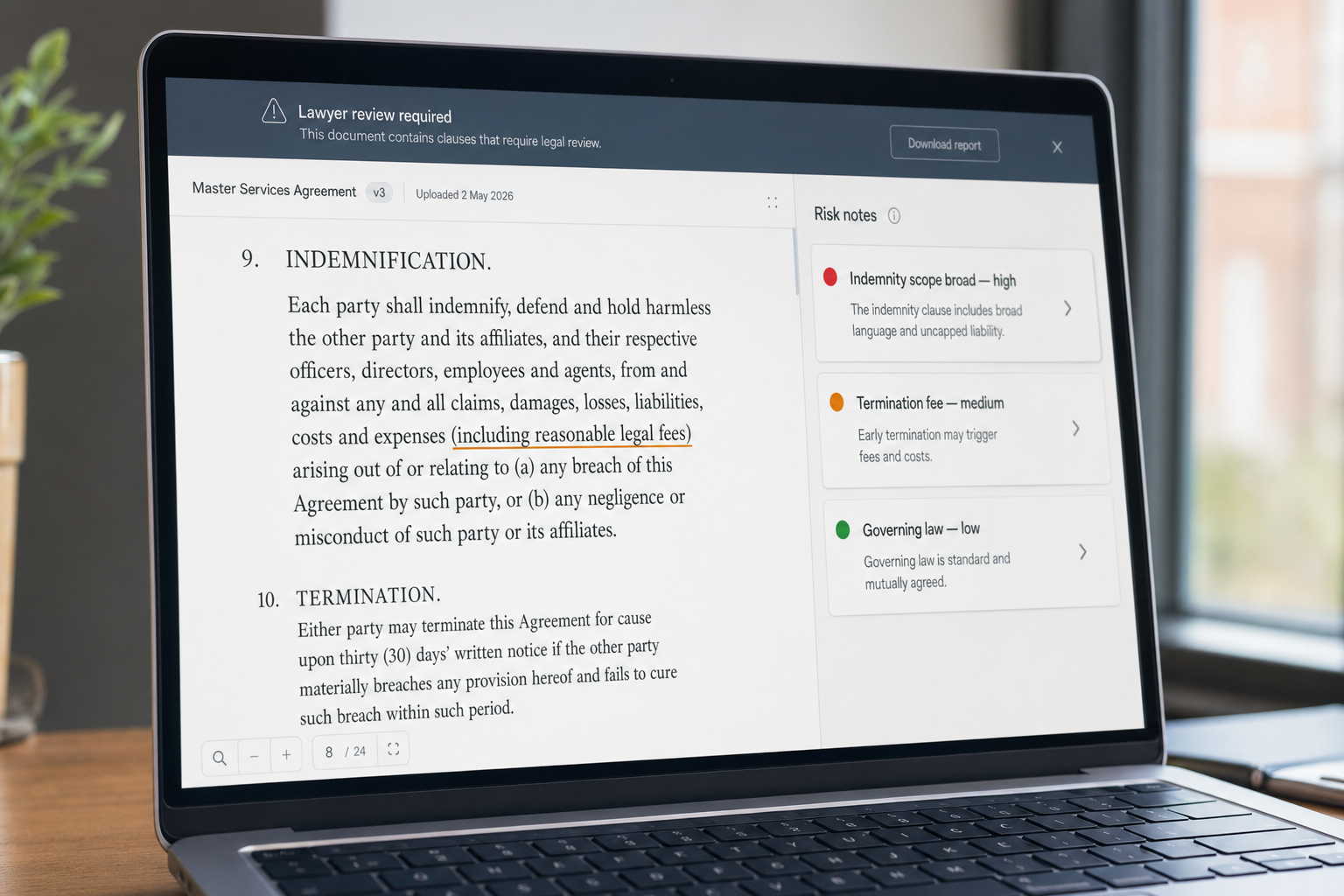

Privileged data on a third-party API

The hosted DeepSeek API processes data on servers in China. For matters subject to attorney-client privilege, US-Chinese trade-secret considerations, EU GDPR data-residency obligations, or UK/Australian/Canadian/Irish equivalents, this is often a non-starter. The remedy: run V4-Flash on firm-controlled infrastructure under MIT-licensed weights, or use a different provider for privileged work. Our page on DeepSeek privacy covers the trade-offs.
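If a firm runs both deployments, the hosting decision can be made a property of the matter rather than of whoever writes the script. The sketch below assumes the self-hosted server exposes an OpenAI-compatible API; the internal URL, the served model name and the `endpoint_for` helper are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Endpoint:
    base_url: str
    model: str


HOSTED = Endpoint("https://api.deepseek.com", "deepseek-v4-flash")
# Hypothetical in-house server exposing an OpenAI-compatible API.
SELF_HOSTED = Endpoint("http://llm.firm.internal:8000/v1", "deepseek-v4-flash")


def endpoint_for(privileged: bool, personal_data: bool = False) -> Endpoint:
    """Anything privileged or containing personal data stays in-house."""
    return SELF_HOSTED if (privileged or personal_data) else HOSTED
```

The chosen `base_url` and `model` would then be passed to the SDK client, so a privileged matter cannot reach the hosted API by accident.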

Dispositive legal analysis without human review

Attorneys using generative AI must carefully review any work they did not produce. Judge Castel emphasised the “gatekeeping role” attorneys must play “to ensure the accuracy of their filings.” Treat AI output as a first draft from a brand-new associate who occasionally hallucinates. The signature on the brief is yours.

When a different tool is the better choice

Honesty matters here, because no model is best at everything.

- Westlaw Precision, Lexis+ AI, Harvey, CoCounsel. Purpose-built legal research tools with curated citation databases. Use these for the research step; use DeepSeek for synthesis once you have the verified sources.

- Claude (Anthropic) for highly sensitive client communications. Anthropic’s enterprise privacy posture is more familiar to risk-averse general counsel. See our DeepSeek vs Claude comparison.

- ChatGPT Enterprise where existing firm SSO and DLP controls demand it. The DeepSeek vs ChatGPT page goes deeper on procurement-side considerations.

None of those replace DeepSeek’s combination of 1M context and low per-token pricing for high-volume document work — but they may sit alongside it in a mature firm stack.

Getting started for a legal team

- Pilot on non-privileged work first — public regulatory comments, internal training, marketing copy.

- Document a verification protocol: every citation checked in Westlaw or Lexis, every numeric figure traced to source.

- Train staff on prompt patterns. Our guide to DeepSeek prompt engineering covers the fundamentals.

- Decide on hosting. Hosted API for low-stakes, high-volume tasks; self-hosted V4-Flash for privileged matters.

- Read your jurisdiction’s ethical guidance. ABA Model Rules 1.1, 1.6, 3.3, 5.1 and 5.3 all bear on AI use; UK SRA, Law Society of Ontario and equivalents have published their own.

For broader context across roles and industries, browse our other real-world applications.

DeepSeek AI Guide is an independent resource and is not affiliated with DeepSeek or its parent company. Model IDs, pricing and API behaviour change; check the official DeepSeek documentation and pricing page before committing to a production decision.

Is DeepSeek safe for lawyers to use on client matters?

It depends on the matter and the deployment. The hosted API processes requests on servers subject to Chinese law, which is usually unsuitable for privileged or regulated data. The MIT-licensed open weights, however, can be self-hosted on firm-controlled infrastructure, removing that concern. Review our DeepSeek privacy page and consult your information-governance team before any privileged use.

Can DeepSeek do legal research like Westlaw or Lexis?

No, and you should not ask it to. DeepSeek has no live connection to Westlaw, Lexis or Bloomberg Law citation databases. It can synthesise legal analysis when you supply verified source materials in the prompt, but it can hallucinate citations if asked to recall them from training data. See our dedicated DeepSeek for legal research guide for the safe pattern.

How does DeepSeek compare to ChatGPT for legal drafting?

Both are capable general-purpose models; DeepSeek V4’s 1M context handles longer documents in one shot, and per-token pricing is materially lower. ChatGPT Enterprise has more mature compliance tooling for large firms. For a side-by-side breakdown of latency, output quality and procurement considerations, see our DeepSeek vs ChatGPT comparison.

What does it cost to run a contract review through DeepSeek?

On V4-Flash, a 20,000-token contract with a 4,000-token cached playbook prompt and a 3,000-token output costs roughly $0.0037 per review at April 2026 rates, under half a cent. V4-Pro runs around $0.045 per review at list prices for the same workload. Check the DeepSeek API pricing page and re-run the math against your own document profile before committing.

Can I run DeepSeek locally so client data never leaves my firm?

Yes for V4-Flash with the right hardware. Its 13 billion active parameters keep per-token compute modest, but as a Mixture-of-Experts model all 284 billion parameters must still be held in memory, so expect to need substantial GPU memory even with quantisation. V4-Pro requires an enterprise GPU cluster. Our walkthrough on how to install DeepSeek locally covers options including Ollama and llama.cpp.

Sources & Methodology

Primary sources

- Official: DeepSeek API documentation. Endpoints, request/response shape, model IDs. Last checked: April 30, 2026.

- Pricing: DeepSeek API pricing page. Per-1M-token rates, billing units, cache-hit pricing; per-token cost reference for legal-research workloads. Last checked: April 30, 2026.

Technical references

- Technical report: DeepSeek-V3 technical report (arXiv:2412.19437). V3 architecture and training methodology. Last checked: April 30, 2026.

- Technical report: DeepSeek-R1 paper (arXiv:2501.12948). R1 reasoning approach, distillation results. Last checked: April 30, 2026.

Methodology

Information in this article was checked against the official DeepSeek documentation, the article's cited primary sources, and the deepseekai.guide pricing dataset on the date shown above. Where third-party numbers are quoted, the originating source is linked inline so the reader can verify and re-check.

Data confidence

High for facts cited inline with primary-source links; medium for context narrative.

Editorial note

AI tooling moves quickly. If you spot an outdated number or a broken link, the contact link in the footer is the fastest way to flag it for the editorial team.