DeepSeek vs Claude in 2026: Honest Head-to-Head Comparison

If you are deciding between DeepSeek and Claude for a real project in 2026 (a coding agent, a research assistant, a customer-support bot), the choice comes down to four levers: benchmark quality on your task, output-token price, privacy posture, and the tooling around the model. This DeepSeek vs Claude breakdown is written from production use of both, with today’s DeepSeek V4 launch numbers and Anthropic’s current Claude 4.6 / 4.7 rate card side by side. No marketing, no hand-waving. You will get a verdict up top, a head-to-head table, six category deep-dives, a worked cost example on a realistic workload, and a decision framework for picking the right model for the right job.

Verdict: who wins, for whom

Short answer. For coding agents, high-volume API workloads, and teams that value open weights, DeepSeek V4 wins on price-performance. For enterprise deployments that need mature tooling, a deeper policy stance on safety, and a first-party app with broad integrations, Claude wins on ecosystem maturity. The two products are closer on benchmark quality than they have ever been, and further apart on price than any previous generation.

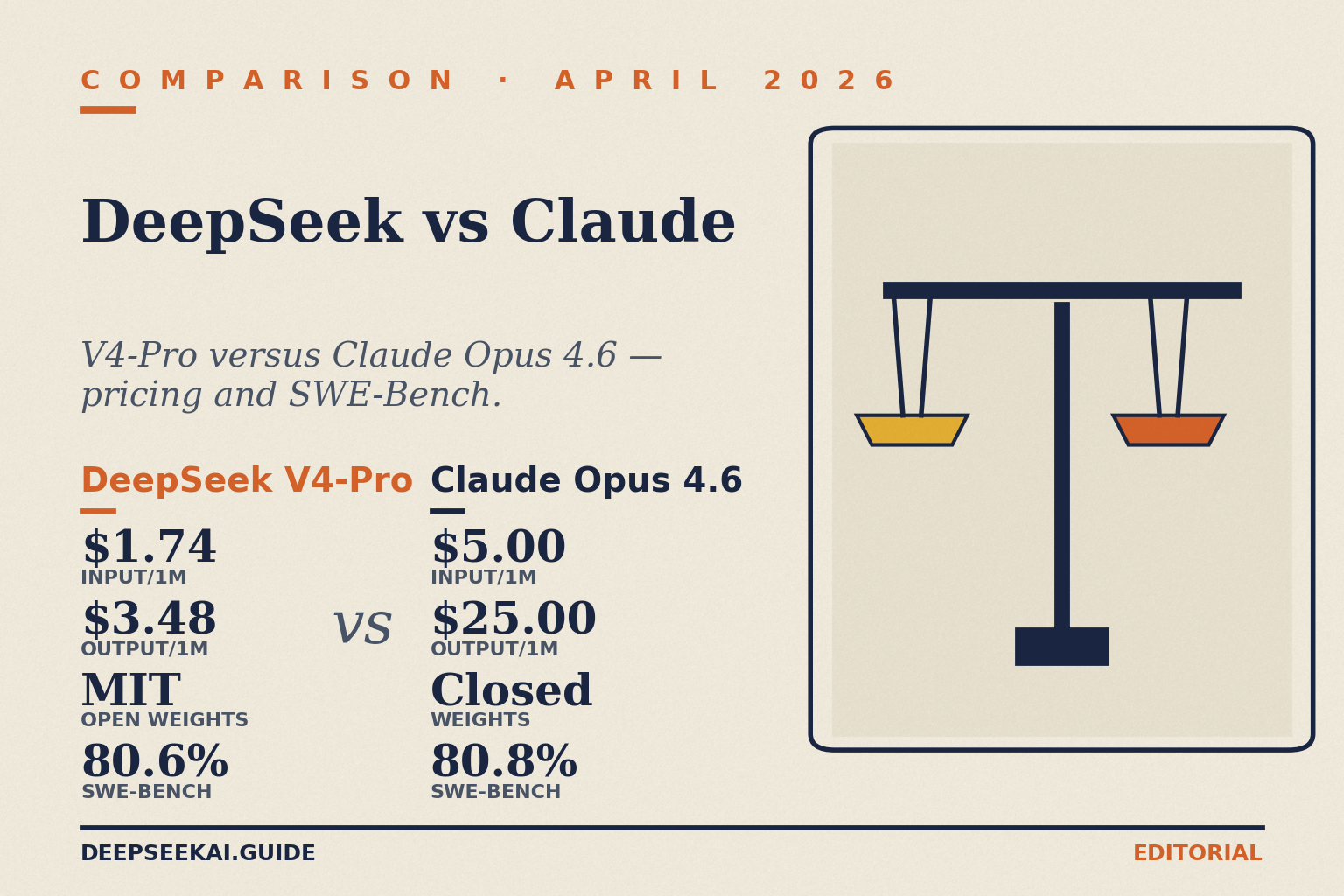

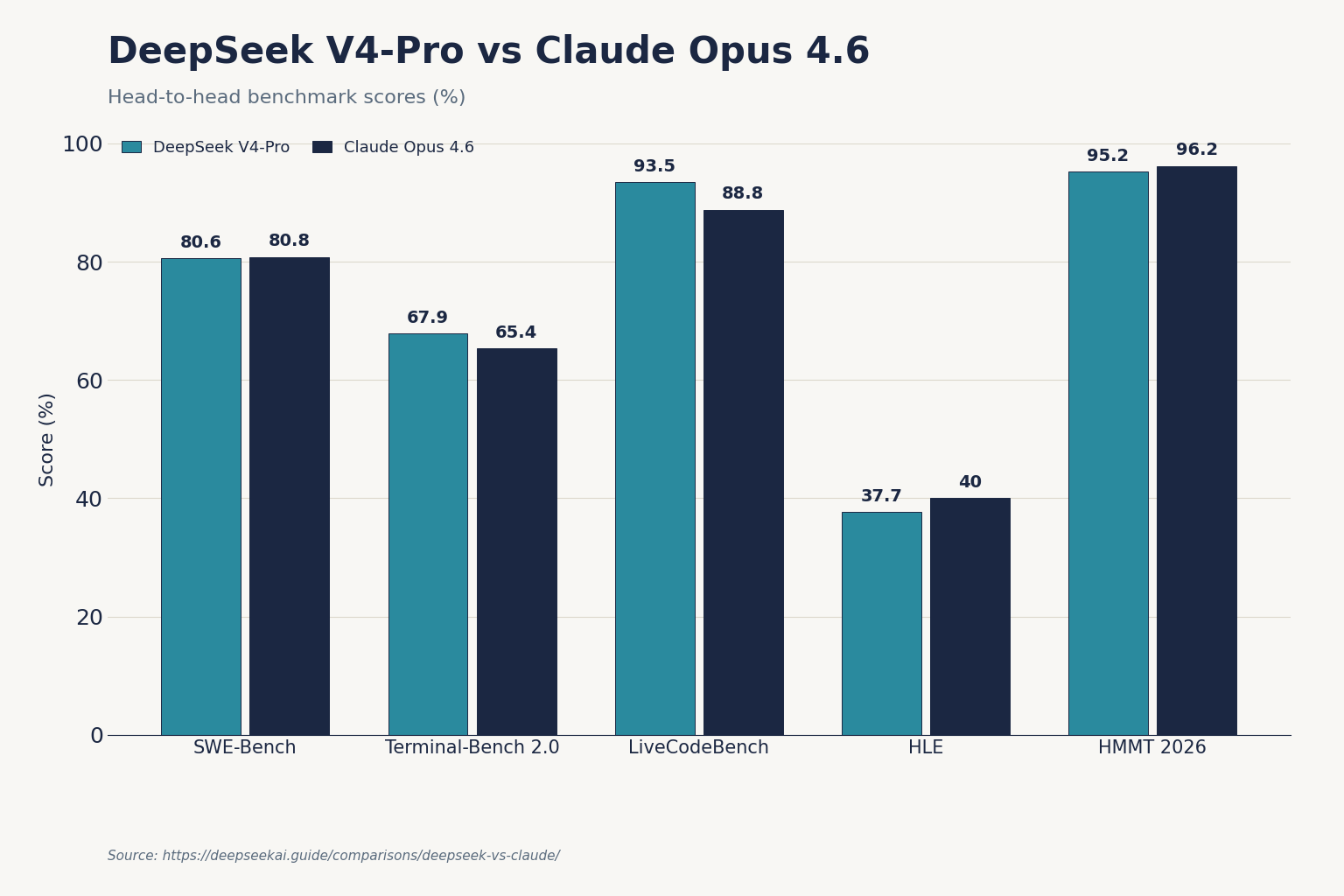

Concretely: DeepSeek V4-Pro leads Claude on Terminal-Bench 2.0 (67.9% vs 65.4%) and LiveCodeBench (93.5% vs 88.8%), and posts a Codeforces rating of 3206 where no Claude score is reported. Claude Opus 4.6 holds a marginal lead on SWE-Bench Verified (80.8% vs 80.6%) and a meaningful lead on HLE (40.0% vs 37.7%) and HMMT 2026 math (96.2% vs 95.2%). On price, V4-Pro lists $1.74 / $3.48 per million input-miss / output tokens (currently $0.435 / $0.87 during the 75% promo through 2026-05-31) versus Claude Opus 4.6 at $5 / $25, which makes V4-Pro roughly 7× cheaper on output at list price and roughly 29× cheaper at the promo rate. If you are running anything at scale, that gap compounds fast.

At-a-glance: DeepSeek vs Claude (April 2026)

This table compares DeepSeek’s two current tiers against Anthropic’s three recommended 2026 tiers. All pricing per 1M tokens in USD, verified against each vendor’s pricing page on 2026-04-24.

| Model | Input (cache miss) | Output | Context | Weights | Headline benchmark |
|---|---|---|---|---|---|
| DeepSeek V4-Pro | $0.435 promo / $1.74 list | $0.87 promo / $3.48 list | 1M | MIT (open) | SWE-Bench Verified 80.6% |
| DeepSeek V4-Flash | $0.14 | $0.28 | 1M | MIT (open) | SWE-Bench Verified 79.0% |
| Claude Opus 4.6 | $5.00 | $25.00 | 1M | Closed | SWE-Bench Verified 80.8% |
| Claude Sonnet 4.6 | $3.00 | $15.00 | 1M | Closed | Default production pick |
| Claude Haiku 4.5 | $1.00 | $5.00 | 200K (typical) | Closed | High-volume workloads |

Sources: DeepSeek pricing as of April 2026 (verify on the DeepSeek pricing page). Claude Opus 4.6 at $5.00/$25.00 per MTok, Claude Sonnet 4.6 at $3.00/$15.00 per MTok, Claude Haiku 4.5 at $1.00/$5.00 per MTok. Anthropic released Claude Opus 4.7 on April 16, 2026 at the same headline $5 / $25 pricing as Opus 4.6.

Note on generations: the V4 launch ships as a family of two tiers: deepseek-v4-pro (1.6T total / 49B active parameters) and deepseek-v4-flash (284B total / 13B active), both open-weight MoE models under the MIT license. Both support three modes: thinking (the default), thinking-max, and non-thinking. Thinking depth is set through a single reasoning_effort parameter, and passing extra_body={"thinking": {"type": "disabled"}} turns thinking off. Internal details at DeepSeek V4.
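As a rough sketch of how those three modes could map onto request arguments, the helper below builds the extra keyword arguments for a chat call. The function name thinking_kwargs and the mode labels are ours, and the mapping of "thinking" to reasoning_effort="high" and "thinking-max" to "max" is an assumption based on the parameter values quoted in this article; check the DeepSeek API documentation before relying on it.

```python
def thinking_kwargs(mode: str) -> dict:
    """Build extra kwargs for a chat call (hypothetical helper, not part of any SDK).

    Assumed mapping: "thinking" -> reasoning_effort="high",
    "thinking-max" -> reasoning_effort="max", "non-thinking" -> thinking disabled.
    """
    if mode == "thinking":
        return {
            "reasoning_effort": "high",
            "extra_body": {"thinking": {"type": "enabled"}},
        }
    if mode == "thinking-max":
        return {
            "reasoning_effort": "max",
            "extra_body": {"thinking": {"type": "enabled"}},
        }
    if mode == "non-thinking":
        return {"extra_body": {"thinking": {"type": "disabled"}}}
    raise ValueError(f"unknown mode: {mode!r}")


# Usage: unpack into the request, e.g. create(model=..., messages=..., **kwargs)
kwargs = thinking_kwargs("non-thinking")
print(kwargs)
```

Keeping the mode choice in one place means a later change to the parameter names touches a single function instead of every call site.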

Coding

Coding is where this comparison is most interesting. In the R1 era, Claude was simply the better engineer. That is no longer true on the public numbers.

On the benchmarks DeepSeek published at the V4 launch, V4-Pro leads LiveCodeBench at 93.5, ahead of Gemini (91.7) and Claude (88.8). Its Codeforces rating of 3206 is ahead of GPT-5.4 (3168) and Gemini (3052), with no score reported for Claude. On SWE-Bench Verified, the two models are essentially tied (80.6% vs 80.8%). On Terminal-Bench 2.0, a more realistic test of autonomous agent behaviour, V4-Pro at 67.9% beats Claude Opus 4.6 (65.4%) by 2.5 points. That benchmark involves real autonomous terminal execution with a 3-hour timeout, so the gap matters more for agentic workflows than a single-turn coding benchmark would.

What that means in practice: for an IDE-attached pair programmer, Claude’s Sonnet 4.6 still has the edge on latency and conversational fluency in my testing. For a cron-scheduled agent that files PRs overnight, V4-Pro gives you similar quality at a fraction of the spend. Claude also integrates tightly with Claude Code (Anthropic’s terminal agent), and — interesting for this comparison — DeepSeek also said that V4 has been optimized for use with popular agent tools such as Anthropic’s Claude Code and OpenClaw. You can point Claude Code at a DeepSeek backend.

For repo-scale work, see our notes in DeepSeek for coding and the dedicated comparison at DeepSeek Coder vs Copilot.

Reasoning and math

Claude has the edge on general reasoning and expert-level tasks. On HLE (Humanity’s Last Exam), which tests expert-level cross-domain reasoning, V4-Pro scores 37.7%, below Claude (40.0%) and GPT-5.4 (39.8%) and well below Gemini-3.1-Pro (44.4%). DeepSeek acknowledges this gap directly. On competition math (HMMT 2026), Claude (96.2%) and GPT-5.4 (97.7%) both finish ahead of V4-Pro (95.2%), by 1.0 and 2.5 points respectively, so V4-Pro also trails on the hardest mathematical reasoning tasks.

In V4, thinking mode is a request parameter on either model ID, not a separate model. The response returns reasoning_content alongside the final content. Claude’s equivalent is “extended thinking,” which — per Anthropic — improves quality on complex tasks but generates additional tokens, and extended thinking tokens are billed as standard output tokens at the model’s normal rate, not as a separate pricing tier. That matters for budgeting: a thinking-heavy workload on Claude Opus amplifies the $25/M output rate; the same workload on V4-Pro amplifies $3.48/M at list (currently $0.87/M during the 75% promo through 2026-05-31).
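To make the budgeting point concrete, the sketch below prices a single reply when thinking tokens are billed at the output rate. The token counts (500 answer tokens, 4,000 thinking tokens) are hypothetical, and output_cost is an illustrative helper rather than anything from either vendor's SDK; the rates are the ones quoted in this article.

```python
# Output rates in USD per 1M tokens, as quoted in this article.
OUTPUT_RATE_PER_M = {
    "claude-opus": 25.00,
    "deepseek-v4-pro (list)": 3.48,
    "deepseek-v4-pro (promo)": 0.87,
}


def output_cost(answer_tokens: int, thinking_tokens: int, rate_per_m: float) -> float:
    """Cost of one reply when thinking tokens are billed as output tokens."""
    return (answer_tokens + thinking_tokens) * rate_per_m / 1_000_000


# Hypothetical request: 500 visible answer tokens plus 4,000 thinking tokens.
for name, rate in OUTPUT_RATE_PER_M.items():
    print(f"{name}: ${output_cost(500, 4_000, rate):.6f} per request")
```

In this example the thinking tokens account for eight ninths of the billed output, so the per-token output rate dominates the bill far more than the visible answer length does.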

If you need maximum reasoning quality on hard, ambiguous problems such as legal analysis, scientific reasoning, or research synthesis, Claude still has the higher ceiling. Our DeepSeek for research guide covers where the Flash tier struggles.

Writing and general knowledge

Claude remains, in my testing, the better writer for anything that has to sound like a human wrote it — long-form drafts, marketing copy, nuanced summaries, editing tasks. The gap is narrower than it used to be, and DeepSeek V4 handles tone instructions more reliably than V3.2 did, but Claude’s defaults are still closer to what most writers actually want.

On factual recall the picture is more nuanced. SimpleQA-Verified at 57.9% puts V4-Pro behind both Gemini (75.6%) and Claude. Claude’s factual recall sits between V4-Pro and Gemini in our testing, with the additional advantage of stronger source-citation discipline in long-form answers. For retrieval-augmented workloads, wire in grounding rather than trusting either model’s parametric memory — see DeepSeek RAG tutorial.

Pricing

The pricing gap is the story of this comparison. DeepSeek V4-Flash rates are $0.0028 cache-hit / $0.14 cache-miss / $0.28 output per million tokens; V4-Pro rates are $0.003625 / $0.435 / $0.87 per million tokens during the 75% promo through 2026-05-31 (list $0.0145 / $1.74 / $3.48). Anthropic’s recommended tier is Claude Sonnet 4.6 at $3 / $15 per million. On direct vendor pricing, that is above both OpenAI’s mid-tier GPT-5.2 ($1.75/$14, December 2025) and the newer GPT-5.4 ($2.50/$15) on input and level with GPT-5.4 on output, while Gemini 3.1 Pro ($2/$12, ≤200K context) is cheaper on both. Claude Opus 4.7 and Claude Opus 4.6 both sit at the premium Claude tier of $5/$25.

Both vendors offer caching. On Claude, a cache hit costs 10% of the standard input price, which means caching pays off after just one cache read for the 5-minute duration (1.25x write), or after two cache reads for the 1-hour duration (2x write). DeepSeek’s model is simpler: the cache-hit rate applies automatically when the provider detects a repeated prefix, at about 2% of the miss rate for Flash ($0.0028 vs $0.14). Anthropic also ships the Message Batches API, which processes requests asynchronously and returns results within 24 hours at exactly 50% off standard token prices, with no quality difference between batch and real-time responses, only timing. DeepSeek does not currently ship a batch-price equivalent.
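The Claude break-even claim can be checked with a few lines of arithmetic. The sketch below works in units of the base input price for the cached prefix and assumes the simplest possible model: one cache write followed by some number of cache reads, compared with sending the same prefix uncached every time. The function names are illustrative.

```python
def cached_cost(reads: int, write_multiplier: float, hit_multiplier: float = 0.10) -> float:
    """One cache write plus `reads` cache hits, in units of the base input price."""
    return write_multiplier + hit_multiplier * reads


def uncached_cost(reads: int) -> float:
    """The same prefix sent uncached: the first request plus `reads` repeats."""
    return 1.0 + reads


def break_even_reads(write_multiplier: float) -> int:
    """Smallest number of cache reads at which caching is strictly cheaper."""
    reads = 1
    while cached_cost(reads, write_multiplier) >= uncached_cost(reads):
        reads += 1
    return reads


print(break_even_reads(1.25))  # 5-minute cache write at 1.25x -> 1
print(break_even_reads(2.0))   # 1-hour cache write at 2x -> 2
```

With the 2x write, a single read costs 2.1 units against 2.0 uncached, which is why the 1-hour duration needs a second read before it pays off.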

Worked example: 1M support-bot calls (V4-Flash)

Workload: 1,000,000 chat requests, 2,000-token cached system prompt, 200-token user message (uncached), 300-token reply. Tier: deepseek-v4-flash.

- Cached input: 2,000 × 1,000,000 = 2,000,000,000 tokens × $0.0028/M = $5.60

- Uncached input: 200 × 1,000,000 = 200,000,000 tokens × $0.14/M = $28.00

- Output: 300 × 1,000,000 = 300,000,000 tokens × $0.28/M = $84.00

- Total: $117.60

Same workload on Claude Sonnet 4.6

Applying Sonnet 4.6 rates ($3 input / $15 output) with a 10% cache-hit multiplier on cached input:

- Cached input: 2,000,000,000 × $0.30/M = $600.00

- Uncached input: 200,000,000 × $3.00/M = $600.00

- Output: 300,000,000 × $15.00/M = $4,500.00

- Total: $5,700.00

Same workload, ~48× the bill. Halve it with Anthropic’s batch API for async jobs; you are still roughly 24× DeepSeek V4-Flash. The DeepSeek pricing calculator and DeepSeek API pricing page let you run your own numbers.
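If you would rather rerun the worked example with your own token counts, a small calculator along these lines reproduces both totals. It is a sketch under the same simplifications as the example above (every request hits the cache for the system prompt, and cache-write costs are ignored); the Rates class and workload_cost function are illustrative names, and the Sonnet cache-hit rate of $0.30/M is the 10% multiplier applied to the $3 input price.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rates:
    """USD per 1M tokens."""
    cache_hit: float
    cache_miss: float
    output: float


def workload_cost(requests: int, cached_in: int, uncached_in: int,
                  out: int, rates: Rates) -> float:
    """Total USD cost; token counts are per request."""
    per_request = (cached_in * rates.cache_hit
                   + uncached_in * rates.cache_miss
                   + out * rates.output)
    return requests * per_request / 1_000_000


V4_FLASH = Rates(cache_hit=0.0028, cache_miss=0.14, output=0.28)
SONNET_46 = Rates(cache_hit=0.30, cache_miss=3.00, output=15.00)

flash = workload_cost(1_000_000, 2_000, 200, 300, V4_FLASH)
sonnet = workload_cost(1_000_000, 2_000, 200, 300, SONNET_46)

print(f"V4-Flash:   ${flash:,.2f}")               # $117.60
print(f"Sonnet 4.6: ${sonnet:,.2f}")              # $5,700.00
print(f"Ratio:      {sonnet / flash:.1f}x")       # 48.5x
print(f"With batch: {sonnet / 2 / flash:.1f}x")   # 24.2x
```

Swapping in a different Rates value, for example V4-Pro at list or promo pricing, is a one-line change.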

Privacy and data handling

This is where many enterprise buyers draw the line. DeepSeek’s consumer API routes traffic to servers in China; conversations can be processed and stored on infrastructure subject to Chinese law. Anthropic is a US company, and Claude models are also available on AWS Bedrock, Google Vertex AI, and Microsoft Foundry (a newer integration), each with its own regional and multi-region pricing tiers. Regional endpoints on these platforms carry a 10% premium over global endpoints in exchange for data-residency guarantees.

Because DeepSeek V4 weights are MIT-licensed, the cleanest answer for privacy-sensitive workloads is self-hosting. That gets you frontier-class quality on your own infrastructure. See install DeepSeek locally and DeepSeek privacy. Claude offers no such route; it is closed-weight by design.

Regulatory status is worth checking before you ship. Italy’s Garante blocked the DeepSeek app in January 2025; several US states restrict DeepSeek on government devices. No federal US ban exists as of this writing. For the latest, see DeepSeek US restrictions.

Ecosystem and developer experience

Claude is a broader product ecosystem spanning consumer, business, and enterprise use, with apps, agents, and integrations with tools such as Slack, Google Workspace, and GitHub; DeepSeek is more model-and-API-centric, with a minimal chat UI that exists mainly to let people try the underlying models. Claude Code alone has reached approximately $2.5 billion in annualized revenue (Anthropic, February 2026), and business subscriptions have quadrupled since the start of the year. That is a mature go-to-market around a mature developer tool.

On the API itself, both vendors are pleasant to work with. DeepSeek exposes an OpenAI-compatible Chat Completions API, plus — added alongside V4 — an Anthropic-compatible surface on the same base URL. Chat requests hit POST /chat/completions, the OpenAI-compatible endpoint. A minimal Python example using the OpenAI SDK:

```python
from openai import OpenAI

client = OpenAI(
    base_url="https://api.deepseek.com",
    api_key="YOUR_KEY",
)

resp = client.chat.completions.create(
    model="deepseek-v4-pro",
    messages=[{"role": "user", "content": "Refactor this Flask route."}],
    reasoning_effort="high",
    extra_body={"thinking": {"type": "enabled"}},
    temperature=0.0,
    max_tokens=4096,
)

print(resp.choices[0].message.content)
```

Key parameters to know on DeepSeek: temperature (0.0 for code, 1.3 for general chat, 1.5 for creative writing per DeepSeek’s guidance), top_p, max_tokens (up to 384,000 on V4), reasoning_effort ("high" or "max"), plus JSON mode, tool calling, streaming, and context caching. JSON mode is designed to return valid JSON but does not guarantee it: include the word “json” and a small example schema in the prompt, and set max_tokens high enough to avoid truncation. For full coverage see DeepSeek API documentation.
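Because valid JSON is not guaranteed, it is worth validating the reply before using it. The helper below is a minimal sketch: parse_json_reply is a hypothetical name, and it assumes the response exposes a finish_reason of "length" when the reply was cut off at max_tokens, as OpenAI-compatible chat responses generally do.

```python
import json
from typing import Any, Optional


def parse_json_reply(content: Optional[str], finish_reason: str) -> Any:
    """Validate a JSON-mode reply (hypothetical helper, not part of any SDK)."""
    # Assumption: finish_reason == "length" signals truncation at max_tokens.
    if finish_reason == "length":
        raise ValueError("reply was truncated at max_tokens; raise the limit and retry")
    if not content:
        raise ValueError("reply was empty")
    try:
        return json.loads(content)
    except json.JSONDecodeError as exc:
        raise ValueError(f"model returned invalid JSON: {exc}") from exc


# Usage with a response object from the OpenAI SDK would look like:
#   choice = resp.choices[0]
#   data = parse_json_reply(choice.message.content, choice.finish_reason)
print(parse_json_reply('{"status": "ok", "items": [1, 2]}', "stop"))
```

Raising a single exception type for all three failure cases keeps the retry logic in the caller simple.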

A crucial architectural note: the API is stateless. You must resend the full conversation history with every request. This differs from the DeepSeek web chat and mobile app, which maintain session history for you. Claude’s API is the same shape (stateless). Do not let the chatbot UX fool you about API behaviour.
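In practice, statelessness means the client owns the history. The sketch below shows one way to keep it: a small Conversation class (our name, not an SDK type) that appends each turn and passes the full message list to a send callable on every request. The demo uses a stand-in fake_send so it runs offline; in real use, send would wrap a chat-completions call and return the assistant's text.

```python
from typing import Callable

Message = dict[str, str]


class Conversation:
    """Client-side history for a stateless chat API (illustrative sketch)."""

    def __init__(self, system_prompt: str) -> None:
        self.messages: list[Message] = [{"role": "system", "content": system_prompt}]

    def ask(self, user_text: str, send: Callable[[list[Message]], str]) -> str:
        self.messages.append({"role": "user", "content": user_text})
        # The whole history goes out on every call; the server remembers nothing.
        reply = send(list(self.messages))
        self.messages.append({"role": "assistant", "content": reply})
        return reply


# Stand-in transport that records how many messages each request carried.
seen_lengths: list[int] = []


def fake_send(messages: list[Message]) -> str:
    seen_lengths.append(len(messages))
    return f"reply {len(seen_lengths)}"


chat = Conversation("You are a support bot.")
chat.ask("Where is my order?", fake_send)
chat.ask("Can I change the address?", fake_send)
print(seen_lengths)  # [2, 4]
```

The growing request size is also why input-token cost and context caching matter so much for multi-turn workloads.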

Legacy DeepSeek model IDs (deepseek-chat, deepseek-reasoner) still work today; they route to deepseek-v4-flash and will be retired on 2026-07-24 15:59 UTC. Migration is a one-line model= swap; the base_url does not change.
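If model IDs are scattered through a codebase, a small lookup can make the swap explicit ahead of the retirement date. This is a sketch only: resolve_model and legacy_ids_retired are hypothetical helpers, and the mapping simply mirrors the routing described above.

```python
from datetime import datetime, timezone
from typing import Optional

# Legacy IDs and the V4 model they currently route to, per the note above.
LEGACY_MODEL_IDS = {
    "deepseek-chat": "deepseek-v4-flash",
    "deepseek-reasoner": "deepseek-v4-flash",
}

# Retirement time quoted above: 2026-07-24 15:59 UTC.
LEGACY_RETIREMENT = datetime(2026, 7, 24, 15, 59, tzinfo=timezone.utc)


def resolve_model(model_id: str) -> str:
    """Return the V4 ID for a legacy ID; pass any other ID through unchanged."""
    return LEGACY_MODEL_IDS.get(model_id, model_id)


def legacy_ids_retired(now: Optional[datetime] = None) -> bool:
    """True once the legacy IDs are past their retirement time."""
    now = now or datetime.now(timezone.utc)
    return now >= LEGACY_RETIREMENT


print(resolve_model("deepseek-chat"))
print(resolve_model("deepseek-v4-pro"))
```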

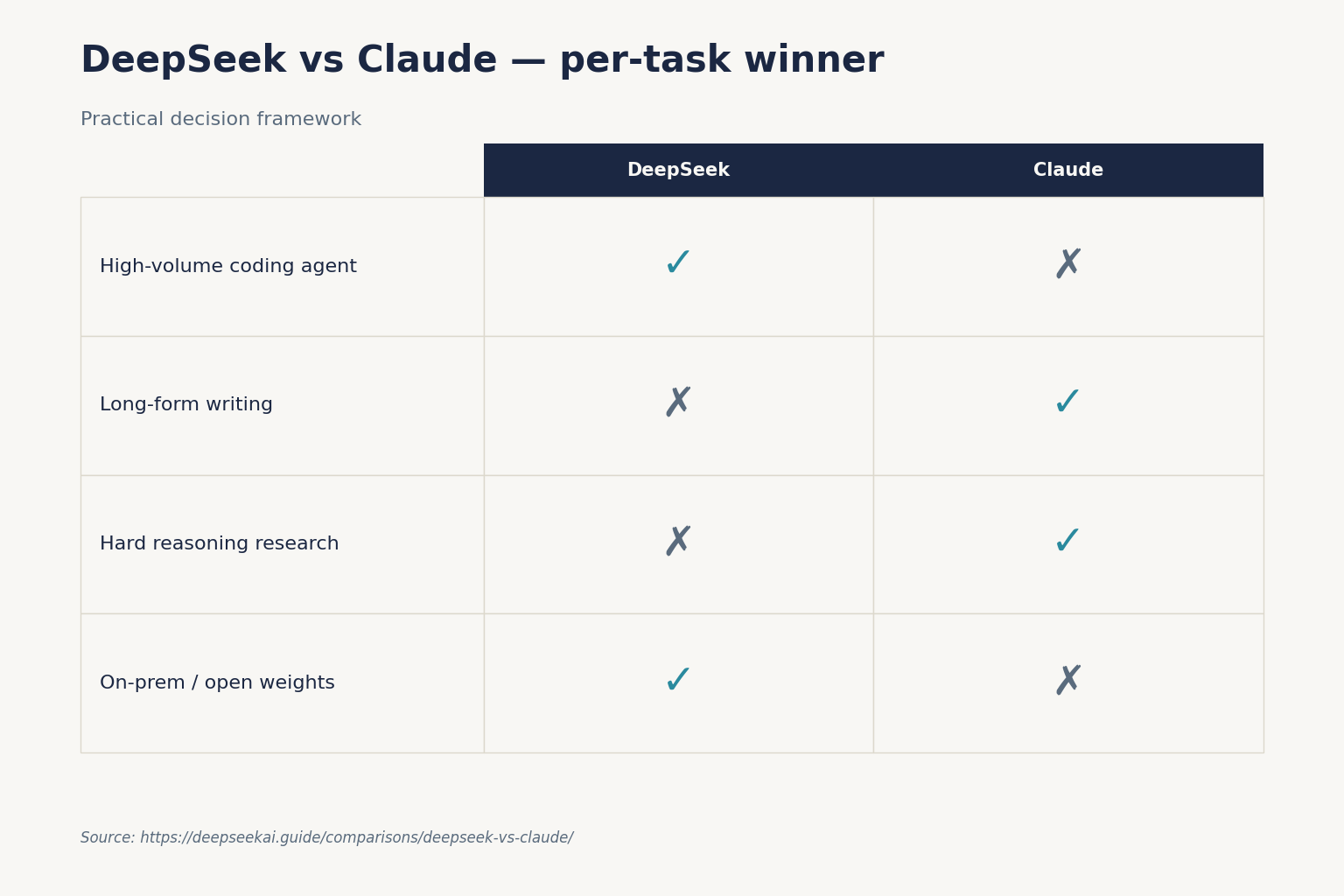

When to pick which

A practical decision framework for the most common scenarios:

- High-volume coding agent, cost matters — DeepSeek V4-Pro. You give up 0.2 points on SWE-Bench; you save ~86% on output tokens.

- Chat-style copilot inside an IDE — Claude Sonnet 4.6 for the default experience; V4-Flash if you are budget-constrained and willing to tune prompts.

- Long-form writing, editing, and nuanced language tasks — Claude. Still has the most natural defaults for tone among the models we tested.

- Research assistant with hard reasoning problems — Claude Opus 4.6/4.7. Higher HLE and competition-math scores; worth the spend if the task warrants it.

- On-prem or sovereign-cloud deployment — DeepSeek V4 (MIT weights). Claude is not an option here.

- Regulated enterprise with procurement and data-residency requirements — Claude via AWS Bedrock or Google Vertex.

- Multi-turn customer-support bot at scale — V4-Flash with context caching. Claude Haiku 4.5 if you already have Anthropic procurement in place.

Alternatives worth considering

This is not a two-horse race. For coding, GPT-5.2 sits between the two on price; for raw reasoning quality, Gemini 3.1 Pro leads on HLE. For open-weight alternatives to DeepSeek specifically, the obvious rivals are Qwen and Llama. Useful comparisons next door: DeepSeek vs ChatGPT, DeepSeek vs Gemini, and the full AI comparison hub. For head-to-head API-cost runners, see DeepSeek API alternatives.

DeepSeek AI Guide is an independent resource and is not affiliated with DeepSeek or its parent company. Model IDs, pricing and API behaviour change; check the official DeepSeek documentation and pricing page before committing to a production decision.

Is DeepSeek better than Claude for coding?

On public benchmarks from DeepSeek’s V4 launch, V4-Pro leads Claude Opus 4.6 on Terminal-Bench 2.0 (67.9% vs 65.4%) and LiveCodeBench (93.5% vs 88.8%), and is within 0.2 points on SWE-Bench Verified. Claude still tends to feel more polished in interactive IDE use. For autonomous agents and high-volume workloads, V4-Pro is usually the better buy — see DeepSeek for coding.

How much cheaper is DeepSeek than Claude?

DeepSeek V4-Flash lists at $0.14 input / $0.28 output per million tokens versus Claude Sonnet 4.6 at $3 / $15 — roughly 50× cheaper on output at list price. V4-Pro at $0.435 / $0.87 (75% promo through 2026-05-31; list $1.74 / $3.48) is about 7× cheaper than Claude Opus 4.6 on output. Anthropic’s batch API offers 50% off; DeepSeek does not currently match that specific discount. Full breakdown in DeepSeek API pricing.

Can I use Claude Code with DeepSeek?

Yes. DeepSeek ships an Anthropic-compatible endpoint on the same base URL as its OpenAI-compatible API, and DeepSeek explicitly optimised V4 for agent tools including Claude Code and OpenClaw. You swap base_url and api_key, pick a V4 model ID, and Claude Code talks to DeepSeek. Details in the DeepSeek API documentation.

Does DeepSeek have an app like Claude does?

DeepSeek ships a web chat at chat.deepseek.com and mobile apps for iOS and Android, but the product surface is narrower than Claude’s. Claude’s ecosystem includes Claude Code, desktop and mobile apps, and integrations with tools such as Google Workspace, GitHub, and Slack. For the DeepSeek app experience, see the DeepSeek app guide.

Why would a team pick Claude over DeepSeek in 2026?

Three reasons dominate: mature enterprise tooling (Bedrock, Vertex, Foundry availability with data-residency options), stronger defaults for long-form writing and nuanced reasoning, and procurement comfort with a US-based vendor. Claude also edges DeepSeek on HLE and competition math. The trade-off is output cost: Claude Opus costs roughly 7× what V4-Pro does per output token at list price. Compare directly at DeepSeek vs Anthropic Claude.

Sources & Methodology

Primary sources

- Official: DeepSeek API documentation. Endpoints, request/response shape, model IDs. Last checked: April 30, 2026.

- Pricing: DeepSeek API pricing page. Per-1M-token rates, billing units, cache-hit pricing. Last checked: April 30, 2026.

- Repository: DeepSeek-AI on GitHub. Open-weight release details, training/inference notes. Last checked: April 30, 2026.

- Pricing: Anthropic Claude pricing. Claude API rates for cross-vendor comparisons. Last checked: April 30, 2026.

- Pricing: OpenAI API pricing. OpenAI API rates for cross-vendor comparisons. Last checked: April 30, 2026.

- Pricing: Google Gemini API pricing. Gemini API rates for cross-vendor comparisons. Last checked: April 30, 2026.

Technical references

- Technical report: DeepSeek-V3 technical report (arXiv:2412.19437). V3 architecture and training methodology. Last checked: April 30, 2026.

- Technical report: DeepSeek-R1 paper (arXiv:2501.12948). R1 reasoning approach, distillation results. Last checked: April 30, 2026.

- Benchmark: SWE-Bench Verified leaderboard. Software-engineering benchmark scores. Last checked: April 30, 2026.

- Benchmark: LiveCodeBench. Live coding benchmark scores. Last checked: April 30, 2026.

Methodology

Pricing was normalised per 1 million input and output tokens against each vendor's official pricing page on the review date. Benchmark scores were treated as directional indicators, not guarantees of real-world performance. Practical comparisons also weighed coding, reasoning, summarisation, and developer-workflow scenarios.

Data confidence

High for official pricing and feature presence; medium for cross-vendor benchmark comparisons; low for subjective workflow verdicts.

Editorial note

Pricing and model line-ups change frequently in this market. The verdicts here are calibrated for the date shown above and should be re-checked before final purchasing decisions.