Using DeepSeek for Marketing: Workflows, Prompts and Costs in 2026

Most marketing teams I talk to are stuck between two bad options: pay enterprise rates for GPT-5.5 or Claude Opus 4.7 to draft routine campaign assets, or settle for free-tier tools that throttle, hallucinate brand voice, and refuse long briefs. DeepSeek for marketing is the third option I now run in production — the V4 Preview that DeepSeek released on April 24, 2026 puts a million-token context window and frontier-adjacent quality at roughly one-seventh the output price of GPT-5.5. This guide walks through the workflows I actually use (briefs, ad copy, SEO outlines, email sequences, repurposing), the prompts that work, the limits that matter, and a worked cost calculation so you can size your own pilot before committing.

The concrete problem marketers face right now

Marketing operations have an awkward shape for AI procurement. The volume is high (dozens of variants per campaign), the quality bar is non-trivial (brand voice, factual accuracy, regulatory compliance for finance and health), and the budgets are squeezed. GPT-5.5 is priced at $5.00 per million input tokens and $30.00 per million output tokens, and Claude Opus 4.7 is priced at $5.00 input and $25.00 output — which adds up fast when you’re generating 50 ad variants a day across 12 SKUs.

DeepSeek’s pitch to marketers is brutally simple: similar quality on the workflows that matter (drafting, summarising, rewriting, ideation), one-sixth to one-hundredth of the cost. DeepSeek-V4 is positioned as a challenge to the status quo: by proving that architectural innovation can substitute for raw compute-maximalism, the lab made high-end AI accessible at a far lower cost. That doesn’t mean it wins every benchmark — it doesn’t — but for the marketing tasks where teams actually burn tokens, the cost-quality math has shifted.

What you’re actually buying with DeepSeek V4

The current generation is DeepSeek V4 (Preview), released on April 24, 2026. It ships as two open-weight Mixture-of-Experts models under the MIT license:

- deepseek-v4-pro: 1.6T total parameters, 49B active. Frontier tier. Use it for long-form research syntheses, complex strategy briefs, and anything that will be published with the company’s name on it.

- deepseek-v4-flash: 284B total, 13B active. The cost-efficient tier and the default choice for most marketing pipelines.

Both models run with a 1,000,000-token default context window and can output up to 384,000 tokens. Thinking mode is a request parameter, not a separate model — set reasoning_effort="high" with extra_body={"thinking": {"type": "enabled"}} when you need deeper reasoning (campaign strategy, multi-source research), or leave it off (the default) for routine drafting where speed and cost matter more.
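As a rough sketch of how that toggle might sit in a request builder: the helper name `build_request` is hypothetical, and the `reasoning_effort` and `thinking` values are simply the ones quoted above, so check them against the current API documentation before relying on them.

```python
from typing import Any


def build_request(prompt: str, model: str = "deepseek-v4-flash",
                  thinking: bool = False) -> dict[str, Any]:
    """Return keyword arguments for client.chat.completions.create().

    Hypothetical helper: thinking mode stays off unless explicitly requested.
    """
    kwargs: dict[str, Any] = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    if thinking:
        # Parameter values as quoted in the article; treat them as assumptions.
        kwargs["reasoning_effort"] = "high"
        kwargs["extra_body"] = {"thinking": {"type": "enabled"}}
    return kwargs


routine = build_request("Draft three subject lines for a spring sale.")
strategy = build_request("Compare two launch strategies.",
                         model="deepseek-v4-pro", thinking=True)
print("extra_body" in routine, "extra_body" in strategy)  # False True
```

The resulting dictionary would be passed as `client.chat.completions.create(**kwargs)`. Keeping the toggle in one place makes it harder to leave thinking mode on by accident for high-volume drafting.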

If your team is on legacy IDs from V3.2 — deepseek-chat or deepseek-reasoner — they currently route to deepseek-v4-flash and will be fully retired on 2026-07-24 at 15:59 UTC. Migration is a one-line model= swap; the base_url does not change.

Pricing snapshot for marketing budgets

All rates are USD per 1M tokens, taken from DeepSeek’s official pricing page as of April 2026. Pricing during a Preview window can change — check DeepSeek API pricing for the current numbers.

| Model | Input (cache hit) | Input (cache miss) | Output | Best for |
|---|---|---|---|---|
| deepseek-v4-flash | $0.0028 | $0.14 | $0.28 | High-volume drafting, social, email |
| deepseek-v4-pro | $0.003625 promo / $0.0145 list | $0.435 promo / $1.74 list | $0.87 promo / $3.48 list | Strategy, long research, hero copy |
| GPT-5.5 (for comparison) | n/a | $5.00 | $30.00 | Reference price; verify on OpenAI’s page |
| Claude Opus 4.7 (for comparison) | n/a | $5.00 | $25.00 | Reference price; verify on Anthropic’s page |

On standard, cache-miss pricing, DeepSeek-V4-Pro comes in at roughly one-seventh the cost of GPT-5.5 and about one-sixth the cost of Claude Opus 4.7, according to VentureBeat’s April 24, 2026 analysis. Off-peak discounts are discontinued (DeepSeek ended them on 2025-09-05), so don’t budget against the old night-time 50–75% off rate.

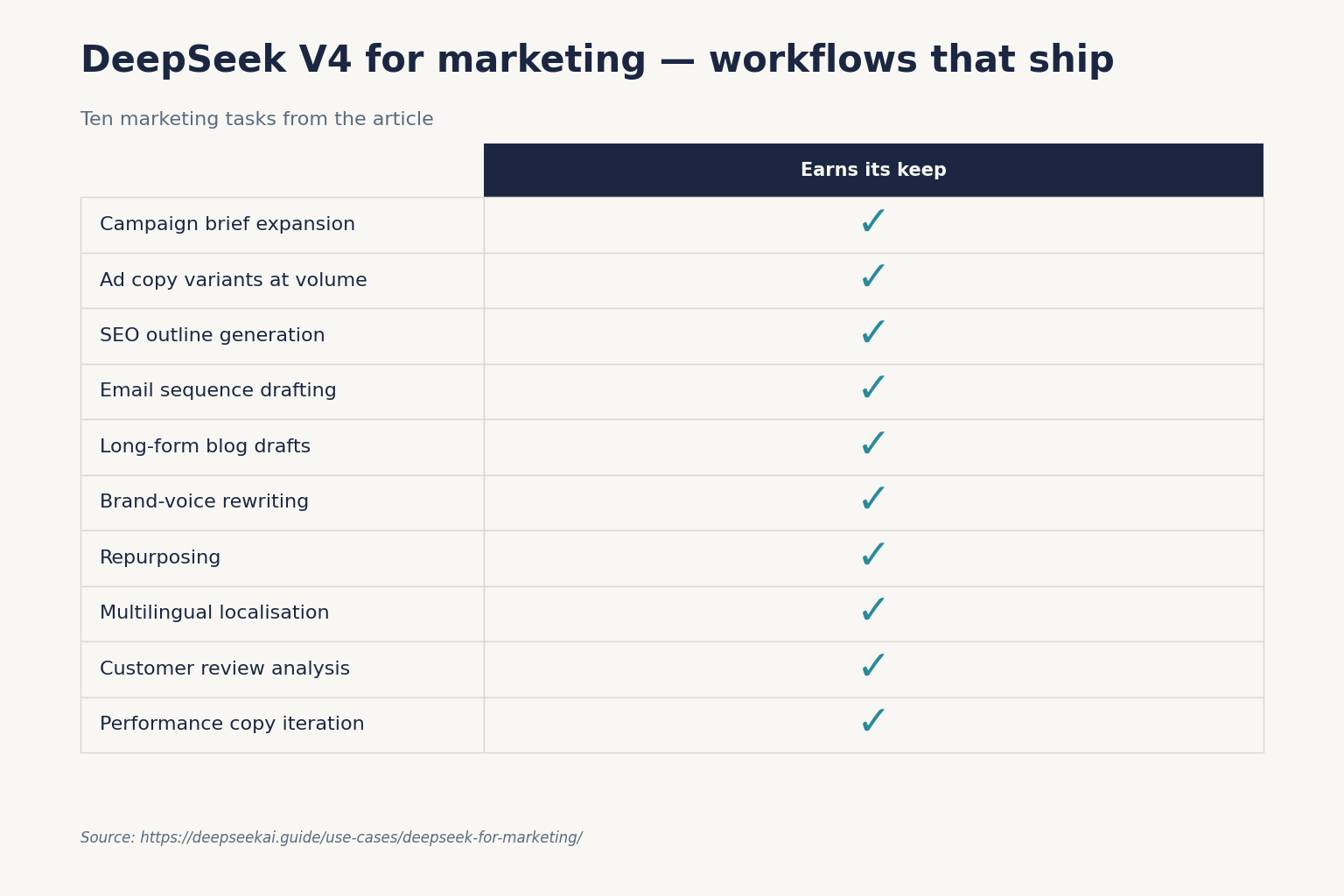

Ten marketing workflows that actually work

These are workflows I’ve run in production over the V3.2 → V4 transition. For each, I’m giving you the prompt skeleton and the temperature setting I use. DeepSeek’s official guidance is 1.3 for general conversation and translation, 1.5 for creative writing, and 1.0 for data analysis — those defaults are good starting points.

1. Campaign brief expansion

Drop a 200-word stakeholder email into V4-Flash with non-thinking mode and a templated system prompt that asks for: target persona, primary message, three supporting proof points, channel mix recommendation, and three risks. Temperature 1.0. Output is typically 600–900 tokens — call it $0.0003 per brief on Flash.

2. Ad copy variants at volume

For Google and Meta variant generation, I batch 20 variants per call with a system prompt that loads brand voice rules and a banned-words list. Because that system prompt is identical on every call, context caching bills the brand-voice prefix at the cache-hit rate ($0.0028/M) on every subsequent call. Temperature 1.5 for headlines, 1.3 for descriptions.

3. SEO outline generation

For pillar pages, I feed in 5–10 competitor URLs (scraped, not linked) plus the target query and ask V4-Pro in thinking mode for an H1, 6–10 H2s with intent annotations, the People-Also-Ask cluster, and a schema recommendation. Pair this with the DeepSeek for SEO guide if you want the deeper version with E-E-A-T checks.

4. Email sequence drafting

Five-email nurture sequences are a near-perfect fit for V4-Flash. System prompt: customer journey stage, primary CTA per email, banned phrases. User prompt: product details and offer. Temperature 1.3. The model handles cross-email continuity well because the entire sequence fits in a single response.

5. Long-form blog drafts from research dumps

The 1M-token window is genuinely useful here. Paste 30–50 research notes, transcripts, or PDFs (extracted to text) and ask for a 2,000-word draft to a brief. V4-Pro in thinking mode gives you a noticeably better draft than V4-Flash on this task; V4-Flash is a serious model — on SWE-bench Verified it scores 79.0% versus V4-Pro’s 80.6%, a 1.6-point gap — but for synthesis-heavy long form, the Pro lift is worth it.

6. Brand-voice rewriting

Take legal-approved copy and rewrite it for three personas. Keep the system prompt as a frozen brand-voice document (cached) and the user prompt as just the source paragraph. This is where context caching pays for itself — the brand-voice doc might be 4,000 tokens, and you’ll pay the $0.0028/M rate every call after the first.
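A minimal sketch of that split, assuming caching keys on an identical leading prefix as described above. The helper name, the placeholder brand-voice text, and the choice to put the persona in the user message are all illustrative assumptions, not part of any official API.

```python
# Placeholder document; a real brand-voice file might run to ~4,000 tokens.
BRAND_VOICE_DOC = (
    "Brand voice: direct, specific, no jargon.\n"
    "Banned phrases: 'game-changing', 'synergy'."
)


def build_rewrite_messages(source_paragraph: str, persona: str) -> list[dict[str, str]]:
    """Keep the system message byte-identical so every call shares one prefix."""
    return [
        {"role": "system", "content": BRAND_VOICE_DOC},
        {
            "role": "user",
            "content": f"Persona: {persona}\n\nRewrite this copy:\n{source_paragraph}",
        },
    ]


personas = ["CFO", "IT manager", "founder"]
batches = [
    build_rewrite_messages("Our platform cuts invoice processing time.", p)
    for p in personas
]
# Count distinct system prompts across the three calls.
print(len({b[0]["content"] for b in batches}))  # 1
```

Anything that varies per call (persona, source paragraph) goes after the frozen document. Editing the brand-voice text, even by a character, would presumably start a new cached prefix.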

7. Repurposing

One blog post → LinkedIn post + X thread + newsletter blurb + YouTube description + 3 carousel slide texts. V4-Flash, single call, structured output via JSON mode. JSON mode is designed to return valid JSON but does not guarantee it: include the word “json” in your prompt with a small example schema, set max_tokens high enough to avoid truncation, and handle occasional empty content.
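Here is one way the defensive handling might look. The key names in `EXPECTED_KEYS` and the function name are hypothetical; adapt them to whatever schema you put in the prompt.

```python
import json
from typing import Optional

# Hypothetical schema keys for the five repurposed assets.
EXPECTED_KEYS = (
    "linkedin_post",
    "x_thread",
    "newsletter_blurb",
    "youtube_description",
    "carousel_slides",
)


def parse_repurposed(content: Optional[str]) -> Optional[dict]:
    """Return the parsed assets, or None so the caller can retry.

    Covers the failure modes mentioned above: empty content, truncated
    (invalid) JSON, and a valid object that is missing expected keys.
    """
    if not content or not content.strip():
        return None
    try:
        data = json.loads(content)
    except json.JSONDecodeError:
        return None
    if not isinstance(data, dict) or any(key not in data for key in EXPECTED_KEYS):
        return None
    return data


print(parse_repurposed(""))                        # None
print(parse_repurposed('{"linkedin_post": "Hi"'))  # None (truncated)
```

In a real call the string would come from `resp.choices[0].message.content`, with `response_format={"type": "json_object"}` set on the request. Returning `None` rather than raising keeps the retry logic in the caller.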

8. Multilingual localisation

DeepSeek handles English and a long list of secondary languages competently. For US/UK/AU/IE English variants of the same campaign, run a single call with a system prompt enumerating the spelling and idiom differences. For non-English markets, see DeepSeek for translation for the version-by-version quality notes.

9. Customer review analysis

Drop 500 reviews into the context window and ask for sentiment clusters, top three product complaints, and a verbatim pull-quote per cluster. Temperature 1.0. This is the kind of task that used to mean a separate NLP pipeline; now it’s one API call.

10. Performance copy iteration

Feed V4 the past 30 days of subject-line performance (open rate per line) and ask for 10 new subject lines that hypothesise about why the winners won. Don’t trust the explanations as ground truth — they’re directional, not causal — but the new lines are usually a measurable lift over a human-only round.
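A small sketch of turning a performance export into that prompt. The function name, the `(subject, open_rate)` tuple shape, and the sample figures are assumptions for illustration only.

```python
def build_subject_line_prompt(history: list[tuple[str, float]], n_new: int = 10) -> str:
    """Format (subject, open_rate) rows, best performer first, into one prompt."""
    ranked = sorted(history, key=lambda row: row[1], reverse=True)
    lines = [f"{rate:.1%} | {subject}" for subject, rate in ranked]
    return (
        "Past 30 days of subject lines, best open rate first:\n"
        + "\n".join(lines)
        + f"\n\nPropose {n_new} new subject lines. For each one, state the "
        "hypothesis about why the top performers won that it is testing."
    )


# Made-up sample data, not real campaign results.
sample = [("Last chance to save", 0.184), ("Your audit is ready", 0.312)]
prompt = build_subject_line_prompt(sample)
print(prompt.splitlines()[1])  # 31.2% | Your audit is ready
```

Sorting by open rate puts the winners at the top of the context, and asking for an explicit hypothesis per line makes the next round easier to evaluate as a test rather than a guess.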

The minimum-viable API setup

Chat requests hit POST /chat/completions, the OpenAI-compatible endpoint. Here is a minimal Python example using the OpenAI SDK against DeepSeek’s base URL:

```python
from openai import OpenAI

# Point the OpenAI SDK at DeepSeek's OpenAI-compatible endpoint.
client = OpenAI(
    base_url="https://api.deepseek.com",
    api_key="YOUR_KEY",
)

resp = client.chat.completions.create(
    model="deepseek-v4-flash",
    messages=[
        {"role": "system", "content": "You are a senior B2B copywriter. Brand voice: direct, specific, no jargon."},
        {"role": "user", "content": "Write 5 LinkedIn ad variants for a SaaS observability tool, 90 chars max each."},
    ],
    temperature=1.3,
    max_tokens=800,
)

print(resp.choices[0].message.content)
```

The API is stateless: DeepSeek does not remember prior turns, so for multi-turn flows your client must resend the full messages array on every call. The web chat at chat.deepseek.com keeps session state for you; the API does not. DeepSeek also exposes an Anthropic-compatible surface against the same base URL if your stack already uses the Anthropic SDK. For a fuller walk-through, see the DeepSeek API getting started tutorial.
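To make the statelessness concrete, here is a sketch of a client-side history wrapper. The `Conversation` class is hypothetical, and `fake_send` stands in for a real call to `client.chat.completions.create` so the example runs without a network connection.

```python
from typing import Callable

Message = dict[str, str]


class Conversation:
    """Client-side history: the full message list is resent on every turn."""

    def __init__(self, system_prompt: str, send: Callable[[list[Message]], str]) -> None:
        self.messages: list[Message] = [{"role": "system", "content": system_prompt}]
        self._send = send

    def ask(self, user_text: str) -> str:
        self.messages.append({"role": "user", "content": user_text})
        reply = self._send(list(self.messages))  # send a copy of the whole history
        self.messages.append({"role": "assistant", "content": reply})
        return reply


seen_lengths: list[int] = []


def fake_send(messages: list[Message]) -> str:
    """Stub transport that records how many messages each request carried."""
    seen_lengths.append(len(messages))
    return f"reply {len(seen_lengths)}"


chat = Conversation("You are a senior B2B copywriter.", fake_send)
chat.ask("Draft a headline.")
chat.ask("Make it shorter.")
print(seen_lengths)  # [2, 4]
```

The second request carries four messages because it includes the first exchange. Input tokens therefore grow with every turn, which is worth remembering when costing long revision threads.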

Worked cost example: 1M ad-variant calls per month

Assume deepseek-v4-flash, a 2,000-token cached brand-voice system prompt, a 200-token user message (uncached on each call), and a 300-token response.

- Cached input: 2,000 × 1,000,000 = 2,000,000,000 × $0.0028/M = $5.60

- Uncached input: 200 × 1,000,000 = 200,000,000 × $0.14/M = $28.00

- Output: 300 × 1,000,000 = 300,000,000 × $0.28/M = $84.00

- Total: $117.60 per million calls.

The same workload on deepseek-v4-pro at list prices would cost about $1,421.00 (cached input $29 + uncached input $348 + output $1,044). For routine ad and email work, Flash is the obvious pick. Pro earns its keep on briefs and strategy where the quality lift moves real money downstream. Run your own numbers with the DeepSeek pricing calculator.
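The arithmetic above can be wrapped in a small function for sizing your own workload. This is a sketch with a hypothetical function name; the rates are the per-million figures from the pricing table, and it assumes every call hits the cache on the system prompt (in practice the first call, and any call after the cache expires, pays the miss rate).

```python
def workload_cost(calls: int, cached_in: int, uncached_in: int, out_tokens: int,
                  hit_rate: float, miss_rate: float, out_rate: float) -> float:
    """Cost in USD; token counts are per call, rates are USD per 1M tokens."""
    millions_of_calls = calls / 1_000_000
    per_million_calls = (
        cached_in * hit_rate + uncached_in * miss_rate + out_tokens * out_rate
    )
    return millions_of_calls * per_million_calls


flash = workload_cost(1_000_000, 2_000, 200, 300, 0.0028, 0.14, 0.28)
pro_list = workload_cost(1_000_000, 2_000, 200, 300, 0.0145, 1.74, 3.48)
print(f"Flash: ${flash:,.2f}")        # Flash: $117.60
print(f"Pro (list): ${pro_list:,.2f}")  # Pro (list): $1,421.00
```

Swapping in your own call volume and token counts gives a first-pass budget. Lowering the assumed cache-hit share is a quick way to stress-test the estimate.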

Where DeepSeek is genuinely weaker for marketing

I run V4 in production and I’d still pick a competitor for these specific sub-tasks:

- Real-time factual recall. SimpleQA-Verified at 57.9% versus Gemini’s 75.6% reveals a meaningful factual knowledge retrieval gap; if your use case requires accurate real-world knowledge recall, Gemini holds a clear edge. For news-anchored campaigns, pair DeepSeek with a search tool or use a Gemini-class model.

- Cross-domain expert reasoning. HLE (Humanity’s Last Exam) at 37.7% puts V4-Pro below Claude (40.0%), GPT-5.4 (39.8%), and well below Gemini-3.1-Pro (44.4%). Most marketing copy doesn’t need this; regulated finance/health/legal copy sometimes does.

- Brand-image creative. DeepSeek V4 is text-only. For ad imagery you still need Midjourney, DALL-E, or a Gemini multimodal model.

- Sensitive content. DeepSeek’s content controls and the regulatory picture (Italy’s Garante action in January 2025; multiple US state restrictions on government devices) mean it’s not the right tool for politically sensitive campaigns or government-adjacent comms.

Honest comparisons help: see DeepSeek vs ChatGPT and DeepSeek vs Claude for head-to-head workflow tests, or browse the broader DeepSeek use cases hub for adjacent functions. Real-estate agents writing MLS listings, lease summaries and CMA drafts will want the property-marketing workflow guide.

Privacy, data residency and the marketing-data question

Marketing data often includes PII — email lists, CRM exports, customer reviews with names. DeepSeek-hosted endpoints process requests on infrastructure subject to Chinese law, which means law-enforcement access under legal process is possible. Two practical options:

- Strip PII before the API call. Replace names, emails and account IDs with tokens client-side; reinsert after the response.

- Self-host the open weights. Both V4 models are MIT-licensed and published on Hugging Face. Inference at 1.6T parameters is non-trivial, but Flash at 284B / 13B active is within reach for a serious GPU box. The install DeepSeek locally tutorial covers the setup.
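The first option, stripping PII client-side, might be sketched as follows. This is deliberately simple and not a complete PII scrubber: the regex only catches straightforward email addresses, names must be supplied by the caller (for example from the CRM export), and the function names and token format are assumptions.

```python
import re

# Simple email pattern; it will miss unusual but valid addresses.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")


def pseudonymize(text: str, names: list[str]) -> tuple[str, dict[str, str]]:
    """Replace emails and the listed names with tokens; return text and mapping."""
    mapping: dict[str, str] = {}  # token -> original value

    def token_for(value: str, kind: str) -> str:
        # Reuse the token if this value was already seen.
        for token, original in mapping.items():
            if original == value:
                return token
        token = f"[{kind}_{len(mapping) + 1}]"
        mapping[token] = value
        return token

    text = EMAIL_RE.sub(lambda m: token_for(m.group(0), "EMAIL"), text)
    # Longest names first so "Jane Doe" is replaced before "Jane".
    for name in sorted(names, key=len, reverse=True):
        if name in text:
            text = text.replace(name, token_for(name, "NAME"))
    return text, mapping


def restore(text: str, mapping: dict[str, str]) -> str:
    """Reinsert the original values into the model's response."""
    for token, original in mapping.items():
        text = text.replace(token, original)
    return text


review = "Jane Doe (jane.doe@example.com) said the onboarding was slow."
masked, mapping = pseudonymize(review, ["Jane Doe"])
print(masked)  # [NAME_2] ([EMAIL_1]) said the onboarding was slow.
```

Only `masked` would be sent to the API; `mapping` stays on your side and is applied to the response with `restore`. For regulated data, a dedicated PII-detection library is a safer base than a hand-rolled regex.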

For a fuller treatment, see DeepSeek privacy.

Getting started: a one-week pilot

- Day 1: Get an API key and run the Python snippet above against deepseek-v4-flash.

- Day 2: Pick one workflow from the ten above. Build a system prompt that bakes in brand voice and a banned-words list.

- Day 3: Run 50 outputs side-by-side against your current tool. Score on a 5-point rubric (accuracy, voice, usability, novelty, length).

- Day 4: Add context caching by pinning the brand-voice prefix. Watch your cache-hit ratio in the billing console.

- Day 5: Cost the workflow at projected monthly volume using the worked example above as a template.

If V4-Flash holds quality at your volume, that’s your default. Keep V4-Pro for the 5–10% of work that justifies the spend.
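For the Day 3 comparison, a tiny scoring helper keeps the rubric honest. This is a sketch: the five criteria are the ones listed in the pilot plan, while the function name and the sample scores are invented for illustration.

```python
from statistics import mean

CRITERIA = ("accuracy", "voice", "usability", "novelty", "length")


def rubric_summary(scores: list[dict[str, int]]) -> dict[str, float]:
    """Average each 1-5 criterion across outputs, plus an overall mean."""
    summary = {c: mean(s[c] for s in scores) for c in CRITERIA}
    summary["overall"] = mean(summary[c] for c in CRITERIA)
    return summary


# Invented scores for two outputs from one tool.
flash_scores = [
    {"accuracy": 4, "voice": 5, "usability": 4, "novelty": 3, "length": 4},
    {"accuracy": 5, "voice": 3, "usability": 4, "novelty": 3, "length": 4},
]
result = rubric_summary(flash_scores)
print(result["accuracy"], result["overall"])  # 4.5 3.9
```

Running the same function over your current tool's 50 outputs gives a like-for-like comparison per criterion, which is more useful than a single overall impression when deciding where Flash is good enough.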

DeepSeek AI Guide is an independent resource and is not affiliated with DeepSeek or its parent company. Model IDs, pricing and API behaviour change; check the official DeepSeek documentation and technical report before committing to a production decision.

Frequently asked questions

Is DeepSeek good for marketing content?

Yes, for most drafting, ideation, rewriting and repurposing tasks. DeepSeek V4-Flash handles ad copy, email sequences, social posts and brand-voice rewrites at roughly one-hundredth the output cost of GPT-5.5 or Claude Opus 4.7. For highly factual or regulated content, pair it with a search tool or a competitor with stronger factual recall. See the DeepSeek for content creation guide for task-specific notes.

How much does DeepSeek cost for a marketing team?

For a team running 1 million Flash calls per month with a cached system prompt, expect about $117.60 — versus thousands on premium closed models. V4-Pro on the same workload is roughly $1,421. Off-peak discounts ended in September 2025 and have not returned. Run your own numbers with the DeepSeek cost estimator before committing to volume.

Can DeepSeek write in our brand voice?

Yes, with a properly engineered system prompt. Load a 1,000–4,000-token brand voice document (tone rules, banned phrases, sample paragraphs) into the system message and pin it so context caching kicks in. Temperature 1.3 for general voice work, 1.5 for creative. The DeepSeek prompt engineering tutorial covers the exact patterns I use for voice consistency.

What is the difference between DeepSeek V4-Pro and V4-Flash for marketing?

V4-Flash (284B/13B active) handles routine drafting at $0.14 input / $0.28 output per million tokens. V4-Pro (1.6T/49B active) is the frontier tier at $0.435/$0.87 promo through 2026-05-31 (list $1.74/$3.48), which is about 12× Flash’s output price at list and about 3× at the promo rate. For ad copy, email and social, Flash is the right default. Pick Pro for strategy briefs, long research synthesis and hero copy. Compare specs on DeepSeek V4.

How does DeepSeek compare to ChatGPT for marketing tasks?

ChatGPT (GPT-5 family) tends to lead on factual recall, image generation and the breadth of its plugin/agent ecosystem. DeepSeek wins decisively on price and on long-context tasks thanks to its 1M-token default window. For most marketing drafting and rewriting, the quality difference is small enough that the cost gap dominates. The detailed head-to-head is in DeepSeek vs ChatGPT.

Sources & Methodology

Primary sources

- Official: DeepSeek API documentation. Endpoints, request/response shape, model IDs. Last checked: April 30, 2026

- Pricing: DeepSeek API pricing page. Per-1M-token rates, billing units, cache-hit pricing. Last checked: April 30, 2026

- Model card: DeepSeek on Hugging Face. Weights, license, usage notes per model. Last checked: April 30, 2026

- Official: DeepSeek official API documentation. Authoritative API behaviour and model-ID reference. Last checked: April 30, 2026

- Model card: DeepSeek-V4-Pro Hugging Face model card. V4-Pro technical report and architecture details. Last checked: April 30, 2026

Technical references

- Technical report: DeepSeek-V3 technical report (arXiv:2412.19437). V3 architecture and training methodology. Last checked: April 30, 2026

Methodology

Information in this article was checked against the official DeepSeek documentation, the article's cited primary sources, and the deepseekai.guide pricing dataset on the date shown above. Where third-party numbers are quoted, the originating source is linked inline so the reader can verify and re-check.

Data confidence

High for facts cited inline with primary-source links; medium for context narrative.

Editorial note

AI tooling moves quickly. If you spot an outdated number or a broken link, the contact link in the footer is the fastest way to flag it for the editorial team.