How to Complete DeepSeek API Key Setup (V4 Walkthrough, 2026)

You have a DeepSeek account, you want to hit the API from your own code, and you are not sure which console page to open, which model ID to use, or what to do with the `sk-…` string once it appears. This guide handles the full DeepSeek API key setup end to end: creating a platform account, generating a key, topping up a balance, storing the secret safely, and sending your first `POST /chat/completions` request against `deepseek-v4-pro` or `deepseek-v4-flash`. Everything below was checked against DeepSeek’s live platform and API docs on April 24, 2026 — the day V4 Preview shipped. By the end you will have a working key, a tested Python and curl example, and a pricing sanity-check for your first invoice.

What a DeepSeek API key actually is

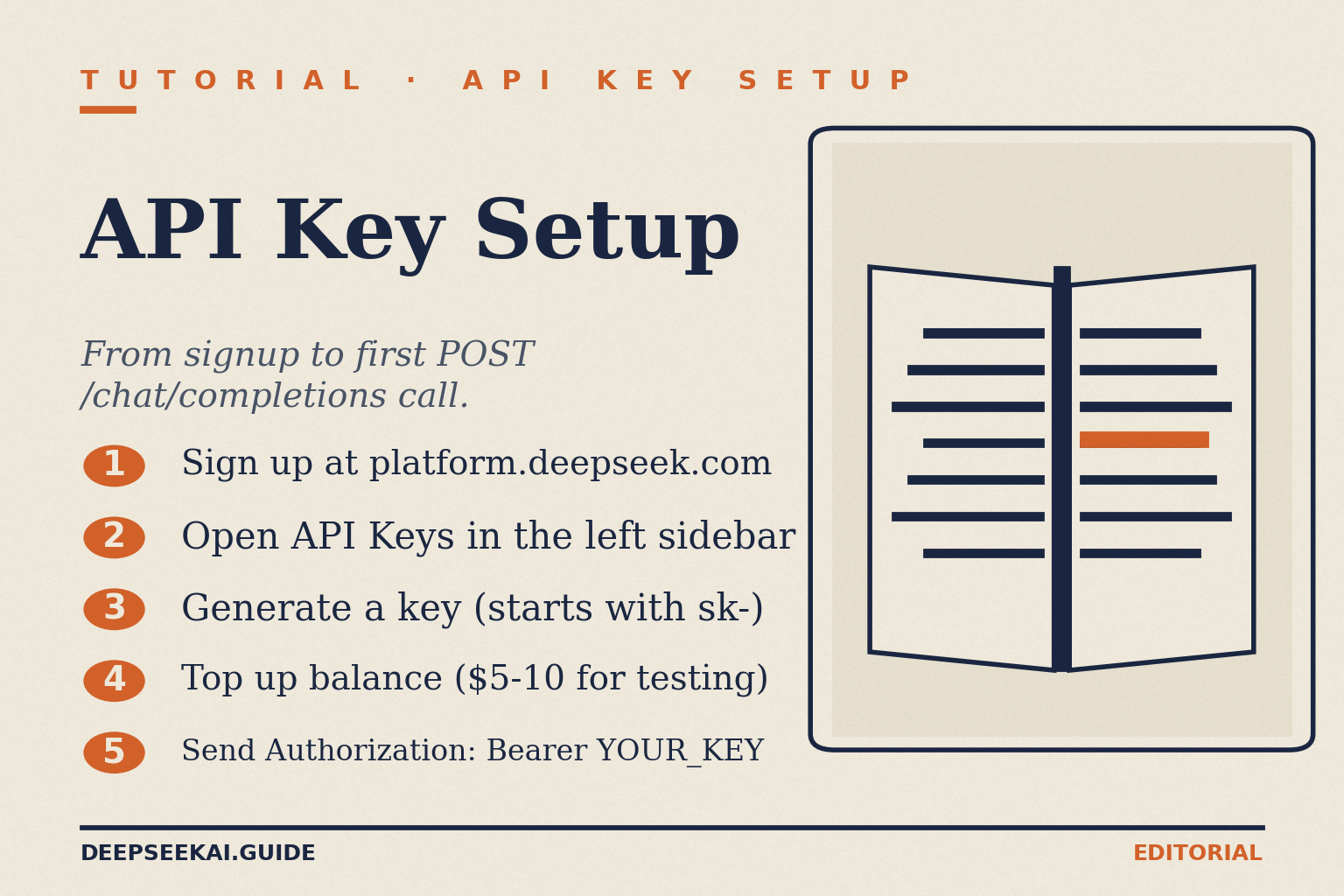

A DeepSeek API key is a bearer token, a string starting with `sk-`, that authenticates requests against `https://api.deepseek.com`. Every request must carry an `Authorization: Bearer YOUR_API_KEY` header. A wrong or missing key returns 401; an exhausted balance returns 402. Keys are created and revoked from the platform console at platform.deepseek.com/api_keys; the dashboard also shows your balance, usage, and billing history.

Two things are worth stating up front because they trip up developers moving from the DeepSeek web chat:

- The API is stateless. The web app remembers your conversation across turns. The API does not; your client must resend the full `messages` array on every call.
- The current generation is DeepSeek V4, released April 24, 2026. It ships as two open-weight MoE model IDs: `deepseek-v4-pro` (1.6T total / 49B active parameters, frontier tier) and `deepseek-v4-flash` (284B / 13B active, cost-efficient tier). Both weights are MIT-licensed. You pick the tier at request time via the `model` field.
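Because the API keeps no state, the client owns the conversation history. The sketch below shows one way to hold that history and rebuild the request body on every turn; the `Conversation` class and its method names are hypothetical helpers for illustration, not part of any DeepSeek SDK.

```python
from dataclasses import dataclass, field


@dataclass
class Conversation:
    """Client-side history; the API itself keeps no state between calls."""

    model: str = "deepseek-v4-flash"
    messages: list = field(default_factory=list)

    def add(self, role: str, content: str) -> None:
        # Append one turn; role is "system", "user", or "assistant".
        self.messages.append({"role": role, "content": content})

    def payload(self) -> dict:
        # Every request carries the full history, not just the newest turn.
        return {"model": self.model, "messages": list(self.messages)}


chat = Conversation()
chat.add("system", "You are a helpful assistant.")
chat.add("user", "Name one prime number.")
chat.add("assistant", "2")  # the previous reply, copied back in by the client
chat.add("user", "And the next one?")
request_body = chat.payload()
print(len(request_body["messages"]))  # 4
```

The second user turn only makes sense to the model because the first exchange is sent again alongside it.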

If you are maintaining an older integration, the legacy IDs still work for now. The model names deepseek-chat and deepseek-reasoner will be deprecated on 2026/07/24, and for compatibility they correspond to the non-thinking mode and thinking mode of deepseek-v4-flash, respectively. Migration is a one-line model= change — base_url stays the same.
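For codebases with many call sites, the legacy-to-V4 mapping described above can be captured in one place. This is a minimal sketch: `migrate_request` is a hypothetical helper, and it assumes your request arguments are held in a plain dict before being passed to the SDK.

```python
# Mapping taken from the compatibility note above; nothing here calls the API.
LEGACY_MODELS = {
    "deepseek-chat": ("deepseek-v4-flash", "disabled"),     # non-thinking mode
    "deepseek-reasoner": ("deepseek-v4-flash", "enabled"),  # thinking mode
}


def migrate_request(kwargs: dict) -> dict:
    """Return a copy of chat-completion kwargs with a legacy model ID replaced."""
    model = kwargs.get("model")
    if model not in LEGACY_MODELS:
        return dict(kwargs)
    new_model, thinking = LEGACY_MODELS[model]
    migrated = dict(kwargs, model=new_model)
    extra_body = dict(migrated.get("extra_body") or {})
    # Keep an explicit thinking setting if the caller already supplied one.
    extra_body.setdefault("thinking", {"type": thinking})
    migrated["extra_body"] = extra_body
    return migrated


print(migrate_request({"model": "deepseek-reasoner", "messages": []}))
```

Setting the thinking flag explicitly keeps behaviour the same after the switch, since the legacy name no longer implies the mode.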

Prerequisites

- An email address (recommended over social sign-in for developer accounts; it survives org changes).

- A payment method to top up the prepaid balance. The account runs on a prepaid credit model, not monthly invoicing.

- Python 3.9+ or Node.js 18+ installed locally, if you want to run the quickstart snippets.

- A secrets manager or at minimum a gitignored `.env` file. Keys should never sit in application source.

Step-by-step DeepSeek API key setup

1. Create a platform account

Open platform.deepseek.com in a browser. This is the developer console — a separate surface from the consumer chat at chat.deepseek.com. On the landing page the V4 Preview banner confirms the model is now available on web, app, and API. Register with email and a strong password, verify via the confirmation email, and sign in. You will land on a dashboard that surfaces usage, billing, and keys.

2. Top up your balance

The API will not dispatch requests until there is a credit balance or a granted balance on the account. Navigate to Billing in the sidebar and add a payment method, then purchase credit. DeepSeek may offer a granted balance — a small promotional credit that can expire — so check the billing console for current offers before topping up. Fees are deducted from your topped-up balance or granted balance, with a preference for using the granted balance first when both balances are available.

A sensible first top-up for exploratory work is $5–$10. That funds tens of thousands of V4-Flash requests or several thousand V4-Pro requests at typical chat sizes — more than enough to prototype.
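To sanity-check that estimate, here is a rough calculation using the per-token rates from this guide's pricing section. The "typical chat size" of 500 input tokens and 300 output tokens is an assumption made for this sketch, and every input token is treated as a cache miss, which overstates the cost slightly.

```python
# USD per 1M tokens (input cache miss, output), copied from this guide's pricing table.
RATES = {
    "deepseek-v4-flash": (0.14, 0.28),
    "deepseek-v4-pro (promo)": (0.435, 0.87),
    "deepseek-v4-pro (list)": (1.74, 3.48),
}


def requests_per_budget(budget_usd, rate, input_tokens=500, output_tokens=300):
    """Whole requests a budget covers, assuming every input token is a cache miss."""
    input_rate, output_rate = rate
    cost_per_request = (input_tokens * input_rate + output_tokens * output_rate) / 1_000_000
    return int(budget_usd / cost_per_request)


for name, rate in RATES.items():
    print(f"{name}: ~{requests_per_budget(5, rate):,} requests for $5")
```

Under these assumptions $5 covers roughly 32,000 Flash requests, about 10,000 Pro requests at the promo rate, and about 2,600 Pro requests at list price.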

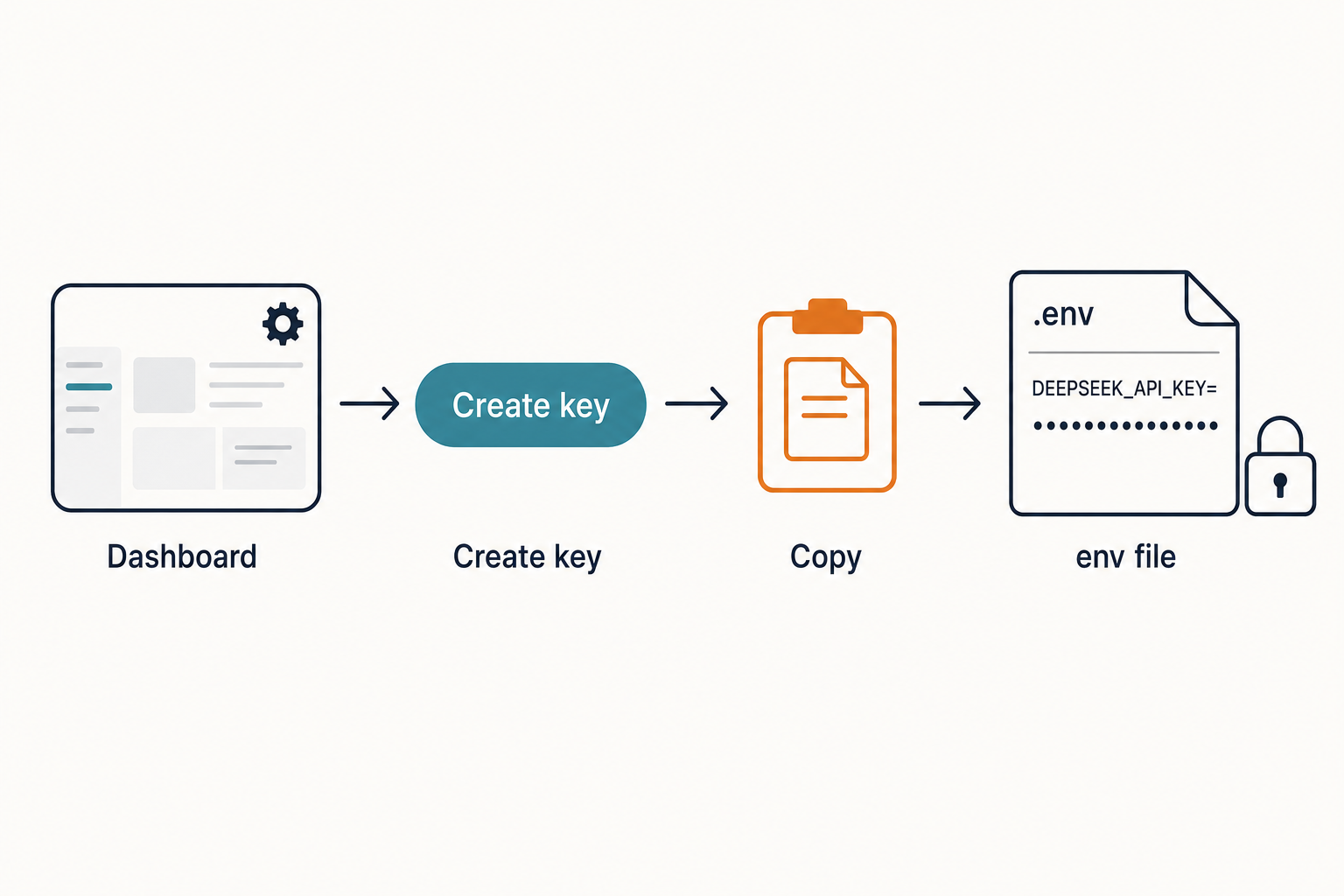

3. Generate the API key

In the left sidebar, open API Keys. Click Create new API key, give the key a descriptive name (for example v4-prod-2026-04 or dev-scratchpad), and confirm. The key string appears once, in a modal. Copy the key immediately — DeepSeek only shows it once. If you lose it, you cannot retrieve it; you revoke and regenerate.

Conventions that save pain later:

- Use one key per environment (`dev`, `staging`, `prod`) so you can revoke one without breaking the others.
- Label by date so rotation is obvious (`v4-prod-2026-04`).
- Never commit keys to a repo, even a private one. CI logs leak; forks happen.

4. Store the key safely

Two acceptable patterns: a secrets manager (AWS Secrets Manager, Google Secret Manager, 1Password, Doppler, HashiCorp Vault) or a local .env file that is listed in .gitignore. For a local shell, export it:

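```bash
export DEEPSEEK_API_KEY="sk-..."
```

The examples in this guide read the key from this environment variable, so setting it means your code can reference it without hard-coding. Note that the OpenAI SDK looks for `OPENAI_API_KEY` by default, so pass the DeepSeek key explicitly as `api_key`.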
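On the application side, it can help to fail early when the variable is missing or malformed instead of discovering it through a 401. A minimal sketch, where `load_api_key` is a hypothetical helper name:

```python
import os


def load_api_key(env=os.environ, name="DEEPSEEK_API_KEY"):
    """Return the key from the environment, failing loudly if it looks wrong."""
    key = env.get(name, "").strip()
    if not key:
        raise RuntimeError(f"{name} is not set; export it or load it from your secrets manager")
    if not key.startswith("sk-"):
        raise RuntimeError(f"{name} does not start with 'sk-'; check for a pasted typo")
    return key
```

Call `load_api_key()` once at startup and pass the result to your client; the `env` argument exists only so the function can be tested with a plain dict.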

5. Send your first request

Chat requests hit POST /chat/completions, the OpenAI-compatible endpoint. Below is the minimal curl call — confirmed from DeepSeek’s first-API-call documentation — against deepseek-v4-pro with thinking enabled:

```bash
curl https://api.deepseek.com/chat/completions \
  -H "Content-Type: application/json" \
  -H "Authorization: Bearer ${DEEPSEEK_API_KEY}" \
  -d '{
        "model": "deepseek-v4-pro",
        "messages": [
          {"role": "system", "content": "You are a helpful assistant."},
          {"role": "user", "content": "Hello!"}
        ],
        "thinking": {"type": "enabled"},
        "reasoning_effort": "high",
        "stream": false
      }'
```

The Python equivalent uses the OpenAI SDK. The DeepSeek API format is compatible with OpenAI's, which in practice means you can often reuse the OpenAI SDK or other OpenAI-compatible tooling by changing the base URL to `https://api.deepseek.com`:

```python
import os

from openai import OpenAI

# Point the OpenAI SDK at DeepSeek; the key comes from the environment.
client = OpenAI(
    api_key=os.environ["DEEPSEEK_API_KEY"],
    base_url="https://api.deepseek.com",
)

response = client.chat.completions.create(
    model="deepseek-v4-pro",
    messages=[
        {"role": "system", "content": "You are a helpful assistant."},
        {"role": "user", "content": "Hello"},
    ],
    reasoning_effort="high",
    # "thinking" is not an OpenAI SDK argument, so it travels in extra_body.
    extra_body={"thinking": {"type": "enabled"}},
)

print(response.choices[0].message.content)
```

A successful response returns a JSON object containing a `choices` array with the assistant’s message and a `usage` object with token counts. In thinking mode the response carries `reasoning_content` alongside the final `content`. For non-thinking mode, which is faster and cheaper, pass `extra_body={"thinking": {"type": "disabled"}}`; omitting it leaves V4 in its default thinking-enabled mode. For compatibility, `https://api.deepseek.com/v1` is also supported, but the `/v1` path is not a model-version indicator.
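To make the response shape concrete, the sketch below pulls those fields out of a decoded JSON body. The `sample` dict is hand-written for illustration and is not captured API output; `summarize_response` is a hypothetical helper.

```python
def summarize_response(body: dict) -> dict:
    """Pull the answer, reasoning, and token count out of a decoded response."""
    message = body["choices"][0]["message"]
    usage = body.get("usage", {})
    return {
        "answer": message.get("content"),
        # Present in thinking mode only; None otherwise.
        "reasoning": message.get("reasoning_content"),
        "total_tokens": usage.get("total_tokens"),
    }


# Hand-written sample for illustration, not real API output.
sample = {
    "choices": [
        {
            "message": {
                "role": "assistant",
                "content": "Hello! How can I help?",
                "reasoning_content": "The user greeted me, so I should greet back.",
            }
        }
    ],
    "usage": {"prompt_tokens": 12, "completion_tokens": 20, "total_tokens": 32},
}

summary = summarize_response(sample)
print(summary["answer"])
```

With the OpenAI SDK v1, passing `response.model_dump()` to this function should give the same dict shape, though that is worth verifying against your SDK version.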

DeepSeek also ships an Anthropic-compatible surface at the same base URL, so the Anthropic SDK works by swapping base_url and api_key — useful if your codebase is already on it.

V4 model IDs and thinking modes

Thinking mode is a request parameter on either V4 model, not a separate model ID. The accepted settings are:

- Non-thinking: V4 enables thinking by default; pass `extra_body={"thinking": {"type": "disabled"}}` for non-thinking. Fastest, cheapest per request. This mode also enables FIM (Fill-In-the-Middle) completion in Beta.
- Thinking (high): set `reasoning_effort="high"` and `extra_body={"thinking": {"type": "enabled"}}`. The response returns `reasoning_content` alongside the final `content`.
- Thinking-max: set `reasoning_effort="max"`. Maximum reasoning effort; ensure `max_tokens` is high enough to avoid truncation.
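The three settings above can be kept in one small function so call sites choose a mode by name. This is a sketch: `thinking_kwargs` and the mode labels are hypothetical, and the returned dict is meant to be unpacked into `client.chat.completions.create(...)`.

```python
def thinking_kwargs(mode: str) -> dict:
    """Map a mode name to keyword arguments for a chat-completions call."""
    if mode == "non-thinking":
        return {"extra_body": {"thinking": {"type": "disabled"}}}
    if mode == "high":
        return {
            "reasoning_effort": "high",
            "extra_body": {"thinking": {"type": "enabled"}},
        }
    if mode == "max":
        # Thinking is on by default, so only the effort level is set here.
        return {"reasoning_effort": "max"}
    raise ValueError(f"unknown mode: {mode!r}")


print(thinking_kwargs("high"))
```

Usage would look like `client.chat.completions.create(model="deepseek-v4-flash", messages=msgs, **thinking_kwargs("non-thinking"))`.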

Which tier should a new key target?

| Tier | Model ID | Active / total params | Best for | Output $/1M |
|---|---|---|---|---|
| Flash | `deepseek-v4-flash` | 13B / 284B | Chat, RAG, classification, most production workloads | $0.28 |
| Pro | `deepseek-v4-pro` | 49B / 1.6T | Frontier coding, long agentic workflows, hard reasoning | $0.87 promo through 2026-05-31; $3.48 list |
| Legacy | `deepseek-chat`, `deepseek-reasoner` | Routes to Flash | Migration only; retires 2026-07-24 15:59 UTC | $0.28 |

Flash is the default recommendation. At list price Pro is roughly 12× the output price of Flash (about 3× during the promo), so promote workloads to Pro only where a benchmark uplift (e.g. on agentic coding or Terminal-Bench) justifies the spend.

Core parameters worth knowing

- `temperature`: randomness. DeepSeek’s own guidance: 0.0 for code and maths, 1.0 for data analysis, 1.3 for general conversation and translation, 1.5 for creative writing.
- `top_p`: nucleus sampling; set this or `temperature`, not both.
- `max_tokens`: output cap. On V4 this can reach 384,000 tokens. Keep it high enough for JSON mode so replies are not truncated.
- `reasoning_effort`: V4-only; pair with the thinking flag as shown above.
- `stream`: set `true` for server-sent-events chunking.
- `response_format={"type": "json_object"}`: JSON mode. It is designed to return valid JSON, not guaranteed; prompt with the word “json” and a small example schema, and set `max_tokens` high enough to avoid truncation.
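One way to apply the temperature guidance consistently is a lookup table keyed by task. In this sketch the task labels, the `sampling_kwargs` helper, and the default `max_tokens` of 4096 are all assumptions chosen for illustration; only the temperature values come from the guidance above.

```python
# Temperatures from the guidance quoted above; the task labels are this sketch's own.
TEMPERATURE_BY_TASK = {
    "code": 0.0,
    "maths": 0.0,
    "data-analysis": 1.0,
    "conversation": 1.3,
    "translation": 1.3,
    "creative-writing": 1.5,
}


def sampling_kwargs(task: str, json_mode: bool = False, max_tokens: int = 4096) -> dict:
    """Build sampling arguments for a chat-completions call."""
    kwargs = {"temperature": TEMPERATURE_BY_TASK[task], "max_tokens": max_tokens}
    if json_mode:
        # JSON mode still needs the word "json" in the prompt, per the note above.
        kwargs["response_format"] = {"type": "json_object"}
    return kwargs


print(sampling_kwargs("code", json_mode=True))
```

The function sets `temperature` only and leaves `top_p` alone, in line with the advice to tune one or the other.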

Both V4 tiers default to a 1,000,000-token context window with output up to 384,000 tokens. Streaming, tool calling, context caching, and Chat Prefix Completion (Beta) are all available. For the full parameter reference, see the DeepSeek API documentation.

Rate limits, errors and what to expect on day one

DeepSeek does not publish fixed per-key QPS numbers — limits flex with platform load, and the platform reserves the right to throttle heavy bursts. Build defensively:

- Retry on 429 with exponential backoff and jitter.

- Handle 401 (bad or missing key) and 402 (insufficient balance) as non-retriable — they require operator intervention.

- Set soft usage alerts in the billing console at 60% and 90% so you are not surprised when a batch job burns the balance.
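The retry advice above can be sketched as a small wrapper. `ApiError` and `call_with_backoff` are hypothetical names for this example, and the delay schedule (full jitter, doubling from one second, five attempts) is one common choice, not a documented DeepSeek recommendation.

```python
import random
import time


class ApiError(Exception):
    """Hypothetical error type carrying the HTTP status of a failed call."""

    def __init__(self, status: int):
        super().__init__(f"HTTP {status}")
        self.status = status


def call_with_backoff(send, max_attempts=5, base_delay=1.0, sleep=time.sleep):
    """Call send() until it succeeds, retrying only on 429."""
    for attempt in range(max_attempts):
        try:
            return send()
        except ApiError as err:
            last_attempt = attempt == max_attempts - 1
            # 401 and 402 need a human to fix them, so they are re-raised at once.
            if err.status != 429 or last_attempt:
                raise
            # Full jitter: wait a random time up to base_delay * 2^attempt.
            sleep(random.uniform(0, base_delay * 2 ** attempt))
```

With the OpenAI SDK, the analogous exception to catch is `openai.APIStatusError`, which exposes the HTTP status as `status_code`; the SDK also performs some retries of its own, so check its `max_retries` setting before layering another loop on top.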

For the full error taxonomy see the guide to DeepSeek API error codes.

Pricing sanity check — worked example

Pricing as of April 2026 per the official DeepSeek pricing page:

| Model | Input, cache hit | Input, cache miss | Output |
|---|---|---|---|
| `deepseek-v4-flash` | $0.0028 / 1M | $0.14 / 1M | $0.28 / 1M |
| `deepseek-v4-pro` | $0.003625 / 1M (promo, list $0.0145) | $0.435 / 1M (promo, list $1.74) | $0.87 / 1M (promo, list $3.48) |

Off-peak discounts are discontinued (ended 2025-09-05) and have not returned with V4. Consider a realistic workload: 1,000,000 calls with a 2,000-token cached system prompt, a 200-token uncached user message, and a 300-token response.

On deepseek-v4-flash:

- Cached input: 2,000 × 1,000,000 = 2,000,000,000 tokens × $0.0028/M = $5.60

- Uncached input: 200 × 1,000,000 = 200,000,000 tokens × $0.14/M = $28.00

- Output: 300 × 1,000,000 = 300,000,000 tokens × $0.28/M = $84.00

- Total: $117.60

On deepseek-v4-pro, same workload:

- Cached input: 2,000,000,000 × $0.003625/M (promo) = $7.25 (list $29.00)

- Uncached input: 200,000,000 × $0.435/M (promo) = $87.00 (list $348.00)

- Output: 300,000,000 × $0.87/M (promo) = $261.00 (list $1,044.00)

- Total: $355.25 (V4-Pro 75% promo through 2026-05-31; list $1,421.00)

Each new user message is a cache miss the first time the model sees it, so the uncached line never goes to zero, even with perfect system-prompt caching. For a live calculator, use our DeepSeek pricing calculator.
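The worked example can be reproduced in a few lines, which makes it easy to swap in your own token counts. This sketch uses `Decimal` to avoid floating-point rounding in the totals; the `workload_cost` function and the rate-key labels are hypothetical, and the rates are the ones listed in the pricing table in this section.

```python
from decimal import Decimal

# USD per 1M tokens: (cache-hit input, cache-miss input, output).
RATES = {
    "deepseek-v4-flash": ("0.0028", "0.14", "0.28"),
    "deepseek-v4-pro promo": ("0.003625", "0.435", "0.87"),
    "deepseek-v4-pro list": ("0.0145", "1.74", "3.48"),
}


def workload_cost(rate_key, calls, cached_in, uncached_in, out):
    """Total USD for `calls` identical requests with the given per-call token counts."""
    hit, miss, output = (Decimal(r) for r in RATES[rate_key])
    per_call = cached_in * hit + uncached_in * miss + out * output
    return per_call * calls / Decimal(1_000_000)


for key in RATES:
    total = workload_cost(key, 1_000_000, 2_000, 200, 300)
    print(f"{key}: ${total:,.2f}")
```

For the workload above this gives $117.60 on Flash, $355.25 on Pro at the promo rate, and $1,421.00 on Pro at list price, matching the hand calculation.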

Security: treating the key like production credentials

- Server-side only. Never embed the key in browser JavaScript, mobile binaries, or desktop apps that ship to users. Proxy through a backend.

- Rotate on a schedule. Quarterly is a reasonable minimum; monthly if the key touches customer data.

- Revoke on suspicion. Any key that appears in a log, screenshot, or public repo is compromised. Revoke from the console and regenerate.

- Scan your repos. GitGuardian, TruffleHog, and GitHub secret scanning all detect the `sk-` pattern. Enable push protection if your host supports it.
- Minimise data sent. Redact PII from prompts unless the use case genuinely requires it; conversations are processed on servers subject to Chinese law.
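As a complement to repository scanning, application logs can be scrubbed before they are written. The sketch below is a simple redaction filter; the regular expression is an assumption about what a key looks like (an `sk-` prefix followed by at least 16 key-like characters), since the exact key format is not specified here, so tune it against your own keys.

```python
import re

# Assumed shape: "sk-" followed by at least 16 letters, digits, "_" or "-".
KEY_PATTERN = re.compile(r"\bsk-[A-Za-z0-9_-]{16,}")


def redact_keys(text: str) -> str:
    """Replace anything that looks like an API key, keeping only the sk- prefix."""
    return KEY_PATTERN.sub("sk-[REDACTED]", text)


print(redact_keys("Authorization: Bearer sk-abcdef0123456789abcdef"))
```

A filter like this reduces accidental exposure but does not replace revocation: a key that has already reached a log or screenshot should still be treated as compromised.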

For deeper coverage of headers, key rotation and audit logging, see the companion piece on DeepSeek API authentication.

Verify the setup worked

- Run the curl or Python snippet above. A 200 response with a `choices[0].message.content` string means the key and billing are both live.
- Open the platform Usage page. A small deduction should show within a minute, matching the `usage` object in the response.
- Hit `GET /user/balance` with the same bearer header to confirm the remaining credit programmatically.
- If the first call fails: 401 means the bearer header is wrong, 402 means the balance is empty, and a model-not-found error usually means a typo in `model`; the correct IDs are `deepseek-v4-pro` and `deepseek-v4-flash`.
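The balance check can be scripted with the standard library alone. This sketch builds the `GET /user/balance` request described above and returns the decoded JSON without assuming any particular field names in the reply; `balance_request` and `fetch_balance` are hypothetical helper names.

```python
import json
import urllib.request

BASE_URL = "https://api.deepseek.com"


def balance_request(api_key: str) -> urllib.request.Request:
    """Build (but do not send) the GET /user/balance request."""
    return urllib.request.Request(
        f"{BASE_URL}/user/balance",
        headers={"Authorization": f"Bearer {api_key}", "Accept": "application/json"},
        method="GET",
    )


def fetch_balance(api_key: str) -> dict:
    """Send the request and return the decoded JSON body as-is."""
    with urllib.request.urlopen(balance_request(api_key), timeout=30) as resp:
        return json.load(resp)
```

Calling `fetch_balance(os.environ["DEEPSEEK_API_KEY"])` (after `import os`) prints nothing on its own; inspect the returned dict. A bad key or empty balance surfaces as `urllib.error.HTTPError` with the 401 or 402 status.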

For a fuller end-to-end onboarding walkthrough with sample projects, see DeepSeek API getting started, and explore the broader DeepSeek API docs and guides for topics like streaming, caching and function calling.

DeepSeek AI Guide is an independent resource and is not affiliated with DeepSeek or its parent company. Model IDs, pricing and API behaviour change; check the official DeepSeek documentation and pricing page before committing to a production decision.

Frequently asked questions

How do I get a DeepSeek API key?

Sign up at platform.deepseek.com, verify your email, and open the API Keys section in the dashboard sidebar. Click Create new API key, label it (for example dev-2026-04), and copy the sk- string immediately — DeepSeek only shows it once. Add a payment method under Billing and top up a balance before your first call. Full walkthrough in our DeepSeek API getting started guide.

Is the DeepSeek API key free?

The key itself costs nothing to generate, but API requests are billed against a prepaid balance. DeepSeek may offer a granted balance — a small promotional credit that can expire — so check the billing console for current offers. Beyond that you pay per token at the rates published on the official pricing page. For a breakdown of free versus paid surfaces, see is DeepSeek free.

What model ID should I use with a new key?

For most workloads, `deepseek-v4-flash`: the cost-efficient V4 tier at $0.14 input miss / $0.28 output per 1M tokens. Promote to `deepseek-v4-pro` only where the benchmark lift justifies roughly 12× the output cost at list price (about 3× during the promo). Legacy IDs `deepseek-chat` and `deepseek-reasoner` still work but retire on 2026-07-24 at 15:59 UTC. Comparison details on the DeepSeek V4-Flash page.

Can I use the OpenAI SDK with my DeepSeek API key?

Yes. DeepSeek implements the OpenAI Chat Completions wire format, so the official OpenAI Python or Node SDK works by setting base_url="https://api.deepseek.com" and passing your DeepSeek key as api_key. No call-site rewrites. DeepSeek also exposes an Anthropic-compatible surface at the same base URL. Full details in the DeepSeek OpenAI SDK compatibility reference.

Why is my API key returning a 401 or 402 error?

A 401 Authentication Fails error means the bearer header is missing, malformed, or the key has been revoked — check the exact Authorization: Bearer sk-... string and verify the key is still listed in the console. A 402 Insufficient Balance error means your top-up has run out; add credit in the billing page. See the full error taxonomy in DeepSeek API error codes.

Sources & Methodology

Primary sources

- Official: DeepSeek API documentation. Endpoints, request/response shape, model IDs. Last checked: April 30, 2026

- Pricing: DeepSeek API pricing page. Per-1M-token rates, billing units, cache-hit pricing. Last checked: April 30, 2026

- Repository: DeepSeek-AI on GitHub. Open-weight release details, training/inference notes. Last checked: April 30, 2026

- Pricing: Official DeepSeek pricing page. V4 worked-cost example rates verified April 2026. Last checked: April 30, 2026

- Official: platform.deepseek.com developer console. Where to register, top up balance, and create keys. Last checked: April 30, 2026

Methodology

Pricing figures were checked against the official DeepSeek pricing page and normalised into estimated monthly costs using sample token usage scenarios. Actual costs may vary depending on caching, input/output ratio, regional availability, and provider updates.

Data confidence

High — figures checked directly against the official pricing page on the review date.

Editorial note

DeepSeek occasionally runs promotional discounts that are not always reflected in the headline page. The pricing dataset on this site auto-refreshes monthly; this article reflects the dataset on the date shown above.